I just read Eric’s article about all the topics he covered around vSphere 5 over the last couple of weeks, and as I just published the last article I had prepared, I figured it would make sense to post something similar. (Great job by the way, Eric, I always enjoy reading your articles and watching your videos!) Although I averaged roughly 10,000 unique views per day during the first week after the launch, and still get about 7,000 a day, I have the feeling that many readers were focused on the licensing changes rather than on all the new and exciting features that were coming up. Now that the dust has somewhat settled, it makes sense to re-emphasize them. Over the last 6 months I have been working with vSphere 5 and exploring these features. My focus for most of those 6 months was completing the book, but of course I wrote a large number of articles along the way, many of which ended up in the book in some shape or form. This is the list of articles I published. If you feel there is anything I left out that should have been covered, let me know and I will try to dive into it. I can’t make any promises though, as my time is limited with VMworld coming up.

- Live Blog: Raising The Bar, Part V

- 5 is the magic number

- Hot off the press: vSphere 5.0 Clustering Technical Deepdive

- vSphere 5.0: Storage DRS introduction

- vSphere 5.0: What has changed for VMFS?

- vSphere 5.0: Storage vMotion and the Mirror Driver

- Punch Zeros

- Storage DRS interoperability

- vSphere 5.0: UNMAP (vaai feature)

- vSphere 5.0: ESXCLI

- ESXi 5: Suppressing the local/remote shell warning

- Testing VM Monitoring with vSphere 5.0

- What’s new?

- vSphere 5.0: vMotion Enhancements

- vSphere 5.0: vMotion enhancement, tiny but very welcome!

- ESXi 5.0 and Scripted Installs

- vSphere 5.0: Storage initiatives

- Scale Up/Out and impact of vRAM?!? (part 2)

- HA Architecture Series – FDM (1/5)

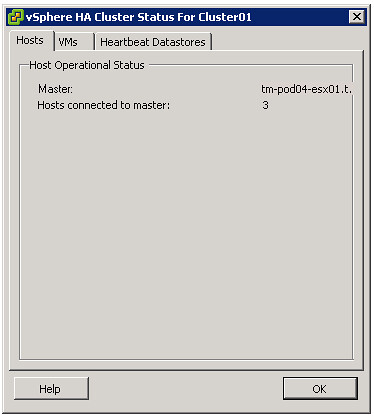

- HA Architecture Series – Primary nodes? (2/5)

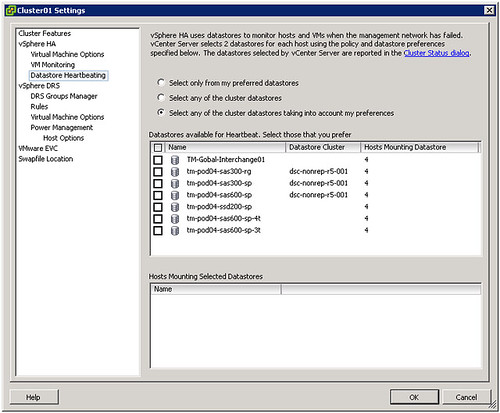

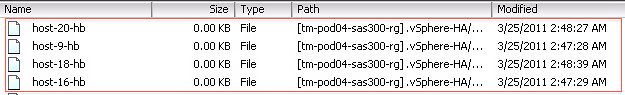

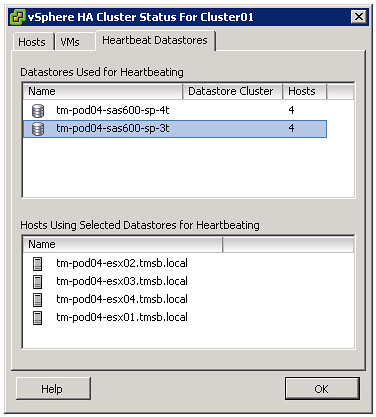

- HA Architecture Series – Datastore Heartbeating (3/5)

- HA Architecture Series – Restarting VMs (4/5)

- HA Architecture Series – Advanced Settings (5/5)

- VMFS-5 LUN Sizing

- vSphere 5.0 HA: Changes in admission control

- vSphere 5 – Metro vMotion

- SDRS and Auto-Tiering solutions – The Injector

Once again, if there is something you feel I should be covering, let me know and I’ll try to dig into it. Preferably something that none of the other blogs have published, of course.