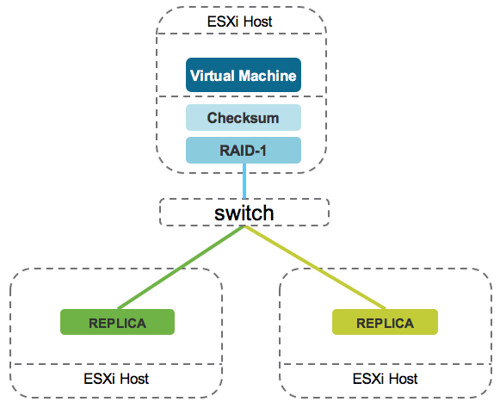

Today I was talking to a customer about the checksum functionality that is part of VSAN 6.2. They asked me if VSAN was still prone to bit rot scenarios, and they mentioned other potential bottlenecks like no data locality… It was fairly straightforward to set the record straight: with VSAN 6.2 we do have a “host local read cache”, we checksum all data by default on write and on read, and yes, we also scrub the disks to proactively detect potential issues. I’ve already written about these features a couple of times, but today, while I was explaining how VSAN’s checksumming functionality is implemented, the customer immediately realized the benefits of our hypervisor-based implementation. Note that the diagram shows the VM running on a different host than where the actual data is located; the VM could just as easily be running on the same host as one of the replicas…

When it comes to checksums, these are calculated on the host where the VM resides. Why? Well, you can imagine that you will want to protect your data against all types of potential corruption and issues, not just when it is at rest. You want to calculate the checksum before the data leaves the host, before it is replicated / distributed, before it hits the disk controller, before it goes to persistent media! Even if a bit flips while the data is traveling across the network on its way to persistent media, this will be detected and corrected. That is exactly what VSAN does, which is unique. As the title says, checksumming where you should… at the source.
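
To make the idea a bit more concrete, here is a minimal sketch in Python of what checksumming at the source buys you. This is not VSAN code: the function names (`write_at_source`, `flip_bit`, `verify`, `read_with_repair`) are hypothetical, and `zlib.crc32` is only a stand-in for whatever checksum algorithm VSAN actually uses. The point is simply that the checksum is computed before the data leaves the host, so a bit flip anywhere further down the path can be detected and a healthy replica used instead.

```python
import zlib
from typing import List, Tuple


def write_at_source(data: bytes) -> Tuple[bytes, int]:
    """Compute the checksum on the host where the VM runs,
    before the data is replicated or sent over the network.
    (Hypothetical helper; CRC-32 is an assumption for illustration.)"""
    return data, zlib.crc32(data)


def flip_bit(data: bytes, bit_index: int) -> bytes:
    """Simulate a single bit flipping in transit or on disk."""
    corrupted = bytearray(data)
    corrupted[bit_index // 8] ^= 1 << (bit_index % 8)
    return bytes(corrupted)


def verify(data: bytes, checksum: int) -> bool:
    """Recompute the checksum and compare it with the one from the source."""
    return zlib.crc32(data) == checksum


def read_with_repair(replicas: List[bytes], checksum: int) -> bytes:
    """Return the first replica that still matches the source checksum."""
    for replica in replicas:
        if verify(replica, checksum):
            return replica
    raise IOError("no replica matches the source checksum")


# The block is checksummed at the source, then one replica is damaged
# somewhere between the host and persistent media.
block = bytes(range(256)) * 16
data, checksum = write_at_source(block)
damaged = flip_bit(data, 12345)

assert not verify(damaged, checksum)                       # corruption is detected
assert read_with_repair([damaged, data], checksum) == block  # healthy replica is used
```

Had the checksum been calculated only after the data arrived at its destination, the damaged copy would have been checksummed as if it were correct, and the flip would have gone unnoticed.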