On VMTN a question was asked about the cluster sizes supported for vSAN Express Storage Architecture (ESA). There appears to be some misinformation out there on various blogs. Let me first state that you should rely on official documentation when it comes to support statements, and not on third-party blogs. VMware has the official documentation website, and of course, there’s core.vmware.com with material produced by the various tech marketing teams. Those are what I would rely on for official statements and insights into how things work; beyond that, there are of course articles on personal blogs by VMware folks. Anyway, back to the question: which cluster size is supported?

For vSAN ESA, VMware supports the exact same configuration when it comes to the cluster size as it supports for OSA. In other words, as small as a 2-node configuration (with a witness), as large as a 64-node configuration, and anything in between!

Now when it comes to sizing your cluster, the same applies for ESA as it does for OSA. If you want the components of your VMs to be rebuilt automatically after a host failure or a long-term maintenance mode action, you will need to make sure you have spare capacity available in your cluster. That capacity comes in the form of storage capacity (flash) as well as host capacity. Basically, that means you need additional hosts available where the components can be created, and the storage capacity to resync the data of the impacted objects.

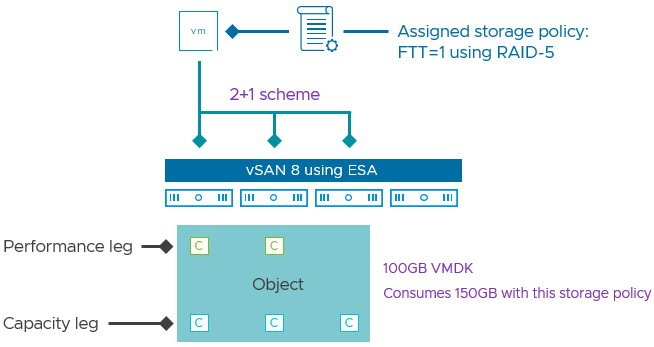

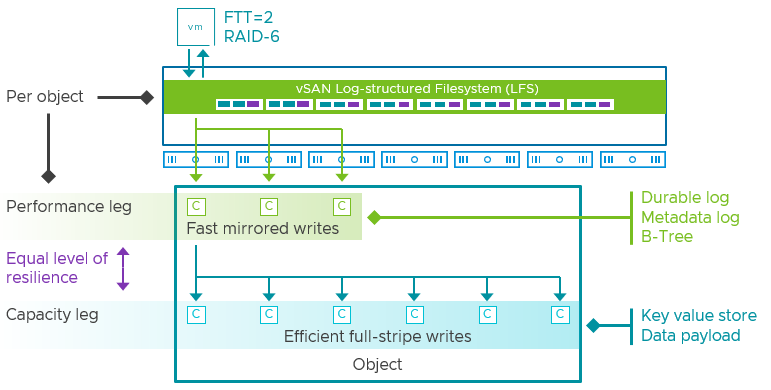

If you look at the diagram, you see 6 components in the capacity leg and 7 hosts, which means that if a host fails you still have a host available to recreate that component. On that host you also still need capacity available to resync the data, so that the object becomes compliant again when it comes to data availability.
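To make that reasoning a bit more concrete, here is a minimal Python sketch of the check. This is my own simplified illustration, not a VMware tool or API: the `Host` class, the function name, and the capacity numbers are all hypothetical, and it assumes every component of an object lives on a different host. It ignores things like slack space, operations reserve, and detailed placement rules, so treat it as a back-of-the-napkin check only.

```python
from dataclasses import dataclass

# Supported cluster sizes as described above: 2 nodes (plus a witness) up to 64.
MIN_HOSTS = 2
MAX_HOSTS = 64


@dataclass
class Host:
    """Hypothetical, simplified view of a vSAN host's storage."""
    name: str
    capacity_gb: float
    used_gb: float

    @property
    def free_gb(self) -> float:
        return self.capacity_gb - self.used_gb


def can_rebuild_after_host_failure(hosts, components_per_object):
    """Rough check: can the cluster rebuild after losing any single host?

    Assumption: each component of an object sits on a distinct host, so a
    rebuild needs at least one host that does not already hold a component.
    """
    if not MIN_HOSTS <= len(hosts) <= MAX_HOSTS:
        return False
    # Host capacity: we need more hosts than components (e.g. 7 hosts for 6).
    if len(hosts) <= components_per_object:
        return False
    # Storage capacity: the surviving hosts must have enough free space
    # to resync the data that lived on the failed host.
    for failed in hosts:
        survivors = [h for h in hosts if h is not failed]
        if sum(h.free_gb for h in survivors) < failed.used_gb:
            return False
    return True


# Example matching the diagram: 6 components in the capacity leg, 7 hosts.
seven_hosts = [Host(f"esx{i:02d}", capacity_gb=10_000, used_gb=6_000) for i in range(1, 8)]
print(can_rebuild_after_host_failure(seven_hosts, components_per_object=6))
```

With only 6 hosts the same check fails, simply because there is no host left to place the rebuilt component on, regardless of how much free capacity you have.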

I hope that explains, first of all, what is supported from a cluster size perspective, and secondly, why you may want to consider adding additional hosts. This will of course depend on your requirements and your budget.