Over the last couple of days the same VMware EVO:RAIL questions keep popping up. I figured I would do a quick VMware EVO:RAIL Q&A post so that I can point people to it instead of answering them on Twitter every time.

- Can you explain what EVO:RAIL is?

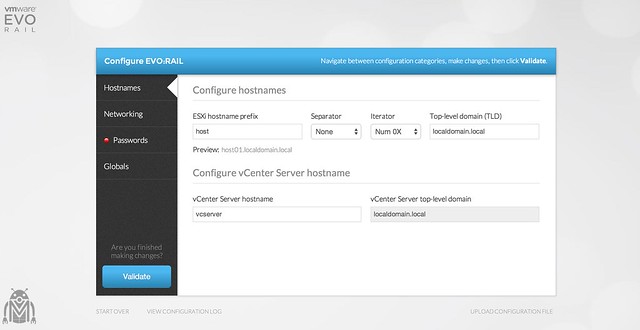

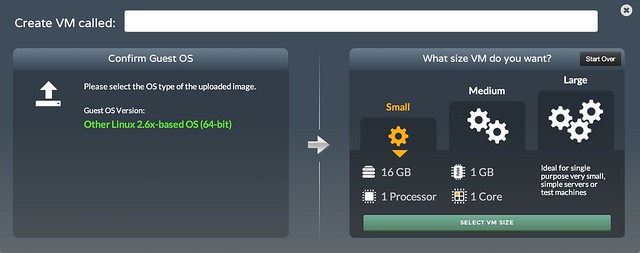

- EVO:RAIL is the next evolution of infrastructure building blocks for the SDDC. It delivers compute, storage and networking in a 2U / 4 node package with an intuitive interface that allows for full configuration within 15 minutes. The appliance bundles hardware+software+support/maintenance to simplify both procurement and support in a true “appliance” fashion. EVO:RAIL provides the density of blade with the flexibility of rack. Each appliance comes with 100GHz of compute power, 768GB of memory capacity and 14.4TB of raw storage capacity (plus 1.6TB of flash for IO acceleration purposes). For full details, read my intro post.

- Where can I find the datasheet?

- What is the minimum number of EVO:RAIL hosts?

- Minimum number is 4 hosts. Each appliance comes with 4 independent hosts, which means that 1 appliance is the start. It scales per appliance!

- What is included with an EVO:RAIL appliance?

- 4 independent hosts each with the following resources

- 2 x E5-2620 6 core

- 192GB Memory

- 3 x 1.2TB 10K RPM Drives for VSAN

- 1 x 400GB eMLC SSD for VSAN

- 1 x ESXi boot device

- 2 x 10GbE NIC ports (SFP+ / RJ45 can be selected)

- 1 x IPMI port

- vSphere Enterprise Plus

- vCenter Server

- Virtual SAN

- Log Insight

- Support and Maintenance for 3 years

- What is the total available storage capacity?

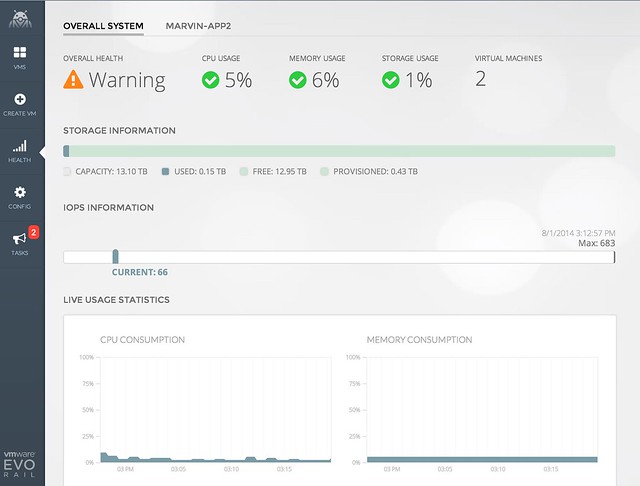

- After the VSAN Datastore is formed and vCenter Server is installed / configured there is about 13.1TB left.
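
To show where the raw appliance numbers come from, here is a rough sketch of the arithmetic using the per-host resources listed above. The class and function names are made up for illustration. The clock speed is not stated in this post; the 2.1GHz default is my assumption (it corresponds to an E5-2620 v2) and it lands close to the 100GHz figure mentioned earlier.

```python
from dataclasses import dataclass

HOSTS_PER_APPLIANCE = 4  # each appliance contains 4 independent hosts


@dataclass(frozen=True)
class HostSpec:
    """Per-host resources as listed in this post (names are illustrative)."""
    sockets: int = 2
    cores_per_socket: int = 6
    memory_gb: int = 192
    hdd_count: int = 3
    hdd_tb: float = 1.2
    ssd_gb: int = 400
    # ASSUMPTION: clock speed is not given in the post; 2.1GHz is a guess.
    assumed_ghz_per_core: float = 2.1


def appliance_totals(host: HostSpec = HostSpec(),
                     hosts: int = HOSTS_PER_APPLIANCE) -> dict:
    """Sum the per-host resources across all hosts in one appliance."""
    cores = hosts * host.sockets * host.cores_per_socket
    return {
        "cores": cores,
        "compute_ghz": round(cores * host.assumed_ghz_per_core, 1),
        "memory_gb": hosts * host.memory_gb,
        "raw_hdd_tb": round(hosts * host.hdd_count * host.hdd_tb, 1),
        "flash_tb": hosts * host.ssd_gb / 1000,
    }


totals = appliance_totals()
for name, value in totals.items():
    print(f"{name}: {value}")
```

The raw disk capacity works out to 14.4TB and the flash capacity to 1.6TB. The roughly 13.1TB that remains after setup is a measured number from the appliance, not something this sketch derives.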

- How many VMs can I run on one appliance?

- That will very much depend on the size of the virtual machine and the workload. We have been able to comfortably run 250 desktops on one appliance. With Server VMs we ended up with around 100. However, again this very much depends on things like workload / capacity etc.

- How many EVO:RAIL appliances can I scale to?

- With the current release EVO:RAIL scales to 4 appliances (aka 16 hosts).

- If licensing / maintenance / support is for 3 years, what happens after?

- After 3 years support/maintenance expires. It can be extended, or the appliance can be replaced when desired.

- How is support handled?

- All support is handled through the OEM the EVO:RAIL HCIA has been procured through. This ensures that “end to end” support will be provided through the same channel.

- Who are the EVO:RAIL qualified partners?

- The following partners were announced at VMworld: Dell, EMC, Fujitsu, Inspur, Net One Systems, Supermicro, Hitachi Data Systems, HP, NetApp.

- How much does an EVO:RAIL appliance cost?

- Pricing will be set by qualified partners.

- I was told Support and Maintenance is for 3 years, what happens after 3 years?

- You can renew your support and maintenance for at most 2 more years (as far as I know).

- If it is not renewed, the EVO:RAIL appliance will keep functioning, but the entitlement to support is gone.

- What if I buy a new appliance after 3 years, can I re-use the licenses that came with my EVO:RAIL appliance?

- No, the licenses are directly tied to the appliance and cannot be transferred to any other appliance or hardware.

- Will NSX work with EVO:RAIL?

- EVO:RAIL uses vSphere 5.5 and Virtual SAN. Anything that works with that will work with EVO:RAIL. NSX has not been explicitly tested but I expect that this should be no problem.

- Does it use VMware Update Manager (VUM) for updating/patching?

- No, EVO:RAIL does not use VUM for updating and patching. It uses a new mechanism that was built from scratch and comes as part of the EVO:RAIL engine. This provides a simple updating and patching mechanism while avoiding the need for a Windows VM (VUM requires Windows).

- What kind of NIC is included?

- A dual port 10GbE NIC per host. The majority of vendors will offer both SFP+ and RJ45. This means 8 x 10GbE switch ports are required per EVO:RAIL appliance!
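
As a quick planning aid, the switch port count can be sketched as below. This is just my back-of-the-envelope math based on the numbers in this post (2 x 10GbE ports per host, 4 hosts per appliance, 4 appliances at most in the current release). The function name is hypothetical, and the IPMI ports are deliberately left out of the count.

```python
HOSTS_PER_APPLIANCE = 4   # 4 independent hosts per appliance
NIC_PORTS_PER_HOST = 2    # dual port 10GbE NIC per host
MAX_APPLIANCES = 4        # scale limit of the current release (16 hosts)


def switch_ports_required(appliances: int) -> int:
    """Return the number of 10GbE switch ports needed (IPMI not counted)."""
    if not 1 <= appliances <= MAX_APPLIANCES:
        raise ValueError(
            f"appliances must be between 1 and {MAX_APPLIANCES}, got {appliances}"
        )
    return appliances * HOSTS_PER_APPLIANCE * NIC_PORTS_PER_HOST


for n in range(1, MAX_APPLIANCES + 1):
    hosts = n * HOSTS_PER_APPLIANCE
    print(f"{n} appliance(s): {hosts} hosts, {switch_ports_required(n)} x 10GbE ports")
```

So a fully scaled environment of 4 appliances needs 32 x 10GbE switch ports, before counting anything for IPMI or uplinks.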

- Is there a physical switch included?

- A physical switch is not part of the “recipe” VMware provided to qualified partners, but some may package one (or multiple) with it to simplify greenfield deployments.

- What is MARVIN or Mystic?

- MARVIN (Modular Automated Rackable Virtual Infrastructure Node) was the codename used by VMware internally for EVO:RAIL. Mystic was the codename used by EMC. Both of them refer to EVO:RAIL.

- Where does EVO:RAIL run?

- EVO:RAIL runs on vCenter Server. vCenter Server is powered on automatically when the appliance is started, and the EVO:RAIL engine can then be used to configure the appliance.

- Which version of vCenter Server do you use, the Windows version or the Appliance?

- In order to simplify deployment EVO:RAIL uses the vCenter Server Appliance.

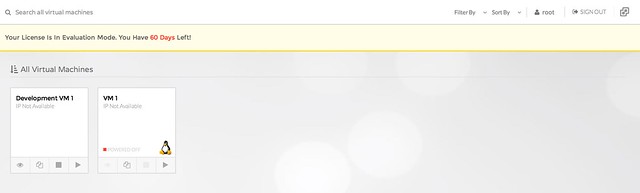

- Can I use the vCenter Web Client to manage my VMs or do I need to use the EVO:RAIL engine?

- You can use whatever you like to manage your VMs. Web Client is fully supported and configured for you!

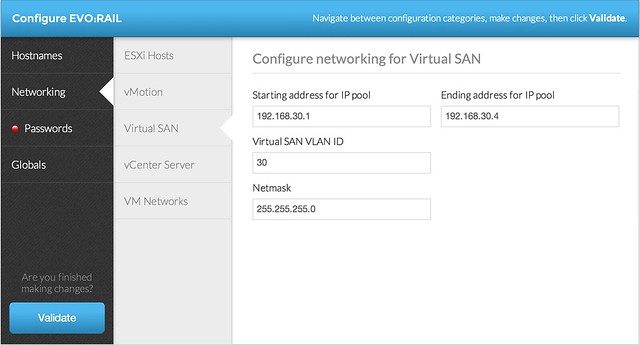

- Are there networking requirements?

- IPv6 is required for configuration of the appliance and auto-discovery. Multicast traffic on L2 is required for Virtual SAN.

- …

Some great EVO:RAIL links:

- Introducing EVO:RAIL

- EVO:RAIL configuration and management Demo

- VMTN Community – EVO:RAIL

- LinkedIn Group – EVO:RAIL

- VMware blog: VMware Horizon and EVO: RAIL – Value Add For Customers

- Chad Sakac – VMworld 2014 – EVO:RAIL and EMC’s approach

- Julian Wood – VMware Marvin comes alive as EVO:Rail, a Hyper-Converged Infrastructure Appliance

- Chris Wahl – VMware announces software defined infrastructure with EVO:RAIL

- Ivan Pepelnjak – VMware EVO:RAIL – One stop shop for your private cloud

- Podcast on EVO:RAIL with Mike Laverick

- EVO:RAIL engineering interview with Dave Shanley

- EVO:RAIL vs VSAN Ready Node vs Component based

- …

If you have any questions, feel free to drop them in the comments section and I will do my best to answer them.