#STO1378 “vSAN Management Today and in the Future” by Junchi Zhang and Christian Dickmann. Christian did this session by himself last year, and it was one of the coolest sessions of that year, so expectations are set high. In this session Junchi and Christian discuss what vSAN management looks like today, but more importantly they demo what it will look like in the future.

Christian started with the basics. As he stated: when we started with vSAN we developed a storage solution, but we evolved to HCI, which means deep integration with vSphere and hardware. Our mission, in his words, is to provide radically simple HCI with choice. This means building an “appliance-like” experience on your choice of hardware, with deep integration with vSphere and vSphere workflows. All of this for environments with 2 hosts or 1000s of hosts.

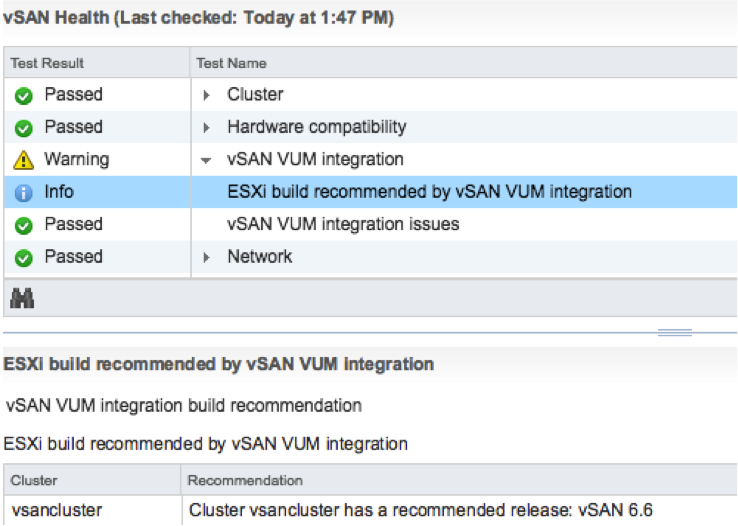

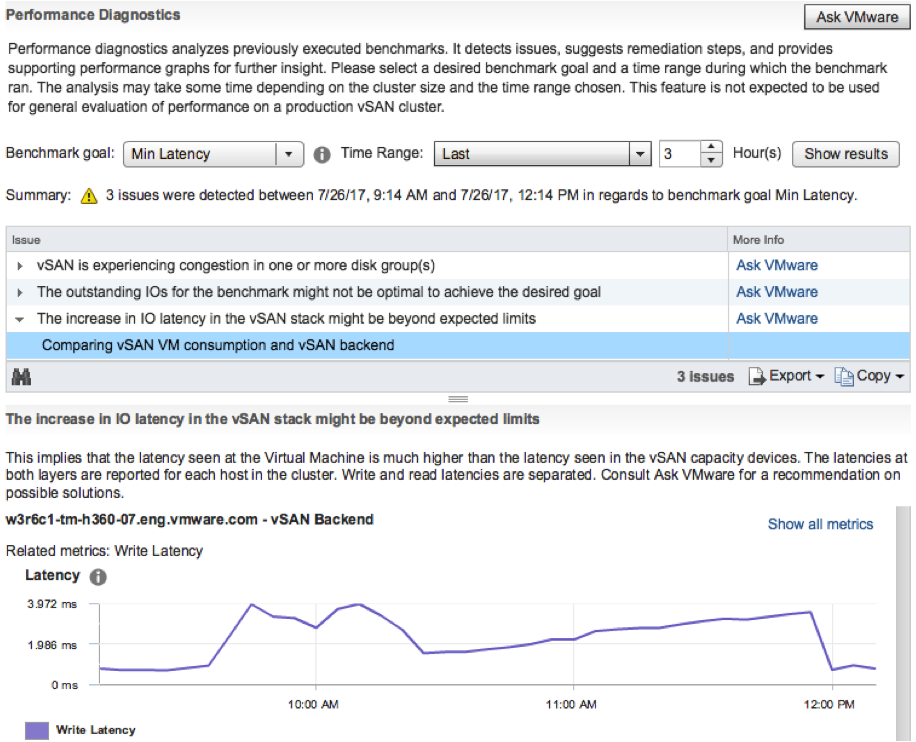

In past releases vSAN delivered a lot of new functionality to realize our mission. Just as an example: Health Checks in 6.0, Capacity Reporting in 6.2, Performance Analytics in 6.6. Going forward, however, the focus will be: Easier Lifecycle Management, Rich Monitoring, Infrastructure Analytics.

First demo up: HTML5 vSphere Client Demo. In the demo the HTML5 interface is shown, and what stood out to me is the responsiveness. As Christian said, we kept what worked well, but we also have new ways of presenting info. For instance, the Health Check view has changed. The Virtual Object view has changed as well: it will now show swap objects and, for instance, iSCSI objects. On top of that it will provide filters to make it easier to find what you are looking for. The biggest change in the H5 UI is the SPBM interface. New screens, new layout, simpler, faster and more intuitive!

Up next: Patching ESXi / Firmware. In 6.6 we launched the capability to upgrade disk controllers using Config Assist. But what about VUM? And will it be able to handle not just a disk controller but also the SAS Expander, BIOS, NICs etc? That is what the next demo is about. First of all, they removed 1 required reboot. So down from 2 to just 1! Which will easily save 10-15 minutes per host. The demo then shows the current version of the iDRAC firmware and the version of vSphere used. Leveraging VUM, a new baseline is created which contains not only the latest version of vSphere but also a baseline for firmware, which in this demo updates the iDRAC firmware and the BIOS. VUM places the host in maintenance mode, upgrades the firmware and BIOS, then updates the host itself and reboots it, and the host is done. As Christian said: one click for you as an admin, but many different steps happening in the back-end. The next thing the team will be looking at is not just doing per-host updates, but remediating full clusters at a time. This will include full vSAN Health integration, which will help validate whether the hosts are “safe / healthy” to run vSAN/workloads again. In other words: update firmware >> update software >> validate health >> bring back into the cluster.
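To make that last sequence a bit more concrete, here is a minimal Python sketch of a rolling, one-host-at-a-time remediation loop. It is purely illustrative and does not use any VUM or vSAN API: `Host`, `remediate_host`, `remediate_cluster` and the `health_check` callback are hypothetical names I made up for this example, and stopping at the first unhealthy host is my assumption, not something shown in the demo.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Host:
    """Hypothetical stand-in for an ESXi host; not a real API object."""
    name: str
    in_maintenance: bool = False
    log: List[str] = field(default_factory=list)


def remediate_host(host: Host, health_check: Callable[[Host], bool]) -> bool:
    """Run the steps from the session on one host and record them in host.log."""
    host.in_maintenance = True
    host.log.append("enter maintenance mode")
    # Firmware first, then software, then the single remaining reboot.
    for step in ("update firmware", "update software", "reboot"):
        host.log.append(step)
    healthy = health_check(host)
    host.log.append("validate health: " + ("ok" if healthy else "failed"))
    if healthy:
        # Only a host that passes validation goes back into the cluster.
        host.in_maintenance = False
        host.log.append("rejoin cluster")
    return healthy


def remediate_cluster(hosts: List[Host],
                      health_check: Callable[[Host], bool]) -> List[str]:
    """Remediate hosts one at a time; stop at the first unhealthy host (assumption)."""
    done = []
    for host in hosts:
        if not remediate_host(host, health_check):
            break
        done.append(host.name)
    return done


if __name__ == "__main__":
    cluster = [Host("esx-01"), Host("esx-02"), Host("esx-03")]
    # Pretend every host passes the health validation.
    print(remediate_cluster(cluster, lambda h: True))
    print(cluster[0].log)
```

The point of the sketch is the ordering and the gate: a host that fails validation stays in maintenance mode, and the remaining hosts are left untouched.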

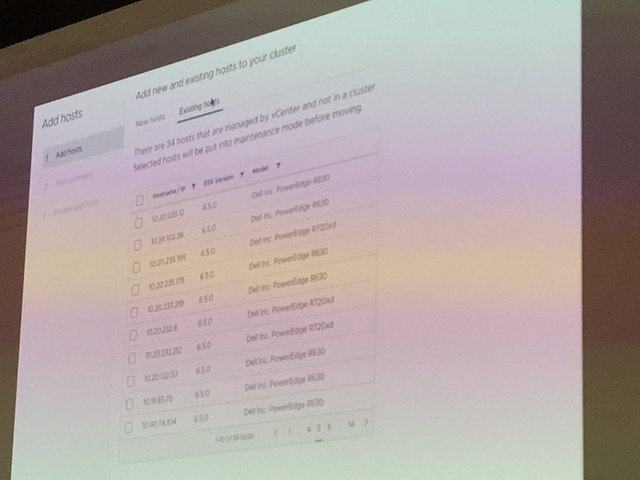

Next demo was around “HCI Cluster Creation and Extension”. How can we make the creation of clusters easier? Those who have seen the VxRail demos know how simple things can be. But what if you have a vSAN Ready Node? In this demo a simplified UI is shown. During cluster creation in the demo it is shown how, for instance, all hosts are added to a cluster at the same time. Before the hosts are added, though, they are validated/verified. Yes, from a vSAN Health point of view. And when they are safe to add, they are added to the cluster in “maintenance mode”. This is something I have personally been asking for for a long time now. Great to see this is finally added. The health check before adding a host to the cluster is great as well, as there is no point in adding a host which is not healthy. It is not just about adding hosts: the network is also configured as part of the cluster configuration, and all the vSAN best practices are applied (yes, you can change the settings as you go). And not just for vSAN, but also HA/DRS/NTP/Security settings etc. Great demo, great work!
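The “validate first, then add in maintenance mode” idea can be sketched in a few lines of Python. Again, this is only an illustration under my own assumptions: `Cluster` and `add_hosts` are hypothetical, and the health results are passed in as plain lists of failed check names rather than coming from the real vSAN Health service.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Tuple


@dataclass
class Cluster:
    """Hypothetical cluster model: maps host name to its current state."""
    name: str
    members: Dict[str, str] = field(default_factory=dict)


def add_hosts(cluster: Cluster,
              candidates: Dict[str, List[str]]
              ) -> Tuple[List[str], Dict[str, List[str]]]:
    """Add all healthy candidates in one pass, each in maintenance mode.

    `candidates` maps a host name to the list of health checks it failed
    (an empty list means the host is considered safe to add).
    """
    added: List[str] = []
    rejected: Dict[str, List[str]] = {}
    for name, failures in candidates.items():
        if failures:
            # Unhealthy hosts never enter the cluster.
            rejected[name] = failures
            continue
        cluster.members[name] = "maintenance"
        added.append(name)
    return added, rejected


if __name__ == "__main__":
    demo = Cluster("demo-cluster")
    added, rejected = add_hosts(demo, {
        "esx-01": [],
        "esx-02": ["hypothetical check failed"],
        "esx-03": [],
    })
    print(added, rejected, demo.members)
```

Adding hosts in maintenance mode means nothing lands on a new host until the admin has had a chance to finish the configuration.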

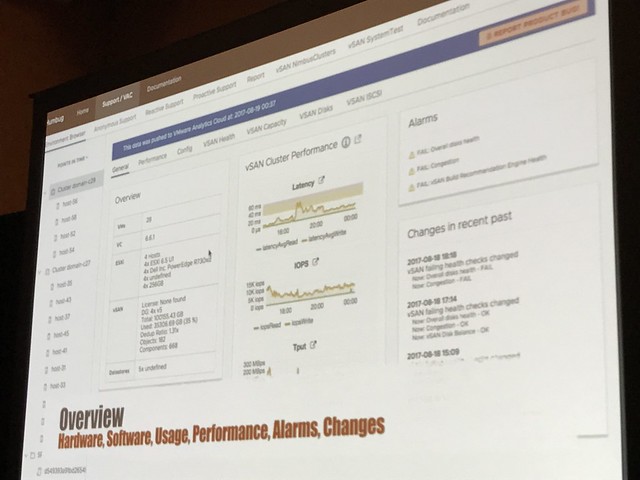

“Rich Infra Analytics” is up next. Christian explained that we already have some cool analytics tools in vSAN 6.6 and vSAN 6.6.1 with the Cloud Connected Health Check and the Performance Analytics tool. This demo is showing an internal tool. So this is not showing what customers will be using, but showing how engineers and GSS can leverage internal tooling to make the support experience better! In the demo it is shown how GSS can instantly view your environment and its details leveraging the “Phone Home” data, more or less replicating the H5 UI. It will allow support to check whether you set advanced settings, whether health checks have failed, whether you have contention etc. Not just that, they can also go deep on the performance of your environment. They can even go back in time. Everything worked fine a day ago? Did anything change? What did the environment look like? They can find out simply by going back to that “point in time”. And this is not only for when you phone us up, as we can also provide pro-active support. We have the data, so we can contact you when something is about to fail, or when configuration settings could cause issues in the future (depending on the support level bought, of course). In order for this to work, though, CEIP will need to be enabled.
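The “did anything change since yesterday?” question boils down to comparing two point-in-time snapshots. A minimal sketch of that comparison in Python is shown below. The internal tool was not shown at this level of detail, so the `diff_settings` function and the setting names are hypothetical and only illustrate the idea.

```python
from typing import Any, Dict, Tuple


def diff_settings(before: Dict[str, Any],
                  after: Dict[str, Any]) -> Dict[str, Tuple[Any, Any]]:
    """Return {setting: (old, new)} for every setting that differs.

    A setting missing from one snapshot is reported with None on that side.
    """
    changes: Dict[str, Tuple[Any, Any]] = {}
    for key in sorted(before.keys() | after.keys()):
        old, new = before.get(key), after.get(key)
        if old != new:
            changes[key] = (old, new)
    return changes


if __name__ == "__main__":
    # Hypothetical snapshots; the keys are made up for illustration.
    yesterday = {"setting_a": 1, "setting_b": "default"}
    today = {"setting_a": 1, "setting_b": "custom", "setting_c": True}
    for name, (old, new) in diff_settings(yesterday, today).items():
        print(f"{name}: {old!r} -> {new!r}")
```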

Up next: Junchi talking about vROps Dashboards and what we are doing in this space. As it stands right now, there are different interfaces to monitor vSAN: vROps and vCenter. But can we collapse these when vROps is installed and provide all the info you need in the H5 Client? We have a great number of dashboards in vROps, and in the future that data will automatically be exposed in the H5 Client when you have vROps installed.

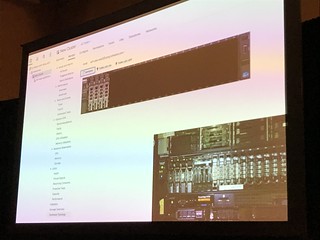

What about hardware topology? What if you need to swap a disk or add a disk? In the demo it is shown how the H5 Client will show you the hardware topology in a physical overview. You add a disk and the UI will instantly show you the new disk in the chassis. That was a very “simple” change, but very welcome!

Great session, and I highly recommend watching the recording when it becomes available. I will add a link when it is published (DONE!) Thanks Junchi and Christian for showing what we are working on and what the future of vSAN/HCI looks like!