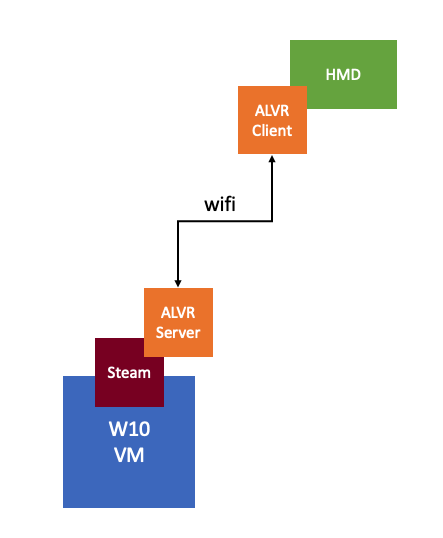

I’ve been down in the lab for the last week doing performance testing with virtual reality workloads, streaming them over Wi-Fi to an Oculus Quest headset. To render the graphics remotely, we leveraged NVIDIA GPU technology (an RTX 8000 in my case here in the lab). We have been getting very impressive results, but of course at some point we hit a bottleneck. We tried to figure out which part of the stack was causing the problem and started looking at the various NVIDIA stats through nvidia-smi. We figured the bottleneck would be the GPU, so we looked at things like GPU utilization, FPS, etc. Funnily enough, this wasn’t really showing a problem.

We then started looking at it from different angles, and there are two useful commands I would like to share. I am sure the GPU experts will be aware of these, but for those who don’t consider themselves experts (like myself) it is good to know where to find the right stats. While whiteboarding the solution and the different parts of the stack, we realized that GPU utilization wasn’t the problem, and neither was the frame buffer size. But somehow we did see FPS (frames per second) drop, and we did see encoder latency go up.

First I wanted to understand how many encoding sessions were actively running. This is very easy to find out by using the following command. The screenshot below shows its output.

nvidia-smi encodersessions

As you can see, this shows three encoding sessions: one H.264 session and two H.265 sessions. Note that we have only one headset connected at this point, yet it leads to three sessions. Why? Well, we need a VM to run the application, and the headset has two displays, which results in three sessions. We can, however, disable the Horizon session’s use of the encoder, which would save some resources. I tested that, but the savings were minimal.
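If you want to track the session count over time rather than eyeballing a screenshot, the same information can be pulled in CSV form. The following is a minimal sketch, not what I ran in the lab. It assumes your driver exposes the `encoder.stats.*` query fields (check with `nvidia-smi --help-query-gpu`), and the `expected_sessions` helper is a hypothetical back-of-the-envelope function that simply encodes the "one VM session plus two displays per headset" observation above.

```python
import csv
import io
import subprocess

# Query fields as listed by `nvidia-smi --help-query-gpu` on recent drivers.
# Assumption: verify that your driver version supports them.
QUERY_FIELDS = [
    "index",
    "encoder.stats.sessionCount",
    "encoder.stats.averageFps",
    "encoder.stats.averageLatency",
]


def parse_encoder_stats(csv_text):
    """Parse `--format=csv,noheader,nounits` output into one dict per GPU."""
    rows = []
    for record in csv.reader(io.StringIO(csv_text)):
        values = [value.strip() for value in record]
        if len(values) != len(QUERY_FIELDS):
            continue  # skip blank or malformed lines
        rows.append(dict(zip(QUERY_FIELDS, values)))
    return rows


def query_encoder_stats():
    """Run nvidia-smi and return the parsed encoder statistics."""
    cmd = [
        "nvidia-smi",
        "--query-gpu=" + ",".join(QUERY_FIELDS),
        "--format=csv,noheader,nounits",
    ]
    result = subprocess.run(cmd, capture_output=True, text=True, check=True)
    return parse_encoder_stats(result.stdout)


def expected_sessions(headsets, displays_per_headset=2, vm_sessions_per_headset=1):
    """Hypothetical helper: sessions expected for a given number of headsets."""
    return headsets * (displays_per_headset + vm_sessions_per_headset)
```

Calling `query_encoder_stats()` on the GPU host returns a list with one entry per GPU, and `expected_sessions(1)` gives the three sessions seen above, so a mismatch between the two is a quick hint that something else is using the encoder.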

I can, of course, also look a bit closer at the encoder utilization. I used the following command for that. Note that I filter for the device I want to inspect, which is the “-i <identifier>” part of the command below.

nvidia-smi dmon -i 00000000:3B:00.0

The above command provides the following output. The “enc” column is what was important to me, as it shows the utilization of the encoder, which with the above three sessions was hitting roughly 40%, as shown below.
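Reading the “enc” column by eye works for a quick check, but for longer test runs it can help to capture the dmon output and average it. Below is a minimal sketch of how that could look. It assumes the usual dmon layout, where the first line starting with `#` holds the column names and the second holds the units; the function names are my own and purely illustrative.

```python
def parse_dmon(text):
    """Return one dict per sample row, keyed by the column names in the header."""
    columns = None
    samples = []
    for line in text.splitlines():
        line = line.strip()
        if not line:
            continue
        if line.startswith("#"):
            # The first header line holds the column names, the second the
            # units. dmon repeats both periodically, so later ones are skipped.
            if columns is None:
                columns = line.lstrip("#").split()
            continue
        if columns is None:
            continue  # data before any header, cannot be interpreted
        values = line.split()
        if len(values) == len(columns):
            samples.append(dict(zip(columns, values)))
    return samples


def average_enc(samples):
    """Average the encoder utilization (%) over all samples, or None if empty."""
    readings = [int(s["enc"]) for s in samples if s.get("enc", "-").isdigit()]
    if not readings:
        return None
    return sum(readings) / len(readings)
```

To feed it, capture a fixed number of samples into a file, for instance with `nvidia-smi dmon -i <identifier> -s u -c 30` (the `-s u` and `-c` options limit the output to utilization columns and to 30 samples), then pass the file contents to `parse_dmon` and the result to `average_enc`.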

How did I solve the problem of the encoder bottleneck in the end? Well, I didn’t. The only way around it is to have a good understanding of your workload and to do proper capacity planning. Do I need an NVIDIA RTX 6000 or 8000? Or is there a different card with more encoding power, like the V100, that makes more sense? Figuring out the cost, the performance, and the trade-off between them is key.

Two more weeks until the end of my Take 3 experience, and what a ride it has been. If you work for VMware and have been with the company for 5 years… Consider doing a Take 3, it is just awesome!

I first got introduced to Virtual Reality (VR) in the 90s. Back then it was all about gaming, of course. Even today the perception is that it is mainly about gaming, and to be honest that was also my perception. When I first spoke with Alan Renouf about the project he was working on and saw his keynote demo, I didn’t really see the opportunity. It all felt a bit gimmicky, to be honest, but can you blame me when the focus of the demo is moving workloads to the cloud by picking up a VM and throwing it over “the fence”?

It isn’t something I ever thought about, but in order to train firefighters they create a room inside a container, burn down the container, and then have groups of firefighters try to figure out how and where the fire started. The problem, though, is that if they train 10 groups per day, only the last group can touch the objects and do a proper investigation. With VR this problem is solved, as after every training session you reset and start over. The same could apply to police force training for things like crime scene investigation, or to training personnel who work at (nuclear) power plants, oil platforms, etc. Or even customer service training for retailers like Walmart: let staff deal with difficult customers in VR first and handle dozens of difficult situations before they are exposed to “real” customers.