Not that it is a big deal, but if you are like me, you probably prefer to use the right name. As of this week, Virtual SAN has been renamed to vSAN. I am not sure we will see the change in the product itself in 6.5 (I doubt it), and even the website changes will take a while, but expect to see the new name soon.

The correct name is vSAN. The small v shows the integration with vSphere. https://t.co/3FAHPCqFdA

— Lee Caswell (@leecaswell) October 24, 2016

Oh, and before I forget, there was the Q3 earnings announcement: vSAN is still growing strong, with 5,500 customers at the moment! And let’s not forget: “software license bookings continue to exceed expectations with vSAN and VxRail increasing over 150% year over year.” I say: Bring on Q4! And I can’t wait for 6.5 to ship 🙂

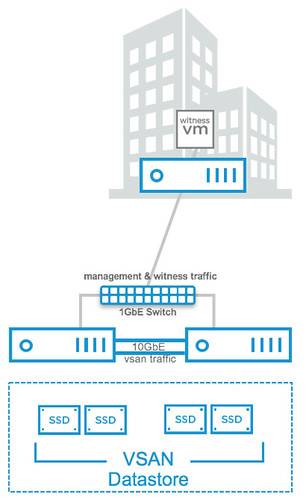

Next up on the list is 2-node direct connect. What does this mean? Well, it basically means you can now cross-connect two vSAN hosts with a simple Ethernet cable, as shown in the diagram on the right. The big benefit, of course, is that you can equip your hosts with 10GbE NICs and get 10GbE performance for your vSAN traffic (and vMotion, for instance) without incurring the cost of a 10GbE switch.
