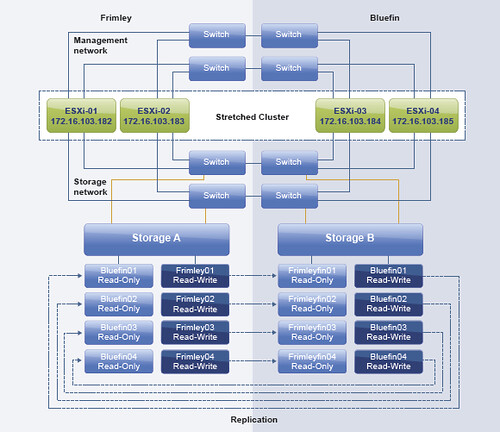

Today I received an email about the vSphere Metro Storage Cluster paper I wrote, or rather about stretched clusters in general. I figured I would answer the questions in a blog post so that everyone can read along and chip in. Let’s show the environment first so that the questions are clear. The accompanying image shows the scenario.

Below are the questions I received:

If a power outage occurs at Frimley, the 2 hosts get a message from the UPS that there is a power outage. After 5 minutes (or any other configured value) the next action should start. But what will the next action be? If a scripted migration to a host at Bluefin starts, will DRS move some VMs back to Frimley? Or could the VMs be marked to stay at Bluefin? Should the hosts at Frimley be placed into maintenance mode so the migration is done automatically? And what happens if there is a total power outage at both Frimley and Bluefin? How could a controlled shutdown across hosts be arranged?

Let’s start breaking it down and answer where possible. The main question is how we handle power outages. As in any datacenter, this is fairly complex. Well, the powering-off part is easy; powering everything on in the right order isn’t. So where do we start? First of all:

- If you have a stretched cluster environment and, in this case, the Frimley data center has a power outage, it is recommended to place the hosts in maintenance mode. This way all VMs will be migrated to the Bluefin data center without disruption. Also, when power returns, it allows you to run checks on the hosts before introducing them to the cluster again.

- If maintenance mode is not used and a scripted migration is done, DRS will probably migrate the virtual machines back. DRS is triggered at least every 5 minutes. Avoid this: use maintenance mode!

- If there is an expected power outage and the environment is brought down, it will need to be manually powered on in the right order. You can also script this, but unfortunately a stretched cluster solution doesn’t cater for this type of failure.

- If there is an unexpected power outage and the environment is not brought down, vSphere HA will start restarting virtual machines when the hosts come back up again. This is done according to the “restart priority” that you can set with vSphere HA. It should be noted that the “restart priority” is only about the completion of the power-on task, not about the full boot of the virtual machine itself.
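To make the maintenance mode advice concrete, here is a minimal sketch in Python. It is a toy model, not DRS: the `Host` class, the `balance_pass` function and the host names are all hypothetical, and the "balancing" simply evens out VM counts across hosts that are not in maintenance mode. It only illustrates why a scripted migration without maintenance mode tends to be undone by the next balancing pass.

```python
from dataclasses import dataclass, field


@dataclass
class Host:
    name: str
    site: str
    maintenance: bool = False
    vms: list = field(default_factory=list)


def balance_pass(hosts):
    """Toy stand-in for a DRS pass (an assumption, not the real algorithm):
    move VMs from the busiest to the idlest eligible host until their
    VM counts differ by at most one. Hosts in maintenance mode are skipped."""
    pool = [h for h in hosts if not h.maintenance]
    moves = []
    if len(pool) < 2:
        return moves
    while True:
        busiest = max(pool, key=lambda h: len(h.vms))
        idlest = min(pool, key=lambda h: len(h.vms))
        if len(busiest.vms) - len(idlest.vms) <= 1:
            return moves
        vm = busiest.vms.pop()
        idlest.vms.append(vm)
        moves.append((vm, busiest.name, idlest.name))


def evacuate_site(hosts, site, use_maintenance_mode):
    """Move every VM off `site`, optionally flagging its hosts as being
    in maintenance mode first."""
    targets = [h for h in hosts if h.site != site]
    for host in (h for h in hosts if h.site == site):
        if use_maintenance_mode:
            host.maintenance = True
        while host.vms:
            min(targets, key=lambda h: len(h.vms)).vms.append(host.vms.pop())


def build_cluster():
    """Hypothetical four-host stretched cluster, two hosts per site."""
    return [
        Host("frimley-01", "Frimley", vms=["vm1", "vm2"]),
        Host("frimley-02", "Frimley", vms=["vm3", "vm4"]),
        Host("bluefin-01", "Bluefin", vms=["vm5", "vm6"]),
        Host("bluefin-02", "Bluefin", vms=["vm7", "vm8"]),
    ]


def vms_on_site(hosts, site):
    return sorted(vm for h in hosts if h.site == site for vm in h.vms)
```

In this model, calling `evacuate_site(cluster, "Frimley", use_maintenance_mode=False)` followed by `balance_pass(cluster)` puts VMs back on the Frimley hosts, while the same sequence with `use_maintenance_mode=True` leaves Frimley empty because its hosts are no longer eligible targets.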

I hope that clarifies things.

Great post, Duncan! As folks are beginning to implement these types of solutions, more and more questions of this nature are coming up.

One thing to also consider: if certain loads are kept on one side (datacenter) for a reason and a “must” DRS rule is employed for that, maintenance mode will not be able to migrate those VMs to the other side unless the “must” rule is disabled or adjusted.

Also, if the “must” rule is employed to keep the load on one datacenter, in case of an unexpected power failure of that datacenter, HA will not attempt to restart those VMs on the other side. HA will make sure the “must” rule is not violated. I am not for or against the “must” rule as long as we understand what it could do in certain situations.

On the flip side, a “should” rule gives HA the flexibility to restart VMs and violate the DRS rule. Credit: Duncan/Frank’s book(s)/blog(s)
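The difference between the two rule types can be sketched as a small placement filter. This is an illustrative model in Python, not vSphere code: the `Rule` enum, the `placement_candidates` function and the host names are hypothetical, and the logic only encodes the behavior described above (a “must” rule is never violated, a “should” rule is a preference).

```python
from enum import Enum


class Rule(Enum):
    MUST = "must"
    SHOULD = "should"


def placement_candidates(rule, preferred_site, available_hosts):
    """Return the hosts a VM may be placed on.

    available_hosts maps host name -> site, for hosts that are up and
    not in maintenance mode.
    """
    preferred = [h for h, s in available_hosts.items() if s == preferred_site]
    if preferred or rule is Rule.MUST:
        # A must rule is never violated: with no preferred host left the
        # result is empty, so the VM cannot be moved or restarted.
        return preferred
    # A should rule is only a preference: fall back to any surviving host.
    return list(available_hosts)
```

With only the Bluefin hosts available, a VM tied to Frimley by a “must” rule gets an empty candidate list, while the same VM under a “should” rule can land on any Bluefin host.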

Good summary. To complete this post, it should be mentioned that “cf forcetakeover -d” may have to be issued on the surviving node to initiate a site takeover on the storage side; otherwise some LUNs would fail.

The “cf forcetakeover -d” is for a disaster takeover. A disaster takeover says “do a forced takeover and assimilate the partner’s mirrored disks”. It assumes the partner site is destroyed (if temporary you should fence hosts from storage using some other technique) and is no longer reachable. In this case it seems the storage is still healthy on both sites, but the power will go away soon. So in this case you can shift processing using a normal takeover using “cf takeover” and then power off the storage in the soon-to-run-out-of-UPS site.

Hope that helps.

Cheers,

Chris Madden

Storage Architect, NetApp EMEA

Great post! Thanks a lot.

Hi Duncan, I was thinking about the scenario where you place all of your hosts at one site in maintenance mode. Let’s say, for example, you have 12 hosts at each site, and Frimley loses power. And say the 12 hosts at Frimley are fully loaded capacity-wise. If you don’t place all of your hosts at Frimley in maintenance mode at once (i.e., you do one or a few at a time), will DRS keep trying to migrate the vacated VMs to the other Frimley hosts that are still active in the cluster due to the “should” affinity rules (bogging them down)? Or do you just place all Frimley hosts in maintenance mode at the same time to avoid this?

I have never tested that scenario, Stacy, but considering it is a stretched cluster you typically have 50% spare resources. This means that if you place the hosts in maintenance mode one at a time, VMs will move within the site until there is no place left to go.
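A rough way to reason about this is to count migrations in a toy model. The Python sketch below is an untested-in-production illustration with made-up numbers: `evacuate_in_batches` is a hypothetical function, capacity is expressed in VM slots per host, and the “should” affinity is modeled as "pick a same-site host with room first, otherwise go to the other site".

```python
def evacuate_in_batches(site_hosts, remote_free_slots, capacity, batch_size):
    """Count the migrations needed to empty one site of a stretched cluster.

    site_hosts: VM count per host at the site being evacuated.
    remote_free_slots: free VM slots at the other site.
    capacity: VM slots per host.
    batch_size: hosts put into maintenance mode per step.
    Returns (moves that stayed within the site, moves to the other site).
    """
    active = list(site_hosts)
    local_moves = remote_moves = 0
    while active:
        batch, active = active[:batch_size], active[batch_size:]
        for vms in batch:
            for _ in range(vms):
                # "Should" affinity: prefer a same-site host with room.
                target = next(
                    (i for i, load in enumerate(active) if load < capacity),
                    None,
                )
                if target is not None:
                    active[target] += 1
                    local_moves += 1
                elif remote_free_slots > 0:
                    remote_free_slots -= 1
                    remote_moves += 1
                else:
                    raise RuntimeError("no capacity left anywhere")
    return local_moves, remote_moves
```

For 12 hosts running 5 VMs each with 10 slots per host (50% spare), `evacuate_in_batches([5] * 12, 60, 10, 1)` returns `(55, 60)` in this model: 55 extra within-site migrations before all 60 VMs end up at the other site. Evacuating all 12 hosts at once, `evacuate_in_batches([5] * 12, 60, 10, 12)`, returns `(0, 60)`.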

It is a good suggestion though for a feature, site maintenance. I will create a feature request for it.

Great, thanks Duncan.