The second session I watched was HCI1603BU Tech Preview of Native vSAN Data Protection by Michael Ng. I already discussed vSAN Data Protection last year, but considering that the upcoming vSAN Beta includes this functionality, I felt it was worth covering again. Note that the beta will be a private beta, so if you are interested please sign up; you may be one of the customers selected for it.

Michael started out with an explanation of what an SDDC brings to customers, and how a digital foundation is crucial for any organization that wants to be competitive in the market. vSAN, of course, is a big part of the digital foundation, and for almost every customer data protection and data recovery are crucial. Michael went over the various vSAN use cases and also the availability and recoverability mechanisms before introducing Native vSAN Data Protection.

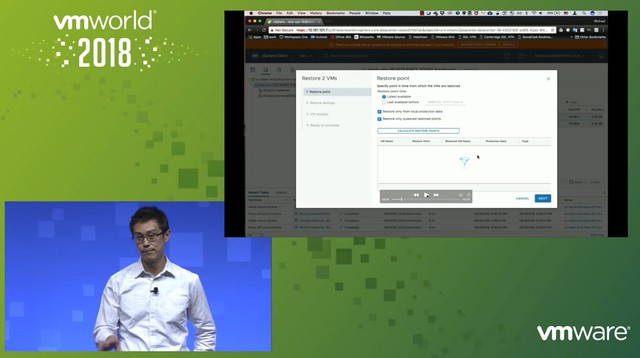

Now it is time for the vSAN Native Data Protection introduction. Michael first explained that in the future we will potentially have a solution where you can create snapshots locally simply by specifying the number of local snapshots you want in a policy. On top of that, we will potentially provide the option to specify that the snapshots (plus a full copy) need to be offloaded to secondary storage. Secondary storage could be NFS or S3 object storage, both on-premises and in the cloud. Also, it should be possible to replicate VMs and snapshots to a DR location through policies.

What I think is very compelling is the fact that the native protection comes as part of vSAN/vSphere; there’s no need to install an appliance or additional software. vSAN Data Protection will be baked into the platform. Easy to enable and easy to consume through policy. The first focus is vSAN Local Data Protection.

vSAN Local Data Protection will provide crash-consistent and application-consistent snapshots at an RPO of 5 minutes and with a low RTO. On top of that, it will be possible to restore a snapshot as an “instant clone”, which could be interesting when you want to test a certain patch or upgrade, for instance. You can even specify during the recovery that the NIC doesn’t need to be connected. Application consistency is achieved by leveraging VSS providers on Windows, while on Linux the VMware Tools pre- and post-scripts are used.

What enables vSAN Data Protection is a new snapshotting technology. This new technology provides much better performance than traditional vSphere (or vSAN) snapshots. It also scales better, meaning that you can go well above the limit of 32 snapshots we currently have.

Next Michael demoed vSAN Data Protection, which is something I have done on various occasions. If you are interested in what it looks like, just watch the session. If I have time I may record a demo myself, just so it is easier to share with you.

What I personally hadn’t seen yet were the additional performance views added. Very useful as it allows you to quickly check what the impact is of snapshots on general performance. Is there an impact? Do I need to change my policy?

Last but not least, various questions were asked. The most interesting parts were the following:

- “file level restore” is on the roadmap but the first feature they will tackle is offloading to secondary storage.

- “consistency groups” is something that is being planned for, especially useful when you have applications or services spanning VMs.

- Integration with vRealize Automation: some of it is planned for the first release, as everything is SPBM based, which already has APIs. “Self-service restore” is also being planned for.

- 100 snapshots per VM is tested for the first release
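
To put the numbers from the session in perspective, here is a quick back-of-the-envelope sketch of how far back a chain of local snapshots could reach. It assumes (my assumption, not something stated in the session) that snapshots are taken at a fixed interval equal to the RPO and that only the newest N are retained; the function name `retention_window` is made up for illustration and is not part of any vSAN API.

```python
from datetime import timedelta


def retention_window(snapshot_count: int, interval: timedelta) -> timedelta:
    """Upper bound on the age of the oldest retained snapshot.

    Assumes one snapshot per interval and that only the newest
    `snapshot_count` snapshots are kept (illustrative assumption).
    """
    if snapshot_count < 0:
        raise ValueError("snapshot_count must be non-negative")
    return snapshot_count * interval


rpo = timedelta(minutes=5)

# 100 snapshots per VM, the number tested for the first release
print(retention_window(100, rpo))  # 8:20:00

# 32 snapshots, the current limit mentioned earlier
print(retention_window(32, rpo))   # 2:40:00
```

So at a 5-minute RPO, 100 snapshots would cover a bit over 8 hours, versus well under 3 hours with 32. A longer interval or a tiered schedule would of course stretch that window.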

Good session, worth watching!