I just watched the session by Rakesh and Peng on Elastic vSAN, also known as “EBS Backed vSAN”. This session was high on my list to watch live at VMworld, but unfortunately I couldn’t attend due to various other obligations. If you are interested in the full session, make sure to watch it here; it is free. If you want to read a short summary, have a look below.

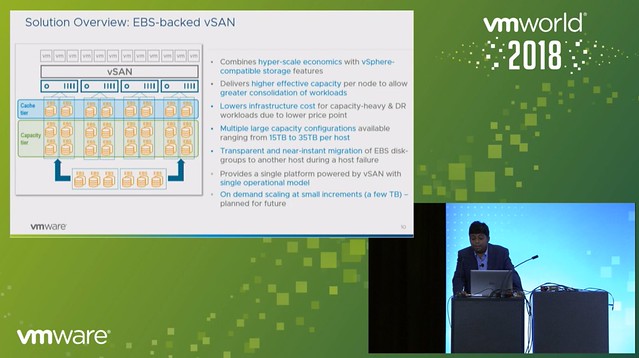

EBS backed vSAN is exactly what you expect it to be. Having said that, I do want to point out that it is supported for vSAN in VMware Cloud on AWS only. On top of that, it is recommended for workloads which require high capacity. You could, for instance, consider leveraging EBS backed vSAN as a high capacity target for DR as a Service. Of course, it could also be used in cases where there is sufficient CPU/memory capacity available and only storage needs to scale in VMware Cloud on AWS. 10TB is the capacity limit per host in VMC today, and EBS backed vSAN removes this limit. With EBS backed vSAN you can increase capacity to 15, 20, 25, 30 or 35TB per host, which means you can deliver up to 140TB of capacity in a single 4 node cluster. For 16 nodes that is 560TB!
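To make the capacity numbers concrete, here is a minimal Python sketch of the arithmetic. The function name and the validation are my own illustration, not something from the session, and the result is raw capacity before any RAID or compression effects.

```python
# Per-host capacity options mentioned in the session, in TB.
CAPACITY_OPTIONS_TB = (15, 20, 25, 30, 35)


def raw_cluster_capacity_tb(hosts: int, per_host_tb: int) -> int:
    """Raw cluster capacity in TB (hypothetical helper, illustration only)."""
    if hosts < 1:
        raise ValueError("a cluster needs at least one host")
    if per_host_tb not in CAPACITY_OPTIONS_TB:
        raise ValueError(f"per-host capacity must be one of {CAPACITY_OPTIONS_TB}")
    return hosts * per_host_tb


if __name__ == "__main__":
    # The two cluster sizes used as examples in the post.
    for hosts in (4, 16):
        print(hosts, "hosts:", raw_cluster_capacity_tb(hosts, 35), "TB raw")
```

With the maximum of 35TB per host this reproduces the 140TB and 560TB figures for 4 and 16 nodes.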

What is great about this solution is that it also solves another problem. Everyone knows that a host failure results in resyncing data, and depending on how much capacity the host was delivering this could take a long time. With EBS backed vSAN this problem no longer exists. When a host fails, the EBS volumes are simply mounted to another host, or to a new host when one is introduced. This is a huge benefit if you ask me, even when there’s a high change rate, as this happens within seconds.

One thing to point out as a constraint though is that today in VMC you can’t run the management workloads on EBS backed vSAN just yet. Rakesh did mention that this is being tested.

Next, the architecture was discussed, and this is where Peng took over. He mentioned that the IOPS limit is set to 10K per device (regardless of its size) and the throughput is limited to 160MBps. All of this is typically delivered with sub-millisecond latency, which is very impressive. Peng also mentioned that EBS backed vSAN provided very consistent and predictable performance in all tests. On top of that, EBS backed vSAN is also very reliable and highly available, even when compared to flash devices.

What I found interesting is the architecture. vSAN gets presented a SCSI device; however, EBS is network attached, so an EBS protocol client was implemented and then presented as an NVMe target through the PCI-e interface. The PCI-e interface allows for multi-volume, hot-add and hot-remove. This is what allows the EBS devices to be removed from a host which has failed (or has a failure) and then added to a healthy host.
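The hot-remove and hot-add behavior can be pictured with a toy model. This is only a conceptual sketch: the `Host` class, the `fail_over` function and the volume names are hypothetical and do not correspond to any VMware or AWS API.

```python
from dataclasses import dataclass, field
from typing import Set


@dataclass
class Host:
    """Toy stand-in for an ESXi host and its attached EBS volumes."""
    name: str
    healthy: bool = True
    volumes: Set[str] = field(default_factory=set)


def fail_over(failed: Host, target: Host) -> None:
    """Move every volume from a failed host to a healthy one.

    The data stays on the network-attached volumes, so in this model
    nothing is copied or resynced; only the attachment changes.
    """
    if not target.healthy:
        raise ValueError("target host must be healthy")
    failed.healthy = False
    while failed.volumes:
        volume = failed.volumes.pop()  # hot-remove from the failed host
        target.volumes.add(volume)     # hot-add to the healthy host


if __name__ == "__main__":
    esx1 = Host("esx-1", volumes={"vol-a", "vol-b", "vol-c"})
    esx2 = Host("esx-2")
    fail_over(esx1, esx2)
    print(esx2.name, "now holds", sorted(esx2.volumes))
```

The point of the model is that a failover is a change of ownership rather than a data rebuild, which is why it can complete quickly regardless of how much capacity the failed host was serving.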

When EBS backed vSAN is enabled, each host will have 3 disk groups, and each disk group will have 3-7 capacity disks. Note that it is recommended to use RAID-5 for space efficiency, and that “Compression only mode” is enabled on these disk groups. Considering the target workloads and the architecture (and the EBS performance constraints), it didn’t make sense to use deduplication, hence the vSAN team implemented a solution where it is possible to have only compression enabled. Some I/O amplification is not an issue when you run all-flash and have hundreds of thousands of IOPS per device, but as stated EBS is limited to 10K IOPS per device, which means you need to be smart about how you use those resources.
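To get a feel for why those per-device limits matter, here is a rough back-of-the-envelope sketch in Python. It assumes every capacity disk is one EBS volume with the 10K IOPS and 160MBps caps mentioned in the session, and it assumes the 3+1 RAID-5 layout (1.33x overhead) that vSAN used at the time. The helper names are mine, and the results are simple upper bounds that ignore the cache tier, the network and write amplification.

```python
DISK_GROUPS_PER_HOST = 3   # from the session
IOPS_PER_VOLUME = 10_000   # per-device IOPS cap from the session
MBPS_PER_VOLUME = 160      # per-device throughput cap from the session


def host_ceilings(disks_per_group: int) -> dict:
    """Naive per-host ceilings, assuming one EBS volume per capacity disk."""
    if not 3 <= disks_per_group <= 7:
        raise ValueError("each disk group has 3-7 capacity disks")
    volumes = DISK_GROUPS_PER_HOST * disks_per_group
    return {
        "volumes": volumes,
        "iops": volumes * IOPS_PER_VOLUME,
        "mbps": volumes * MBPS_PER_VOLUME,
    }


def raid5_usable_tb(raw_tb: float) -> float:
    """Usable capacity under an assumed 3 data + 1 parity RAID-5 layout."""
    return raw_tb * 3 / 4


if __name__ == "__main__":
    for disks in (3, 7):
        print(disks, "disks per group:", host_ceilings(disks))
    print("Usable TB from 140 TB raw:", raid5_usable_tb(140))
```

Even at the largest configuration the aggregate ceiling per host is in the range a single flash device can reach on its own, which is the reasoning behind avoiding the extra I/O that deduplication would generate.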

During the Q&A, one thing that was mentioned, which I found interesting, is that although today EBS backed vSAN needs to be introduced in certain increments across the whole cluster, that will not be the case in the future. According to Peng, it should eventually be possible to add EBS volumes to disk groups on particular hosts, allowing for full and optimal flexibility.

And for those who didn’t know, the VMworld Hands-On Labs were running on top of EBS backed vSAN, and performance was above expectations!

I love it.

Hi Duncan,

It's amazing. So, as I understand it, if you don't use EBS backed vSAN you can only increase storage in vSAN on AWS by using EBS volumes. So can you add a disk group based on EBS, or mix EBS with the standard storage of the hosts? I suppose we have the same limit of 5 disk groups per host, right?

For a while I assumed that ESXi hosts on AWS were ECS instances, but reviewing other articles from Cormac I see that ESXi runs on i3p (bare metal).

Thanks for all the information that you share.

We are waiting for a feature that enables iSCSI targets for stretched clusters, which would be very useful for giving vSAN-backed storage to physical hosts.

That is correct, VMConAWS uses i3p and is not running nested or anything like that. We have these instances with and without NVMe. You can deploy with local NVMe and that will give you amazing performance, however it has a limited amount of capacity as AWS has a very specific hardware configuration and doesn’t offer the ability to expand right now. So the VMware team worked tirelessly to introduce Elastic vSAN, which now allows you to use EBS devices for your vSAN environment. Note that this is still in the early phases, so the whole cluster needs to be EBS backed at the moment, and for instance the management stack can’t run on it just yet. But this is being actively worked on, so I am guessing most constraints will be solved soon!