I just noticed that the Horizon documentation is offered in epub and mobi format. I have been told that this is the first of many more docs to be released in this more universal format. I am happy that VMware decided to adopt this format. It does lead to another question though. I am part of tech marketing and we produce a lot of collateral. Some documents are fairly lengthy and I always have the feeling that many people won’t read docs which are more than 50 pages. Is this any different with epub/mobi? Would you say that these formats enhance readability? If not, what would be a good way of offering documents of between 50 – 150 pages?

cloud

Storage IO Control Best Practices

After attending Irfan Ahmad’s session on Storage IO Control at VMworld I had the pleasure of sitting down with Irfan to discuss SIOC. Irfan was kind enough to review my SIOC articles (1, 2) and we discussed a couple of other things as well. The discussion and the Storage IO Control session contained some real gems, and before my brain resets itself I wanted to have these documented.

Storage IO Control Best Practices:

- Enable Storage IO Control on all datastores

- Avoid external access for SIOC enabled datastores

  - To avoid any interference, SIOC will stop throttling; more info here.

- When multiple datastores share the same set of spindles, ensure all of them have SIOC enabled with comparable settings.

- Change the latency threshold based on the storage media type used:

  - For FC storage the recommended latency threshold is 20 – 30 ms

  - For SAS storage the recommended latency threshold is 20 – 30 ms

  - For SATA storage the recommended latency threshold is 30 – 50 ms

  - For SSD storage the recommended latency threshold is 15 – 20 ms

- Define a limit per VM for IOPS to avoid a single VM flooding the array

  - For instance, limit the number of IOPS per VM to 1000
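The recommended ranges above are easy to capture in a small lookup. The following is a minimal sketch for sanity-checking a chosen threshold; the names `RECOMMENDED_THRESHOLD_MS` and `threshold_is_recommended` are made up for illustration and are not part of any VMware tool or API.

```python
# Recommended SIOC latency thresholds (in milliseconds) per storage media
# type, taken from the best-practices list above.
RECOMMENDED_THRESHOLD_MS = {
    "FC": (20, 30),
    "SAS": (20, 30),
    "SATA": (30, 50),
    "SSD": (15, 20),
}


def threshold_is_recommended(media_type: str, threshold_ms: float) -> bool:
    """Return True if threshold_ms falls inside the recommended range."""
    low, high = RECOMMENDED_THRESHOLD_MS[media_type.upper()]
    return low <= threshold_ms <= high


if __name__ == "__main__":
    # The default threshold of 30 ms fits FC but is too high for SSD.
    print(threshold_is_recommended("FC", 30))
    print(threshold_is_recommended("SSD", 30))
```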

SIOC, tying up some loose ends

After my initial post about Storage IO Control I received a whole bunch of questions. Instead of replying via the commenting system I decided to add them to a blog post, as it would be useful for everyone to read this. Now, I figured this stuff out by reading the PARDA whitepaper 6 times and by going through the log files and CLI of my ESXi host, so this is not cast in stone. If anyone has any additional questions, don’t hesitate to ask them and I’ll be happy to add them and try to answer them!

Here are the questions with the answers underneath in italic:

- Q: Why is SIOC not enabled by default?

A: As datastores can be shared between clusters, clusters could be differently licensed and as such SIOC is not enabled by default. - Q: If vCenter is only needed when enabling the feature, who will keep track of latencies when a datastore is shared between multiple hosts?

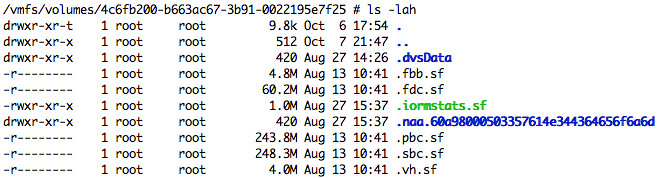

A: Latency values are actually stored on the Datastore itself. From the PARDA academic paper, I figured two methods could be used for this either through network communication or as stated by using the Datastore. Notice the file “iormstat.sf” in green in the screenshot below, I guess that answers the question… the datastore itself is used to communicate the latency of a datastore. I also confirmed with Irfan that my assessment was true.

- Q: Where does datastore-wide disk scheduler run from?

A: The datastore-wide disk scheduler is essentially SIOC, also known as the “PARDA Control Algorithm”, and runs on each host sharing that datastore. PARDA consists of two key components: “latency estimation” and “window size computation”. Latency estimation is used to detect if SIOC needs to throttle queues to ensure each VM gets its fair share. Window size computation is used to calculate what this queue depth should be for your host.

- Q: Is PARDA also responsible for throttling the VM?

A: No. PARDA itself, or better said the two major processes that form PARDA (latency estimation and window size computation), doesn’t control “host local” fairness; the local scheduler (SFQ) is responsible for that.

- Q: Can we in any way control the I/O contention in a vCD VM environment (say one VM running high I/O impacting another VM running on the same host/datastore)?

A: I would highly recommend enabling this in vCloud environments to prevent storage based DoS attacks (or just noisy neighbors) and to ensure IO fairness can be preserved. This is one of the reasons VMware developed this mechanism.

- Q: I can’t enable SIOC with an Enterprise licence – “License not available to perform the operation”. Is it Enterprise Plus only?

A: SIOC requires Enterprise Plus.

- Q: Can I verify what the latency is?

A: Yes you can. Go to the Host – Performance Tab and select “Datastore”, “Real Time”, select the datastore and select “Storage I/O Control normalized latency”. Please note that the unit of measurement is microseconds!

- Q: This doesn’t appear to work in NFS?

A: SIOC can only be enabled on VMFS volumes currently.
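To make the “window size computation” mentioned in the answers above a bit more tangible, here is a minimal sketch of the control law as I understand it from the PARDA paper: the new window is a smoothed blend of the current window and a value scaled by the ratio of the latency threshold to the observed latency. The values for `gamma` and `beta` are illustrative assumptions, and the clamping to 4 and 32 simply reuses the queue depth numbers from these posts. This is not the actual ESX implementation.

```python
MIN_WINDOW = 4    # SIOC will not go below a device queue depth of 4
MAX_WINDOW = 32   # device queue depth used in the example diagrams


def next_window(current_window: float,
                observed_latency_ms: float,
                threshold_ms: float = 30.0,
                gamma: float = 0.2,   # smoothing factor (assumed value)
                beta: float = 2.0     # per-host term tied to shares (assumed value)
                ) -> float:
    """One step of a PARDA-style window (queue depth) update.

    w(t+1) = (1 - gamma) * w(t) + gamma * (threshold / latency * w(t) + beta)
    """
    target = (threshold_ms / observed_latency_ms) * current_window + beta
    window = (1.0 - gamma) * current_window + gamma * target
    # Keep the result within the assumed queue depth bounds.
    return max(MIN_WINDOW, min(MAX_WINDOW, window))


if __name__ == "__main__":
    window = 32.0
    # Latency stays at twice the threshold, so the window shrinks each step.
    for _ in range(5):
        window = next_window(window, observed_latency_ms=60.0)
        print(window)
```

When the observed latency is above the threshold the ratio is smaller than 1 and the window shrinks; when latency drops below the threshold the window grows again until it hits the upper bound.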

If you happen to be at VMworld next week, make sure to attend this session: TA8233 Prioritizing Storage Resource Allocation in ESX Based Virtual Environments Using Storage I/O Control!

Storage I/O Fairness

I was preparing a post on Storage I/O Control (SIOC) when I noticed this article by Alex Bakman. Alex managed to capture the essence of SIOC in just two sentences.

Without setting the shares you can simply enable Storage I/O controls on each datastore. This will prevent any one VM from monopolizing the datastore by leveling out all requests for I/O that the datastore receives.

This is exactly the reason why I would recommend anyone who has a large environment, and even more specifically a cloud environment, to enable SIOC. Especially in very large environments where compute, storage and network resources are designed to accommodate the highest common factor, it is important to ensure that all entities can claim their fair share of resources, and in this case SIOC will do just that.

Now the question is how does this actually work? I already wrote a short article on it a while back, but I guess it can’t hurt to reiterate things and to expand a bit.

First a bunch of facts I wanted to make sure were documented:

- SIOC is disabled by default

- SIOC needs to be enabled on a per Datastore level

- SIOC only engages when a specific level of latency has been reached

- SIOC has a default latency threshold of 30 ms

- SIOC uses an average latency across hosts

- SIOC uses disk shares to assign I/O queue slots

- SIOC does not use vCenter, except for enabling the feature
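The trigger condition in the facts above can be sketched in a few lines. This is a simplified illustration that assumes a plain unweighted mean of the per-host latencies; the function name and the sample values are made up, and the real calculation may weigh hosts differently.

```python
DEFAULT_THRESHOLD_MS = 30.0  # default SIOC latency threshold


def sioc_should_engage(host_latencies_ms: dict,
                       threshold_ms: float = DEFAULT_THRESHOLD_MS) -> bool:
    """Return True when the average latency across hosts reaches the threshold.

    Assumption: a simple unweighted mean across all hosts sharing the datastore.
    """
    average = sum(host_latencies_ms.values()) / len(host_latencies_ms)
    return average >= threshold_ms


if __name__ == "__main__":
    # Average is 33 ms, which is above the 30 ms default.
    print(sioc_should_engage({"ESX01": 25.0, "ESX02": 41.0}))
    # Average is 25 ms, so SIOC would not engage.
    print(sioc_should_engage({"ESX01": 20.0, "ESX02": 30.0}))
```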

When SIOC is enabled, disk shares are used to give each VM its fair share of resources in times of contention. Contention in this case is measured in latency. As stated above, when latency is equal to or higher than 30 ms (the statistics around this are computed every 4 seconds), the “datastore-wide disk scheduler” will determine which action to take to reduce the overall / average latency and increase fairness. I guess the best way to explain what happens is by using an example.

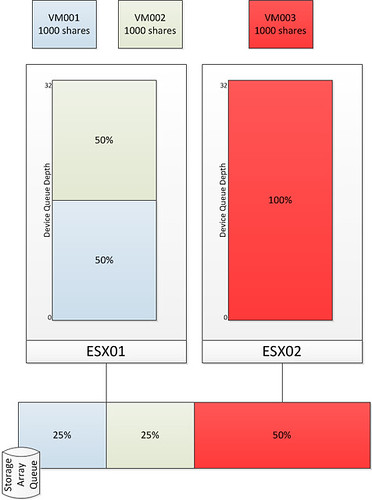

As stated earlier, I want to keep this post fairly simple and I am using the example of an environment where every VM has the same amount of shares. I have also limited the number of VMs and hosts in the diagrams. Those of you who attended VMworld session TA8233 (Ajay and Chethan) will recognize these diagrams; I recreated and slightly modified them.

The first diagram shows three virtual machines. VM001 and VM002 are hosted on ESX01 and VM003 is hosted on ESX02. Each VM has disk shares set to a value of 1000. As Storage I/O Control is disabled, there is no mechanism to regulate the I/O on a datastore level. As shown at the bottom by the Storage Array Queue, in this case VM003 ends up getting more resources than VM001 and VM002, while from a shares perspective all of them were entitled to the exact same amount of resources. Please note that both Device Queue Depths are 32, which is the key to Storage I/O Control, but I will explain that after the next diagram.

As stated, without SIOC there is nothing that regulates the I/O on a datastore level. The next diagram shows the same scenario but with SIOC enabled.

After SIOC has been enabled it will start monitoring the datastore. If the specified latency threshold has been reached for the datastore (default: average I/O latency of 30 ms), SIOC will be triggered to take action and to resolve this possible imbalance. SIOC will then limit the number of I/Os a host can issue. It does this by throttling the host device queue, which is shown in the diagram and labeled as “Device Queue Depth”. As can be seen, the queue depth of ESX02 is decreased to 16. Note that SIOC will not go below a device queue depth of 4.

Before it limits the host it will of course need to know what to limit it to. The “datastore-wide disk scheduler” will sum up the disk shares for each of the VMDKs. In the case of ESX01 that is 2000 and in the case of ESX02 it is 1000. Next, the “datastore-wide disk scheduler” will calculate the I/O slot entitlement based on the host level shares and it will throttle the queue. Now I can hear you think: what about the VM, will it be throttled at all? Well, the VM is controlled by the Host Local Scheduler (also sometimes referred to as SFQ), and resources on a per VM level will be divided by the Host Local Scheduler based on the VM level shares.
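The arithmetic in this example can be sketched as follows. This is a simplified illustration and not the actual SIOC algorithm: it assumes the host with the most shares keeps the full device queue depth of 32 and every other host is scaled proportionally, never dropping below 4. The function names are made up for this example.

```python
MAX_QUEUE_DEPTH = 32  # device queue depth from the example diagrams
MIN_QUEUE_DEPTH = 4   # SIOC will not throttle below this value


def host_level_shares(vm_shares: dict, placement: dict) -> dict:
    """Sum the disk shares of all VMs per host."""
    totals = {}
    for vm, host in placement.items():
        totals[host] = totals.get(host, 0) + vm_shares[vm]
    return totals


def queue_depths(totals: dict) -> dict:
    """Scale each host's queue depth relative to the host with the most shares.

    Assumption: purely proportional scaling, used here only for illustration.
    """
    top = max(totals.values())
    return {
        host: max(MIN_QUEUE_DEPTH, round(MAX_QUEUE_DEPTH * shares / top))
        for host, shares in totals.items()
    }


if __name__ == "__main__":
    vm_shares = {"VM001": 1000, "VM002": 1000, "VM003": 1000}
    placement = {"VM001": "ESX01", "VM002": "ESX01", "VM003": "ESX02"}
    totals = host_level_shares(vm_shares, placement)
    print(totals)                # {'ESX01': 2000, 'ESX02': 1000}
    print(queue_depths(totals))  # {'ESX01': 32, 'ESX02': 16}
```

With two VMs on ESX01 and one on ESX02, ESX02 ends up with half the queue depth of ESX01, which lines up with the 16 shown in the diagram.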

I guess to conclude all there is left to say is: Enable SIOC and benefit from its fairness mechanism…. You can’t afford a single VM flooding your array. SIOC is the foundation of your (virtual) storage architecture, use it!

References:

PARDA whitepaper

Storage I/O Control whitepaper

VMworld Storage DRS session

VMworld Storage I/O Control session

vCD – Networking part 1 – Intro

After my introduction on vCD last week, I thought it was time to publish an article on networking. Networking is most likely the most complex concept of vCD (VMware vCloud Director) and can at times be very confusing. I have created three articles which explain the concepts of networking within vCD and, of course, how things work on a technical level (including the vSphere layer).

If there are any questions don’t hesitate to leave a comment. Please note that I am deliberately trying to simplify things in this first article as I don’t want you to get lost in any of the layers of networking vCD offers.

Layered

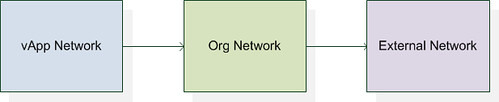

Networking within vCD is built up out of 3 distinct layers.

- External Network

- Org Network

- vApp Network

These three layers have been created to give the end-user the flexibility needed in a multi purpose virtual datacenter. I have depicted all three layers in the following diagram which shows the logical relationship between the layers:

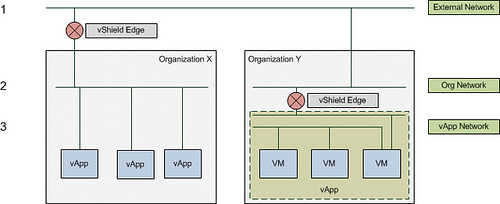

Some of you technical guys might say, that’s nice but I would like to see something less abstract. I created the following diagram which depicts the different layers in a different way. The diagram shows the three layers. I created a single External Network which links to two Org Networks. These Org Networks in turn connect to multiple VMs (Org Y) and multiple vApps (Org X).

This is just an example, however, that illustrates possible network connections, and as can clearly be seen it can be rather complex. As demonstrated, there are multiple ways to connect vApps to each other or to the outside world.

Now that we know some of the basics I will break down the three layers of networking. We will do this first, before we discuss any of the advanced options like vShield Edge or network pools.

External Network

The External Network is used for inter-Cloud connections. Or as I like to call it “your connection to the outside world”. It is the first network “object” that you create within vCD. An External Network is always backed by a Portgroup, meaning that a portgroup needs to exist within vSphere before you can create this vCD network object. This portgroup can be on a regular vSwitch, a dvSwitch or you could use Nexus 1KV. It all works, and all of them are supported!

Of course it is highly recommended to set this portgroup up with a VLAN for layer 2 isolation. Again, note that this is an outbound facing connection for your Org or for multiple Orgs.

Examples of External Networks are:

- VPN to customer site

- Internet connection

As said, an external network can be shared between organizations but is typically created per organization and is your connection from or to your virtual datacenter.

I would like to stress that the external network is your exit from your virtual datacenter, or your entrance!

Org Network

The second object that is created is the Org Network. The Org Network is used for intra-Cloud connections. Or as I like to call it “Cloud internal traffic”. An Org Network is linked to an organization and can be:

- Directly connected to an External Network

- NAT/Routed connected to an External Network

- Completely Isolated

This means that although an Org Network is primarily intended for internal traffic, it can be linked to an External Network to create an entry to or exit from your virtual datacenter. Note that it doesn’t necessarily need to be connected to an External Network; it could be completely isolated and used for “Cloud internal traffic” only! A use case for this would be, for instance, a test/dev environment where vApps need to communicate with each other but not with the tenant’s back end.

It should also be noted that the Org Network is mandatory! Every organization needs an Org Network, it is the only mandatory network object.

Just for completeness, an Org Network consumes a segment from a Network Pool when it is NAT/Routed or Isolated. A network pool is a collection of L2 networks which will be automatically consumed by vCD when needed, and what I call a segment is one of those L2 networks… basically a portgroup. I will explain Network Pools more in-depth in part 2.

When an Org Network is directly connected, it is just a logical entity and physically doesn’t exist. Again, in one of the following articles (part 3) I will explain what that looks like in vCenter.

vApp Network

The vApp Network kind of resembles the Org Network as it also consumes a segment from a Network Pool. The vApp Network enables you to have a vApp internal network, which could be useful for isolating specific VMs of a vApp. The vApp Network can be:

- Directly connected to an Org Network

- NAT/Routed to an Org Network

- Completely Isolated

It should be noted that the “directly connected” option for both the Org Network and the vApp Network is just a logical connection. In the back-end it will be directly connected to the layer above.
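To summarize how the three layers relate, here is a small illustrative data model. It is only a sketch of the concepts described above and has nothing to do with the actual vCD API; all class and field names are invented. It also assumes that a vApp Network, like an Org Network, only consumes a Network Pool segment when it is NAT/Routed or Isolated.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Connection(Enum):
    DIRECT = "direct"
    NAT_ROUTED = "nat_routed"
    ISOLATED = "isolated"


@dataclass
class ExternalNetwork:
    name: str
    portgroup: str  # must already exist in vSphere


@dataclass
class LayeredNetwork:
    """Shared behaviour of Org and vApp Networks in this sketch."""
    name: str
    connection: Connection
    parent: Optional[object] = None  # the layer above, if connected

    def __post_init__(self):
        isolated = self.connection is Connection.ISOLATED
        if isolated and self.parent is not None:
            raise ValueError("an isolated network has no parent network")
        if not isolated and self.parent is None:
            raise ValueError("a connected network needs a parent network")

    @property
    def consumes_pool_segment(self) -> bool:
        # Directly connected networks are purely logical objects.
        return self.connection is not Connection.DIRECT


class OrgNetwork(LayeredNetwork):
    """Parent, if any, is an ExternalNetwork."""


class VAppNetwork(LayeredNetwork):
    """Parent, if any, is an OrgNetwork."""


if __name__ == "__main__":
    internet = ExternalNetwork("Internet", portgroup="pg-internet")
    org_net = OrgNetwork("OrgX-routed", Connection.NAT_ROUTED, internet)
    web_net = VAppNetwork("web", Connection.DIRECT, org_net)
    db_net = VAppNetwork("db", Connection.ISOLATED)
    for net in (org_net, web_net, db_net):
        print(net.name, net.consumes_pool_segment)
```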

As shown in an earlier diagram and explained above, a vApp can contain multiple networks. This can be used to isolate specific VMs from the outside world. An example is shown in the following diagram, where only the Web server has a connection to the Org Network and the App and Database servers are isolated but do connect to the Web server.

Summary

vCD has three different layers of networking. Each of these layers has a specific purpose. The External Network is your connection to the outside world, the Org Network is linked to a specific Organization and the vApp network only resides within a vApp.

That is it for Part 1. Part 2 will focus on the Network Pools and Part 3 will focus on what these vApp, Org and External Networks look like on a vSphere layer and some general best practices.

My tip of the day: if you want to get to know vCD really well, check vCenter every time you make a change and see what happens!

UPDATE: for a full schematic overview check Hany’s awesome diagram.