A customer asked me today why every VM showed all HA responses as disabled. This customer is running vSphere 6.5, and below you can see what the UI showed. Note that it still says the VM is Protected, yet none of the protection mechanisms appear to be enabled.

I asked them for a screenshot of their HA configuration, and it actually had several of these response mechanisms enabled. I checked my vSphere 6.5 lab and saw the same problem: there’s a UI issue in the VM-level details for vSphere HA in vSphere 6.5. I verified with engineering, and this is indeed a known issue, which has been fixed in vCenter Server 6.5.0b! A KB article on the topic can be found here, and the release notes for 6.5.0b mention the fix.

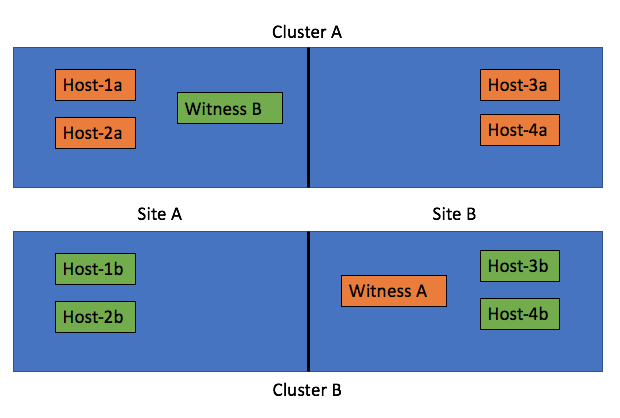

Earlier this week I was on the phone with Rawlinson Rivera, my former VMware/vSAN colleague, and he told me all about the new stuff for
