Over the last couple of weeks, I’ve had conversations with customers and partners who have been running performance benchmarks against both vSAN ESA and vSAN OSA. As you can imagine, people want to compare version 8 of OSA against version 8 of ESA, and that is completely fair. What I noticed though is that some of those customers came back with comments around CPU usage of vSAN OSA against ESA. The general comment we get is that vSAN ESA is using more CPU cycles than vSAN OSA.

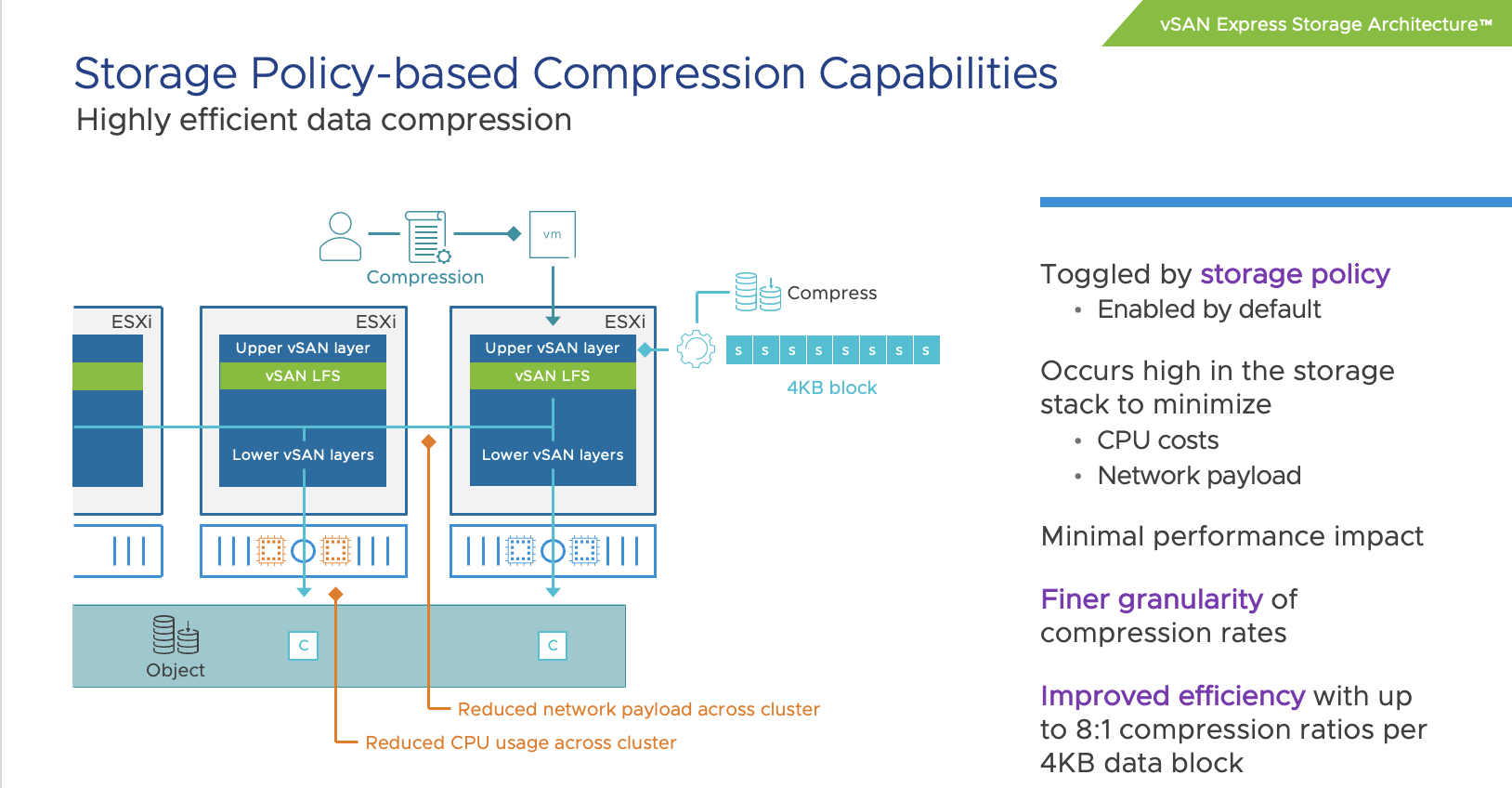

When looking at it from a total number point of view, or CPU cycles consumed, it is very likely you will see vSAN ESA using more cycles than vSAN OSA. The question then typically arises why that is the case, as VMware (the vSAN team) has been claiming that vSAN ESA is much more efficient than vSAN OSA. To be fair, it is much more efficient. For instance data services like checksumming, encryption, and compression have moved to the top of the stack (as shown below) resulting in the fact that we don’t have to compress/encrypt data 3/4/5/6 times but can do it once at the source and then send it over the network to the destination.

Still, it leaves the question, why is more CPU capacity used? The answer is simple, you are pushing much more IO. We’ve seen customers easily reaching 4x the number of IOPS with ESA than with OSA. Even though ESA is more efficient, if you are pushing 4x (or more) the amount of IO then you will need to remember that those additional IOs also come at a cost, and that cost is CPU cycles to process them. So when you make a comparison, please compare apples to apples, and not apples to oranges.

The last thing I want to add, and hopefully I can share some data in the future, the use of RDMA with vSAN 8 ESA seems to have a significant impact on CPU usage, as in lower the amount of CPU required to produce the same results (or better results). So it is worth considering RDMA for sure when adopting vSAN 8 ESA!

.png)