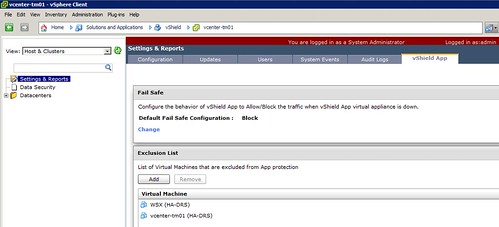

A while back I posted a hack to exclude your vCenter Server from vShield App protection. I discussed this hack with the vShield team and asked whether similar functionality could be added to vShield. I was pleasantly surprised to see that they managed to slip it into the vShield App 5.0.1 release. What a quick turnaround! The procedure is described on page 51 of the admin guide. I tested it myself, and here are the steps I took:

- Log in to the vShield Manager.

- Click Settings & Reports from the vShield Manager inventory panel.

- Click the vShield App tab.

- On the Exclusion List, click Add. The Add Virtual Machines to Exclude dialog box opens.

- Click in the field next to Select and click the virtual machine you want to exclude.

- Click Select. The selected virtual machine is added to the list.

- Click OK.
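If you have to exclude the same VMs on several vShield Managers, the steps above may also be scriptable over the REST API. The sketch below is only an illustration under stated assumptions: it assumes the exclusion list is exposed at `/api/2.1/app/excludelist/<vm-moref>` with HTTP Basic authentication, which is how later NSX for vSphere releases document it. I have not confirmed that vShield App 5.0.1 uses the same path, so check the API guide for your version first. The host name, credentials and VM managed object IDs are hypothetical placeholders.

```python
import base64
import urllib.request

# ASSUMPTION: endpoint path taken from later NSX for vSphere API guides;
# verify it against the vShield API guide for your release before use.
EXCLUDE_LIST_PATH = "/api/2.1/app/excludelist"


def build_exclude_request(manager: str, vm_moref: str,
                          user: str, password: str) -> urllib.request.Request:
    """Build (but do not send) a PUT request that adds one VM to the exclusion list."""
    url = f"https://{manager}{EXCLUDE_LIST_PATH}/{vm_moref}"
    # HTTP Basic authentication header built from the vShield Manager credentials
    token = base64.b64encode(f"{user}:{password}".encode()).decode()
    return urllib.request.Request(
        url,
        method="PUT",
        headers={"Authorization": f"Basic {token}"},
    )


def exclude_vms(manager, vm_morefs, user, password, context=None):
    """Send one PUT per VM and return the HTTP status codes in order.

    Pass an ssl.SSLContext as `context` if the manager uses a
    self-signed certificate that your system does not trust.
    """
    statuses = []
    for moref in vm_morefs:
        req = build_exclude_request(manager, moref, user, password)
        with urllib.request.urlopen(req, context=context) as resp:
            statuses.append(resp.status)
    return statuses
```

For example, `exclude_vms("vsm.example.local", ["vm-42", "vm-57"], "admin", "secret")` would, under the assumptions above, add two hypothetical VMs (say, vCenter and a WSX server) to the list. The request builder is kept separate from the network call so you can inspect the exact URL and method before anything touches your vShield Manager.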

In my case, I excluded both my WSX server and my vCenter Server instance: