While I was answering some questions in the vSphere HA section of the VMTN forum, the “poweron” file came up. I have received other questions about this file as well, so a public blog post makes the most sense.

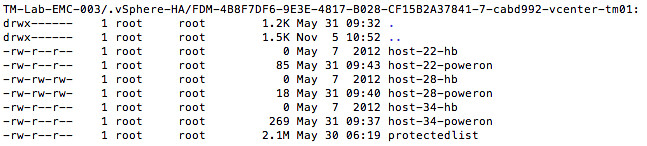

Each host in a vSphere HA cluster keeps track of the power state of the virtual machines it is hosting. This set of powered-on virtual machines is stored in the “poweron” file. Note that this applies to both the master and the slave hosts in your cluster. The file is located on your VMFS volumes in the hidden directory “.vSphere-HA/<FDM cluster ID>”.

The naming scheme for this file is as follows:

host-<id>-poweron
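
To make the naming scheme concrete, here is a minimal Python sketch that pulls the host id out of such a file name. The function name and the regular expression are my own illustration and not part of vSphere HA. The exact format of the id is not covered here, so the pattern simply accepts anything between "host-" and "-poweron".

```python
import re
from typing import Optional

# Assumption: the id may contain any characters; only the "host-" prefix
# and the "-poweron" suffix come from the naming scheme above.
POWERON_NAME = re.compile(r"^host-(?P<host_id>.+)-poweron$")


def host_id_from_poweron_name(filename: str) -> Optional[str]:
    """Return the <id> part of a host-<id>-poweron file name, or None."""
    match = POWERON_NAME.match(filename)
    return match.group("host_id") if match else None
```

Calling it with a name that does not follow the scheme returns None, which makes it easy to filter a directory listing down to the “poweron” files.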

Tracking virtual machine power state is not the only thing the “poweron” file is used for. The file is also used by a slave to inform the master that it is isolated from the management network: the top line of the file contains either a “0” (zero) or a “1”. A “0” means not isolated and a “1” means isolated. The master will inform vCenter about the isolation of the host.
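
As a rough illustration of how the top line could be interpreted, the Python sketch below splits the contents of a “poweron” file into the isolation flag and the remaining lines. This is only a sketch: the function name is hypothetical, and the layout of everything after the first line is an assumption on my part, so those lines are returned untouched as opaque strings.

```python
from typing import List, Tuple


def parse_poweron(text: str) -> Tuple[bool, List[str]]:
    """Return (isolated, remaining_lines) for the contents of a poweron file."""
    lines = text.splitlines()
    if not lines:
        raise ValueError("empty poweron file")

    # Top line: "0" means not isolated, "1" means isolated.
    flag = lines[0].strip()
    if flag not in ("0", "1"):
        raise ValueError(f"unexpected isolation flag: {flag!r}")

    # Assumption: the rest of the file describes the powered-on virtual
    # machines; the exact format is not covered here, so keep it as-is.
    remaining = [line for line in lines[1:] if line.strip()]
    return flag == "1", remaining
```

Rejecting anything other than “0” or “1” on the first line is a deliberate choice here: silently treating an unreadable flag as "not isolated" would hide exactly the situation this check is meant to catch.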

This also means that if a host is not sending any heartbeats to the master, the master will check whether that host has been isolated by reading the “poweron” file. This can be considered an extra check on top of the “datastore heartbeating” mechanism.