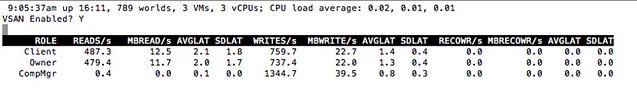

Today I was fiddling with ESXTOP to see if anything was new for vSphere 6.0. Considering the massive number of metrics it already holds, it is difficult to find things which stand out or are new. One thing did stick out though: a new display for Virtual SAN. I haven’t found much detail around this new section in ESXTOP to be honest, but then again I guess most of it speaks for itself. If you are in ESXTOP and press “x” you will go to the VSAN screen. When you then press “f” you have the option to add “fields”. I enabled all of them, and the result is shown in the screenshot.

It isn’t a huge amount of detail yet, but being able to see the number of reads, writes and average latency per host is useful for sure. What also has my interest is “RECOWR/s” and “MBRECOWR/s”. These refer to “recovery writes”, which is the resync of components that were somehow impacted by a failure. If for whatever reason RVC or the VSAN Observer is unavailable, it may be worth peeking at ESXTOP to see what is going on.
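If you jot down a few “MBRECOWR/s” readings while a resync is running, you can get a rough feel for how much data has been rewritten. The snippet below is only a back-of-the-envelope sketch: it assumes “MBRECOWR/s” means megabytes of recovery writes per second (as the name suggests), that each reading holds for one full refresh interval, and the function name and sample values are made up for illustration.

```python
def estimate_resync_mb(samples_mb_per_s, interval_s):
    """Approximate total MB of recovery writes from fixed-interval rate samples.

    samples_mb_per_s: MBRECOWR/s readings noted from the VSAN screen (hypothetical input).
    interval_s: seconds between readings, i.e. the ESXTOP refresh interval.
    """
    if interval_s <= 0:
        raise ValueError("interval_s must be positive")
    # Treat each reading as constant for one interval: rate (MB/s) * time (s) = MB.
    return sum(rate * interval_s for rate in samples_mb_per_s)


if __name__ == "__main__":
    # Made-up readings, taken 5 seconds apart.
    samples = [12.5, 40.0, 38.5, 0.0]
    print(f"Approx. recovery writes: {estimate_resync_mb(samples, 5.0):.1f} MB")
```

This is no replacement for RVC or the VSAN Observer, it just turns a handful of numbers from the screen into an estimate.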
