Almost on a weekly basis, I get a question about unexpected results during the testing of certain failure scenarios. I usually ask first if there’s a diagram that shows the current configuration. The answer is usually no. Then I would ask if they have a failure testing matrix that describes the failures they are introducing, the expected result and the actual result. As you can guess, the answer is usually “euuh a what”? This is where the problem usually begins. The problem usually gets worse when customers try to mimic a certain failure scenario.

What would I do if I had to run through failure scenarios? When I was a consultant we always started with the following:

- Document the environment, including all settings and the “why”

- Create architectural diagrams

- Discuss which types of scenarios would need to be tested

- Create a failure testing matrix that includes the following:

- Type of failure

- How to create the scenario

- Preferably include diagrams per scenario displaying where the failure is introduced

- Expected outcome

- Observed outcome

What I would normally also do is describe in the expected outcome section the theory around what should happen. Maybe I should just give an example of a failure and how I would describe it more or less.

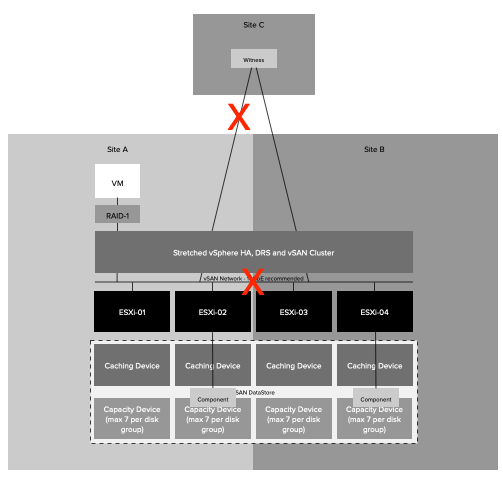

Type Failure: Site Partition

How to: Disable links between Site-A / Site-C and Site-A / Site-B

Expected outcome: The secondary location will bind itself with the witness and will gain ownership over all components. In the preferred location, the quorum is lost, as such all VMs will appear as inaccessible. vSAN will terminate all VMs in the preferred location. This is from an HA perspective however a partition and not an isolation as all hosts in Site-A can still communicate with each other. In the secondary location vSphere HA will notice hosts are missing. It will validate that the VMs that were running are running, or not running. All VMs which are not running, and have accessible components, will be restarted in the secondary location.

Observed outcome: The observed outcome was similar to the expected outcome. It took 1 minute and 30 seconds before all 20 test VMs were restarted.

In the above example, I took a very basic approach and didn’t even go into the level of depth you probably should go. I would, for instance, include the network infrastructure as well and specify exactly where the failure occurs, as this will definitely help during troubleshooting when you need to explain why you are observing a particular unexpected behavior. In many cases what happens is that for instance a site partition is simulated by disabling NICs on a host, or by closing certain firewall ports, or by disabling a VLAN. But can you really compare that to a situation where the fiber between two locations is damaged by excavations? No, you can not compare those two scenarios. Unfortunately this happens very frequently, people (incorrectly) mimic certain failures and end up in a situation where the outcome is different than expected. Usually as a result of the fact that the failure being introduced is also different than the failure that was described. If that is the case, should you still expect the same outcome? You probably should not.

Yes I know, no one likes to write documentation and it is much more fun to test things and see what happens. But without recording the above, a successful implementation is almost impossible to guarantee. What I can guarantee though is that when something fails in production, you most likely will not see the expected behavior when you have not tested the various failure scenarios. So please take the time to document and test, it is probably the most important step of the whole process.

Hi Duncan,

Thanks for writing a good post. To understand your article I have gone through the available documentation on vSAN.

Note:- In a stretched cluster, this rule defines the number of additional host failures that the object can tolerate after the number of site failures defined by PFTT is reached. If PFTT = 1 and SFTT = 2, and one site is unavailable, then the cluster can tolerate two additional host failures.

Default value is 1. Maximum value is 3.

https://docs.vmware.com/en/VMware-vSphere/6.5/com.vmware.vsphere.virtualsan.doc/GUID-08911FD3-2462-4C1C-AE81-0D4DBC8F7990.html.

Thanks

Suresh Siwach

It is not about the technology, the example is nothing more than just an idea of how I would approach this. So no need to go through anything else.

Yes, You are right. I tried understand technical gravity and missed the focus of this bolg.

i will try to take care. Thanks for highlighting my miss.

Thanks

Suresh Siwach

Hi Duncan, and thank you for this post (and so many others !)

It’s the first time I comment, but definitely not the first time I read 🙂

The funny thing is that I am precisely in the middle of writing a documentation for our test scenarios ! Good timing !

What you describe as a methodology is quite in sync with how we started to think about it, so it’s good to see we are going the right way.

I have a technical question, and I not sure about the expected outcome :

– How will HA behave if my primary (OR secondary) “data” site gets isolated (or destroyed by an alien plasma ray of the death), then, later, the Witness becomes unavailable for some reason (host crash, network failure on third site, human mistake… or alien doggedness) ?

Will it power off everything or keep VMs powered on on the remaining “data” site ? (assuming the “das.isolationaddress” responds on the remaining site).

Thanks again for your work !

Carlos

OK, I have my answer… And I don’t like it 😉

https://storagehub.vmware.com/t/vmware-vsan/vsan-stretched-cluster-guide/site-failure-or-network-partitions-2/

yeah that is the whole reason for the witness of course. Just imagine we wouldn’t do it and all three sites are partitioned, it would then allow all three sites to advance, which then when the connection between locations return would lead to issues. Serious issues.

Of course, I agree with that, in the case of everything acting automatically.

But what if :

– One Data site gets down and the Witness is UP and communicates with the second site ==> the VMs, depending on their PFTT settings, are restarted on the site that is still alive. Expected behavior.

– It appears that the down site is totally dead, and it will take days or weeks to get it back (physically destroyed for instance).

Why couldn’t we “manually” tell vSAN to act as a “not stretched” cluster, so we don’t loose everything in case of a network failure between the live site and the witness, and before the second site has been revived ?

What would you recommend in such a situation ?