Since I started playing with Virtual SAN there was something that I more or less avoided / neglected, and that is Network IO Control. However, Virtual SAN and Network IO Control should go hand-in-hand (and as such so should the Distributed Switch). Note that when using VSAN (beta) the Distributed Switch and Network IO Control come with it. I guess I skipped it as there were more exciting things to talk about, but as more and more people are asking about it I figured it is time to discuss Virtual SAN and Network IO Control. Before we get started, let's list the types of networks we will have within the VSAN cluster:

- Management Network

- vMotion Network

- Virtual SAN Network

- Virtual Machine Network

Considering it is recommended to use 10GbE with Virtual SAN, that is what I will assume in this blog post. In most of these cases, at least I would hope, there will be a form of redundancy and as such we will have 2 x 10GbE at our disposal. So how would I recommend configuring the network?

Let's start with the various portgroups and VMkernel interfaces:

- 1 x Management Network VMkernel interface

- 1 x vMotion VMkernel interface (All interfaces need to be in the same subnet)

- 1 x Virtual SAN VMkernel interface

- 1 x Virtual Machine Portgroup

Some of you might be surprised that I have listed the vMotion VMkernel interface and the Virtual SAN VMkernel interface only once… After various discussions and thinking about this for a while, I figured I would keep things as simple as possible, especially considering the average IO profile of server environments.

By default we can make sure the various traffic types are separated on different physical ports, but we can also set limits and shares when desired. I do not recommend using limits though: why limit a traffic type when you can use shares and “artificially limit” your traffic types based on resource usage and demand?! Also note that shares and limits are enforced per uplink.

So we will be using shares, as shares only come into play when there is contention. What we will do is take 20GbE into account and carve it up. The easiest way, if you ask me, is to say each traffic type gets a minimum number of GbE assigned, based on some of the recommendations out there for these types of traffic:

- Management Network –> 1GbE

- vMotion VMK –> 5GbE

- Virtual Machine PG –> 2GbE

- Virtual SAN VMkernel interface –> 10GbE

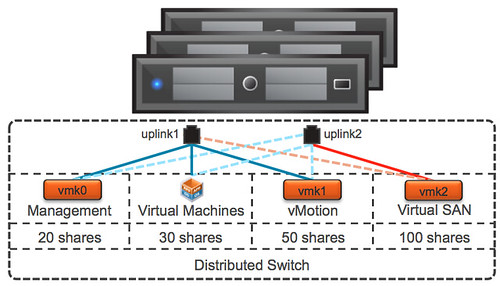

Now as you can see, “management”, “virtual machine” and “vMotion” traffic share Port 1 and “Virtual SAN” traffic uses Port 2. This way we have sufficient bandwidth for all the various types of traffic in a normal state. We also want to make sure that no traffic type can push out other types of traffic; for that we will use the Network IO Control shares mechanism.

Now let's look at it from a shares perspective. You will want to make sure that, for instance, vMotion and Virtual SAN always have sufficient bandwidth. I will work under the assumption that I only have 1 physical port available and all traffic types share the same physical port. We know this is not the case, but let's take a “worst case scenario” approach.

Let's take a worst case scenario into account where 1 of the physical 10GbE ports has failed and only 1 is used for all traffic, and assign the shares listed below (200 shares in total). By taking this approach you ensure that Virtual SAN always has 50% of the bandwidth at its disposal while leaving the remaining traffic types with sufficient bandwidth to avoid a potential self-inflicted DoS.
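To make the carve-up concrete, here is a minimal sketch that adds up the minimum bandwidth per traffic type on each port in the normal state. The dictionary and function names are my own and purely illustrative; the numbers come from the list above, and each port is assumed to be 10GbE.

```python
# Minimum bandwidth per traffic type in GbE, taken from the list above.
MINIMUM_GBE = {
    "Management Network": 1,
    "vMotion": 5,
    "Virtual Machine": 2,
    "Virtual SAN": 10,
}

# Normal-state placement as described in the post (illustrative names).
PORT_ASSIGNMENT = {
    "Port 1": ["Management Network", "vMotion", "Virtual Machine"],
    "Port 2": ["Virtual SAN"],
}

PORT_CAPACITY_GBE = 10  # assumption: both uplinks are 10GbE


def committed_per_port(assignment, minimums):
    """Sum the minimum bandwidth of every traffic type placed on each port."""
    return {
        port: sum(minimums[traffic] for traffic in traffic_types)
        for port, traffic_types in assignment.items()
    }


committed = committed_per_port(PORT_ASSIGNMENT, MINIMUM_GBE)
for port, gbe in committed.items():
    print(f"{port}: {gbe} of {PORT_CAPACITY_GBE} GbE committed")
```

With these numbers neither port is asked for more than it can deliver in the normal state, which is what "sufficient bandwidth" means here.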

| Traffic Type | Shares | Limit |
| --- | --- | --- |
| Management Network | 20 | n/a |
| vMotion VMkernel Interface | 50 | n/a |
| Virtual Machine Portgroup | 30 | n/a |
| Virtual SAN VMkernel Interface | 100 | n/a |
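As a rough sketch of what these shares translate to, the snippet below divides a single 10GbE uplink proportionally across the share values from the table (which sum to 200). It assumes the worst case described above: one surviving uplink and all four traffic types competing for it at the same time. The function name is hypothetical, and this is only the arithmetic, not how Network IO Control is implemented.

```python
# Share values from the table above.
SHARES = {
    "Management Network": 20,
    "vMotion VMkernel Interface": 50,
    "Virtual Machine Portgroup": 30,
    "Virtual SAN VMkernel Interface": 100,
}


def bandwidth_under_contention(shares, link_gbps=10.0):
    """Split one uplink proportionally to shares, assuming every type is active."""
    total = sum(shares.values())
    return {name: link_gbps * value / total for name, value in shares.items()}


worst_case = bandwidth_under_contention(SHARES)
for name, gbps in worst_case.items():
    print(f"{name}: {gbps:.1f} Gbps ({gbps / 10.0:.0%})")
```

Keep in mind that shares only matter under contention: when a traffic type is idle, the others are free to use its portion.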

You can imagine that when you select the uplinks used for the various types of traffic in a smart way, even more bandwidth can be leveraged by the various traffic types. After giving it some thought, this is what I would recommend per traffic type:

- Management Network VMkernel interface = Explicit Fail-over order = P1 active / P2 standby

- vMotion VMkernel interface = Explicit Fail-over order = P1 active / P2 standby

- Virtual Machine Portgroup = Explicit Fail-over order = P1 active / P2 standby

- Virtual SAN VMkernel interface = Explicit Fail-over order = P2 active / P1 standby

Why use Explicit Fail-over order for these types? The best explanation here is predictability. By separating traffic types we allow for optimal storage performance while also providing vMotion and virtual machine traffic sufficient bandwidth.

Also, vMotion traffic is bursty and can / will consume all available bandwidth, so when combined with Virtual SAN on the same uplink you could see how these two could potentially hurt each other, depending of course on the IO profile of your virtual machines and the type of operations being done. But you can see how a vMotion of a virtual machine provisioned with a lot of memory can impact the available bandwidth for other traffic types. Don’t ignore this, use Network IO Control!

Let's try to visualize things, as that makes it easier to digest. Just to be clear, dotted lines are “standby” and the others are “active”.

I hope this provides some guidance around how to configure Virtual SAN and Network IO Control in a VSAN environment. Of course there are various ways of doing it; this is my recommendation and my attempt to keep things simple, based on experience with the products.
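The explicit fail-over order recommended above can be modelled in a few lines. This is only a sketch of the placement logic (first uplink in the order that has not failed wins); the names `TEAMING` and `placement` are hypothetical and nothing here talks to vSphere.

```python
# Explicit fail-over order per traffic type: (active, standby), as listed above.
TEAMING = {
    "Management Network": ("P1", "P2"),
    "vMotion": ("P1", "P2"),
    "Virtual Machine": ("P1", "P2"),
    "Virtual SAN": ("P2", "P1"),
}


def placement(teaming, failed=frozenset()):
    """Return which traffic types end up on which uplink.

    Each traffic type uses the first uplink in its fail-over order that is
    not in `failed`. If every uplink has failed, it is grouped under None.
    """
    result = {}
    for traffic, order in teaming.items():
        uplink = next((u for u in order if u not in failed), None)
        result.setdefault(uplink, []).append(traffic)
    return result


print("Normal state:", placement(TEAMING))
print("P2 failed:   ", placement(TEAMING, failed={"P2"}))
```

In the normal state Virtual SAN has P2 to itself; only after an uplink failure do all four traffic types land on one port, which is the situation the shares are sized for.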

If you use a distributed switch, why not make use of all the uplinks and set the Load Balancing of each port group to “Route Based on Physical NIC Load”? Won’t this do a better job of balancing the load than a human attempting to balance the load by picking NICs?

LBT works based on a 30 second window. Just imagine 4 vMotions are being kicked off automatically and vMotion happens to be on the same NIC as Virtual SAN. For at least 30 seconds your vMotion traffic would interfere with Virtual SAN traffic. If you can simply avoid this, why not?

TY

Duncan addresses that to a degree as predictability.

Myself, seeing the rise of memory entitlement to VMs as well as addressing the monster VM factor and increasing consolidation ratios, I prefer the availability of Multi NIC vMotion, especially for host evacuations. In 10GbE environments, it totally rocks.

Jason, I was hoping vSAN could also leverage multi-nic vMotion behavior (within the same pg or a separate pg with another vmk). I’ve seen better performance with multi-nic vMotion over 10GbE as well!

Yes it can use multiple VMkernels. But they will need to be in a different subnet for VSAN to be able to work with it.

So I guess we should treat all ip based storage vmkernel interfaces this way then? This is not an issue only in vSAN, but in any iSCSI / NFS scenario.

Lars

It depends, I would say, on what you are going for, Lars. Because we are talking 10GbE here, I personally prefer to keep things simple and just go with a single VMkernel for VSAN; for 95% of the environments out there I would expect this to be sufficient. But again, it is just a recommendation based on my personal experience, your world could and probably will look completely different 🙂

Would LACP for the 2 x 10GbE uplinks remove the need for active/standby?

It’s a bit of initial configuration overhead, but provides simplicity for the vsphere management and pg configuration/standardization.

That is a viable alternative… However, I typically do not recommend LACP/Etherchannels as I’ve seen too many misconfigured environments in the past. Also, in order to provide resiliency you would need switches which can do cross stack etherchannels.

Nice post Duncan.

I wrote the post linked below a while back, and arrived at the following NIOC share values for an environment using IP Storage.

IP Storage traffic : 100

ESXi Management: 25

vMotion: 25

Fault Tolerance : 25

Virtual Machine traffic : 50

Assuming the IP Storage traffic was the Virtual SAN VMKernel interface and had the same share value of 100, I would be interested in your thoughts on my post.

I also went with LBT rather than explicit fail-over order as I feel this would ensure in the event of >75% utilization that traffic could be moved around, and should result in more consistent performance for all traffic types.

Having read your article, I agree with your comment that one vMotion & Virtual SAN VMK would be sufficient for most environments and complies with the keep it simple methodology, so I may update my post, or do a follow up post, as this seems logical for most environments.

http://www.joshodgers.com/2013/01/19/example-vmware-vnetworking-design-w-2-x-10gb-nics-ip-based-or-fcfcoe-storage/

Hi Duncan, great blog post as always. I had no chance to play with vSAN but your vSAN related blog posts are very helpful and keep me up to date.

It is probably a little bit off topic because you recommend avoiding NIOC limits, but I would like to clarify it. You mentioned …

“Meaning that if you team two 10GbE interface ports and set a limit than Network IO Control will look at the bandwidth used on both interface ports and limit the combined. So with a 2Gbps limit, it could be that one physical port uses 200Mbps and the other 1800Mbps.”

… I have to disagree with this statement because since ESX 5.1 the network shaper (responsible for traffic limits) works per NIC and not per host. So in your example each interface port can handle 2Gbps. I have just performed tests in my lab to be absolutely sure.

The vSphere Client (C#) is also confusing because the NIOC Resource Allocation Pool Settings show the label “Host limit”, which was correct up to 5.0 but is wrong from 5.1 onwards.

It is probably a detail, but can you verify and confirm it?

Thanks,

David.

You are completely right David, completely forgot about the fact we changed that indeed…. Thanks for pointing that out 🙂