I have been playing a lot with vSphere Virtual SAN (VSAN) over the last couple of months, so I figured I would write down some of my thoughts on creating a hardware platform and constructing the virtual environment for VSAN. There are some recommended practices and there are some constraints; I aim to use this blog post to gather all of these Virtual SAN design considerations. To get a better understanding of the product, please read the VSAN introduction, how to install VSAN in your virtual lab, and “How do you know where an object is located”. A long list of VSAN blogs can be found here: vmwa.re/vsan

Everything below is based on the vSphere 5.5 Virtual SAN (public) Beta and on my interpretation and thoughts following conversations with colleagues and engineering, and reading various documents.

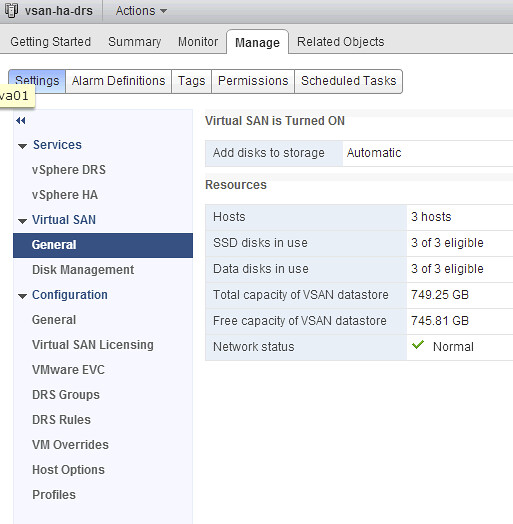

- vSphere Virtual SAN (VSAN) clusters are limited to a maximum of 32 hosts and require a minimum of 3 hosts. VSAN is also currently limited to 100 VMs per host, resulting in a maximum of 3200 VMs in a 32-host cluster. Please note that HA currently has a limit of 2048 protected VMs in a single datastore.
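
The limits above can be turned into a quick sanity check. This is a minimal Python sketch, assuming the 5.5 Beta numbers quoted in this post (3 to 32 hosts, 100 VMs per host, 2048 HA-protected VMs per datastore) and that a VSAN cluster presents a single datastore; the function and constant names are hypothetical.

```python
# Limits as quoted for the vSphere 5.5 VSAN Beta (assumption: verify against
# the documentation for the release you actually run).
MIN_HOSTS = 3
MAX_HOSTS = 32
MAX_VMS_PER_HOST = 100
HA_MAX_PROTECTED_VMS_PER_DATASTORE = 2048


def vsan_vm_limits(hosts: int) -> dict:
    """Return VM limits for a VSAN cluster of `hosts` hosts (hypothetical helper)."""
    if not MIN_HOSTS <= hosts <= MAX_HOSTS:
        raise ValueError(f"VSAN clusters need {MIN_HOSTS}-{MAX_HOSTS} hosts, got {hosts}")
    vsan_max = hosts * MAX_VMS_PER_HOST
    # A VSAN cluster exposes one datastore, so the HA per-datastore limit
    # caps how many of those VMs HA can actually protect.
    ha_protected = min(vsan_max, HA_MAX_PROTECTED_VMS_PER_DATASTORE)
    return {"vsan_max_vms": vsan_max, "ha_protected_max_vms": ha_protected}


if __name__ == "__main__":
    for n in (3, 16, 32):
        print(n, vsan_vm_limits(n))
```

Under these assumptions a full 32-host cluster could hold 3200 VMs, but only 2048 of them would be HA-protected.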

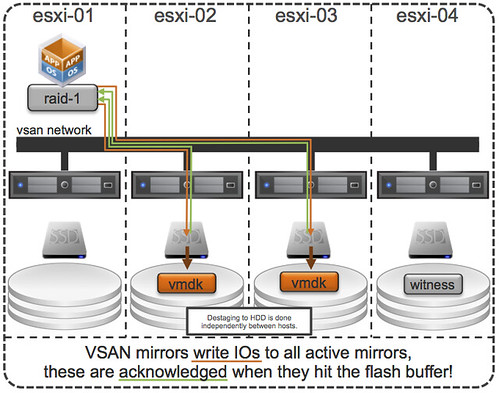

- It is recommended to dedicate a 10GbE NIC port to your VSAN VMkernel traffic; although 1GbE is fully supported, it could be a limiting factor in I/O-intensive environments. Both VSS and VDS are supported.

- It is recommended to have a VSAN VMkernel interface on every physical NIC! Configure them in an “active/standby” configuration so that, when you have 2 physical NIC ports and 2 VSAN VMkernel interfaces, each interface has its own active port. Do note that multiple VSAN VMkernel NICs on a single host in the same subnet is not a supported configuration; in different subnets it is supported.

- IP Hash Load Balancing is supported by VSAN, but due to the limited number of source/destination IP addresses, the load-balancing benefits could be limited. In other words, an EtherChannel formed out of 4x 1GbE NICs will most likely not result in 4Gbps of bandwidth.

- Although Jumbo Frames are fully supported with VSAN, they do add a level of operational complexity. When Jumbo Frames are enabled, ensure they are enabled end-to-end!

- VSAN requires at minimum 1 SSD and 1 magnetic disk per disk group on each host that contributes storage. Each disk group can have a maximum of 1 SSD and 7 magnetic disks. When you have more than 7 HDDs, or two or more SSDs, you will need to create additional disk groups.

- Each host that provides capacity to the VSAN datastore has at least one local disk group. There is a maximum of 5 disk groups per host!

- It can be beneficial to create multiple smaller disk groups instead of fewer larger ones. More disk groups means smaller failure domains and more cache drives/queues.
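
The disk group rules above (one SSD per disk group, 1 to 7 HDDs per group, at most 5 groups per host) can be expressed as a small planner. This is a sketch, assuming every SSD gets its own disk group and HDDs are spread as evenly as possible across groups, which is one way to favour smaller disk groups; `plan_disk_groups` is a hypothetical name, not a VSAN API.

```python
from __future__ import annotations

import math

MAX_HDDS_PER_GROUP = 7
MAX_GROUPS_PER_HOST = 5


def plan_disk_groups(ssds: int, hdds: int) -> list[int]:
    """Return the number of HDDs placed in each disk group (one SSD per group)."""
    if ssds < 1 or hdds < 1:
        raise ValueError("a capacity-contributing host needs at least 1 SSD and 1 HDD")
    groups = ssds  # every disk group contains exactly one SSD
    if groups > MAX_GROUPS_PER_HOST:
        raise ValueError(f"{groups} SSDs would need {groups} disk groups; max is {MAX_GROUPS_PER_HOST}")
    if hdds < groups:
        raise ValueError("each disk group needs at least one HDD")
    if hdds > groups * MAX_HDDS_PER_GROUP:
        needed = math.ceil(hdds / MAX_HDDS_PER_GROUP)
        raise ValueError(f"{hdds} HDDs need at least {needed} SSDs/disk groups")
    # Spread the HDDs as evenly as possible over the disk groups.
    base, extra = divmod(hdds, groups)
    return [base + 1 if i < extra else base for i in range(groups)]
```

For example, `plan_disk_groups(3, 10)` yields three groups of 4, 3 and 3 HDDs, while a host with 1 SSD and 8 HDDs is rejected because it needs a second SSD and disk group.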

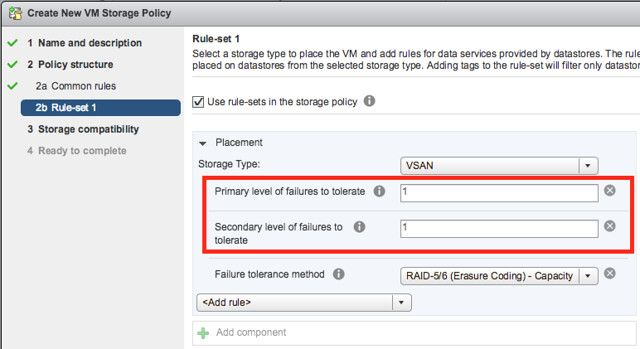

- When sizing your environment, ensure you take data replicas into account. If your environment needs N+1 or N+2 (etc.) resiliency, factor this in accordingly.

- SSD capacity does not count towards total VSAN datastore capacity. When sizing your environment, do not include SSD capacity in your total capacity calculation.

- It is a recommended practice to have a minimum 1:10 ratio of SSD capacity to HDD capacity in each disk group. In other words, when you have 1TB of HDD capacity, it is recommended to have at least 100GB of SSD capacity. Note that VMware’s recommendation has changed since the Beta; the new recommendation is:

  - 10 percent of the anticipated consumed storage capacity, before the number of failures to tolerate is considered

- By default, 70% of the available SSD capacity will be used as read cache and 30% as write buffer. As in most designs, when it comes to cache/buffer, more is better.

- Selecting an SSD with the right performance profile can easily make a 5x-10x difference in VSAN performance, so choose carefully and wisely. Both SSD and PCIe flash solutions are supported, but there are requirements! Make sure to check the HCL before purchasing new hardware. My tip: the Intel S3700, which offers a great price/performance balance.
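
The sizing rules above can be combined into a rough calculation. This sketch assumes that “number of failures to tolerate” n keeps n+1 full copies of each object, uses the post-Beta guidance of flash equal to 10% of anticipated consumed capacity before replicas, and applies the default 70/30 read cache/write buffer split. It deliberately ignores witness and metadata overhead and free-space slack, so treat the output as a lower bound; `size_vsan` and `VsanSizing` are hypothetical names.

```python
from dataclasses import dataclass

READ_CACHE_SHARE = 0.70    # default split quoted in this post
WRITE_BUFFER_SHARE = 0.30
FLASH_RATIO = 0.10         # 10% of anticipated consumed capacity, before FTT


@dataclass
class VsanSizing:
    raw_hdd_gb: float       # magnetic capacity needed; SSDs do not count
    flash_gb: float         # total SSD capacity across the cluster
    read_cache_gb: float
    write_buffer_gb: float


def size_vsan(consumed_gb: float, failures_to_tolerate: int = 1) -> VsanSizing:
    """Rough cluster-wide sizing; ignores witness/metadata overhead and slack space."""
    if consumed_gb <= 0 or failures_to_tolerate < 0:
        raise ValueError("consumed_gb must be positive and FTT non-negative")
    replicas = failures_to_tolerate + 1   # FTT=n keeps n+1 copies of each object
    raw_hdd_gb = consumed_gb * replicas
    flash_gb = consumed_gb * FLASH_RATIO  # based on consumed capacity, before replicas
    return VsanSizing(
        raw_hdd_gb=raw_hdd_gb,
        flash_gb=flash_gb,
        read_cache_gb=flash_gb * READ_CACHE_SHARE,
        write_buffer_gb=flash_gb * WRITE_BUFFER_SHARE,
    )
```

With 10TB (10000GB) of anticipated consumed capacity and FTT=1, this works out to roughly 20000GB of magnetic capacity and 1000GB of flash, of which about 700GB acts as read cache and 300GB as write buffer. The Beta-era 1:10 rule would instead base the flash figure on raw HDD capacity per disk group.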

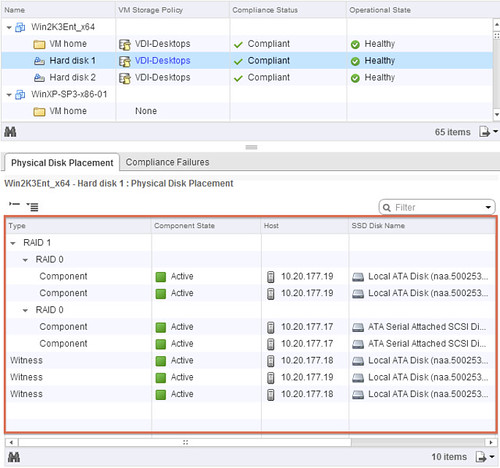

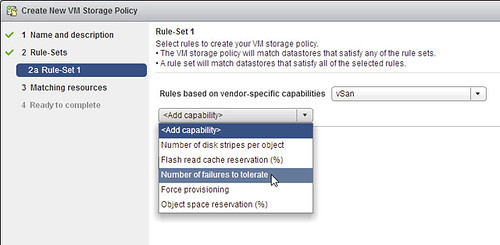

- VSAN relies on VM Storage Policies for policy-based management. There is a default policy under the hood, but you cannot see it within the UI. As such, it is a recommended practice to create a new standard policy for your environment after VSAN has been configured. Start with all settings at their defaults, and ensure “Number of failures to tolerate” is configured to 1. This ensures that when a single host fails, virtual machines can be restarted and recovered from this failure with minimal impact on the environment. Attach this policy to your virtual machines when migrating them to VSAN or during virtual machine provisioning.

- Configure vSphere HA isolation response to “power-off” to ensure that virtual machines which reside on an isolated host can be safely restarted.

- Ensure the vSphere HA admission control policy (“host failures to tolerate” or “percentage based”) aligns with your VSAN availability strategy. In other words, ensure that compute and storage are both configured using the same “N+x” availability approach.

- When defining your VM Storage Policy, avoid unnecessary use of “flash read cache reservation”. VSAN has internal read cache optimization algorithms; trust them like you trust the “host scheduler” or DRS!

- VSAN does not support virtual machine disks larger than 2TB minus 512 bytes; VMs that require larger VMDKs are not suitable candidates for VSAN at this point in time.

- VSAN does not support FT, DPM, Storage DRS or Storage I/O Control. It should be noted, though, that VSAN internally takes care of scheduling and balancing when required. Storage DRS and SIOC are designed for SAN/NAS environments.

- Although non-uniform configurations are supported by VSAN, it is a recommended practice to keep the host/disk configuration within a VSAN cluster similar. Non-uniform cluster configurations could lead to variations in performance and could make it more complex to stay compliant with defined policies after a failure.
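
A few of the policy and HA points above can be checked mechanically. This sketch assumes “2TB” means 2 × 1024⁴ bytes, assumes identical hosts for the percentage-based admission control figure, and relies on the general VSAN rule (not stated in this post) that FTT=n needs 2n+1 hosts, which matches the 3-host minimum for FTT=1. All function names are hypothetical.

```python
VMDK_MAX_BYTES = 2 * 1024**4 - 512  # 2TB minus 512 bytes, as quoted above


def vmdk_fits_vsan(size_bytes: int) -> bool:
    """True if a VMDK of this size can be placed on VSAN."""
    return 0 < size_bytes <= VMDK_MAX_BYTES


def hosts_required(failures_to_tolerate: int) -> int:
    """FTT=n needs n+1 replicas plus witness components: 2n+1 hosts."""
    return 2 * failures_to_tolerate + 1


def ha_matches_vsan(ftt: int, ha_host_failures: int) -> bool:
    """Same N+x approach: HA 'host failures to tolerate' equals the policy FTT."""
    return ftt == ha_host_failures


def ha_percentage_for(ftt: int, hosts: int) -> int:
    """Percentage-based admission control equal to `ftt` hosts' worth of capacity.

    Assumes identical hosts; rounds up so at least `ftt` hosts are reserved.
    """
    if hosts < hosts_required(ftt):
        raise ValueError(f"FTT={ftt} needs at least {hosts_required(ftt)} hosts")
    return (100 * ftt + hosts - 1) // hosts  # integer ceiling division
```

For a 4-host cluster with FTT=1, the matching percentage-based setting under these assumptions would be 25% for both CPU and memory.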

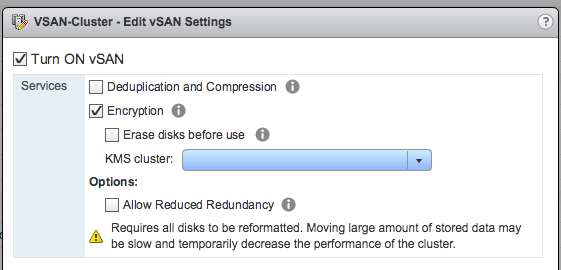

- When adding new SSDs or HDDs, ensure they are not pre-formatted. Note that when VSAN is configured in “automatic mode”, disks are added to existing disk groups or new disk groups are created automatically.

- Note that vSphere HA behaves slightly differently in a VSAN-enabled cluster; here are some of the changes/caveats:

  - Be aware that when HA is turned on in the cluster, FDM agent (HA) traffic goes over the VSAN network and not the Management Network. However, when a potential isolation is detected, HA will ping the default gateway (or the specified isolation address) using the Management Network.

  - When enabling VSAN, ensure vSphere HA is disabled. You cannot enable VSAN when HA is already configured. Either configure VSAN during the creation of the cluster or temporarily disable vSphere HA while configuring VSAN.

  - When only VSAN datastores are available within a cluster, Datastore Heartbeating is disabled. HA will never use a VSAN datastore for heartbeating.

  - When changes are made to the VSAN network, vSphere HA must be reconfigured.

- VSAN requires a RAID controller/HBA that supports passthrough mode or pseudo-passthrough mode. Validate with your server vendor whether the included disk controller supports passthrough. An example of a passthrough-mode controller that is sold separately is the LSI SAS 9211-8i.

- Ensure log files are stored externally to your ESXi hosts and VSAN by leveraging vSphere’s syslog capabilities.

- ESXi can be installed on USB, SD and magnetic disk. Hosts with 512GB or more of memory are only supported when ESXi is installed on magnetic disk.
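
The host-level items above lend themselves to a simple pre-flight checklist. This is a sketch, assuming you maintain your own inventory of hosts; `HostSpec` and `host_issues` are hypothetical names and do not come from any vSphere API, and the checks encode only the rules stated in this post.

```python
from __future__ import annotations

from dataclasses import dataclass

SUPPORTED_BOOT_MEDIA = {"usb", "sd", "magnetic"}
LARGE_MEMORY_GB = 512  # hosts at or above this must boot ESXi from magnetic disk


@dataclass
class HostSpec:  # hypothetical inventory record, filled in from your own data
    name: str
    memory_gb: int
    boot_media: str               # "usb", "sd" or "magnetic"
    controller_passthrough: bool  # passthrough or pseudo-passthrough mode
    remote_syslog: bool           # logs sent to an external syslog target


def host_issues(host: HostSpec) -> list[str]:
    """Return a list of readiness problems; an empty list means no issues found."""
    issues = []
    if host.boot_media not in SUPPORTED_BOOT_MEDIA:
        issues.append(f"unsupported boot media {host.boot_media!r}")
    elif host.memory_gb >= LARGE_MEMORY_GB and host.boot_media != "magnetic":
        issues.append(f"{host.memory_gb}GB hosts must boot ESXi from magnetic disk")
    if not host.controller_passthrough:
        issues.append("disk controller lacks (pseudo) passthrough mode")
    if not host.remote_syslog:
        issues.append("logs are not sent to an external syslog target")
    return issues
```

A host with 512GB of memory booting from SD, for instance, would be flagged for its boot media even if everything else checks out.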

That is it for now. When more comes to mind I will add it to the list!