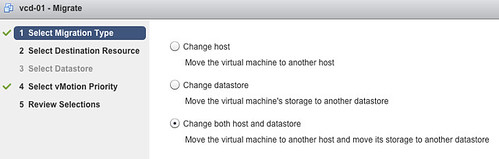

There’s a nice new enhancement to vMotion in vSphere 5.1 (and no, it doesn’t have a specific name :-)). With vSphere 5.1 you can migrate virtual machines live without needing “shared storage”. In other words, you can vMotion virtual machines between ESXi hosts that have only local storage. It is very simple:

- Open the vSphere Web Client

- Click “VMs and Templates”

- Right-click the VM you want to migrate and choose “Migrate”

- Select “Change both host and datastore”
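To make the choice in that last step a bit more concrete, here is a minimal sketch in plain Python of which migration option applies in which situation. It does not talk to vCenter at all; the `Placement` class, the `migration_option` function and the host and datastore names are made up purely for illustration. The three option labels are the ones the migration wizard offers.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Placement:
    """Where a VM runs (host) and where its files live (datastore)."""
    host: str
    datastore: str


def migration_option(current: Placement, target: Placement) -> str:
    """Return the wizard option that matches the requested move.

    Hypothetical helper for illustration only, not a vSphere API.
    """
    host_changes = current.host != target.host
    datastore_changes = current.datastore != target.datastore

    if host_changes and datastore_changes:
        # No shared storage between the hosts: move compute and disks together.
        return "Change both host and datastore"
    if host_changes:
        # Classic vMotion: both hosts can see the same datastore.
        return "Change host"
    if datastore_changes:
        # Storage vMotion: same host, different datastore.
        return "Change datastore"
    return "No migration needed"


# Example names are assumptions: two hosts that each have only a local datastore.
current = Placement(host="esxi-01", datastore="esxi-01-local")
target = Placement(host="esxi-02", datastore="esxi-02-local")
print(migration_option(current, target))
```

With two hosts that only have local datastores, both the host and the datastore differ, so the combined option is the only one that fits.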

I am sure Frank Denneman is going to dive into this soon, so I won’t elaborate on how the process itself works. There’s already a blog post out by Sreekant Setty which has some more details and points to a nice white paper about vMotion / SvMotion performance.