Over the past couple of weeks I have been watching the VMware VSAN Community Forum, and also Twitter, with close interest. One thing that struck me was the type of hardware people use to test VSAN on. In many cases this is the type of hardware one would use at home, for a desktop. Now I can see why that happens. I mean, something new, shiny and cool is released and everyone wants to play around with it, but not everyone has the budget to buy the right components… As long as that is for “play” only that is fine, but lately I have also noticed that people are looking at building an ultra cheap storage solution for production. But guess what?

Virtual SAN reliability, performance and overall experience are determined by the sum of the parts

Say what? Not shocking, right? Still, it is something you will need to keep in mind when designing a hardware / software platform. Simple things can impact your success. First and foremost check the HCL (Hardware Compatibility List), and think about components like:

- Disk controller

- SSD / PCIe Flash

- Network cards

- Magnetic Disks

Some thoughts around this, starting with the disk controller. You could leverage a 3Gb/s on-board controller, but when you attach, let's say, 5 disks and a high performance SSD to it, do you think it can still cope, or would a 6Gb/s PCIe disk controller be a better option? Or would you even leverage the 12Gb/s that some controllers offer for SAS drives? Not only can this make a difference in terms of the number of IOps you can drive, it can also make a difference in terms of latency! On top of that, there will be a difference in reliability…
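
To put some rough numbers on those link speeds, here is a minimal sketch. It assumes the usual 8b/10b encoding that the 3, 6 and 12 Gb/s SATA / SAS generations use (10 bits on the wire per byte of data) and ignores all other protocol overhead. The 500 MB/s SSD figure is a made-up number for illustration, not a measurement of any particular drive.

```python
# Rough usable throughput of a single SATA/SAS link.
# Assumption: 8b/10b encoding, so 10 bits on the wire carry 1 byte of data.

def link_throughput_mb_s(line_rate_gbit: float) -> float:
    """Usable payload rate of one link in MB/s, before protocol overhead."""
    return line_rate_gbit * 1000 / 10

# Hypothetical SSD throughput, for illustration only.
ASSUMED_SSD_MB_S = 500.0

for rate in (3, 6, 12):
    usable = link_throughput_mb_s(rate)
    if usable < ASSUMED_SSD_MB_S:
        verdict = "link is the bottleneck"
    else:
        verdict = "link has headroom"
    print(f"{rate:>2} Gb/s link: ~{usable:.0f} MB/s usable -> {verdict}")
```

This only looks at the link itself. Controller queue depth, driver and firmware quality also play a big role, which is one more reason to check the HCL.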

I guess the next component is the SSD / Flash device; this one is hopefully obvious to each of you. But don’t let the performance tests you see on Tom’s or Anandtech fool you, there is more to an SSD than just sheer IOps. Take durability, for instance: how many writes per day, for X years of life, can your SSD handle? Some of the enterprise grade drives can handle 10 full drive writes or more per day for 5 years. You cannot compare that with some of the consumer grade drives out there, which obviously will be cheaper but will also wear out a lot faster! You don’t want to find yourself replacing SSDs every year at random times.

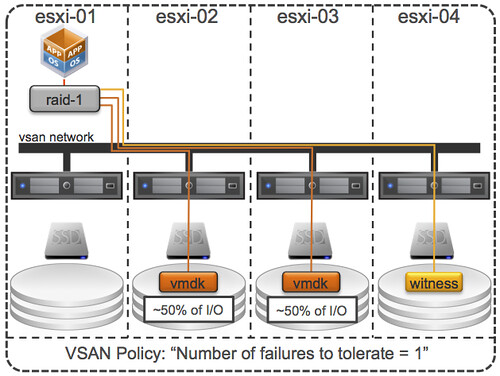
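
The “full writes per day” figure mentioned above is easy to turn into a lifetime number. Below is a rough sketch of that arithmetic. The 400 GB capacity, the consumer rating of 0.3 drive writes per day for 3 years and the 2000 GB/day workload are all hypothetical values I picked for illustration; check the vendor data sheet for the real ratings of your device.

```python
# Back-of-the-envelope SSD endurance math based on drive writes per day (DWPD).

def lifetime_writes_tb(capacity_gb: float, dwpd: float, rated_years: float) -> float:
    """Total data the drive is rated to absorb over its life, in TB (1 TB = 1000 GB)."""
    return capacity_gb * dwpd * 365 * rated_years / 1000

def years_until_worn(capacity_gb: float, dwpd: float, rated_years: float,
                     workload_gb_per_day: float) -> float:
    """Years until the rated endurance is used up at a constant daily write volume."""
    rated_total_gb = capacity_gb * dwpd * 365 * rated_years
    return rated_total_gb / (workload_gb_per_day * 365)

# Hypothetical drives: (name, capacity in GB, DWPD rating, rated years).
drives = [
    ("enterprise", 400, 10.0, 5),
    ("consumer", 400, 0.3, 3),
]

WORKLOAD_GB_PER_DAY = 2000  # assumed write volume hitting the flash device

for name, capacity, dwpd, years in drives:
    total = lifetime_writes_tb(capacity, dwpd, years)
    life = years_until_worn(capacity, dwpd, years, WORKLOAD_GB_PER_DAY)
    print(f"{name}: rated for ~{total:,.0f} TB written, "
          f"~{life:.2f} years at {WORKLOAD_GB_PER_DAY} GB/day")
```

With these assumed numbers the gap between the two classes of drive is more than an order of magnitude, which is the point of the paragraph above.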

Of course network cards are a consideration when it comes to VSAN. Why? Well, because I/O will more than likely hit the network. Personally, I would rule out 1GbE… Otherwise you would need to go for multiple cards and ports per server, and even then I think 10GbE is the better option here. Most 10GbE cards are of decent quality, but make sure to check the HCL and any recommendations around configuration.

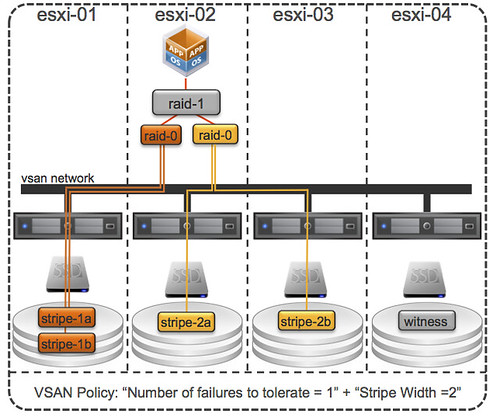
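
To illustrate why 1GbE runs out of steam quickly, here is a small sketch that computes the theoretical ceiling on I/Os per second a single link can carry at line rate. It deliberately ignores Ethernet, TCP/IP and VSAN protocol overhead, so real numbers will be lower. The 4 KB and 64 KB I/O sizes are just example values.

```python
# Theoretical upper bound on I/Os per second over one network link.
# Assumption: the link is fully utilized and there is zero protocol overhead.

def max_ios_per_second(link_gbit: float, io_size_bytes: int) -> float:
    """Line rate in bytes per second divided by the I/O size."""
    bytes_per_second = link_gbit * 1e9 / 8
    return bytes_per_second / io_size_bytes

for link_gbit in (1, 10):
    for io_size in (4096, 65536):
        ceiling = max_ios_per_second(link_gbit, io_size)
        print(f"{link_gbit:>2} GbE, {io_size // 1024:>2} KB I/O: "
              f"at most ~{ceiling:,.0f} IOs/s")
```

A single decent SSD can already exceed the 1GbE ceiling for small I/O, before any other traffic shares the link.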

And last but not least, magnetic disks… Quality should always come first here. I guess this goes for all of the components. I mean, you would not buy an empty storage array and fill it up with random components either, right? Think about what your requirements are. Do you need 10k / 15k RPM, or does 7.2k suffice? SAS vs SATA vs NL-SAS? Also, keep in mind that performance comes at a cost (typically capacity). Another thing to realize: high capacity drives are great for… yes, adding capacity indeed, but when I/O needs to come from disk, the number of IOps you can drive and your latency will be determined by these disks. So if you are planning on increasing the “stripe width”, it might also be useful to factor this in when deciding which disks you are going to use.
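
As a rough sketch of how RPM and stripe width play together, the snippet below uses the classic rule of thumb for random I/O on a spinning disk: one I/O costs an average seek plus half a rotation. The seek times are assumed ballpark values, not vendor specs, and the stripe width scaling is the ideal case where I/O is spread evenly over all stripes and is served from disk rather than flash.

```python
# Rule-of-thumb random IOPS for a magnetic disk, and ideal scaling with stripe width.

def disk_iops(rpm: int, avg_seek_ms: float) -> float:
    """IOPS ~= 1 / (average seek time + average rotational latency)."""
    rotational_latency_ms = 0.5 * 60_000 / rpm  # half a rotation, in milliseconds
    return 1000 / (avg_seek_ms + rotational_latency_ms)

# Assumed average seek times in ms per drive class (illustrative only).
ASSUMED_SEEK_MS = {7200: 8.5, 10000: 4.5, 15000: 3.5}

def striped_iops(rpm: int, stripe_width: int) -> float:
    """Best-case IOPS when an object is striped evenly across stripe_width disks."""
    return disk_iops(rpm, ASSUMED_SEEK_MS[rpm]) * stripe_width

for rpm in (7200, 10000, 15000):
    single = disk_iops(rpm, ASSUMED_SEEK_MS[rpm])
    print(f"{rpm:>5} RPM: ~{single:.0f} IOps per disk, "
          f"~{striped_iops(rpm, 3):.0f} IOps at stripe width 3")
```

Treat the output as an order-of-magnitude estimate. It does show why a handful of large 7.2k drives behaves very differently from the same capacity spread over more, faster spindles.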

To put it differently: if you are serious about your environment and want to run a production workload, then make sure you use quality parts! Reliability, performance and ultimately your experience will be determined by these parts.

<edit> Forgot to mention this, but soon there will be “Virtual SAN” ready nodes… That should make your life a lot easier, I would say.

</edit>