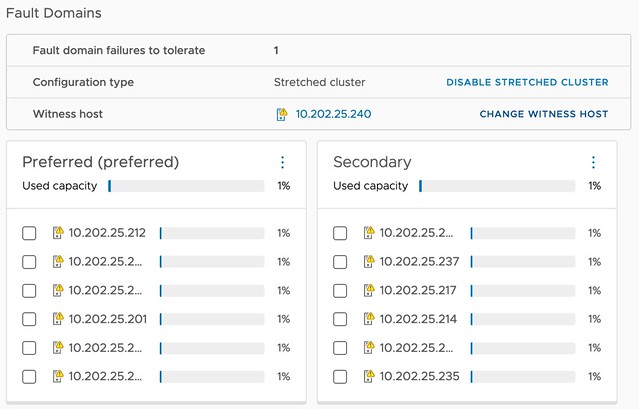

I created a new lab environment not too long ago and I ran into this situation where the Performance Management Object showed up as Reduced Availability with no Rebuild in vSAN Skyline Health. This happened in my case because I created a Stretched Cluster configuration after I had already formed a cluster, which means that the performance management object was randomly placed across hosts without taking those “failure domains” into account. I completely forgot about it until someone on VMTN reminded me about this. I had two options, fix the existing perf database, or simply disable/enable the perf service to it is recreated.

As I had no data stored in the database I figured disable/enable is the easiest route. I looked for the option in vSphere 8.0 U1 but could not find it, it seems that the UI option no longer exists for whatever reason. How do I now disable/enable the service? Ruby vSphere Console (RVC) to the rescue!

When you log in to RVC you can simply run the following commands on the cluster object you want to disable/enable the performance service for. Fairly straight forward, and fixed the issue within a minute or so:

vsan.perf.stats_object_delete <cluster> vsan.perf.stats_object_create <cluster>

I also documented this in the vSAN 8.0 ESA Deep Dive Book by the way, you can buy a paper copy or ebook on Amazon.