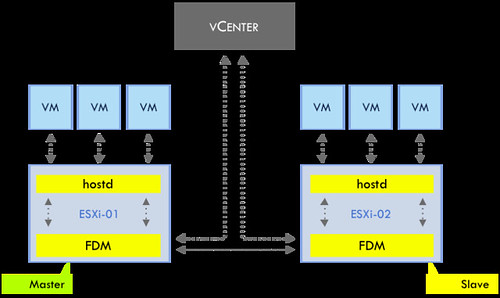

With vSphere 5.0 comes a new HA architecture. HA has been rewritten from the ground up to shed some of the constraints that were enforced by AAM. HA in 5.0, also referred to as FDM (Fault Domain Manager), introduces less complexity and higher resiliency. From a UI perspective not a lot has changed, but a lot has changed under the covers: the primary/secondary node concept is gone, replaced by a master/slave concept with an automated election process. That is too much detail for now, though; I will come back to it in a later article. Let's start with the basics first. As mentioned, the complete agent has been rewritten and the dependency on VPXA has been removed. HA now talks directly to hostd, whereas vSphere 4.1 and prior used a translator to talk to VPXA. This excellent diagram by Frank, also used in our book, demonstrates how things are connected as of vSphere 5.0.

The main point here though is that the FDM agent also communicates with vCenter, and vCenter with the FDM agent. As of vSphere 5.0, HA leverages vCenter to retrieve information about the status of virtual machines, and vCenter is used to display the protection status of virtual machines. On top of that, vCenter is responsible for the protection and unprotection of virtual machines. This applies not only to user-initiated power-offs or power-ons of virtual machines, but also to the case where an ESXi host is disconnected from vCenter, at which point vCenter will request the master HA agent to unprotect the affected virtual machines. What protection actually means will be discussed in a follow-up article. One thing I do want to point out is that if vCenter is unavailable, it will not be possible to make changes to the configuration of the cluster. vCenter is the source of truth for the set of virtual machines that are protected, the cluster configuration, the virtual machine-to-host compatibility information, and the host membership. So, while HA, by design, will respond to failures without vCenter, HA relies on vCenter being available to configure or monitor the cluster.
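To make that split in responsibilities concrete, here is a toy model in Python. It is only a sketch of the behaviour described above, not how FDM is implemented: the `ToyCluster` class, its methods and the VM names are all made up for illustration. Protection and configuration changes go through vCenter, while the response to a host failure works from the last known protected set.

```python
from dataclasses import dataclass, field


@dataclass
class ToyCluster:
    """Hypothetical model of an HA cluster; not a real VMware API."""
    vcenter_available: bool = True
    protected_vms: set = field(default_factory=set)
    settings: dict = field(default_factory=dict)

    def _require_vcenter(self, action: str) -> None:
        # vCenter is the source of truth, so changes need it to be reachable.
        if not self.vcenter_available:
            raise RuntimeError(f"cannot {action}: vCenter is unavailable")

    def protect(self, vm: str) -> None:
        # Simplification: protection is requested by vCenter.
        self._require_vcenter("protect a VM")
        self.protected_vms.add(vm)

    def unprotect(self, vm: str) -> None:
        self._require_vcenter("unprotect a VM")
        self.protected_vms.discard(vm)

    def reconfigure(self, key: str, value) -> None:
        self._require_vcenter("change the cluster configuration")
        self.settings[key] = value

    def handle_host_failure(self, vms_on_failed_host) -> list:
        # No vCenter check here: the failure response relies only on
        # the protected set that was already recorded.
        return sorted(vm for vm in vms_on_failed_host if vm in self.protected_vms)
```

In this sketch, a cluster that loses vCenter after `protect("vm1")` still returns `vm1` from `handle_host_failure`, but a call to `reconfigure` raises an error.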

Without diving straight into the deep end, I want to point out two minor changes that are huge improvements when it comes to managing and troubleshooting HA:

- No dependency on DNS

- Syslog functionality

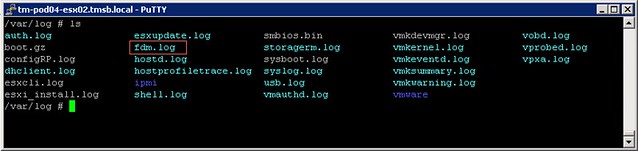

As of 5.0, HA is no longer dependent on DNS, as it works with IP addresses only. This is one of the major improvements that FDM brings. This also means that the character limit that HA imposed on the hostname has been lifted. (Pre-vSphere 5.0, hostnames were limited to 26 characters.) Another major change is that the HA log files are now part of the normal logging functionality that ESXi offers, which means that you can find the log file in /var/log and it is picked up by syslog!
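If you still run pre-5.0 clusters next to new ones, a quick check like the one below shows which hostnames would have run into the old limit. This is a minimal sketch: the function names and example hostnames are hypothetical, and it assumes the 26-character limit applies to the name exactly as you pass it in.

```python
# Pre-vSphere 5.0 limit as mentioned in this article.
LEGACY_HA_HOSTNAME_LIMIT = 26


def exceeds_legacy_limit(hostname: str, limit: int = LEGACY_HA_HOSTNAME_LIMIT) -> bool:
    """Return True if the hostname is longer than the pre-5.0 HA limit."""
    return len(hostname) > limit


def hosts_over_legacy_limit(hostnames):
    """Return the hostnames that would have exceeded the pre-5.0 limit."""
    return [name for name in hostnames if exceeds_legacy_limit(name)]


if __name__ == "__main__":
    # Hypothetical example names, not taken from a real environment.
    example_hosts = [
        "esxi01.lab.local",
        "esxi-production-cluster-a-host-01",
    ]
    for name in hosts_over_legacy_limit(example_hosts):
        print(f"{name} ({len(name)} characters) exceeds the pre-5.0 limit")
```

With FDM in 5.0 this check is no longer needed, since the limit has been lifted.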

There are a couple of other major changes that I want to explain in the upcoming posts. Here is what you can expect to be published in the upcoming week:

** Disclaimer: This article contains references to the words master and/or slave. I recognize these as exclusionary words. The words are used in this article for consistency because these are currently the words that appear in the software, in the UI, and in the log files. When the software is updated to remove the words, this article will be updated to be in alignment. **