Today Pat Gelsinger and Ben Fathi announced vSphere 6.0. (If you missed it, you can still sign up for other events.) I know many of you have been waiting for this and are ready to start your download engines, but please note that this is just the announcement of GA… the bits will follow shortly. I figured I would do a quick post detailing what is new in vSphere 6.0. There were a lot of announcements today, but I am only going to cover vSphere 6.0 and VSAN. I have more detailed posts to come, so I am not going to go into a lot of depth here; I just figured I would post a list of everything that is in the release… or at least everything I am aware of, as some of it wasn’t broadly announced.

- vSphere 6

  - Virtual Volumes

    - Want “Virtual SAN”-like policy-based management for your traditional storage systems? That is what Virtual Volumes will bring in vSphere 6.0. If you ask me, this is the flagship feature in this release.

  - Long Distance vMotion

  - Cross vSwitch and vCenter vMotion

  - vMotion of MSCS VMs using pRDMs

  - vMotion L2 adjacency restrictions are lifted!

  - vSMP Fault Tolerance

  - Content Library

  - NFS 4.1 support

  - Instant Clone aka VMFork

  - vSphere HA Component Protection

  - Storage DRS and SRM support

  - Storage DRS deep integration with VASA to understand thin provisioned, deduplicated, replicated or compressed datastores!

  - Network IO Control per VM reservations

  - Storage IOPS reservations

  - Introduction of Platform Services Controller architecture for vCenter

    - SSO, licensing and certificate authority services are grouped and can be centralized for multiple vCenter Server instances

  - Linked Mode support for vCenter Server Appliance

  - Web Client performance and usability improvements

  - Max Config:

    - 64 hosts per cluster

    - 8000 VMs per cluster

    - 480 CPUs per host

    - 12TB of memory per host

    - 1000 VMs per host

    - 128 vCPUs per VM

    - 4TB RAM per VM

  - vSphere Replication

    - Compression of replication traffic configurable per VM

    - Isolation of vSphere Replication host traffic

  - vSphere Data Protection now includes all vSphere Data Protection Advanced functionality

    - Up to 8TB of deduped data per VDP Appliance

    - Up to 800 VMs per VDP Appliance

    - Application level backup and restore of SQL Server, Exchange, SharePoint

    - Replication to other VDP Appliances and EMC Avamar

    - Data Domain support

- Virtual Volumes

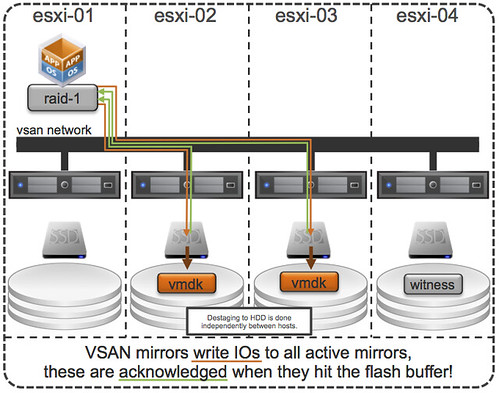

- Virtual SAN 6

  - All flash configurations

  - Blade enablement through certified JBOD configurations

  - Fault Domain aka “Rack Awareness”

  - Capacity planning / “What if scenarios”

  - Support for hardware-based checksumming / encryption

  - Disk serviceability (light LED on failure, turn LED on/off manually, etc.)

  - Disk / Diskgroup maintenance mode aka evacuation

  - Virtual SAN Health Services plugin

  - Greater scale

    - 64 hosts per cluster

    - 200 VMs per host

    - 62TB max VMDK size

    - New on-disk format enables fast cloning and snapshotting

    - 32 VM snapshots

    - From 20K IOPS to 40K IOPS in hybrid configuration per host (2x)

    - 90K IOPS with All-Flash per host
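
To get a feel for how the maximums listed above interact, here is a minimal Python sketch that checks a planned, evenly loaded cluster against them. The dictionary and function names are my own and purely illustrative (this is not a VMware API or tool), and it only knows about the figures listed in this post, so treat it as back-of-the-envelope arithmetic rather than a sizing tool.

```python
# Maximums copied from the lists above. The dictionary and function names are
# illustrative only and are not part of any VMware API.
VSPHERE_6_MAX = {"hosts_per_cluster": 64, "vms_per_cluster": 8000, "vms_per_host": 1000}
VSAN_6_MAX = {"hosts_per_cluster": 64, "vms_per_host": 200}


def check_cluster(hosts, vms_per_host, limits):
    """Return the listed maximums that an evenly loaded cluster would exceed."""
    problems = []
    if hosts > limits["hosts_per_cluster"]:
        problems.append(
            f"{hosts} hosts exceeds {limits['hosts_per_cluster']} hosts per cluster"
        )
    if vms_per_host > limits["vms_per_host"]:
        problems.append(
            f"{vms_per_host} VMs per host exceeds {limits['vms_per_host']} VMs per host"
        )
    total_vms = hosts * vms_per_host
    # A per-cluster VM figure is only listed for vSphere above, not for Virtual SAN.
    cluster_cap = limits.get("vms_per_cluster")
    if cluster_cap is not None and total_vms > cluster_cap:
        problems.append(
            f"{total_vms} VMs in total exceeds {cluster_cap} VMs per cluster"
        )
    return problems


if __name__ == "__main__":
    # 64 * 125 = 8000, exactly at the listed cluster limit.
    print(check_cluster(64, 125, VSPHERE_6_MAX))
    # 64 * 200 = 12800, over the listed cluster limit.
    print(check_cluster(64, 200, VSPHERE_6_MAX))
```

The takeaway from this kind of check is that in a full 64-host cluster the 8000 VMs per cluster figure works out to 125 VMs per host on average, so the cluster maximum is reached long before the 1000 VMs per host maximum.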

As you can see, that is a long list of features and products that have been added or improved. I can’t wait until the GA release is available. In the coming days I will post more details on some of the features listed above, as there is no point in flooding the blogosphere even more with similar info.