I was just listening to the VMware earnings call and, needless to say, I was very excited to hear all the great news about Virtual SAN. When I talk to customers, this comes up every now and then: they want to know where we stand in the market. I figured I would share what Carl Eschenbach and Pat Gelsinger stated on the Q4 2015 earnings call:

Summarizing a few other product areas, our hyper-converged offerings based on VMware Virtual SAN experienced significant traction. Specifically, our Virtual SAN business saw successes across a wide variety of industries, market segments, and geos. In Q4, total VSAN bookings grew nearly 200% year-over-year, and customer count has increased to over 3,000 versus over 1,000 a year ago. We are now well over $100 million annual run rate per total bookings.

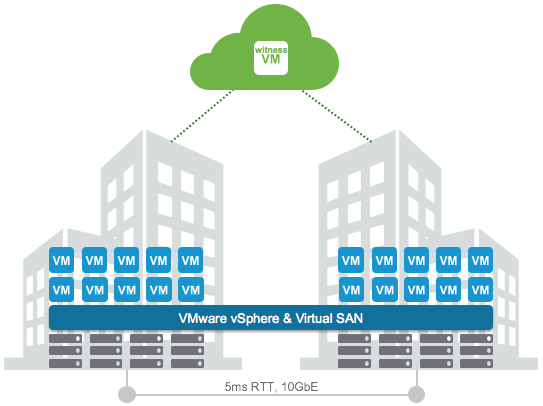

With our next release of VSAN in Q1, we expect our momentum to build given the powerful new enterprise capabilities the product brings to market. Taking into account the hardware associated with running the Virtual SAN software and our current booking to run rate, we believe we are the industry leader in the hyper-converged offerings measured both by software and as an appliance.

And the best is yet to come… A new version is around the corner, and I can’t wait for it to be released. Make sure to sign up for the launch events! (Or attend one of the many VMUGs in the upcoming months; I will personally be presenting in Newcastle, Johannesburg, Durban, Cape Town and Den Bosch.)