In episode 013 we talk to Adrian Roberts, Head of EMEA Solution Architecture for VMware Cloud on AWS at AWS. Adrian covers the various reasons customers are looking to use VMware Cloud on AWS, some of the challenges, and of course the opportunities that arise when you have your VMware workloads close to native AWS services. Listen to the full episode via Spotify (spoti.fi/3DDCoJX) or Apple (apple.co/3r1qAvV).

Joined GigaOm’s David S. Linthicum on a podcast about cloud, HCI and Edge.

A while ago I had the pleasure to join David S. Linthicum from GigaOm on their Voices in Cloud Podcast. It is a 22-minute podcast in which we discuss various VMware efforts in the cloud space, edge computing and of course HCI. You can find the episode here, where they also have the full transcript for those who prefer reading over listening to a guy with a Dutch accent. It was a fun experience for sure. I always enjoy joining podcasts and talking tech, so if you run a podcast and are looking for a guest, don’t hesitate to reach out!

Of course you can also find Voices in Cloud on iTunes, Google Play, Spotify, Stitcher, and other platforms.

Project Dimension – VMware’s Edge Computing effort

Internally some of my focus has been shifting. Going forward I will spend more time on edge computing besides vSAN. Edge (and IoT for that matter) has had my interest for a while, and when VMware announced an edge project I was instantly intrigued. The edge computing efforts were announced at VMworld US, and the name for the effort is Project Dimension. There were several sessions at VMworld, and I would recommend watching those if you are looking for more info than provided below. Most of the info below comes from session IOT2539BE, titled “Project Dimension: the easy button for edge computing” by Esteban Torres and Guru Shashikumar. Expect more content on Project Dimension in the future as I start getting more involved.

What is Project Dimension? What was discussed at VMworld was the following:

- A new VMware Cloud service; starting at edge locations

- Enable enterprises to consume compute, storage, and networking at the edge like they consume public cloud

- VMware will work with OEM partners to deliver and manage hyperconverged appliances in edge locations

- All appliances will be managed by VMware via VMware Cloud

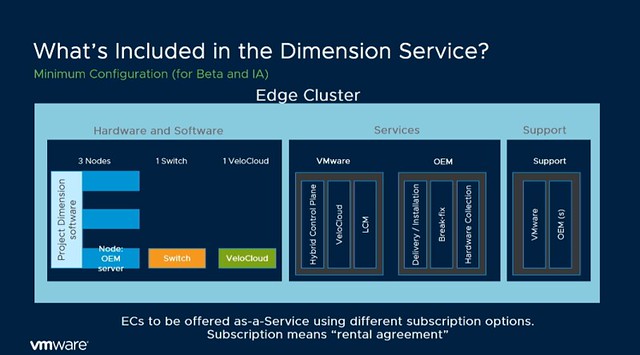

So what does it include? Well, as mentioned, it includes hardware. The exact type hasn’t been mentioned, but it was said that Dell and Lenovo are the first two OEMs to support Project Dimension. This hyperconverged solution will include:

- vSphere

- vSAN

- Velocloud

This solution will be managed by a “hybrid cloud control plane” as it is referred to, all by VMware. Architecturally this is what the service will look like:

Now what I found very interesting is that during the session someone asked about the potential for Dimension in on-prem datacenters, and the answer was: “Edge is where we are beginning, but the long-term plan is to offer the same model for data centers as well”. Some may notice that NSX is missing in the above list and diagram. As mentioned during the session, this is being planned for, but preferably it will be a “lighter” flavor. What also stands out is that the HCI solution includes not only compute but also networking (switches and SD-WAN appliance).

Now, what is most interesting is the management aspect: VMware and the OEM partner will do the full maintenance/lifecycle management for you. This means that if something breaks the OEM will fix it. You as a customer, however, always contact VMware, a single point of contact for everything. If there’s an upgrade, then VMware will go through that motion for you. Every edge cluster, for instance, also has a vCenter Server instance, but you as an administrator/service owner will not be managing that vCenter Server instance; you will be managing the workloads that run in that environment. This to me makes sense, as when you scale out and potentially have hundreds or thousands of locations you don’t want to spend most of your time managing the infra for that. You want to focus on where the company’s revenue is.

Now getting back to the maintenance/upgrades. How does this work, and how do you know you have sufficient capacity to allow for an upgrade to happen? VMware will ensure this is possible by doing some form of admission control, which prevents you from claiming 100% of the physical resources. Another interesting thing mentioned is that Dimension will allow you to choose when upgrades or patches will be applied. In most environments maintenance will have an impact on workloads in some shape or form, so by providing blackout dates a peak season/time can be avoided.
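To make the two ideas above a bit more tangible, here is a minimal sketch of what blackout dates and admission control could look like. None of this comes from the session: the names `BlackoutWindow`, `upgrade_allowed` and `can_admit` are hypothetical, and the choice to hold back exactly one host worth of resources is my own assumption, not how Project Dimension actually implements it.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class BlackoutWindow:
    """Hypothetical customer-defined period in which no maintenance may run."""
    start: date
    end: date  # inclusive

    def contains(self, day: date) -> bool:
        return self.start <= day <= self.end


def upgrade_allowed(day: date, blackouts: list[BlackoutWindow]) -> bool:
    """An upgrade may be scheduled only on days outside every blackout window."""
    return not any(window.contains(day) for window in blackouts)


def can_admit(requested: int, used: int, hosts: int, per_host: int,
              reserved_hosts: int = 1) -> bool:
    """Toy admission control: keep `reserved_hosts` worth of resources free.

    Assumption: reserving one host leaves room to evacuate a host during a
    rolling upgrade. The real reservation policy was not described.
    """
    usable = per_host * (hosts - reserved_hosts)
    return used + requested <= usable


# Example: a retailer blocking maintenance during the holiday season.
holiday_season = [BlackoutWindow(date(2018, 11, 20), date(2018, 12, 31))]
print(upgrade_allowed(date(2018, 12, 1), holiday_season))      # False
print(can_admit(requested=10, used=50, hosts=3, per_host=30))  # True
```

The point of the sketch is simply that both checks are cheap to evaluate per location, which matters when you have hundreds or thousands of edge clusters.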

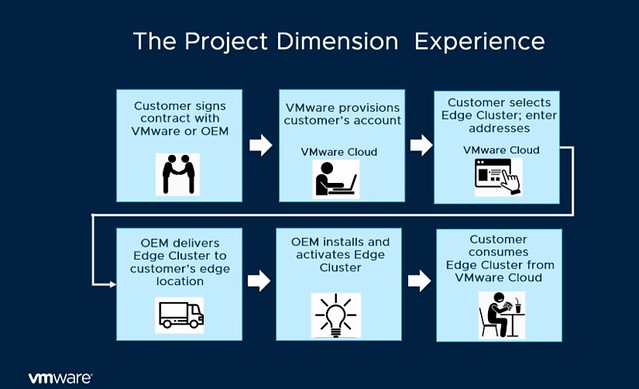

From a hardware and procurement perspective, this service is also different than what you are used to. The service will be offered on a subscription basis: 1-year or 3-year reserved edge clusters, or more of course. From a hardware perspective, it kind of aligns with what you typically see in the cloud: a Small, Medium or Large instance, which refers to the number of resources you get per node. You start with 3 nodes and, of course, have the ability to scale up, and potentially it will be possible to start smaller than 3 nodes in the future. The process in terms of sign up / procurement is displayed in the diagram below. Delivery would be within 1-2 weeks, which seems extremely fast to me.

What I also found interesting was the mention of a “try and buy” option: you pay for 3 months and if you like it you keep it, and your 3-month contract will go to 1 year (or so) automatically.

At this point you may be asking: why is VMware doing this? Well, it is pretty simple: demand and industry changes. We are starting to see a clear trend: more and more workloads are shifting closer to the consumer. This allows our customers to process data faster and, more importantly, respond faster to the outcome, and of course take action through machine learning. But the biggest challenge customers have is consistently managing these locations at a global scale, and this is what Project Dimension should solve. This is not just a challenge at the edge, but across edge, on-prem and public cloud if you ask me. There are so many moving parts, tools and interfaces, which just makes things overly complex.

So what is VMware planning on delivering with Project Dimension? Consistent, reliable and secure hyperconverged infrastructure which is managed through a Cloud Control Plane (single pane of glass management for edge environments), and edge-to-cloud connectivity through Velocloud SD-WAN. (Management traffic for now, but “edge to edge” and “edge to on-prem” soon!) There’s a lot of innovation happening at the back-end when it comes to managing and maintaining 1000s of edge locations, but you as a customer are buying simplicity, reliability, and consistency.

Please note, Project Dimension is in beta, and the team is still looking for beta customers. You need to have a valid use case, as I can see some of you thinking “nice for a home lab for a couple of weeks”, but that, of course, is not what the team is looking for. For those who have a good use case, please go to the product page and leave your details behind: http://vmwa.re/dimension

HCI1998BU – Enable High-Capacity Workloads with Elastic vSAN on VMware Cloud

I just watched the session by Rakesh and Peng on Elastic vSAN, also known as “EBS Backed vSAN”. This session was high on my list to watch live at VMworld, but unfortunately I couldn’t attend it due to various other obligations. If you are interested in the full session, make sure to watch it here; it is free. If you want to read a short summary then have a look below.

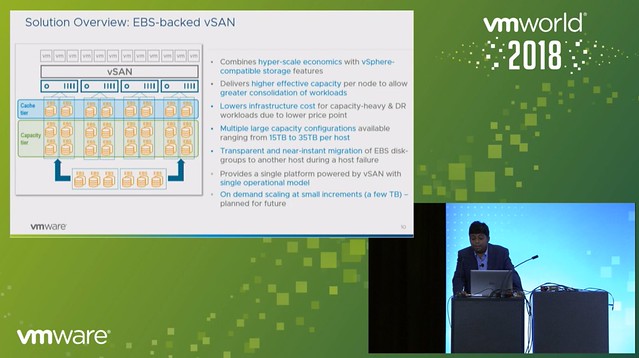

EBS backed vSAN is exactly what you expect it to be. Having said that, I do want to point out that EBS backed vSAN is supported for vSAN in VMware Cloud on AWS only. On top of that, it is recommended to run workloads on it which require high capacity. You could, for instance, consider leveraging EBS backed vSAN as a high capacity target for DR as a Service. But of course this could also be used in cases where there is sufficient CPU/Memory capacity available and only storage needs to scale in VMware Cloud on AWS. 10TB is the capacity limit per host in VMC today, and EBS backed vSAN removes this limit. With EBS backed vSAN you can increase the capacity to 15, 20, 25, 30 or 35TB per host. This means you can deliver up to 140TB of capacity in a single 4-node cluster, and for 16 nodes that is 560TB!
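The capacity numbers above are simple multiplication, but for those who want to play with other cluster sizes, here is a small sketch. The function name is my own, and it only multiplies the quoted per-host size by the number of hosts; it does not account for RAID overhead or compression.

```python
# Per-host capacity options (in TB) as mentioned in the session.
EBS_CAPACITY_OPTIONS_TB = (15, 20, 25, 30, 35)


def cluster_capacity_tb(hosts: int, per_host_tb: int) -> int:
    """Total capacity for a cluster where every host gets the same EBS size."""
    if hosts < 1:
        raise ValueError("a cluster needs at least one host")
    if per_host_tb not in EBS_CAPACITY_OPTIONS_TB:
        raise ValueError(f"per-host size must be one of {EBS_CAPACITY_OPTIONS_TB}")
    return hosts * per_host_tb


print(cluster_capacity_tb(4, 35))   # 140
print(cluster_capacity_tb(16, 35))  # 560
```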

What is great about this solution is that it also solves another problem. Everyone knows that a host failure results in resyncing data, and depending on how much capacity the host was delivering this could take a long time. With EBS backed vSAN this problem no longer exists. When a host fails the EBS volumes will simply be mounted to another host, or to a new host when one is introduced. This is a huge benefit if you ask me, even when there’s a high change rate, as this happens within seconds.
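Conceptually, the difference with a regular host failure is that nothing needs to be copied: the volumes are just attached somewhere else. A toy model of that bookkeeping is shown below. This is purely illustrative; the function, the host names and the volume names are hypothetical and have nothing to do with the actual VMC or AWS APIs.

```python
def reattach_volumes(attachments: dict[str, list[str]],
                     failed_host: str,
                     target_host: str) -> dict[str, list[str]]:
    """Return a new host-to-volumes mapping with the failed host's volumes moved.

    No data is copied: only the attachment changes, which is why a full
    resync is avoided in this model.
    """
    if failed_host not in attachments:
        raise KeyError(f"unknown host: {failed_host}")
    if failed_host == target_host:
        raise ValueError("target must differ from the failed host")
    # Copy the lists so the caller's mapping is left untouched.
    updated = {host: list(vols) for host, vols in attachments.items()
               if host != failed_host}
    # The target may be an existing host or a newly introduced one.
    updated.setdefault(target_host, []).extend(attachments[failed_host])
    return updated


before = {"host-a": ["vol-1", "vol-2"], "host-b": ["vol-3"]}
after = reattach_volumes(before, failed_host="host-a", target_host="host-c")
print(after)  # {'host-b': ['vol-3'], 'host-c': ['vol-1', 'vol-2']}
```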

One thing to point out as a constraint though is that today in VMC you can’t run the management workloads on EBS backed vSAN just yet. Rakesh did mention that this is being tested.

Next, the architecture was discussed, and this is where Peng took over. He mentioned that the IOPS limit is set to 10K (regardless of the size) and the throughput is limited to 160MBps. All of this is typically delivered with sub-millisecond latency, which is very impressive. Also, Peng mentioned that EBS backed vSAN provided very consistent and predictable performance in all tests. On top of that, EBS backed vSAN is also very reliable and highly available, even when compared to flash devices.

What I found interesting is the architecture. vSAN gets presented a SCSI device; however, EBS is network attached, so an EBS protocol client was implemented, which is then presented as an NVMe target through the PCI-e interface. The PCI-e interface allows for multi-volume, hot-add and hot-remove. This is what allows the EBS devices to be removed from a host which has failed (or has a failure) and then added to a healthy host.

When EBS backed vSAN is enabled each host will have 3 disk groups, and each disk group will have 3-7 capacity disks. Note that it is recommended to use RAID-5 for space efficiency and “Compression only mode” is enabled on these disk groups. Considering the target workloads, and the architecture (and EBS performance constraints) it didn’t make sense to use deduplication, hence the vSAN team implemented a solution where it is possible to have only compression enabled. Some I/O amplification is not an issue when you run all-flash and have hundreds of thousands of IOPS per device, but as stated EBS is limited to 10k IOPS per device, which means you need to be smart about how you use those resources.
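To get a feel for why you need to be smart with those resources, here is a rough back-of-the-envelope sketch. It assumes that every capacity disk maps to exactly one EBS volume and that the per-volume limits simply add up across a host. Both are my assumptions, not something stated in the session, and the amplification factor used in the example is a made-up number.

```python
IOPS_PER_EBS_VOLUME = 10_000   # per-device IOPS limit quoted in the session
MBPS_PER_EBS_VOLUME = 160      # per-device throughput limit quoted in the session
DISK_GROUPS_PER_HOST = 3


def host_backend_ceiling(capacity_disks_per_group: int) -> tuple[int, int]:
    """Upper bound on (IOPS, MBps) for the capacity tier of a single host.

    Assumption: one EBS volume per capacity disk, limits add linearly.
    """
    if not 3 <= capacity_disks_per_group <= 7:
        raise ValueError("each disk group has 3-7 capacity disks")
    volumes = DISK_GROUPS_PER_HOST * capacity_disks_per_group
    return volumes * IOPS_PER_EBS_VOLUME, volumes * MBPS_PER_EBS_VOLUME


def guest_visible_iops(backend_iops: int, amplification: float) -> float:
    """Back-end IOPS left for workloads after I/O amplification is paid for."""
    if amplification < 1:
        raise ValueError("amplification factor must be at least 1")
    return backend_iops / amplification


print(host_backend_ceiling(3))  # (90000, 1440)
print(host_backend_ceiling(7))  # (210000, 3360)

# Hypothetical amplification factor of 2: half of the back-end budget is gone.
print(guest_visible_iops(90_000, 2.0))  # 45000.0
```

With a fixed budget per device, every extra back-end I/O caused by a data service comes straight out of what the workloads can use, which is the reasoning behind enabling compression only.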

During the Q&A one thing that was mentioned, which I found interesting, is that although today EBS backed vSAN needs to be introduced in certain increments across the whole cluster, that will not be the case in the future. In the future, according to Peng, it should even be possible to add EBS volumes to disk groups on particular hosts, allowing for full and optimal flexibility.

And for those who didn’t know, the VMworld Hands-On Labs were running on top of EBS backed vSAN and performance was above expectations!