vSphere 6.0 was just announced and with it a new version of Virtual SAN. I don’t think Virtual SAN needs an introduction, as I have written many articles about it over the last 2 years. Personally I am very excited about this release as it adds some really cool functionality. So what is new for Virtual SAN 6.0?

- Support for All-Flash configurations

- Fault Domains configuration

- Support for hardware encryption and checksum (See HCL)

- New on-disk format

- High performance snapshots / clones

- 32 snapshots per VM

- Scale

  - 64 host cluster support

  - 40K IOPS per host for hybrid configurations

  - 90K IOPS per host for all-flash configurations

  - 200 VMs per host

  - 8000 VMs per cluster

  - Up to 62TB VMDKs

- Default SPBM Policy

- Disk / Disk Group serviceability

- Support for direct attached storage systems to blade (See HCL)

- Virtual SAN Health Service plugin

That is a nice long list indeed. Let me discuss some of these features a bit more in-depth. First of all “all-flash” configurations, as that is a request I have had many, many times. In this new version of VSAN you can specify which devices should be used for caching and which will serve as the capacity tier. This means that you can use your enterprise-grade flash device as a write cache (still a requirement) and then use your regular MLC devices as the capacity tier. Note that of course the devices will need to be on the HCL and that they will need to be capable of supporting 0.2 TBW (TB written) per day over a period of 5 years. In other words, over those 5 years the drive needs to be capable of 0.2TB x 365 days x 5 years = 365TB of writes. So far tests have shown that you should be able to hit ~90K IOPS per host; that is some serious horsepower in a big cluster indeed.
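The endurance arithmetic is easy to check for other drive classes as well. Below is a minimal sketch in Python; the function name and the 365-day year are my own assumptions for illustration, only the 0.2 TBW per day and 5 year figures come from the requirement mentioned above.

```python
def required_endurance_tb(tb_written_per_day: float, years: int,
                          days_per_year: int = 365) -> float:
    """Total TB a device must be able to absorb over its service life."""
    return tb_written_per_day * days_per_year * years


# Figures from the all-flash capacity tier requirement: 0.2 TBW/day for 5 years.
total_tb = required_endurance_tb(0.2, 5)
print(f"Required endurance: {total_tb:.0f} TB written")
```

Plug in the rated TBW of the device you are considering and compare it against this number before checking the HCL entry.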

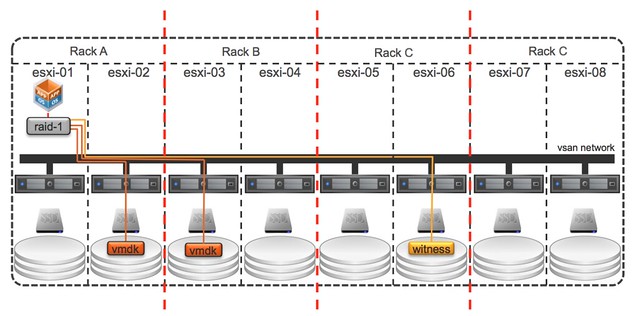

Fault Domains is also something that has come up on a regular basis and something I have advocated many times. I was pleased to see how fast the VSAN team could get it into the product. To be clear: no, this is not a stretched cluster solution… I would see this as the first step, but that is my opinion and not VMware’s. The Fault Domains feature allows you to specify fault domains per rack, and when you provision a new virtual machine, VSAN will make sure that the components of its objects are placed in different fault domains.

In this case when you do it per rack then even a full rack failure would not impact your virtual machine availability. Very cool indeed. The nice thing about the fault domain feature also is that it is very simple to configure. Literally a couple of clicks in the UI, but you can also use RVC or host profiles to configure it if you want to. Do note that you will need 6 hosts at a minimum for Fault Domains to make sense.
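To illustrate the idea of rack awareness, here is a small Python sketch that places each component of an object in a different fault domain. This is not how VSAN implements placement; the function, the rack and host names, and the "two replicas plus a witness" layout for FTT=1 are assumptions made purely for illustration.

```python
from typing import Dict, List, Tuple


def place_components(
    fault_domains: Dict[str, List[str]],
    components: List[str],
) -> Dict[str, Tuple[str, str]]:
    """Map each component to a (fault domain, host) pair, one domain per component."""
    # Only fault domains that actually contain hosts can hold a component.
    usable = {fd: hosts for fd, hosts in fault_domains.items() if hosts}
    if len(components) > len(usable):
        raise ValueError(
            f"need {len(components)} fault domains, only {len(usable)} have hosts"
        )
    placement = {}
    # Hypothetical policy: walk the domains in name order, take the first host.
    for component, fd in zip(components, sorted(usable)):
        placement[component] = (fd, usable[fd][0])
    return placement


# Hypothetical layout: three racks with two hosts each.
racks = {
    "rack-a": ["esx01", "esx02"],
    "rack-b": ["esx03", "esx04"],
    "rack-c": ["esx05", "esx06"],
}
placement = place_components(racks, ["replica-1", "replica-2", "witness"])
for component, (rack, host) in placement.items():
    print(f"{component}: {host} in {rack}")
```

Because no two components share a rack, losing any single rack in this sketch still leaves the majority of the components available.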

Then of course there is the scalability. Not just the 64 host cluster support but also the 200 VMs per host is a great improvement. Of course there are also the improvements around snapshots and cloning, which can be attributed to the new on-disk format and the different snapshotting mechanism that is being used; less than 2% performance impact when going up to 32 levels deep is what we have been waiting for. Fair to say that this is where the acquisition of Virsto is coming into play, and I think we can expect to see more. Also, the number of components has gone up. The max number of components used to be 3000 and has now been increased to 9000.

Then there is the support for blade systems with direct attached storage systems… this is very welcome, I had many customers asking for this. Note that as always the HCL is leading, so make sure to check the HCL before you decide to purchase equipment to implement VSAN in a blade environment. Same applies to hardware encryption and checksums, it is fully supported but make sure your components are listed with support for this functionality on the HCL! As far as I know the initial release will have 2 supported systems on there, one IBM system and I believe the Dell FX platform.

All of the operational improvements that were introduced around disk serviceability and being able to tag a device as “local / remote / SSD” are the direct result of feedback from customers and passionate VSAN evangelists internally at VMware. Also for instance pro-active rebalancing is now possible through RVC. If you add a host or remove a host and want to even out the nodes from a capacity point of view then a simple RVC command will allow you to do this. But also for instance the “resync” details can now be found in the UI, something I am very happy about as that will help people during PoCs not to run in to the scenario where they introduce new failures while VSAN is recovering from previous failures.

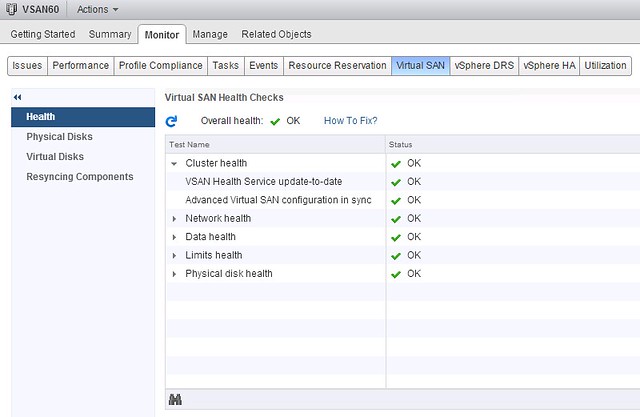

Last one I want to mention is the Virtual SAN Health Service plugin. This is a separately developed Web Client plugin that will provide in-depth information about Virtual SAN. I gave it a try a couple of weeks ago and now have it running in my environment, impressed with what is in there and great to see this type of detail straight in the UI. I expect that we will see various iterations in the upcoming year.

DAS support! Very nice

Hi Duncan,

You mentioned stretched clusters – how far can VSAN 6 nodes live apart in terms of latency?

thanks!

It is not intended for stretched clusters in this release; that is something that will be worked on. I have seen no latency requirements mentioned. For now we expect nodes to be located in close proximity with a low-latency connection.

I’m curious about the upgrade path. Is it disruptive? Greenfield only? After the negative press with EMC’s XtremIO upgrade it would be nice to see this be an NDU…

It is a non-disruptive rolling upgrade. Upgrade process is as follows:

1) Upgrade vCenter Server

2) Upgrade ESXi

3) Upgrade to new on-disk format

-> Host pre-check/validation to ensure successful migration

-> Rolling re-format per disk group

-> objects converted to V2 mode

Thanks Duncan,

That’s terrific news!

Glad to see vSphere 6.0 announced and all of the updates, there’s a ton of storage goodness to dive into!

A question regarding scale: what is the I/O size used in determining the performance maximums of 40K IOPS per host for hybrid and 90K IOPS per host for all-flash configurations? Is it 4KB?

— cheers,

v

How does number of faults to tolerate impact performance? Obviously there’s a multiplier effect based on the number of replicas.

— thx

v

Depends. For reads it could have a positive impact, as more devices are used for a VM. For writes it won’t matter too much in terms of performance; it is just that more writes will occur over the network, so this will increase network overhead.

“4K 70r/30w” mix as far as I know…

Is it true that All-Flash VSAN is an additional license? If so, what’s the cost?

http://www.vmware.com/company/news/releases/vmw-newsfeed/VMware-Launches-New-Generation-of-Enterprise-Storage—%C2%A0Virtual-SAN-6-and-vSphere-Virtual-Volumes-to-Enable-Mass-Adoption-of-Software-Defined-Storage/1920298

additional $1,495 per CPU according to the press release

What’s the logic behind charging more for all-flash VSAN? Can I just include an HD as a fake hot spare and avoid the luxury tax?

Not sure I am following your logic here Fletcher.

Maybe you can clarify the pricing referenced in http://ir.vmware.com/releasedetail.cfm?ReleaseID=894140

“Pricing and Availability

VMware Virtual SAN 6 and VMware vSphere Virtual Volumes are expected to become available in Q1 2015. VMware Virtual SAN is priced at $2,495 per CPU. VMware Virtual SAN for Desktop is priced at $50 per user. The new All-Flash architecture will be available as an add-on to VMware Virtual SAN 6 and will be priced at $1,495 per CPU and $30 per desktop. VMware vSphere Virtual Volumes will be packaged as a feature in VMware vSphere Standard Edition and above as well as VMware vSphere ROBO editions.”

It makes it sound like you pay $2495 for VSAN, and an additional $1495 for All-Flash VSAN architecture?

thanks!

Yes, the price per CPU for all-flash would be 2495+1495.
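As a quick worked example of that sum, here is a minimal Python sketch. The per-CPU list prices come from the press release quoted above; the function name and the CPU counts are hypothetical and only there to show the arithmetic.

```python
# List prices per CPU in USD, as quoted in the press release above.
VSAN_PER_CPU_USD = 2495
ALL_FLASH_ADDON_PER_CPU_USD = 1495


def all_flash_license_cost(cpu_count: int) -> int:
    """List price for Virtual SAN plus the all-flash add-on for a number of CPUs."""
    return cpu_count * (VSAN_PER_CPU_USD + ALL_FLASH_ADDON_PER_CPU_USD)


print(all_flash_license_cost(1))  # a single CPU
print(all_flash_license_cost(2))  # a hypothetical dual-socket host
```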

I understand the economics from an R&D context, but after the vRAM PR fiasco why make another without explaining the difference? Why does all-flash require an extra license? Our new servers are all SSD, 10g – help us understand the all flash premium logic??

That is not up to me Fletcher. I’m not part of the product team even, let alone have any say in pricing / packaging.

Hi Duncan, is it too complicated for the VSAN team to implement a stretched cluster feature? VSAN clearly lacks a synchronous replication feature. Why is it not a priority?

Not sure why you would draw that conclusion to be honest. As stated in my post, today we have a “rack awareness” feature which is the first step towards “stretched”. VSAN objects, when FTT is set to 1 at a minimum, are automatically replicated to 2 (or more) hosts… So by default it has the capability. As you understand, this is something that will need to be tested, and potentially the product needs to be optimized for the use case. It is definitely on the roadmap, but considering this is the second big release of the product, there were other things that needed to be tackled first. It is not too complicated; it is a matter of having a long list of features and prioritizing those, along with the dependencies between them.

Is there a minimum number of hosts per fault domain? My logic would tell me that since VSAN requires 3 hosts to operate, it would require 3 hosts per FD. But your writeup gives me hope that it’s an aggregate of the total hosts.

Dan

My understanding is that a single VMDK must fit in a single disk group, so how does that work with a 62TB VMDK?

Even if you had a disk group of 7 4TB drives, that’s only 28TB, which wouldn’t fit. Unless it gets split into multiple disk groups, but I haven’t seen that in any documentation.

That is actually not the case. Even a 1TB VMDK will be chunked up as a “component” has a max size of 256GB. So in the case of a 1TB VMDK you end up with 4 components for that VMDK and with FTT=1 that ends up being 8.

Objects can be spread across hosts, across diskgroups, across disks… That is the nice thing about VSAN. Good question, and actually had that question this week from someone else. Will do a short article on it.
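The component math above can be sketched in a few lines of Python. This is only a back-of-the-envelope calculation: it assumes the 256GB maximum component size mentioned above, assumes each additional failure to tolerate adds one full replica, and ignores witness components. The function name is hypothetical.

```python
import math

MAX_COMPONENT_GB = 256  # maximum component size as mentioned in the reply above


def data_components(vmdk_gb: int, ftt: int = 1,
                    max_component_gb: int = MAX_COMPONENT_GB) -> int:
    """Estimate the number of data components for a VMDK (witnesses not counted)."""
    per_replica = math.ceil(vmdk_gb / max_component_gb)
    replicas = ftt + 1  # assumption: one extra full copy per failure to tolerate
    return per_replica * replicas


print(data_components(1024, ftt=1))       # the 1TB VMDK from the example above
print(data_components(62 * 1024, ftt=1))  # a maximum-size 62TB VMDK
```

For the 1TB case this gives the 8 components mentioned above, and the same chunking is what lets a 62TB VMDK spread across disks, disk groups and hosts rather than having to fit in one place.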