A while back I wrote about design considerations for designing or building a stretched vCloud Director infrastructure. Since then I have been working on a document in collaboration with Lee Dilworth, which should hopefully be out soon. As various people have asked for the document, I decided to throw it into this blog post so that the details are already out there.

** Disclaimer: this article has not yet been reviewed by the technical marketing team; this is a preview of what will possibly be published. When the official document is published I will add a link to this article. **

Introduction

VMware vCloud® Director™ 5.1 (vCloud Director) gives enterprise organizations the ability to build secure private clouds that dramatically increase datacenter efficiency and business agility. Coupled with VMware vSphere® (vSphere), vCloud Director delivers cloud computing for existing datacenters by pooling vSphere virtual resources and delivering them to users as catalog-based services. vCloud Director helps you build agile infrastructure-as-a-service (IaaS) cloud environments that greatly accelerate the time-to-market for applications and responsiveness of IT organizations.

Resiliency is a key aspect of any infrastructure, but it is even more important in “Infrastructure as a Service” (IaaS) solutions. This solution overview was developed to provide additional insight and information on how to architect and implement a vCloud Director based solution on a vSphere Metro Storage Cluster infrastructure.

Architecture Introduction

This architecture consists of two major components. The first component is the geographically separated vSphere infrastructure based on a stretched storage solution, hereafter referred to as the vSphere Metro Storage Cluster (vMSC) infrastructure. The second component is vCloud Director.

Note: before we dive into the details of the solution, we would like to call out the fact that vCloud Director is not site aware. If it is incorrectly configured, availability could be negatively impacted in certain failure scenarios.

vSphere Metro Storage Cluster Introduction

A VMware vSphere Metro Storage Cluster (vMSC) configuration is a VMware vSphere 5.x certified solution that combines array-based synchronous replication and clustering. These solutions are typically deployed in environments where the distance between datacenters is limited, often metropolitan or campus environments. It should be noted that some solutions can now be deployed with up to 200 kilometers between sites; always consult your vendor and the vMSC hardware compatibility list for guidance.

vMSC infrastructures are implemented with a goal of reaping the same benefits that high availability clusters provide to a local site, albeit in a geographically dispersed model with two datacenters in different locations. A vMSC infrastructure is essentially a stretched vSphere cluster at its core. The architecture is built on the premise of extending what is defined as “local” in terms of network and storage to allow these subsystems to span geographies, presenting a single and common base infrastructure set of resources to the vSphere cluster at both sites. It is in essence stretching storage and the network between sites.

The primary benefit of a stretched cluster model is to enable fully active and workload-balanced datacenters to be used to their full potential. Stretched cluster solutions enable on-demand and non-intrusive mobility of workloads through inter-site vMotion and Storage vMotion. The capability of a stretched cluster to provide this active balancing of resources should always be the primary design and implementation goal.

vMSC infrastructures offer the benefit of:

- Workload mobility

- Cross-site automated load balancing

- Enhanced downtime avoidance

- Disaster avoidance

vMSC technical requirements and constraints

Because of the technical constraints of online virtual machine migration, specific requirements must be met before a stretched cluster (vMSC) implementation is considered. These requirements are listed on the VMware Compatibility Guide web page and in the vMSC white paper, and are included below for your convenience:

- vMSC certified storage platform

- Storage connectivity using Fibre Channel, iSCSI, SVD, NFS, or FCoE

- The maximum supported network latency between sites for the ESXi management networks is 5 milliseconds Round Trip Time (RTT)

- Note that up to 10 ms of latency for vMotion is supported only with Enterprise Plus licenses (Metro vMotion)

- The maximum supported latency for synchronous storage replication links is 5 milliseconds RTT (Note that this is not a storage limitation, but a VMware requirement)
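The latency limits above lend themselves to a simple pre-deployment check. The sketch below is a minimal Python illustration, assuming you already have measured round-trip times between the sites; the `SiteLink` type and the function name are hypothetical, and the 5 ms limit for standard (non-Metro) vMotion is our assumption rather than something listed above.

```python
from dataclasses import dataclass

# Limits from the requirements list above, in milliseconds round-trip time (RTT).
MAX_MGMT_RTT_MS = 5.0
MAX_STORAGE_RTT_MS = 5.0
MAX_VMOTION_RTT_MS_METRO = 10.0     # Metro vMotion, Enterprise Plus only
MAX_VMOTION_RTT_MS_STANDARD = 5.0   # assumption: standard vMotion limit


@dataclass
class SiteLink:
    """Measured inter-site round-trip times (hypothetical input type)."""
    mgmt_rtt_ms: float
    vmotion_rtt_ms: float
    storage_rtt_ms: float


def vmsc_latency_violations(link: SiteLink, enterprise_plus: bool) -> list[str]:
    """Return the violated vMSC latency requirements (empty list if none)."""
    violations = []
    if link.mgmt_rtt_ms > MAX_MGMT_RTT_MS:
        violations.append(f"ESXi management RTT {link.mgmt_rtt_ms} ms exceeds {MAX_MGMT_RTT_MS} ms")
    vmotion_limit = MAX_VMOTION_RTT_MS_METRO if enterprise_plus else MAX_VMOTION_RTT_MS_STANDARD
    if link.vmotion_rtt_ms > vmotion_limit:
        violations.append(f"vMotion RTT {link.vmotion_rtt_ms} ms exceeds {vmotion_limit} ms")
    if link.storage_rtt_ms > MAX_STORAGE_RTT_MS:
        violations.append(f"storage replication RTT {link.storage_rtt_ms} ms exceeds {MAX_STORAGE_RTT_MS} ms")
    return violations


# Example: an 8 ms vMotion RTT passes with Enterprise Plus but not without it.
link = SiteLink(mgmt_rtt_ms=2.0, vmotion_rtt_ms=8.0, storage_rtt_ms=3.0)
print(vmsc_latency_violations(link, enterprise_plus=True))   # []
print(vmsc_latency_violations(link, enterprise_plus=False))
```

Keeping the thresholds as named constants makes it easy to adjust them if your vendor or the compatibility list states different figures.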

vMSC implementation

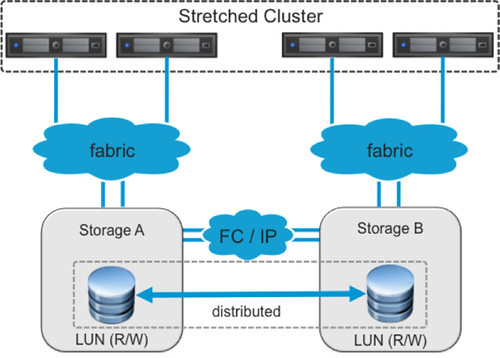

In our environment we have deployed an EMC VPLEX solution in a non-uniform fashion. In a non-uniform configuration, hosts in Datacenter-A only have access to the array within Datacenter-A, and hosts in Datacenter-B only have access to the array in Datacenter-B. The following diagram depicts a simplified version of a non-uniform architecture.

EMC VPLEX provides the concept of a “virtual LUN”, which allows ESXi hosts in each datacenter to read and write to the same datastore/LUN. The VPLEX technology takes care of maintaining the cache state on each array so that ESXi hosts in either datacenter see the LUN as local. This solution is what EMC calls “write anywhere”. Even when two virtual machines reside on the same datastore but are located in different datacenters, each will write locally and the VPLEX engines will ensure the data is replicated to the other site. For reads, VPLEX eliminates any cross-site traffic, unlike uniform access solutions, which typically require additional bandwidth for both writes and reads because they typically only allow reads and writes to a primary storage controller. A key point in this configuration is that each of the LUNs/datastores has “site affinity” defined, sometimes also referred to as “site bias” or “LUN locality”. In other words, if anything happens to the link between sites, the storage system at the preferred site for a given datastore will be the only one left with read-write access to it. This is of course to avoid any data corruption in a failure scenario.
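To make the site-affinity behavior concrete, here is a deliberately simplified Python model of which site keeps read-write access to a distributed VPLEX datastore. It is a sketch under our own assumptions, not VPLEX logic: it ignores the witness (discussed in the failure scenarios later on) and any vendor-specific rules, and the names are hypothetical.

```python
from enum import Enum


class Site(Enum):
    A = "Datacenter-A"
    B = "Datacenter-B"


def read_write_sites(preferred: Site, inter_site_link_up: bool) -> set[Site]:
    """Sites with read-write access to a distributed datastore (simplified)."""
    if inter_site_link_up:
        # "Write anywhere": hosts in both sites read and write locally and
        # VPLEX replicates the writes to the other site.
        return {Site.A, Site.B}
    # Link lost: only the preferred site (site affinity / site bias) keeps the
    # device online; the other site suspends it to avoid data corruption.
    return {preferred}


print(read_write_sites(Site.A, inter_site_link_up=False))  # {<Site.A: 'Datacenter-A'>}
```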

vCloud Director

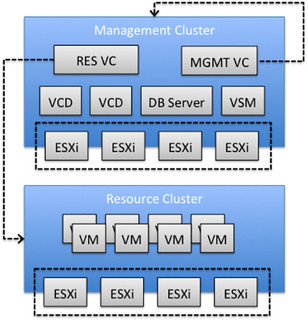

VMware vCloud Director infrastructures are typically implemented and architected using a separate management and resource environment. This concept is described in-depth in the VMware vCloud Architecture Toolkit (vCAT).

- The Management Cluster contains the elements required to operate and manage the vCloud Director environment. This typically includes vCloud Director cells, vCenter Server(s) (used for resource clusters), vCenter Chargeback, vCenter Orchestrator, vShield Manager and one or more database servers.

- The Resource Cluster represents dedicated resources for end-user consumption. Each resource group consists of VMware ESXi hosts managed by a vCenter Server, and is under the control of vCloud Director. vCloud Director can manage the resources of multiple clusters, resource pools and vCenter Servers.

This separation is primarily recommended to facilitate quicker troubleshooting and problem resolution. Management components are strictly contained in a relatively small and manageable cluster. Running management components on a large cluster with mixed workloads can make troubleshooting difficult and time-consuming, and makes the environment more complex to operate.

Separation of function also enables consistent and transparent management of infrastructure resources, critical for scaling vCloud Director environments. It increases upgrade flexibility because management cluster upgrades are not tied to resource group upgrades and it prevents security attacks or intensive provisioning activities from affecting management component availability.

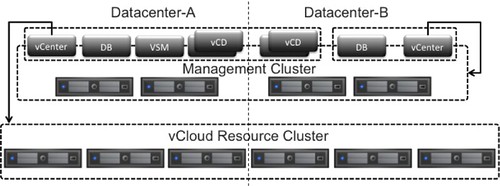

The following diagram depicts this scenario.

Management Cluster Components

The management cluster hosts all the necessary vCloud infrastructure components. In our scenario the Management Cluster contains at a minimum the following virtual machines:

- Two vCenter Server instances

- One vCenter Server instance for Management

- One vCenter Server instance for Cloud Resources

- One vCenter Server database

- One vCloud Director database

- Two vCloud Director cells

- One vShield Manager

It should be noted that the management vCenter Server instance runs as a virtual machine in the cluster it manages; the black arrow going from the “MGMT VC” (Management vCenter Server instance) in the diagram above depicts this.

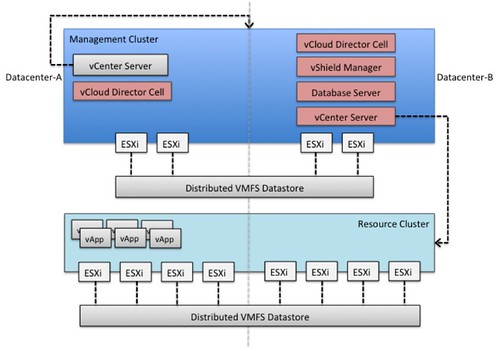

vCloud Director on a vMSC infrastructure

In this section we will describe the architecture deployed for this case study. We will also discuss some of the basic configuration and behavior of the various vSphere features; for an in-depth explanation of each feature, please refer to the vSphere 5.1 Availability Guide and the vSphere 5.1 Resource Management Guide. From an end-user perspective, and in many cases also from an administrator’s perspective, a stretched environment looks and feels like a single-site deployment. In reality these are two geographically separated sites, as depicted by the dotted line used as a divider in the diagram below. In this diagram the vCenter Server in “Datacenter-A” manages the “Management Cluster” while the vCenter Server in “Datacenter-B” manages the “Resource Cluster”.

Before discussing any of the individual components and the design considerations associated with protecting these we want to enumerate some failure scenarios. A better understanding of the various possible failure scenarios will provide the information necessary for the various design considerations.

Failure Scenarios

There are many failures that can be introduced in clustered systems, but in a properly architected environment, HA, DRS and the storage subsystem will be unaware of many of these. We will not discuss the low-impact failures, like the failure of a single network cable or the failure of a single host, as they are explained in-depth in the documentation provided by the storage vendor of your chosen solution. We will briefly discuss the following “common” failure scenarios:

- Datacenter partition

- Full storage failure in Datacenter-A

- Full compute failure in Datacenter-A

- Loss of Datacenter-A

Datacenter partition

In this scenario the network between sites has failed, causing a datacenter partition; by this we mean that all inter-site connections, from both a storage and a network perspective, have failed. Depending on where virtual machines reside, some of them may need to be restarted. In a VPLEX environment each LUN has a storage site affinity configured, known as the preferred site, ensuring that a LUN belongs either to Datacenter-A or to Datacenter-B, but never to both, when the VPLEX systems have lost connection with each other. The reason for this is to prevent data corruption and data consistency issues. When a datacenter partition occurs, any given datastore will only be accessible in one of the datacenters.

As an example, Datastore-A has affinity with Datacenter-A. When there is a datacenter partition, Datacenter-B will lose connectivity with the datastore as VPLEX will suspend the device at that site, while Datacenter-A will remain connected and continue serving IO to that datastore.

Virtual machines stored on a datastore with affinity to Datacenter-A and running on a host in Datacenter-A are not impacted. Virtual machines stored on a datastore with affinity to Datacenter-A and running on a host in Datacenter-B are restarted in Datacenter-A.
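The partition behavior described above can be expressed as a simple rule per virtual machine. The following Python sketch is illustrative only: the `VM` type, the VM names and the `misaligned` helper are hypothetical, and it encodes the two outcomes stated above rather than modeling how vSphere HA detects the condition. The same comparison also flags the virtual machines targeted by the recommendation that follows.

```python
from dataclasses import dataclass


@dataclass
class VM:
    name: str
    running_in: str          # site of the host currently running the VM
    datastore_affinity: str  # preferred site of the VM's datastore


def partition_outcome(vm: VM) -> str:
    """Expected effect of a full datacenter partition on one VM."""
    if vm.running_in == vm.datastore_affinity:
        return "not impacted"
    # The datastore is suspended on the host's side of the partition, so the
    # VM is restarted in the site that still owns the datastore.
    return f"restarted in {vm.datastore_affinity}"


def misaligned(vms: list[VM]) -> list[str]:
    """Names of VMs running outside the site their datastore has affinity with."""
    return [vm.name for vm in vms if vm.running_in != vm.datastore_affinity]


vms = [
    VM("app-01", running_in="Datacenter-A", datastore_affinity="Datacenter-A"),
    VM("app-02", running_in="Datacenter-B", datastore_affinity="Datacenter-A"),
]
for vm in vms:
    print(vm.name, "->", partition_outcome(vm))
print(misaligned(vms))  # ['app-02']
```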

Recommended: Ensure virtual machines reside in the site they have datastore affinity with, using VM-Host affinity rules, to avoid unneeded downtime. Additionally, consult your storage vendor’s recommendations for site-to-site link configurations. Resiliency should also be built into your WAN connection design. Ideally you will be using diversely routed links and physically separate channels, with the links entering each site via different entry points and terminating in different cabinets within each datacenter. Always validate that these are actually being provided if you use third parties for these facilities.

Full storage failure in Datacenter-A

VPLEX Notes: In this example storage failure refers to the backend storage device(s) connected to the VPLEX cluster. Customers who are deploying VPLEX Metro as used in our lab should note that if only the backend storage fails in Datacenter-A but the VPLEX cluster remains online then hosts in Datacenter-A will not be impacted as the datastores are still accessible via the VPLEX cluster. (Please refer to the VPLEX whitepaper covering all failure scenarios for more information)

Recommended: Where possible, ensure “active / standby” or “active / active” solutions are implemented to avoid downtime, such as vCenter Single Sign-On deployed in a high availability configuration.

Full compute failure in Datacenter-A

In this scenario the full compute layer in Datacenter-A has failed resulting in the need to restart all virtual machines in Datacenter-B. The restart of virtual machines is typically initiated within 30 seconds by vSphere HA. It should be noted that the initiation of a restart does not equal the completion of a full boot including initialization of all services / applications.

Recommended: Where possible, ensure “active / standby” or “active / active” application architectures are implemented to avoid downtime during a full compute failure in one of the datacenters. Where this is not possible, ensure the correct start-up order is configured to minimize the risk of virtual machines restarting in the incorrect order. (Note: There is no guarantee that virtual machine boots are completed in a specific order when managed by HA.)

Loss of Datacenter-A

In this scenario Datacenter-A is lost entirely. As all datastores are available locally, all virtual machines can be restarted automatically by the hosts in Datacenter-B through the use of vSphere HA. This type of failure is typically detected and acted on by vSphere HA within 30 seconds.

Recommended: Where possible, ensure “active / standby” or “active / active” application architectures are implemented to avoid downtime during a full datacenter failure. Where this is not possible, ensure the correct start-up order is configured to minimize the risk of virtual machines restarting in the incorrect order. (Note: There is no guarantee that virtual machine boots are completed in a specific order when managed by HA.)

Most storage providers will strongly recommend deploying a “witness” appliance to ensure datastores remain online at the surviving site. Taking VPLEX as an example, it is strongly recommended to run the “witness” appliance in a third site (failure domain). The “witness” basically establishes a 3-way VPN between itself and the two sites (and their respective VPLEX clusters). In the event of a site loss, the “witness” can determine the state of the failed site and inform the surviving site of this state. Why is this useful? If you have lost a site, then LUNs whose preference rule was set to that site will, by design, be in a suspended state at the surviving site. Just like hosts in an HA cluster, without some kind of arbitration technique the surviving site has no way of knowing whether the other site is simply isolated or actually down. This is where the “witness” steps in. Once the site state is determined, the “witness” can override the preference rules, meaning that LUNs in a suspended state (due to their preference being set to the downed site) can be brought online at the surviving site. It is important to note that this is the only condition that causes the “witness” to make this decision. In all other failure scenarios the preference rules take priority.
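The witness decision logic described above can be summarized in a few lines. This Python sketch is a simplified model under our own assumptions, not VPLEX Witness code: the function name and the two failure labels are hypothetical, and real arbitration involves more states than the two cases shown.

```python
def datastore_online_at(site: str, preferred: str, failure: str,
                        witness_deployed: bool) -> bool:
    """Whether a distributed datastore is online at `site` after a failure.

    failure: "partition" (inter-site link lost, both sites still running) or
             "peer_site_loss" (the other site is actually down).
    """
    if failure not in ("partition", "peer_site_loss"):
        raise ValueError(f"unknown failure type: {failure}")
    if site == preferred:
        return True  # the preference rule already favors this site
    if failure == "peer_site_loss" and witness_deployed:
        # Only a confirmed site loss lets the witness override the preference rule.
        return True
    # Partition, or site loss without a witness: the preference rule wins and
    # the device stays suspended at this site.
    return False


# A datastore preferring Datacenter-A, seen from Datacenter-B:
print(datastore_online_at("Datacenter-B", "Datacenter-A", "partition", witness_deployed=True))       # False
print(datastore_online_at("Datacenter-B", "Datacenter-A", "peer_site_loss", witness_deployed=True))  # True
```

The first call shows why the witness never overrides preference rules during a partition: both sites are still alive, so bringing the LUN online in both would risk the very corruption the preference rule prevents.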

The sum of the parts

Within vCloud Director environments a full end-user experience depends on various components being available. This raises the question of how you are going to protect the individual components against failures, and what the impact on your vCloud workloads is when a component is down. Within a vCloud Director environment, management component failures typically do not lead to decreased availability of the vCloud workloads. Individual component failures could, however, result in decreased availability of the vCloud tenant portal or the vCloud administrator portal.

It should be noted that many of the vCloud Director management components are dependent on each other. For example, vCenter Server requires Single Sign-On to be available in order to allow a user to log in, and vCloud Director requires vShield Manager to be available in order to deploy new vShield Edge services.
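One way to reason about these dependencies is to treat them as a directed graph and ask which components are affected when one of them fails. The Python sketch below is a hypothetical model, not a VMware tool; its edges only encode dependencies mentioned in this article (SSO, the Inventory Service and the database for vCenter Server, the database for vCloud Director, and vShield Manager for deploying new vShield Edge services), so a real environment will have more. Running vCloud workloads are intentionally absent, reflecting that management component failures typically do not impact them.

```python
from collections import deque

# component -> components it depends on (only dependencies named in this article)
DEPENDS_ON = {
    "vCenter Server": {"SSO", "Inventory Service", "Database Server"},
    "vCloud Director": {"Database Server"},
    "New vShield Edge deployments": {"vCloud Director", "vShield Manager"},
}


def impacted_by(failed: str) -> set[str]:
    """All components that directly or transitively depend on `failed`."""
    impacted = set()
    queue = deque([failed])
    while queue:  # breadth-first walk over reverse dependencies
        current = queue.popleft()
        for component, deps in DEPENDS_ON.items():
            if current in deps and component not in impacted:
                impacted.add(component)
                queue.append(component)
    return impacted


print(sorted(impacted_by("Database Server")))
# ['New vShield Edge deployments', 'vCenter Server', 'vCloud Director']
print(sorted(impacted_by("SSO")))  # ['vCenter Server']
```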

Depending on the service level agreement around tenant portal availability specific components might require additional protection. The following section will discuss design / implementation considerations for each of the components.

Management Component Level Protection

Each vCloud Director environment consists of many moving parts. For each of these components there are different options for protecting them against failure. The first question to answer, before any decisions can be made, is how critical the vCloud Director portal is for your customers. We cannot answer this question for you, hence all recommendations below should be considered guidelines or recommended practices. Before implementing a similar environment, we recommend evaluating which recommended practices or guidelines meet your requirements and which do not, and adopting or adapting them accordingly.

In each of the respective sections, availability or fault tolerance options are summarized. Recommended practices are provided on the assumption that the service level agreement is based on vCloud workload availability, and were developed with an operational simplicity first mindset.

vCloud Director

vCloud Director was developed with a scale-out architecture in mind. vCloud Director can easily scale by deploying new instances, or “cells” as they are often referred to. Simply put, a cell is a virtual machine running the vCloud Director software. These cells are typically fronted by a load balancer and all connect to the same database. Each of these cells can handle load and present tenants with the vCloud Director portal. vCloud Director cells are stateless and simple to deploy and manage, at a low cost.
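Because cells are stateless and share one database, the load balancer in front of them only needs to send each request to any healthy cell. The Python sketch below illustrates that idea with a simple round-robin that skips unhealthy cells; the `Cell` type, the cell names and the round-robin policy are our assumptions, and in practice this is the job of whichever load balancer you place in front of the cells.

```python
from dataclasses import dataclass


@dataclass
class Cell:
    name: str
    site: str
    healthy: bool = True


class CellLoadBalancer:
    """Round-robin over healthy vCloud Director cells (illustrative only)."""

    def __init__(self, cells: list[Cell]):
        self.cells = list(cells)
        self._next = 0

    def pick(self) -> Cell:
        # Any healthy cell can serve the request: cells are stateless and
        # all connect to the same database.
        for _ in range(len(self.cells)):
            cell = self.cells[self._next]
            self._next = (self._next + 1) % len(self.cells)
            if cell.healthy:
                return cell
        raise RuntimeError("no healthy vCloud Director cell available")


cells = [Cell("vcd-a1", "Datacenter-A"), Cell("vcd-a2", "Datacenter-A"),
         Cell("vcd-b1", "Datacenter-B"), Cell("vcd-b2", "Datacenter-B")]
lb = CellLoadBalancer(cells)
for cell in cells:  # simulate the loss of Datacenter-A
    if cell.site == "Datacenter-A":
        cell.healthy = False
print([lb.pick().name for _ in range(4)])  # ['vcd-b1', 'vcd-b2', 'vcd-b1', 'vcd-b2']
```

The example shows why cells in both sites matter: after losing Datacenter-A, the portal is still served by the cells in Datacenter-B.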

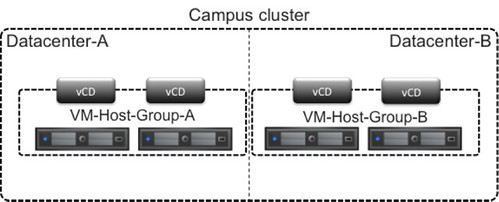

VMware recommends that a vCloud Director cell be available at all times. Because of the low cost of deploying new instances and the simplicity of managing them, it is recommended to deploy a minimum of two vCloud Director cells in each site.

In order to ensure vCloud Director cells reside in each of the “sites” of your campus cluster infrastructure, VMware recommends leveraging vSphere DRS VM-Host affinity groups. It should be noted that VMware recommends using “should” rules to ensure vCloud Director cells are restarted by vSphere HA when a full site failure has occurred. More details around VM-Host affinity groups and best practices can be found in the vSphere Metro Storage Cluster white paper.
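The reason for preferring “should” rules can be illustrated with a simplified model of where vSphere HA may restart a VM after a site failure. This Python sketch reflects our understanding that HA honors mandatory (“must”) VM-Host rules during failover but not preferential (“should”) rules; the function, host names and inputs are hypothetical, and it ignores admission control and capacity.

```python
def ha_restart_candidates(hosts_by_site: dict[str, list[str]], rule_site: str,
                          rule_type: str, failed_site: str) -> list[str]:
    """Hosts on which vSphere HA may restart a VM bound to `rule_site`'s host group."""
    surviving = {site: hosts for site, hosts in hosts_by_site.items()
                 if site != failed_site}
    if rule_type == "should":
        # Preferential rule: HA may use any surviving host; DRS moves the VM
        # back to its preferred site once that site is available again.
        return [h for hosts in surviving.values() for h in hosts]
    if rule_type == "must":
        # Mandatory rule: only hosts in the VM's own host group qualify.
        return list(surviving.get(rule_site, []))
    raise ValueError(f"unknown rule type: {rule_type}")


hosts = {"Datacenter-A": ["esx-a1", "esx-a2"], "Datacenter-B": ["esx-b1", "esx-b2"]}
# A vCloud Director cell pinned to Datacenter-A, which has just failed:
print(ha_restart_candidates(hosts, "Datacenter-A", "should", "Datacenter-A"))  # ['esx-b1', 'esx-b2']
print(ha_restart_candidates(hosts, "Datacenter-A", "must", "Datacenter-A"))    # []
```

With a “must” rule the cell would have no eligible host left, which is exactly the situation the recommendation avoids.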

In the scenario where more than four vCloud Director cells are required, it is recommended to ensure each site contains 50% of the deployed cells. In an eight-cell deployment this would result in four vCloud Director cells in Datacenter-A and four in Datacenter-B, using the appropriate VM-Host affinity groups.
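The placement rule above (a minimum of two cells per site, split evenly) can be sketched as a small helper that generates the membership of each site's VM-Host affinity group. This is a hypothetical Python illustration; the cell naming scheme is made up, and with an odd number of cells one site necessarily ends up with one extra cell.

```python
def place_cells(total_cells: int,
                sites: tuple[str, ...] = ("Datacenter-A", "Datacenter-B"),
                min_per_site: int = 2) -> dict[str, list[str]]:
    """Distribute vCloud Director cells evenly across sites (round-robin)."""
    if total_cells < min_per_site * len(sites):
        raise ValueError(f"need at least {min_per_site * len(sites)} cells, got {total_cells}")
    placement = {site: [] for site in sites}
    for i in range(total_cells):
        # Alternate sites so each VM-Host group receives half of the cells.
        placement[sites[i % len(sites)]].append(f"vcd-cell-{i + 1:02d}")
    return placement


groups = place_cells(8)
print({site: len(members) for site, members in groups.items()})
# {'Datacenter-A': 4, 'Datacenter-B': 4}
```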

The following diagram depicts the above recommendation.

vCenter Server (cloud resources and management)

vCenter Server can be protected through various methods. Availability of vCenter can be increased by leveraging vSphere HA and out-of-the-box functionality like vSphere HA VM Monitoring. The benefit of these solutions is that they are simple to configure and manage.

Another method of protecting vCenter Server is through vCenter Server Heartbeat. vCenter Server Heartbeat is a more intelligent solution than vSphere HA in that it can detect application, service and guest OS outages using heartbeat mechanisms and respond to them. It uses a passive server instance to provide rapid failover and failback of vCenter Server and its components to a standby instance, either onsite or in a remote datacenter. Heartbeat provides the same level of protection for the vCenter Server database, monitoring and protecting the Microsoft SQL Server database associated with vCenter Server, even if it is installed on a separate server.

It is key to realize that neither of these solutions is “non-disruptive”. Both vSphere HA and vCenter Server Heartbeat will cause a slight disruption. vSphere HA will restart your VM when a host has failed, and vSphere HA VM Monitoring can restart the guest OS when the guest OS has failed. Although vCenter Server Heartbeat is a more intelligent solution, it is still disruptive and reactive.

Taking the provided failure scenario examples into account, as well as the associated complexity of operational procedures, VMware recommends leveraging vSphere HA and VM Monitoring for the protection of vCenter Server for both the management and the cloud resource cluster. Additionally, vSphere HA Application Monitoring could be used to monitor individual services if desired.

In order to prevent any dependencies between the management vCenter Server instance and the vCloud resources vCenter Server, VMware recommends using a separate database server for each. Depending on the size of the management cluster, another possibility is to use a locally installed database service on the management vCenter Server instance.

vCenter Server consists of multiple services. These services are required to offer full functionality from a vSphere administrative perspective, and in some cases they are also required by vCloud Director. In the following section we will discuss these components and provide guidance around availability of the total solution.

vCenter Server services

vCenter Server consists of four key components:

- vCenter Server aka VPXD

- vCenter Server Single Sign On (SSO)

- vCenter Server Inventory Service

- vCenter Server Web Client

vCenter Server (VPXD) relies on the availability of SSO and the Inventory Service. The vCenter Server Web Client offers an alternative to the Windows-installable vSphere Client; it is not required but is recommended, as many new features are only exposed, and thus configurable, through the vSphere Web Client. vCenter login functionality is dependent on SSO availability. SSO can be deployed in different types of configurations, of which the basic deployment and the high availability deployment are the most common.

The vCenter Server services should be viewed, and treated, as a chain of services providing a single service. We realize that from a scalability perspective, separating functionality and giving each service its own virtual machine could be beneficial. However, separating services increases the probability of an outage caused by the unavailability of any one of those virtual machines.
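A quick calculation shows why splitting the chain across virtual machines hurts. Assuming, purely for illustration, that every virtual machine is independently available 99.9% of the time, a service that needs all n virtual machines is only available 0.999^n of the time. The Python sketch below uses that simple series model; the figures are hypothetical and ignore shared failure causes such as a host or site outage taking down several virtual machines at once.

```python
HOURS_PER_YEAR = 24 * 365


def chain_availability(per_vm: float, vm_count: int) -> float:
    """Availability of a service that needs all `vm_count` VMs (independent failures)."""
    return per_vm ** vm_count


def annual_downtime_hours(availability: float) -> float:
    """Expected hours of unavailability per year."""
    return (1.0 - availability) * HOURS_PER_YEAR


# One VM hosting VPXD, SSO and the Inventory Service vs. one VM per service.
for n in (1, 3):
    a = chain_availability(0.999, n)
    print(f"{n} VM(s): availability {a:.6f}, ~{annual_downtime_hours(a):.1f} h downtime/year")
```

Under these assumptions the three-VM layout roughly triples the expected downtime of the chain, which is the effect described above.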

In a single vCloud Resource vCenter environment VMware recommends keeping vCenter Server (VPXD), SSO, Inventory Service and the Web Client together to minimize operational complexity and dependency between virtual machines and the associated services. The Web Client can be separated from vCenter Server, SSO and Inventory Services. The Web Client allows for a scale-out architecture and as such multiple instances can be installed. This will require some form of load balancing services to function correctly.

Database Server

It is recommended to use an external database for both vCloud Director and vCenter Server. The question that arises is whether this database should be clustered. A clustered database service will increase the availability of the overall solution. At the time of writing, however, not all components that require a database support a clustered solution. One option is to separate the services that do support a clustered database solution from those that do not. Considering the complexity of management and the additional overhead, this is not recommended.

In addition, with a clustered database solution the operational procedures required to respond to the various described failure scenarios would be complex and sensitive to human error. VMware recommends deploying a non-clustered database architecture in a campus environment, keeping the database solution architecture simple and easy to manage.

VMware recommends the use of VM-Host affinity groups to ensure the database server and all vCloud management components reside in the same datacenter. This avoids unnecessary tenant and admin portal service disruption during one of the described failure scenarios, as both vCenter Server (and its components) and vCloud Director are dependent on the database server. It should be noted that tenant or admin portal outages do not impact the availability of your vCloud workloads.

vShield Manager

vShield Manager provides security and network services for vCloud Director. vShield Manager comes prepackaged as a virtual machine and has a one-to-one relationship with vCenter Server. This means that if your vCloud Director environment leverages resources from four vCenter Server instances, each of these instances will have a vShield Manager instance linked to it, resulting in four vShield Manager instances.

VMware recommends protecting vShield Manager through vSphere HA (including VM Monitoring). VM Monitoring enables automatic restarts when the guest OS fails. The vShield Manager virtual machine should reside in the same site as its vCenter Server, using a VM-Host affinity group.

Solution overview

Stretched vCloud Director infrastructures add a level of flexibility but also inherently a level of complexity. In the above sections we have discussed the various recommendations with regards to the management components and availability. The following diagram depicts all these recommendations in a single view.

Shown are two sites, Datacenter-A and Datacenter-B. In Datacenter-A all vCloud management components are hosted, except for two out of the four vCloud Director cells. All components shown in Datacenter-A are part of a VM-Host affinity group to ensure that during normal operations they reside in Datacenter-A. vCenter Server (and the associated database server) which manages the Management cluster has affinity with Datacenter-B and should be part of the VM-Host affinity group of Datacenter-B. In this datacenter two of the four vCloud Director cells are deployed to provide maximum availability and fast recovery after a failure event.
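The placement shown in the diagram can also be written down as data and checked, for example after maintenance, to confirm the VM-Host affinity groups still hold. The Python sketch below is hypothetical: the component names are illustrative labels for the virtual machines described in this article, and the co-location pairs are our reading of the recommendations above (vShield Manager with its vCenter Server, the databases with the management components they serve, and the management vCenter Server with its database server).

```python
PLACEMENT = {
    "Resource vCenter Server": "Datacenter-A",
    "Resource vCenter database": "Datacenter-A",
    "vCloud Director database": "Datacenter-A",
    "vShield Manager": "Datacenter-A",
    "vcd-cell-01": "Datacenter-A",
    "vcd-cell-02": "Datacenter-A",
    "Management vCenter Server": "Datacenter-B",
    "Management vCenter database": "Datacenter-B",
    "vcd-cell-03": "Datacenter-B",
    "vcd-cell-04": "Datacenter-B",
}

# Pairs of VMs that should share a site (enforced via VM-Host affinity groups).
CO_LOCATE = [
    ("vShield Manager", "Resource vCenter Server"),
    ("Resource vCenter Server", "Resource vCenter database"),
    ("vCloud Director database", "Resource vCenter Server"),
    ("Management vCenter Server", "Management vCenter database"),
]


def placement_issues(placement: dict[str, str],
                     co_locate: list[tuple[str, str]]) -> list[str]:
    """Report split co-location pairs and an uneven vCloud Director cell split."""
    issues = [f"{a} ({placement[a]}) and {b} ({placement[b]}) are in different sites"
              for a, b in co_locate if placement[a] != placement[b]]
    sites = sorted(set(placement.values()))
    cell_counts = [sum(1 for name, site in placement.items()
                       if name.startswith("vcd-cell") and site == s) for s in sites]
    if max(cell_counts) - min(cell_counts) > 1:
        issues.append(f"vCloud Director cells unevenly split: {dict(zip(sites, cell_counts))}")
    return issues


print(placement_issues(PLACEMENT, CO_LOCATE))  # []
```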

vCloud workload considerations

Stretched vSphere architectures are typically deployed to enable workload mobility between datacenters and provide disaster avoidance capabilities. Often neglected is the potential operational impact of such an architecture and the potential network overhead.

vCloud Director provides a self-service portal, but primarily it is an abstraction layer that simplifies the provisioning process of new workloads. As a result, some of the advanced vSphere infrastructure configuration options, like vSphere DRS affinity rules, are not exposed in the vCloud Director self-service portal, making alignment of compute and storage affinity a manual vCenter Server workflow. On the management layer we proposed leveraging vSphere DRS affinity rules to align storage and compute affinity for maximum uptime. At the time of writing, however, the use of vSphere DRS affinity rules for vCloud workloads is not supported in a vCloud Director environment, and as such they cannot be leveraged to align compute and storage affinity.

vCloud Director tenants rely heavily on vShield Edge for network services like DHCP, NAT, firewalling and more. An Edge device is typically deployed in an HA configuration. This HA configuration is active/standby, meaning that only one of the two deployed devices actively provides these services and handles traffic. In a stretched vCloud environment it is uncertain in which site a given workload resides. As such, it could be that all vCloud workloads reside in Datacenter-A while the active vShield Edge device resides in Datacenter-B. In that scenario, any traffic that needs to be inspected by the vShield Edge device would go to Datacenter-B first. This so-called traffic tromboning could increase datacenter interconnect utilization and should be accounted for, carefully monitored and managed.
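A rough way to size the tromboning effect is to count how often Edge-inspected traffic crosses the datacenter interconnect. The Python sketch below is a back-of-the-envelope model built on our own assumptions: every flow travels from its source to the active Edge and then on to its destination, each leg with an endpoint in a different site than the active Edge crosses the interconnect once, and the traffic figures are made up.

```python
from dataclasses import dataclass


@dataclass
class Flow:
    src_site: str
    dst_site: str   # for external traffic: the site of the uplink used (assumption)
    mbps: float


def dci_load_mbps(flows: list[Flow], active_edge_site: str) -> float:
    """Interconnect bandwidth consumed by traffic that must pass the active Edge."""
    total = 0.0
    for f in flows:
        # One crossing per leg (source -> Edge, Edge -> destination) that
        # starts or ends outside the active Edge's site.
        crossings = (f.src_site != active_edge_site) + (f.dst_site != active_edge_site)
        total += f.mbps * crossings
    return total


# Two tenant networks in Datacenter-A routed through the Edge (hypothetical 100 Mbps).
flows = [Flow("Datacenter-A", "Datacenter-A", 100.0)]
print(dci_load_mbps(flows, active_edge_site="Datacenter-A"))  # 0.0
print(dci_load_mbps(flows, active_edge_site="Datacenter-B"))  # 200.0
```

In this model, traffic that never needed to leave Datacenter-A consumes twice its own bandwidth on the interconnect once the active Edge sits in the other site.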

Conclusion

When properly architected and operated, stretched vSphere and vCloud architectures are excellent solutions that increase resiliency and offer inter-site workload mobility. Keeping your management vCloud environment simple will allow you to minimize downtime during failure scenarios and reduce the complexity of operational processes. This post has highlighted design considerations, the importance of site affinity and the role that vSphere DRS affinity rules play in this process. Customers must ensure that the logic mandated by these rules and groups is enforced over time if the reliability and predictability of the cluster are to be maintained.

Reference material

The following Knowledge Base articles and documents have been used to develop this post. It is recommended to read these.
