We (VMware PSO) had a discussion yesterday about whether it is supported to have MSCS (Microsoft Cluster Service) VMs in an HA/DRS cluster with both HA and DRS set to disabled. I know many people struggle with this because, in a way, it doesn't make sense. In short: no, this is not supported. MSCS VMs can't be part of a VMware HA/DRS cluster, even if HA and DRS are set to disabled.

I guess you would like to have proof:

For ESX 3.5:

http://www.vmware.com/pdf/vi3_35/esx_3/r35u2/vi3_35_25_u2_mscs.pdf

Page 16 – “Clustered virtual machines cannot be part of VMware clusters (DRS or HA).”

For vSphere:

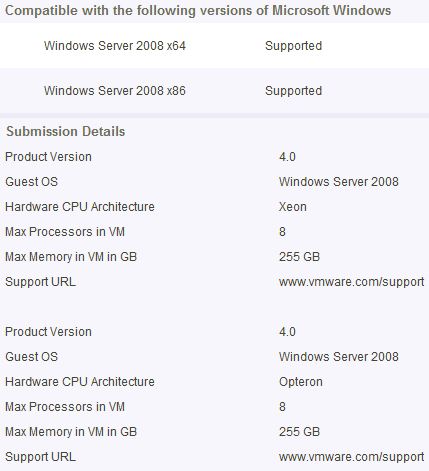

http://www.vmware.com/pdf/vsphere4/r40/vsp_40_mscs.pdf

Page 11 – “The following environments and functionality are not supported for MSCS setups with this release of vSphere:

Clustered virtual machines as part of VMware clusters (DRS or HA).”

As you can see, certain restrictions apply. Make sure to read the above documents for all the details.
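If you want to sanity-check an inventory against this rule, here is a minimal sketch in plain Python. It assumes you have already exported, by whatever means you prefer, a list of VMs with a flag marking MSCS nodes and the name of the VMware cluster (if any) their host belongs to. The `VM` record and `find_unsupported_mscs_vms` are hypothetical names made up for illustration; they are not part of any VMware tool or API.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class VM:
    """Hypothetical inventory record; fields are assumptions for this sketch."""
    name: str
    is_mscs_node: bool
    cluster: Optional[str]  # name of the VMware cluster, or None for a standalone host


def find_unsupported_mscs_vms(vms: List[VM]) -> List[VM]:
    # Per the quoted guides, cluster membership alone is the problem,
    # so the HA/DRS enabled/disabled state is deliberately not considered.
    return [vm for vm in vms if vm.is_mscs_node and vm.cluster is not None]


# Made-up example data
inventory = [
    VM("sql-node-1", is_mscs_node=True, cluster="Prod-Cluster"),
    VM("sql-node-2", is_mscs_node=True, cluster=None),
    VM("web-01", is_mscs_node=False, cluster="Prod-Cluster"),
]

for vm in find_unsupported_mscs_vms(inventory):
    print(f"{vm.name}: MSCS node in VMware cluster '{vm.cluster}' (not supported)")
```

Note that the check only looks at whether the VM sits in a cluster at all, which mirrors the wording in both documents.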