You must have been wondering the same thing after reading the introduction to Virtual SAN. Last week at VMworld I received many questions on this topic, so I figured it was time for a quick blog post. How do you know where a storage object resides with Virtual SAN when you are striping across multiple disks and using multiple hosts for availability? Yes, I know this is difficult to grasp. Even with just multiple hosts for resiliency, where are things placed? The diagram gives an idea, but only from an availability perspective (in this example “failures to tolerate” is set to 1). If you have stripe width configured for 2 disks, imagine what that would do to the picture. (Before I published this article, I spotted this excellent primer by Cormac on this exact topic…)

Luckily you can use the vSphere Web Client to figure out where objects are placed:

- Go to your cluster object in the Web Client

- Click “Monitor” and then “Virtual SAN”

- Click “Virtual Disks”

- Click your VM and select the object

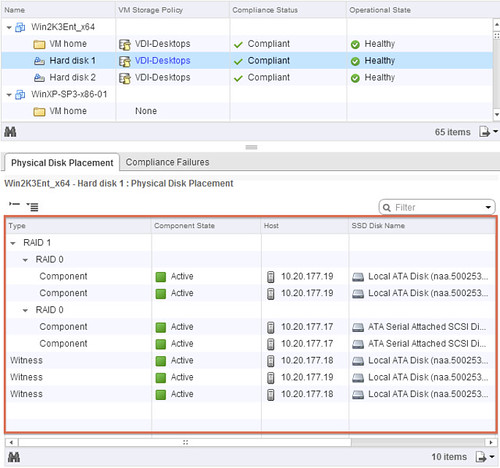

The screenshot depicts what you could potentially see. In this case the policy was configured with “1 host failure to tolerate” and “disk striping set to 2”. I think the screenshot explains it pretty well, but let's go over it.

The “Type” column shows what each entry is: a “witness” (no data) or a “component” (data). The “Component state” column shows whether it is currently available (active). The “Host” column shows which host it currently resides on, and the “SSD Disk Name” column shows which SSD is used for read caching and write buffering. Further to the right, the “Non-SSD Disk Name” column shows which magnetic disk stores the data.

Now in our example you can see that “Hard disk 2” is configured as RAID 1 with RAID 0 immediately underneath. The “RAID 1” refers to availability, in this case “component failures”, and the “RAID 0” is all about disk striping. As we configured “component failures” to 1 we see two copies of the data, and because we said we would like to stripe across two disks for performance, you see a “RAID 0” underneath each copy. Note that this is just an example to illustrate the concept; it is not a best practice or recommendation, as that should be based on your requirements! Last but not least we see the “witness”, which is used in case of a host failure. If host 10.20.177.19 were to fail or become isolated from the network, the witness would be used by host 10.20.177.17 to claim ownership. Makes sense, right?
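To make the “RAID 1 on top of RAID 0” layout a bit more concrete, here is a minimal Python sketch of the tree described above. It is only an illustration, not how Virtual SAN places data internally: the names `Node`, `build_layout`, `count_nodes` and `render`, and the hosts `host-a` and `host-b`, are made up for this example. It assumes each replica keeps all of its stripes on one host, and it leaves witnesses out because their number and placement are decided by Virtual SAN.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Node:
    """One entry in the layout tree: a RAID node or a data component."""
    kind: str                       # "RAID 1", "RAID 0" or "Component"
    host: str = ""                  # only set for components
    children: List["Node"] = field(default_factory=list)


def build_layout(failures_to_tolerate: int, stripe_width: int,
                 hosts: List[str]) -> Node:
    """Build a RAID 1 tree with one RAID 0 stripe set per replica."""
    replicas = failures_to_tolerate + 1   # 1 failure to tolerate -> 2 copies
    if len(hosts) < replicas:
        raise ValueError("need at least one host per replica")
    root = Node("RAID 1")
    for replica_index in range(replicas):
        stripe_set = Node("RAID 0")
        for _ in range(stripe_width):
            # Simplification (assumption): all stripes of a replica share a host.
            stripe_set.children.append(Node("Component", hosts[replica_index]))
        root.children.append(stripe_set)
    return root


def count_nodes(node: Node, kind: str) -> int:
    """Count the nodes of a given kind in the tree."""
    own = 1 if node.kind == kind else 0
    return own + sum(count_nodes(child, kind) for child in node.children)


def render(node: Node, depth: int = 0) -> List[str]:
    """Return the tree as indented text lines."""
    label = node.kind + (f" on {node.host}" if node.host else "")
    lines = ["  " * depth + label]
    for child in node.children:
        lines.extend(render(child, depth + 1))
    return lines


layout = build_layout(failures_to_tolerate=1, stripe_width=2,
                      hosts=["host-a", "host-b"])
print("\n".join(render(layout)))
print("data components:", count_nodes(layout, "Component"))
```

With “failures to tolerate” set to 1 and a stripe width of 2, the sketch yields one RAID 1 node, two RAID 0 nodes and four data components, which matches the shape of the example.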

Hope this helps you understand Virtual SAN object location a bit better… When I have the time, I will try to dive a bit more into the details of Storage Policy Based Management.

I was wondering: what about the case where the host owning the disks is one server and the VM is executing on a different vSphere host? What would happen then? Would the VMDK objects eventually be relocated to where the VM workload is, or would the workload be moved to where the VMDK objects are? Or would such a scenario just not happen?

Data doesn’t move with VSAN. Objects reside where they were initially provisioned; only when a failure has occurred and a new object needs to be created will data end up in a different location.

So basically if you have 4 hosts, and your VM sits on a host where the VMDK object doesn’t sit then it will read/write remotely.
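As a rough illustration of that point, the Python sketch below labels each component as local or remote relative to the host the VM runs on. The function `classify_io`, the component names and the host names are hypothetical and only model the reasoning above; they are not a VSAN API.

```python
from typing import Dict


def classify_io(vm_host: str, component_hosts: Dict[str, str]) -> Dict[str, str]:
    """Label each component 'local' or 'remote' relative to the VM's host."""
    return {
        name: ("local" if host == vm_host else "remote")
        for name, host in component_hosts.items()
    }


# Hypothetical placement in a 4-host cluster: two replicas and a witness.
placement = {
    "replica-1": "host-a",
    "replica-2": "host-b",
    "witness": "host-c",
}

# The VM runs on the one host that holds none of its components.
print(classify_io("host-d", placement))
# The VM runs on a host that holds one replica.
print(classify_io("host-a", placement))
```

In the first case every component is remote, so all I/O crosses the network; in the second case one replica is local.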

as per twitter conversation: I don’t really think I’d care about where my object is as long as high availability has been taken care of in the design. I don’t know it today in my SAN, why would I want to know it now?

The only reason I could think of is degraded performance on a single disk loss as that probably will not trigger a failover. Can you elaborate on that? What if a single disk fails in a) the node my VM is running on, b) the mirror node or c) the witness node. And as VSAN is taking care of RAID, do I get the hardware failure through vCenter or do I need my hardware monitoring to take care of that?

my comment doesn’t really matter anymore. This is the SAN RAID 10 paradigm upside down! Which is not bad from a failover perspective but it does answer my initial question. Let me elaborate and tell me if I am right afterwards (you know I want to be proven wrong).

In a regular SAN we use a RAID 0 on top of multiple RAID 1 sets, so that if a disk fails, rebuilding the RAID affects only one disk in the whole pool (the one in the RAID 1). Here we have a RAID 1 as a network failover over multiple RAID 0 sets. If a single disk fails in a single node, the whole node fails, so we have a node failover by default whether it’s a single disk or a complete node failure. Again, this does not have to be bad, as it is the only way to ensure node failover.

Am I coming close?

My concern was the latencies inherent in the file objects being on one server and the workload being elsewhere. I’m guessing that the host that owns the workload would eventually cache the VM’s file objects over time?

The latency wouldn’t change that much, as you are ALWAYS writing at least once to another host because of the “network RAID 1”. You need the remote ACK to ensure reliability of the write. When completely remote it’s just 2 remote ACKs. The only thing it would change is double the bandwidth usage.
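The ACK arithmetic in that comment can be sketched in a few lines of Python. This is a simplified model with hypothetical names (`remote_acks`, `host-a` and so on): it assumes one write target per replica and counts how many of those targets sit on a host other than the one running the VM.

```python
from typing import List


def remote_acks(vm_host: str, replica_hosts: List[str]) -> int:
    """Count the replicas that must acknowledge a write over the network."""
    return sum(1 for host in replica_hosts if host != vm_host)


replicas = ["host-a", "host-b"]   # two copies, as with "failures to tolerate" = 1

print(remote_acks("host-a", replicas))   # VM sits next to one replica
print(remote_acks("host-c", replicas))   # VM sits on a host with no replica
```

Under these assumptions the count goes from 1 to 2 remote ACKs when the VM runs on a host that holds no replica, which is where the doubled bandwidth usage comes from.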

The reads on the other hand …

incidentally. i’m not sure the ability to do basic maths qualifies as test of “being human”. 1=8=9… 🙂

Why does setting “component failures” to 1 create 3 witnesses? I would expect “component failures” = 1 to create 2 replicas and 1 witness.