Frank and I have discussed this topic multiple times and it was briefly mentioned in Frank’s excellent series about over-sizing virtual machines; Zero Pages, TPS and the impact of a boot-storm. Pre-vSphere 4.1 we have seen it all happen, a host fails and multiple VMs need to be restarted. Temporary contention exists as it could take up to 60 minutes before TPS completes. Or of course when the memory pressure thresholds are reached the VMkernel requests TPS to scan memory and collapse pages if and where possible. However, this is usually already too late resulting in ballooning or compressing (if your lucky) and ultimately swapping. If it is an HA initiated “boot-storm” or for instance you VDI users all powering up those desktops at the same time, the impact is the same.

Now one of the other things I also wanted to touch on was Large Pages, as this is the main argument our competitors are using against TPS. Reason for this being that Large Pages are not TPS’ed as I have discussed in this article and many articles before that one. I even heard people saying that TPS should be disabled as most Guest OS’es being installed today are 64Bit and as such ESX(i) will back even Small Pages (Guest OS) by Large Pages and TPS will only add unnecessary overhead without any benefits… Well I have a different opinion about that and will show you with a couple of examples why TPS should be enabled.

One of the major improvements in vSphere 4.0 is that it recognizes zeroed pages instantly and collapses them. I have dug around for detailed info but the best I could publicly find about it was in the esxtop bible and I quote:

A zero page is simply the memory page that is all zeros. If a zero guest physical page is detected by VMKernel page sharing module, this page will be backed by the same machine page on each NUMA node. Note that “ZERO” is included in “SHRD”.

(Please note that this metric was added in vSphere 4.1)

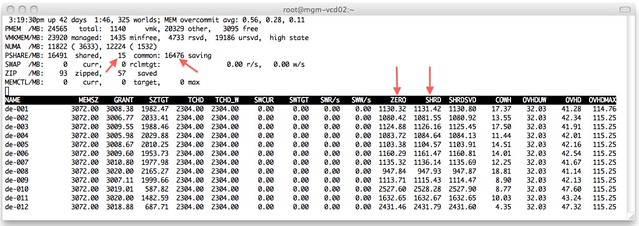

I wondered what that would look like in real life. I isolated one of my ESXi host (24GB of memory) in my lab and deployed 12 VMs with 3GB each with Windows 2008 64-Bit installed. I booted all of them up in literally seconds and as Windows 2008 zeroes out memory during boot I knew what to expect:

I added a couple of arrows so that it is a bit more obvious what I am trying to show here. On the top left you can see that TPS saved 16476MB and used 15MB to store unique pages. As the VMs clearly show most of those savings are from “ZERO” pages. Just subtract ZERO from SHRD (Shared Pages) and you will see what I mean. Pre-vSphere 4.0 this would have resulted in severe memory contention and as a result more than likely ballooning (if the balloon driver is already started, remember it is a “boot-storm”) or swapping.

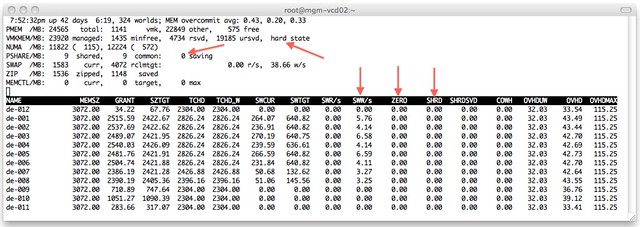

Just to make sure I’m not rambling I disabled TPS (by setting Mem.ShareScanGHz to 0) and booted up those 12 VMs again. This is the result:

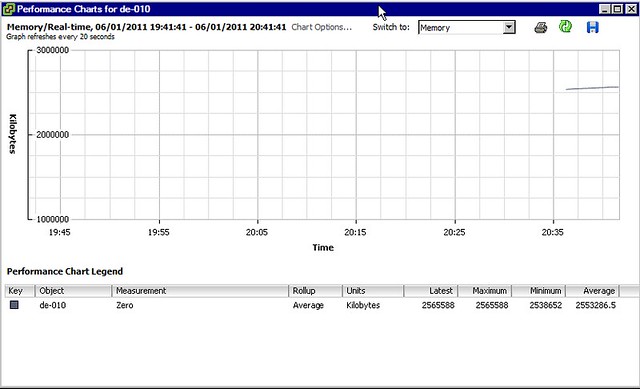

As shown at the top, the hosts status is “hard” as a result of 0 page sharing and even worse, as can be seen on a VM level, most VMs started swapping. We are talking about VMkernel swap here, not ballooning. I guess that clearly shows why TPS needs to be enabled and where and when you will benefit from it. Please note that you can also see “ZERO” pages in vCenter as shown in the screenshot below.

One thing Frank and I discussed a while back, and I finally managed to figure out, is why after boot of a Windows VM the “ZERO” pages still go up and fluctuate so much. I did not know this but found the following explanation:

There are two threads that are specifically responsible for moving threads from one list to another. Firstly, the zero page thread runs at the lowest priority and is responsible for zeroing out free pages before moving them to the zeroed page list.

In other words, when an application / service or even Windows itself “deprecates” the page it will be zeroed out by the “zero page thread” aka garbage collector at some point. The Page Sharing module will pick this up and collapse the page instantly.

I guess there is only one thing left to say, how cool is TPS?!