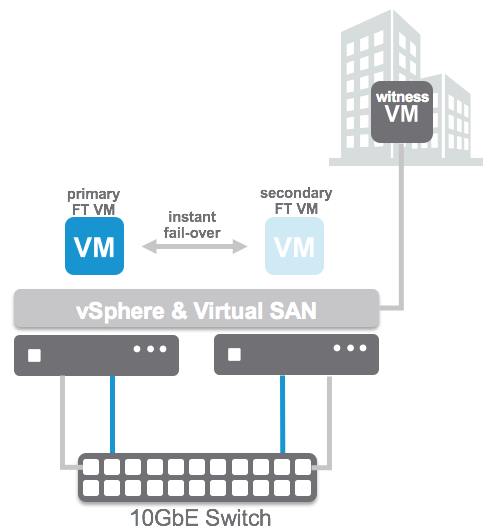

When we announced Virtual SAN 2-node ROBO configurations at VMworld, we received a lot of great feedback. Many people asked whether SMP-FT was supported in that configuration. Apparently many customers with ROBO deployments still run legacy applications that could use some extra protection against a host failure. The Virtual SAN team had not anticipated this and, unfortunately, had not tested this specific scenario, so our response had to be: not supported today.

We took the feedback to the engineering and QA teams, and they managed to run full end-to-end tests for SMP-FT on 2-node Virtual SAN ROBO configurations. I am proud to announce that as of today this is fully supported with Virtual SAN 6.1! I do want to point out that all SMP-FT requirements still apply, which means 10GbE for SMP-FT! Nevertheless, if you need to provide that extra level of availability for certain workloads, now you can!