I had a couple of things I wanted to write about regarding Virtual SAN that weren’t beefy enough to warrant a full article each, so I figured I would combine a couple of newsworthy items into a Virtual SAN news flash article / series.

- I was playing with Virtual SAN last week and noticed something I hadn’t noticed before. I was running vSphere with an Enterprise license and added the Virtual SAN license to my cluster. After adding it, all of a sudden I had the Distributed Switch capability on the cluster I had licensed for VSAN. I am not sure what this will look like when VSAN goes GA, but for now those who want to test VSAN with the Distributed Switch can. Use the Distributed Switch to guarantee bandwidth to Virtual SAN (leveraging Network IO Control) when combining different types of traffic, like vMotion, Management and VM traffic, on a 10GbE pair. I highly recommend you start playing around with this and get some experience, especially because vSphere HA traffic and VSAN traffic are combined on a single NIC pair and you do not want HA traffic to be impacted by replication traffic.

- The Samsung SM1625 SSD series (eMLC) has been certified for Virtual SAN. It comes in sizes ranging from 100GB to 800GB and can do up to 120k random read IOPS. Nice to see the list of supported SSDs expanding; I will try to get my hands on one of these at some point to do some testing.

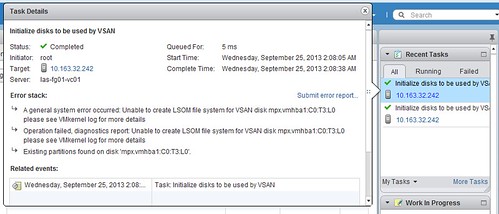

- Most people by now are aware of the challenges there have been with the AHCI controller. I was just talking with one of the VSAN engineers, who mentioned that they have managed to do a full root cause analysis and pinpoint the source of this problem. A team is currently working on solving it and things are looking good, so hopefully a new driver will be released soon. When it is, I will let you guys know, as I realize that many of you use these controllers in your home lab.
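
To make the Network IO Control point from the first item a bit more concrete: as I understand it, NIOC shares only kick in under contention, and each active traffic type then gets a slice of the uplink proportional to its share value. Below is a minimal sketch of that arithmetic. The function name and the share values are my own assumptions, picked purely for illustration; they are not recommended settings and not something you will find in a product.

```python
def split_bandwidth(link_gbps, shares):
    """Divide link bandwidth among active traffic types, proportional to shares."""
    total_shares = sum(shares.values())
    return {name: link_gbps * value / total_shares for name, value in shares.items()}


# Hypothetical share values, chosen for illustration only.
shares = {"vsan": 100, "vm": 50, "vmotion": 50, "management": 25}

# Worst case on a single 10GbE uplink: every traffic type is saturating the link.
allocation = split_bandwidth(10.0, shares)

for name, gbps in allocation.items():
    print(f"{name}: {gbps:.2f} Gbps")
```

With these made-up numbers VSAN would be left with a bit over 4Gbps in the worst case where everything is pushing traffic at once. When the link is not congested, any traffic type can still use whatever bandwidth is free.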