In 2013 I wrote an article about the minimum number of hosts for Virtual SAN. Since then that post has taken on a life of its own. Somehow people have misunderstood it and used/abused it in many shapes and forms. When I look at a traditional (non-VSAN) cluster, the minimum size is 2 hosts. From an availability perspective, I ask myself what risk I am willing to take. What does that mean?

In a previous life I did many projects for SMB customers. My SMB customers typically had somewhere in the range of 2-5 hosts, with the majority having 2-3. In many cases those with 2 hosts and those with 3 hosts were running roughly the same number of virtual machines. The difference between the two situations, “2 hosts” versus “3 hosts”, was whether, during maintenance (upgrading / updating) or after a failure, they still had the ability to restart virtual machines after a second failure. Many customers decided to go with 2-node clusters. The key reason was price versus risk: during normal operations the risk is low, but the price of an additional host was relatively high.

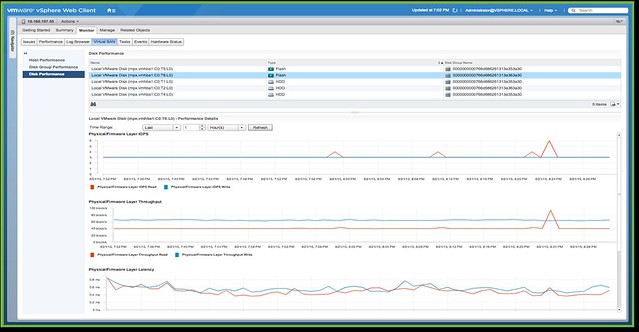

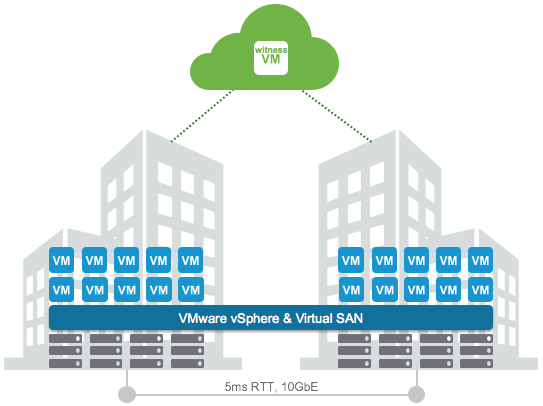
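The 2-host versus 3-host trade-off above comes down to simple arithmetic, which can be sketched as follows. This is a minimal illustration, not a sizing tool: the function names are hypothetical, and it assumes a single remaining host has enough capacity to restart all virtual machines.

```python
def hosts_left(total_hosts: int, in_maintenance: int = 0, failed: int = 0) -> int:
    """Number of hosts still able to run virtual machines."""
    return max(total_hosts - in_maintenance - failed, 0)


def can_restart_vms(total_hosts: int, in_maintenance: int = 0, failed: int = 0) -> bool:
    """True if at least one host remains to restart VMs on.

    Assumption: capacity is ignored; one surviving host is treated as enough.
    """
    return hosts_left(total_hosts, in_maintenance, failed) >= 1


# One host in maintenance, then one host fails:
two_node = can_restart_vms(2, in_maintenance=1, failed=1)    # nothing left to restart on
three_node = can_restart_vms(3, in_maintenance=1, failed=1)  # one host still available
```

During normal operations both cluster sizes survive a single failure; the third host only matters when a failure coincides with maintenance or with another failure.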

Now compare this to Virtual SAN and you will see the same applies. With Virtual SAN we have a minimum of 3 hosts, although in a ROBO configuration you can have 2 hosts with an external witness. This means that, from a support perspective, the bare minimum number of dedicated physical hosts required for VSAN is 2. There you go: 2 is the bare minimum for ROBO, and 3 is the minimum for non-ROBO. A 3-host cluster is fully supported and offers all functionality, just like a 4-host cluster.

Is having an extra host a good plan? Yes, of course it is. HA / DRS / VSAN (and any other scale-out storage solution for that matter) will benefit from more hosts. You as a customer need to ask yourself what the risk is, and whether the cost is justifiable.

PS1: A question just came in, and I want to make sure this is clear. Even in a 2-host ROBO configuration you can do maintenance! A single copy of the data and the witness remain available, and together they have quorum.
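To see why one data copy plus the witness is enough, here is a minimal sketch of the quorum check. It is a simplified model, not VSAN's actual implementation: it assumes each of the three components (two data copies and the witness) carries one vote, that an object stays accessible when more than half of the votes and at least one data copy are available, and the component names are made up for illustration.

```python
from typing import Iterable

# Assumption: one vote per component in a 2-host ROBO configuration.
VOTES = {"host-a": 1, "host-b": 1, "witness": 1}
DATA_COMPONENTS = {"host-a", "host-b"}


def has_quorum(available_votes: int, total_votes: int) -> bool:
    """Quorum means strictly more than half of all votes."""
    return 2 * available_votes > total_votes


def object_accessible(online: Iterable[str]) -> bool:
    """True if the online components hold quorum and include a data copy."""
    online_set = set(online)
    available = sum(v for name, v in VOTES.items() if name in online_set)
    has_data = bool(online_set & DATA_COMPONENTS)
    return has_data and has_quorum(available, sum(VOTES.values()))


# host-b is in maintenance: host-a plus the witness hold 2 of 3 votes.
during_maintenance = object_accessible({"host-a", "witness"})
# If the witness were also lost, host-a alone holds only 1 of 3 votes.
host_alone = object_accessible({"host-a"})
```

In this model `during_maintenance` is true and `host_alone` is false, which is why the witness has to stay reachable while one of the two hosts is down.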

PS2: No, you cannot host your “witness” VM on the VSAN cluster itself. This is not supported: the witness provides the quorum for the cluster, and it should sit outside of the cluster to provide certainty about the state in the case of a failure.