I was reading this article by Michael Webster about the benefit of Jumbo Frames. Michael was testing what the impact was from both an IOps and latency perspective when you run Jumbo Frames vs non-Jumbo Frames. Michael saw a clear benefit:

- Higher IOps

- Lower latency

- Lower CPU utilization

I would highly recommend reading Michael’s full article for the details; I don’t want to steal his thunder. What I found most interesting is the following quote. I hold Michael in high regard: he is a smart guy and typically spot-on.

> I’ve heard reports that some people have been testing VSAN and seen no noticeable performance improvement when using Jumbo Frames on the 10G networks between the hosts. Although I don’t have VSAN in my lab just yet my theory as to the reason for this is that the network is not the bottleneck with VSAN. Most of the storage access in a VSAN environment will be local, it’s only the replication traffic and traffic when data needs to be moved around that will go over the network between VSAN hosts.

As I said, Michael is a smart guy, and I have seen various people asking questions around this. It isn’t a strange assumption to make that with VSAN most I/O will be local; I guess this is kind of the Nutanix model. But VSAN is no Nutanix. VSAN takes a completely different approach, and this is important to realize.

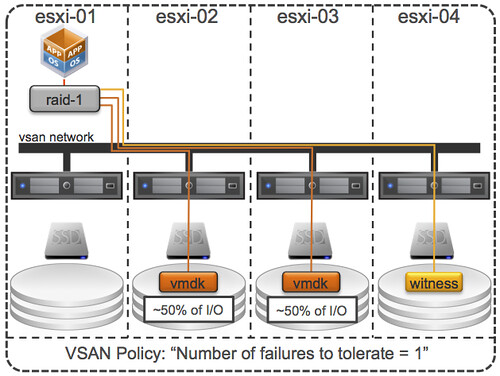

I guess that with a very small cluster of 3 nodes Michael’s chances of I/O being local are bigger, but even then I/O will not be purely local: because of data mirroring, at a minimum 50% of it goes to another host (when failures to tolerate is set to 1). So how does VSAN handle this, and what are some of the things to keep in mind? Let’s start with some VSAN principles:

- Virtual SAN uses an “object model”; objects are stored on one or more magnetic disks and hosts.

- Virtual SAN hosts can access “objects” remotely, both read and write.

- Virtual SAN does not have the concept of data locality / gravity, meaning that the object does not follow the virtual machine. The reason for this is that moving data around is expensive from a resource perspective.

- Virtual SAN has the capability to read from multiple mirror copies, meaning that if you have 2 mirror copies IO will be distributed equally.

What does this mean? First of all, let’s assume you have an 8-host VSAN cluster. You have a policy configured for availability: N+1. This means that the objects (virtual disks) will be on two hosts (at a minimum). What about your virtual machine from a memory and CPU point of view? Well, it could be on any of those 8 hosts. With DRS being invoked every 5 minutes at a minimum, I would say that chances are bigger that the virtual machine (from a CPU/memory perspective) resides on one of the 6 hosts that do not hold the objects (virtual disk). In other words, it is likely that I/O (both reads and writes) is being issued remotely.
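To put rough numbers on that reasoning, here is a minimal sketch. It is not how VSAN or DRS actually place anything. It simply assumes one mirror copy per host, no striping across hosts, a virtual machine that is equally likely to run on any host, and reads that are spread evenly over the mirror copies. The function names are made up for this illustration.

```python
from fractions import Fraction


def remote_probability(hosts: int, failures_to_tolerate: int) -> Fraction:
    """Chance that a VM runs on a host holding none of its mirror copies.

    Illustrative assumptions: one mirror copy per host, no striping across
    hosts, and the VM is equally likely to run on any host in the cluster.
    """
    copies = failures_to_tolerate + 1
    if hosts < copies:
        raise ValueError("not enough hosts for the requested mirror copies")
    return Fraction(hosts - copies, hosts)


def expected_local_read_fraction(hosts: int, failures_to_tolerate: int) -> Fraction:
    """Expected share of reads served by the host the VM runs on.

    Adds the assumption that reads are spread evenly over all mirror copies,
    so a VM sitting on one of the copies still reads 1/copies locally.
    """
    copies = failures_to_tolerate + 1
    on_copy_host = 1 - remote_probability(hosts, failures_to_tolerate)
    return on_copy_host * Fraction(1, copies)


p_remote = remote_probability(hosts=8, failures_to_tolerate=1)
p_local_reads = expected_local_read_fraction(hosts=8, failures_to_tolerate=1)
print(f"VM on a host without a copy: {p_remote} = {float(p_remote):.0%}")
print(f"Expected local share of reads: {p_local_reads} = {float(p_local_reads):.1%}")
```

Under these simplified assumptions the 8-host example gives a 6-in-8 chance that the virtual machine sits on a host without any of its data, and only about one read in eight stays local.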

From an I/O path perspective I would like to reiterate that both mirror copies can and will serve I/O; each would serve ~50% of the I/O. Note that each host has a read cache for that mirror copy, but blocks in read cache are only stored once. This means that each host will “own” a set of blocks and will serve data for those, be it from cache or from spindles. Easy, right?
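The “ownership” idea can be illustrated with a toy model. How VSAN really decides which mirror owns which block is not covered here, so the modulo rule, the chunk size, and the host names below are all assumptions chosen purely for illustration. The only point is that a deterministic mapping lets each block be cached once while reads still split roughly 50/50.

```python
from collections import Counter

MIRROR_HOSTS = ["esxi-02", "esxi-05"]  # hypothetical hosts holding the two mirror copies
CHUNK_SIZE = 1024 * 1024               # hypothetical ownership granularity, in bytes


def owner_of(offset: int, mirrors: list[str] = MIRROR_HOSTS) -> str:
    """Return the mirror that serves reads for the chunk containing `offset`.

    The modulo rule is an illustrative stand-in, not VSAN's real algorithm.
    Because the mapping is deterministic, a given chunk is always read from
    the same host and therefore only ever lands in that host's read cache.
    """
    return mirrors[(offset // CHUNK_SIZE) % len(mirrors)]


# Read 1000 consecutive chunks and count which mirror served each one.
served = Counter(owner_of(i * CHUNK_SIZE) for i in range(1000))
print(served)
```

With two mirrors, each host ends up serving half of the chunks, and any two offsets inside the same chunk always resolve to the same host.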

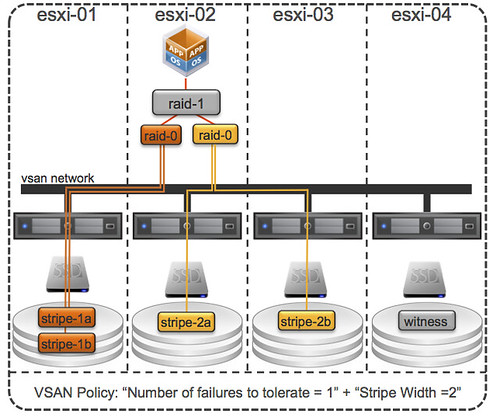

Now just imagine you have set your “host failures” policy to 2. I/O can now come from 3 hosts, at a minimum. And why do I say at a minimum? Because when you have the stripe width configured, or when for whatever reason striping goes across hosts instead of disks (which is possible in certain scenarios), I/O can come from even more hosts… VSAN is what I would call a true fully distributed solution! Below is an example of “number of failures” set to 1 and “stripe width” set to 2; as can be seen, there are 3 hosts that are holding objects.

Let’s reiterate that. When you define “host failures” as 1 and stripe width as 1, VSAN can still, when needed, stripe across multiple disks and hosts. “When needed” means, for instance, when the size of a VMDK is larger than a single disk.

Now let’s get back to the original question Michael asked himself: does it make sense to use Jumbo Frames? Michael’s tests clearly showed that it does, in his specific scenario that is, of course. I have to agree with him that when (!!) properly configured it will definitely not hurt, so the question is whether you should always implement this. I guess if you can guarantee implementation consistency, then conduct tests like Michael did. See if it benefits you, and if it lowers latency and increases IOps I can only recommend going for it.

PS: Michael mentioned that even when misconfigured it can’t hurt. Well, there were issues in the past; although they are solved now, it is something to keep in mind.