On Twitter, @heiner_hardt asked for help with a performance-related issue he was experiencing. As I appreciate esxtop more every single day and really enjoy solving performance problems, I decided to dive into it.

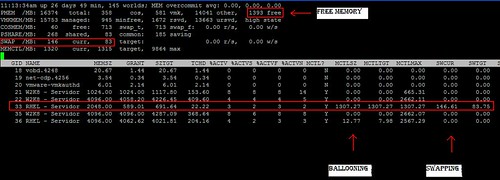

After the initial couple of questions Heiner posted a screenshot:

Heiner highlighted (red outline) a couple of metrics which indicated swapping and ballooning, as he pointed out with the text boxes. Although I can’t disagree that swapping and ballooning happened at some point in time, I do disagree with the conclusion that this virtual machine is swapping. Let’s break it down:

Global Statistics:

- 1393 Free -> Currently 1393MB memory available

- High State -> Hypervisor is not under memory pressure

- SWAP /MB 146 Cur -> 146MB has been swapped

- SWAP /MB 83 Target -> Target amount that needed to be swapped was 83MB

- 0.00 r/s -> No reads from swap currently

- 0.00 w/s -> No writes to swap currently

World Statistics:

- MCTLSZ 1307.27 -> The amount of guest physical memory that has been reclaimed by the balloon driver is 1307.27MB

- MCTLTGT 1307.27 -> The amount of guest physical memory to be kept in the balloon driver is 1307.27MB

- SWCUR 146.61 -> The current amount of memory that has been swapped is 146.61MB

- SWTGT 83.75 -> The target amount of memory that needed to be swapped was 83.75MB

Now that we know what these metrics mean and what the associated values are we can easily draw a conclusion:

At one point the host has most likely been overcommitted. Currently, however, there is no memory pressure (state = high, meaning more than 6% free memory), as there is 1393MB of memory available. The metric “SWCUR” indicates that swapping has occurred; however, the host is currently not actively reading from or writing to swap (0.00 r/s and 0.00 w/s).
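The reasoning above can be sketched in a few lines of Python. This is only an illustration: the `MemSnapshot` class and the `interpret` function are hypothetical names, not part of esxtop, and the values are typed in by hand from the screenshot.

```python
from dataclasses import dataclass


@dataclass
class MemSnapshot:
    """Hypothetical container for the esxtop values discussed above."""
    free_mb: float         # free host memory in MB
    swcur_mb: float        # SWCUR: memory currently held in swap, in MB
    swap_read_mbs: float   # swap reads (r/s)
    swap_write_mbs: float  # swap writes (w/s)


def interpret(snap: MemSnapshot) -> str:
    """Separate 'has swapped at some point' from 'is swapping right now'."""
    swapping_now = snap.swap_read_mbs > 0 or snap.swap_write_mbs > 0
    if swapping_now:
        return "actively swapping"
    if snap.swcur_mb > 0:
        return "swapped in the past, not swapping now"
    return "no swapping"


# Values taken from the screenshot analysed in this post.
snap = MemSnapshot(free_mb=1393, swcur_mb=146.61,
                   swap_read_mbs=0.0, swap_write_mbs=0.0)
print(interpret(snap))  # swapped in the past, not swapping now
```

A non-zero SWCUR on its own only tells you that pages sit in swap; the read and write rates tell you whether anything is happening right now.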

If the host is not experiencing memory pressure, why is the balloon driver still inflated (MCTLTGT 1307.27MB)? Although the host is currently in a high memory state, the amount of available memory (1393MB) almost equals the amount of memory claimed by the balloon driver. Deflating the balloon would therefore return the host to a memory-constrained state.
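A quick back-of-the-envelope check makes this concrete. The sketch below assumes the worst case, in which the guest immediately reuses every megabyte the balloon gives back. It also assumes a host with roughly 16GB of memory, which is one commenter's reading of the screenshot rather than a confirmed figure.

```python
FREE_MB = 1393.0          # free host memory from the global statistics
BALLOON_MB = 1307.27      # MCTLSZ of the ballooned VM
HOST_TOTAL_MB = 16 * 1024 # ASSUMPTION: ~16GB host, not confirmed by the post
HIGH_STATE_PCT = 6.0      # "high" state means more than 6% free memory


def free_after_deflate(free_mb: float, balloon_mb: float) -> float:
    """Free memory left if the guest reclaims the whole balloon (worst case)."""
    return free_mb - balloon_mb


remaining_mb = free_after_deflate(FREE_MB, BALLOON_MB)
remaining_pct = remaining_mb / HOST_TOTAL_MB * 100

print(f"free after deflating: {remaining_mb:.2f} MB")
print(f"still in high state: {remaining_pct > HIGH_STATE_PCT}")
```

Under these assumptions only about 85MB would remain free, well under the 6% mark, which is why the balloon stays inflated.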

My recommendation? Cut down on memory on your VMs! The fact that memory has been granted does not necessarily mean it is actively used, and in this case it leads to serious overcommitment, which in turn leads to ballooning and, even worse, swapping.

One thing to point out, though, is that the amount of “PSHARE” (TPS) is low compared to average environments. Might be something to explore!

I agree with your conclusions. Also, you have 0.00 r/s and w/s, which indicates to me that it is not _CURRENTLY_ swapping.

I have been using ESXTOP recently for troubleshooting a similar problem to this. I had a single VM that was consistently ballooning for days even though there was plenty of memory free in the resource group it was part of. ESXTOP obviously can’t show me the resources across a resource group but can give some good information as to what the VM is doing.

What was strange is that I could trace the ballooning to the exact date a couple of big VMs were created for an upcoming project. These had actually caused overprovisioning and contention in the resource pool; however, I powered them down and still this one machine continued to balloon constantly even though there was free memory. Eventually I re-installed VMware Tools on the troublesome machine and that resolved the issue. It was as if the balloon driver was stuck thinking there was contention. Very strange?!?

In this particular case, though, it looks like 16GB is available and 15GB is handed out to VMs. Once you add in virtualisation overhead, there is bound to be a degree of overprovisioning and at some point contention. The observation regarding the free memory and the balloon amount is a good one.

Duncan, can you explain why just a single VM is chosen as the target for the ballooning? Is there a reason that a little isn’t taken from a number of VMs instead of a single VM being targeted?

Duncan,

As always you published an excellent analysis of my problem and you were right.

When I moved a VM to another host, more memory was released and after some time the balloon driver was deflated and swap statistics were zeroed. This proved that the host became overcommitted.

What still puzzles me (maybe something I missed) is why the hypervisor reclaimed memory through ballooning if VMware says that, whether or not host memory is overcommitted, the hypervisor will not reclaim memory through ballooning or swapping. And that should hold here, since I have no limits set. Or am I wrong? At least that is what the “Understanding Memory Resource Management in VMware ESX Server” manual says (page 10).

But anyway I want to thank you for the time and assistance provided. This really helped me a lot.

Regards,

Heiner

We ran into a bug on VMware 3.5U4 which was resolved in VMware 3.5U5. VMs would swap regardless of the fact that the memory state was high. We worked with VMware support and our TAM for a long time and got no resolution, but the problem went away on U5.

The problem would usually exhibit when the overcommit ratio was up to 0.5 or greater, memory state was high and most often on VMs with very large memory footprints (16GB or 332GB). The obvious solution of reducing the memory allocation was not an option due to how the environments were used (sometimes a lot, sometimes not at all).

A vMotion (either manual or via DRS) would cause the VM to immediately begin swapping back in, causing terrible performance problems. It was especially painful on Linux.

About page sharing: if this is vSphere and Mem.AllocGuestLargePage is at its default of 1, then it matches what I have observed in our environment: page sharing rarely happens.

About why only this VM is ballooning: probably the VM is idle (idle memory tax) and the others aren’t (or there is a serious bug in vSphere 🙂 ).

Idle Memory Tax! I forgot about that one, that makes sense, thanks Mihai

@virtualPro – Idle Memory Tax is definitely the reason.

@Heiner – I think you are misreading the document! If there is memory pressure at the host level, the hypervisor will always start reclaiming memory through ballooning or swapping. That is the only way it can do it.

Duncan, Frank,

There is plenty of information about memory management techniques, but one thing is not explained properly. You talk about memory pressure on a host and the levels at which the host starts to use memory reclamation techniques like ballooning, swapping, etc. The memory pressure state is defined by 4 levels of free host memory. Nowhere is it stated what exactly Free Host Memory is and by which formula it is calculated. Is it host memory capacity – (memory consumed by virtual machines + VMkernel memory and overhead), OR is it host memory – (the sum of all ACTIVE memory for all powered-on VMs + VMkernel, overhead…)???

For example, if I have 4 VMs which I granted 16GB RAM each, running on a host with 48GB of RAM, without any reservation or limit, and all of them actively use only, let’s say, 1GB of RAM each, will the hypervisor think that it is under memory pressure, and will it start reclaiming memory with ballooning and next with swapping, compression…? If the answer is yes, it is not very clever behavior by the hypervisor and it will lead to serious performance degradation. In the end, if it is true, it is a negation of memory overcommit.

Sorry, maybe this is a silly question, but if you dive into the VMware documentation sources, it is not always clear and accurate and is sometimes quite confusing. So it is time to ask the experts 🙂 Can you guys please explain it to me?

Many Thanks

Petr

All,

I’m familiar with the concept of Idle Memory Tax and agree with you that this may be the cause of what I’m facing.

But what really “opened my mind” was what Frank Denneman explained in his latest article (memory reclaimation, when and how?), a point that is not covered in the “Resource Management Guide”. The passage follows below:

“Before the VMkernel detects the free memory is reaching the soft threshold, it will start to request pages through the balloon driver (vmmemctl), this is because it takes time for the Guest OS to respond to the vmmemctl driver with suitable pages. By starting prematurely, the VMkernel tries to avoid the situation that it will reach the Soft state or worse. So you can see ballooning occurring sometimes before the High state is reached”

That’s what I mean. If we follow the manuals in a very strict way, we should simply assume that memory in “high state” is never ballooned. But this proves that there is always some dissonance between certain statements.

Again, thanks for all the help and comments guys.

Regards,

Heiner

Heiner,

I corrected the statement, “So you can see ballooning occurring sometimes before the High state is reached”.

What I meant to say was, once the VMkernel detects that it is falling towards the threshold of 4% it will start to balloon. So passing the threshold of 6%, it will not wait “idle” and see if it reaches the 4%, but it will start to balloon, knowing it will take some time to get results.

So between 6% and 4% memory free you can see ballooning happening.

Hi,

Could anyone confirm whether memory ballooning is happening all the time, irrespective of memory contention in the environment?

I got the answer; you can ignore my post above. What I understood is that vmmemctl always works in the background to gather information about non-active memory pages and inflates the balloon when asked by the host kernel for memory reclamation at the time of memory contention.

–Sandeep Choudhary

Hi,

I just came across this interesting thread as I am assessing performance in a customer environment.

As far as I can tell, the only way to reduce the balloon size once inflated is to vMotion the VM to another host. Is there another way to do this please?

Thanks,

Andy

Excellent analysis. Initially I thought anything in the SWCUR column equals bad performance, but that’s obviously not the case. SWCUR only indicates how much memory the VM has in its .vswp file. To see if a VM is currently actively swapping you need to look at SWW/s and SWR/s, apart from the state of the host etc.

Duncan, it would be a good idea to make that picture clearer. Currently it’s not very easy to read. Thanks,

Considering it is almost a 4 year old article there isn’t much I can do at this point 🙂