A couple of days ago I was asked whether I would recommend using two logical PCIe flash devices carved out of a single physical PCIe flash device. The question was prompted by the recommendation from VMware to have two Virtual SAN disk groups instead of (just) one disk group.

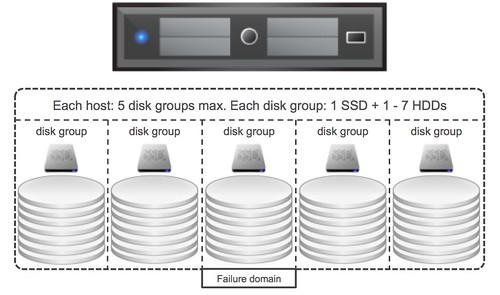

First of all, I want to make it clear that this is a recommended practice, but definitely not a requirement. The reason people have started recommending it is “failure domains”. As some of you may know, when a flash device that is used for read caching / write buffering and fronts a given set of disks becomes unavailable, all the disks in the disk group associated with that flash device become unavailable as well. As such, a disk group can be considered a failure domain, and when it comes to availability it is typically best to spread risk, so having multiple failure domains is desirable.

When it comes to PCIe devices, would it make sense to carve up a single physical device into multiple logical devices? From a failure point of view I personally think it doesn’t add much value: if the physical device fails, it is likely that both logical devices fail with it. So from an availability point of view two logical devices don’t add much. It could, however, be beneficial to have multiple logical devices if you have more than 7 disks per server.
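To make the failure-domain argument concrete, here is a minimal Python sketch. The `DiskGroup` class, the `disks_lost` helper, the device names, and the disk counts are all hypothetical and only model the reasoning above; none of this is a VMware API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DiskGroup:
    name: str
    physical_flash: str  # physical PCIe card backing this group's flash device
    disks: int           # number of disks fronted by that flash device


def disks_lost(groups, failed_card):
    """Count the disks that become unavailable when one physical card fails."""
    return sum(g.disks for g in groups if g.physical_flash == failed_card)


# Hypothetical layout 1: one physical card carved into two logical devices.
carved = [DiskGroup("dg1", "pcie0", 4), DiskGroup("dg2", "pcie0", 4)]

# Hypothetical layout 2: two physical cards, one per disk group.
separate = [DiskGroup("dg1", "pcie0", 4), DiskGroup("dg2", "pcie1", 4)]

print(disks_lost(carved, "pcie0"))    # 8: both disk groups go down together
print(disks_lost(separate, "pcie0"))  # 4: the second disk group survives
```

In the carved layout the two disk groups look like two failure domains, but they share one physical point of failure, so losing the card takes out every disk on the host.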

As most of you will know, a disk group can have at most 7 disks, and each host can have at most 5 disk groups. Each disk group needs its own flash device, so if there is a requirement for the server to have more than 7 disks, there will be a need for multiple flash devices. In that scenario creating multiple logical devices is one way to get there, although from a failure tolerance perspective I would still prefer multiple physical devices over multiple logical devices. But I guess it all depends on what type of devices you use, whether you have sufficient PCIe slots available, etc. In the end the decision is up to you, but do make sure you understand the impact of your decision.
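The arithmetic behind those limits can be sketched in a few lines of Python. The function name `disk_groups_needed` is made up for illustration, and the sketch assumes one flash device per disk group together with the 7-disk and 5-group maximums mentioned above.

```python
import math

MAX_DISKS_PER_GROUP = 7  # limit per disk group, as stated above
MAX_GROUPS_PER_HOST = 5  # limit per host, as stated above


def disk_groups_needed(disks: int) -> int:
    """Return how many disk groups (and so flash devices) a host needs."""
    if disks < 1:
        raise ValueError("a disk group needs at least one disk")
    groups = math.ceil(disks / MAX_DISKS_PER_GROUP)
    if groups > MAX_GROUPS_PER_HOST:
        raise ValueError(f"{disks} disks exceed the per-host maximum")
    return groups


for n in (7, 8, 35):
    print(n, "disks ->", disk_groups_needed(n), "flash device(s)")
```

Going from 7 to 8 disks is the point where a second flash device, logical or physical, becomes necessary.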