I had a question last week about HA’s datastore heartbeating: does datastore heartbeating still work if you only have one datastore in your environment? I can understand where the question comes from, as HA throws an error stating that you need a minimum of two datastores for HA datastore heartbeating to function correctly. I want to point out that even though HA says two datastores is the minimum, when only one datastore is available it will still be used for heartbeat purposes. Yes, this error will be there on your cluster, and yes, you can suppress it using “das.ignoreInsufficientHbDatastore”. I figured others might be hitting the same error and have the same question, so why not document it?!


How does vSphere recognize an NFS Datastore?

This question has popped up various times now: how does vSphere recognize an NFS Datastore? This concept has changed over time, which is why many people are confused. I am going to try to clarify this. Do note that this article is based on vSphere 5.0 and up. I had a similar article a while back, but figured writing it in a more explicit way might help answer these questions. (And it gives me the option to send people just a link :-))

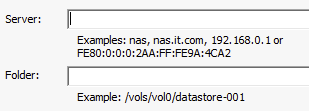

When an NFS share is mounted, a unique identifier (UUID) is created to ensure that this volume can be correctly identified. Now here comes the part where you need to pay attention: the UUID is created by calculating a hash, and this calculation uses the “server name” and the folder name you specify in the “add nfs datastore” workflow.

This means that if you use “mynfserver.local” on Host A you will need to use the exact same name on Host B. This also applies to the folder. Even “/vols/vol0/datastore-001” is not considered to be the same as “/vols/vol0/datastore-001/”. In short, when you mount an NFS datastore make absolutely sure you use the exact same Server and Folder name for all hosts in your cluster!
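To make the trailing-slash point concrete, here is a minimal sketch. It does not reproduce the hash ESXi actually uses (that is not something I am documenting here); SHA-1 over "server:folder" and the function name `nfs_datastore_id` are purely assumptions for illustration. The point is only that any hash over the raw strings treats two nearly identical inputs as different volumes.

```python
import hashlib


def nfs_datastore_id(server: str, folder: str) -> str:
    """Hypothetical stand-in for the identifier derived from server and folder."""
    # Assumption: SHA-1 over "server:folder". The real algorithm is not
    # described in this article; any hash of the raw strings behaves the same way.
    digest = hashlib.sha1(f"{server}:{folder}".encode("utf-8")).hexdigest()
    return f"{digest[:8]}-{digest[8:16]}"


# Host A and Host C use identical strings; Host B adds a trailing slash.
host_a = nfs_datastore_id("mynfserver.local", "/vols/vol0/datastore-001")
host_b = nfs_datastore_id("mynfserver.local", "/vols/vol0/datastore-001/")
host_c = nfs_datastore_id("mynfserver.local", "/vols/vol0/datastore-001")

print(host_a == host_b)  # False: the trailing slash changes the input
print(host_a == host_c)  # True: identical strings give an identical identifier
```

The same holds for the server name: a short name, an FQDN and an IP address are three different strings, so they would give three different identifiers in this sketch.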

By the way, there is a nice blogpost by NetApp on this topic.

Should I use many small LUNs or a couple large LUNs for Storage DRS?

At several VMUGs where I presented, a question that always came up was the following: “Should I use many small LUNs or a couple of large LUNs for Storage DRS? What are the benefits of either?”

I posted about VMFS-5 LUN sizing a while ago and I suggest reading that first if you haven’t yet, just to get some idea of the considerations that go into sizing datastores. I guess that article already more or less answers the question… I personally prefer many “small LUNs” over a couple of large LUNs, but let me explain why. As an example, let’s say you need 128TB of storage in total. What are your options?

You could create 2x 64TB LUNs, 4x 32TB LUNs, 16x 8TB LUNs or 32x 4TB LUNs. What would be easiest? Well, I guess 2x 64TB LUNs would be easiest, right? You only need to request 2 LUNs and adding them to a datastore cluster will be easy. Same goes for the 4x 32TB LUNs… but with 16x 8TB and 32x 4TB the amount of effort increases.

However, that is just a one-time effort. You format them with VMFS, add them to the datastore cluster and you are done. Yes, it seems like a lot of work, but in reality it might take you 20-30 minutes to do this for 32 LUNs. Now if you take a step back and think about it for a second… why did I want to use Storage DRS in the first place?

Storage DRS (and Storage IO Control for that matter) is all about minimizing risk. In storage, two big risks are hitting an “out of space” scenario or extremely degraded performance. Those happen to be the two pain points that Storage DRS targets. In order to prevent these problems from occurring Storage DRS will try to balance the environment, when a certain threshold is reached that is. You can imagine that things will be “easier” for Storage DRS when it has multiple options to balance. When you have one option (2 datastores – source datastore) you won’t get very far. However, when you have 31 options (32 datastores – source datastore) that increases the chances of finding the right fit for your virtual machine or virtual disk while minimizing the impact on your environment.
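The arithmetic behind those numbers is simple enough to sketch. This is just an illustration of the 128TB example; the name `layout` is made up for this snippet, and "destinations" only counts the other datastores in the cluster, not whether they actually have the space or performance headroom.

```python
TOTAL_TB = 128  # total capacity from the example above


def layout(lun_size_tb):
    """Return (number of LUNs, number of other datastores a VM could move to)."""
    lun_count = TOTAL_TB // lun_size_tb
    # Storage DRS cannot pick the source datastore as a destination.
    destinations = lun_count - 1
    return lun_count, destinations


for size in (64, 32, 8, 4):
    count, destinations = layout(size)
    print(f"{count:>2} x {size:>2}TB LUNs -> {destinations:>2} possible destinations")
```

With 2 LUNs there is exactly one place to go; with 32 LUNs there are 31.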

I already dropped the name: Storage IO Control (SIOC). This is another feature to take into account. Storage IO Control is all about managing your queues, and you don’t want to do that yourself. Believe me, it is complex, and no one likes queues, right? (If you have Enterprise Plus, enable SIOC!) The reality is, though, that there are many queues between the application and the spindles your data sits on. The question is: would you prefer to have 2 device queues with many workloads potentially queuing up, or would you prefer to have 32 device queues? Look at the impact that this could have.

Please don’t get me wrong… I am not advocating going really small and creating many small LUNs. Neither am I saying you should create a couple of really large LUNs. Try to find the sweet spot for your environment by taking failure domain (backup restore time), IOPS, queues (SIOC) and load balancing options for Storage DRS into account.

With vSphere 5.0 and HA can I share datastores across clusters?

I have had this question multiple times by now so I figured I would write a short blog post about it. The question is if you can share datastores across clusters with vSphere 5.0 and HA enabled. This question comes from the fact that HA has a new feature called “datastore heartbeating” and uses the datastore as a communication mechanism.

The answer is short and sweet: Yes.

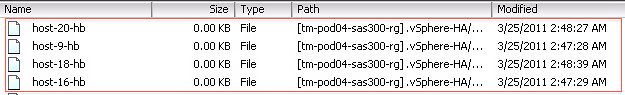

For each cluster a folder is created. The folder structure is as follows:

/<root of datastore>/.vSphere-HA/<cluster-specific-directory>/

The “cluster specific directory” is based on the UUID of the vCenter Server, the MoID of the cluster, a random 8-character string and the name of the host running vCenter Server. So even if you use dozens of vCenter Servers there is no need to worry.
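As a rough sketch of why two clusters never end up in the same folder, the snippet below builds such a path from the four ingredients. Note the assumptions: the order of the parts, the "-" separator, the hex random string, and all the example values (UUID, MoIDs, hostname, datastore root) are hypothetical and only there for illustration; the function `ha_cluster_dir` is not a VMware API.

```python
import secrets
from pathlib import PurePosixPath


def ha_cluster_dir(datastore_root, vc_uuid, cluster_moid, vc_hostname):
    """Build an illustrative per-cluster HA folder path on a datastore."""
    # Assumption: 8 random hex characters joined with the other parts by "-".
    random_part = secrets.token_hex(4)
    name = "-".join([vc_uuid, cluster_moid, random_part, vc_hostname])
    return PurePosixPath(datastore_root) / ".vSphere-HA" / name


# Hypothetical values: one vCenter Server, two clusters sharing one datastore.
root = "/vmfs/volumes/datastore1"
vc_uuid = "11111111-2222-3333-4444-555555555555"
cluster_a = ha_cluster_dir(root, vc_uuid, "domain-c7", "vcenter01")
cluster_b = ha_cluster_dir(root, vc_uuid, "domain-c26", "vcenter01")

print(cluster_a)
print(cluster_b)
print(cluster_a.parent == cluster_b.parent)  # True: same .vSphere-HA folder
print(cluster_a == cluster_b)                # False: different cluster directories
```

Because the MoID differs per cluster and the UUID differs per vCenter Server, each cluster gets its own directory under the shared “.vSphere-HA” folder.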

Each folder contains the files HA needs/uses, as shown in the screenshot. So there is no need to worry about sharing datastores across clusters. Frank also wrote an article about this from a Storage DRS perspective. Make sure you read it!

PS: all these details can be found in our Clustering Deepdive book… find it on Amazon.