I’ve seen a few questions around this: is it possible, or supported, to use vSAN Datastore Sharing, aka HCI Mesh, to connect OSA with ESA? Or, of course, the other way around. I can be brief about this: no, it is not supported, and it isn’t possible either. vSAN HCI Mesh, or Datastore Sharing, uses the vSAN proprietary protocol called RDT. vSAN OSA uses a different version of RDT than vSAN ESA, and these are unfortunately not compatible at the moment. As a result, you cannot use vSAN Datastore Sharing to share your OSA capacity with an ESA cluster, or the other way around. Hope that clarifies things.


vSAN ESA and the minimum number of hosts with RAID-1/5/6

I had a meeting last week with a customer and a question came up around the minimum number of hosts a cluster requires in order to use a particular RAID configuration for vSAN. I created a table for the customer and a quick paragraph on how this works and figured I would share it here as well.

With vSAN ESA VMware introduced a new feature called “Adaptive RAID-5”. I described this feature in this blog post here. In short, depending on the size of the cluster a RAID-5 configuration will either be a 2+1 scheme or a 4+1 scheme. There’s no longer a 3+1 scheme with vSAN ESA. Of course, there’s still the ability to use RAID-1 and RAID-6 as well; those schemes remained unchanged.
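The capacity cost of each erasure-coding scheme follows directly from its data-to-parity ratio. As a quick sketch of that arithmetic (the function name `raw_capacity_factor` is made up for this illustration and is not part of any vSAN tooling):

```python
from fractions import Fraction


def raw_capacity_factor(data: int, parity: int) -> Fraction:
    """Raw capacity consumed per unit of VM data for a data+parity stripe."""
    if data <= 0 or parity < 0:
        raise ValueError("data must be positive and parity must be non-negative")
    return Fraction(data + parity, data)


# The erasure-coding schemes mentioned in this post, written as (data, parity).
schemes = {
    "RAID-5, 2+1": (2, 1),
    "RAID-5, 4+1": (4, 1),
    "RAID-6, 4+2": (4, 2),
}

for name, (data, parity) in schemes.items():
    factor = raw_capacity_factor(data, parity)
    print(f"{name}: {float(factor):.0%} of VM size")
```

In other words, a wider stripe with the same amount of parity is cheaper in capacity, which is why 4+1 is the more efficient of the two RAID-5 schemes when the cluster is large enough for it.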

When it comes to vSAN ESA, below are the number of hosts required for a particular RAID scheme. Do note that with RAID-5, if the size of the cluster changes (higher or lower), the scheme may also change, as described in the linked article above.

| Failures To Tolerate | Object Configuration | Minimum number of hosts | Capacity required (% of VM size) |
|---|---|---|---|
| No data redundancy | RAID-0 | 1 | 100% |
| 1 Failure (Mirroring) | RAID-1 | 3 | 200% |
| 1 Failure (Erasure Coding) | RAID-5, 2+1 | 3 | 150% |
| 1 Failure (Erasure Coding) | RAID-5, 4+1 | 6 | 125% |
| 2 Failures (Erasure Coding) | RAID-6, 4+2 | 6 | 150% |
| 2 Failures (Mirroring) | RAID-1 | 5 | 300% |
| 3 Failures (Mirroring) | RAID-1 | 7 | 400% |
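For those who prefer to script this, here is a small sketch that encodes the table as data and answers two questions: which schemes a cluster of a given size can use, and which RAID-5 layout it would get. All names (`RaidScheme`, `available_schemes`, `adaptive_raid5`, `raw_capacity_gb`) are hypothetical, and the RAID-5 selection simply assumes that the widest layout whose host minimum is met gets picked, based on the host counts in the table.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class RaidScheme:
    failures_to_tolerate: int
    layout: str
    min_hosts: int
    capacity_pct: int  # raw capacity as a percentage of the VM size


# Values copied from the table above.
ESA_SCHEMES = [
    RaidScheme(0, "RAID-0", 1, 100),
    RaidScheme(1, "RAID-1", 3, 200),
    RaidScheme(1, "RAID-5, 2+1", 3, 150),
    RaidScheme(1, "RAID-5, 4+1", 6, 125),
    RaidScheme(2, "RAID-6, 4+2", 6, 150),
    RaidScheme(2, "RAID-1", 5, 300),
    RaidScheme(3, "RAID-1", 7, 400),
]


def available_schemes(hosts: int) -> List[RaidScheme]:
    """Return every scheme whose minimum host count is met."""
    return [s for s in ESA_SCHEMES if hosts >= s.min_hosts]


def adaptive_raid5(hosts: int) -> Optional[RaidScheme]:
    """Assumption: the widest RAID-5 layout the cluster qualifies for is used."""
    candidates = [s for s in available_schemes(hosts) if s.layout.startswith("RAID-5")]
    return max(candidates, key=lambda s: s.min_hosts, default=None)


def raw_capacity_gb(vm_size_gb: float, scheme: RaidScheme) -> float:
    """Raw datastore capacity consumed by a VM of the given size."""
    return vm_size_gb * scheme.capacity_pct / 100


for hosts in (3, 5, 6):
    scheme = adaptive_raid5(hosts)
    print(hosts, "hosts:", scheme.layout, "->", raw_capacity_gb(100, scheme), "GB raw per 100 GB VM")
```

Keeping the table as plain data makes it easy to adjust if the numbers change in a later release.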

Doing network/ISL maintenance in a vSAN stretched cluster configuration!

I got a question earlier about the maintenance of an ISL in a vSAN Stretched Cluster configuration which had me thinking for a while. The question was what you would do with your workload during maintenance. I guess the easiest option, of course, is to power off all VMs and simply shut down the cluster, for which vSAN has a UI option, and there’s a KB you can follow. Now, of course, there could also be a situation where the VMs need to remain running. But how does this work when you end up losing the connection between all three locations? Normally this would lead to a situation where all VMs become “inaccessible”, as you end up losing quorum.

As said, this had me thinking, you could take advantage of the “vSAN Witness Resiliency” mechanism which was introduced in vSAN 7.0 U3. How would this work?

Well, it is actually pretty straightforward: if all hosts of 1 site are in maintenance mode, failed, or powered off, the votes of the witness object for each VM/Object will be recalculated within 3 minutes. When this recalculation has completed the witness can go down without having any impact on the VM. We introduced this capability to increase resiliency in a double-failure scenario, but we can (ab)use this functionality also during maintenance. Of course I had to test this, so the first step I took was placing all hosts in 1 location into maintenance mode (no data evac). This resulted in all my VMs being vMotioned to the other site.

Now next I checked with RVC if my votes were recalculated or not. As stated, depending on the number of VMs this can take around 3 minutes in total, but usually will probably be quicker. After the recalculation had been completed I powered off the Witness, and this was the result as shown below, all VMs were still running.

Of course, I had to double check on the commandline using RVC (you can use the command “vsan.vm_object_info” to check a particular object for instance) to ensure that indeed the components of those VMs were still “ACTIVE” instead of “ABSENT”, and there you go!
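Why the witness can be powered off once the votes have been recalculated is easiest to see with a toy quorum model. The sketch below only assumes the general rule that an object stays accessible while more than 50% of its votes are reachable. The vote counts themselves are hypothetical and picked purely for illustration; the real values differ per object and can be inspected with RVC.

```python
from typing import Dict, Set


def has_quorum(votes: Dict[str, int], available: Set[str]) -> bool:
    """An object stays accessible while more than half of all votes are reachable."""
    total = sum(votes.values())
    reachable = sum(count for owner, count in votes.items() if owner in available)
    return reachable * 2 > total


# Hypothetical vote counts, for illustration only.
before_recalc = {"site_a": 1, "site_b": 1, "witness": 1}
# Assumed outcome of the recalculation after site_b went into maintenance mode:
# the surviving site ends up holding a majority of the votes by itself.
after_recalc = {"site_a": 3, "site_b": 1, "witness": 1}

site_b_down = {"site_a", "witness"}
site_b_and_witness_down = {"site_a"}

print(has_quorum(before_recalc, site_b_down))              # True
print(has_quorum(before_recalc, site_b_and_witness_down))  # False
print(has_quorum(after_recalc, site_b_and_witness_down))   # True
```

The second line is the situation you want to avoid: taking the witness down before the recalculation has completed leaves the remaining site without a majority.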

Now when maintenance has been completed, you simply do the reverse: you power on the witness, and then you power on the hosts in the other location. After the “resync” has been completed the VMs will be rebalanced again by DRS. Note, DRS rebalancing (or “should rules” being applied) will only happen when the resync of the VM has been completed.

What does Datastore Sharing/HCI Mesh/vSAN Max support when stretched?

This question has come up a few times now: what does Datastore Sharing/HCI Mesh/vSAN Max support when stretched? I personally had some challenges finding the statements in our documentation as well. I just found the statement, and I wanted to first of all point people to it, and then also clarify it so there is no question. If I am using Datastore Sharing / HCI Mesh, or will be using vSAN Max, and my vSAN Datastore is stretched, what does VMware support (or not support)?

We have multiple potential combinations. Let me list them and add whether each is supported or not. Note that this is at the time of writing, with the currently available version (vSAN 8.0 U2).

- vSAN Stretched Cluster datastore shared with vSAN Stretched Cluster –> Supported

- vSAN Stretched Cluster datastore shared with vSAN Cluster (not stretched) –> Supported

- vSAN Stretched Cluster datastore shared with Compute Only Cluster (not stretched) –> Supported

- vSAN Stretched Cluster datastore shared with Compute Only Cluster (stretched, symmetric) –> Supported

- vSAN Stretched Cluster datastore shared with Compute Only Cluster (stretched, asymmetric) –> Not Supported

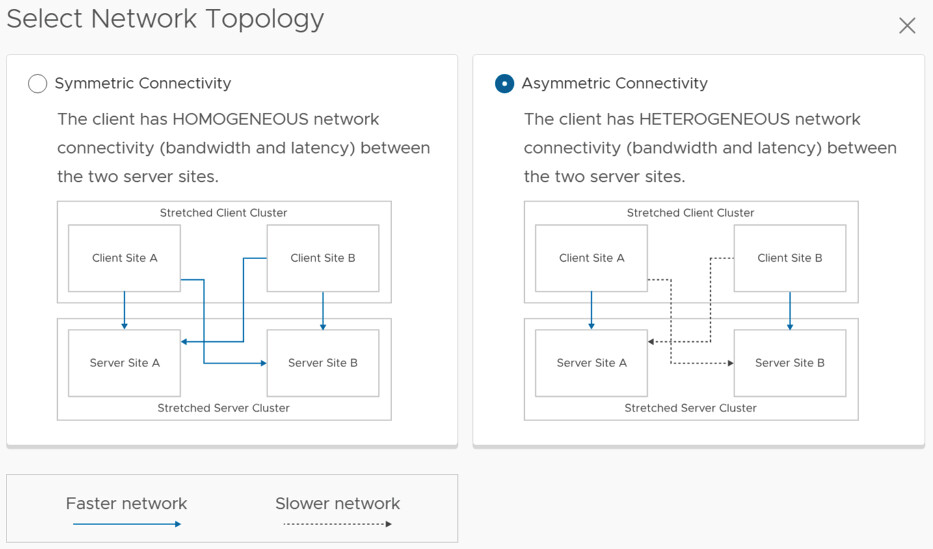

So what is the difference between symmetric and asymmetric? The below image, which comes from the vSAN Stretched Configuration, explains it best. I think Asymmetric in this case is most likely, so if you are running Stretched vSAN and a Stretched Compute Only, it most likely is not supported.

This also applies to vSAN Max by the way. I hope that helps. Oh and before anyone asks, if the “server side” is not stretched it can be connected to a stretched environment and is supported.
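If you want to capture the list above in a pre-deployment check, a lookup table is enough. This is only a sketch: the `ClientCluster` enum and `is_supported` helper are hypothetical names, the values mirror the list in this post (vSAN 8.0 U2, stretched datastore on the server side), and the official documentation remains the source of truth.

```python
from enum import Enum


class ClientCluster(Enum):
    VSAN_STRETCHED = "vSAN Stretched Cluster"
    VSAN_NOT_STRETCHED = "vSAN Cluster (not stretched)"
    COMPUTE_ONLY_NOT_STRETCHED = "Compute Only Cluster (not stretched)"
    COMPUTE_ONLY_STRETCHED_SYMMETRIC = "Compute Only Cluster (stretched, symmetric)"
    COMPUTE_ONLY_STRETCHED_ASYMMETRIC = "Compute Only Cluster (stretched, asymmetric)"


# Support statements for a *stretched* vSAN datastore being shared,
# copied from the list in this post.
STRETCHED_DATASTORE_SUPPORT = {
    ClientCluster.VSAN_STRETCHED: True,
    ClientCluster.VSAN_NOT_STRETCHED: True,
    ClientCluster.COMPUTE_ONLY_NOT_STRETCHED: True,
    ClientCluster.COMPUTE_ONLY_STRETCHED_SYMMETRIC: True,
    ClientCluster.COMPUTE_ONLY_STRETCHED_ASYMMETRIC: False,
}


def is_supported(client: ClientCluster) -> bool:
    """Is sharing a stretched vSAN datastore with this client cluster supported?"""
    return STRETCHED_DATASTORE_SUPPORT[client]


for client in ClientCluster:
    status = "Supported" if is_supported(client) else "Not Supported"
    print(f"{client.value}: {status}")
```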

Unexplored Territory episode 59: Introducing vSAN Max!

Two months ago VMware introduced vSAN Max at VMware Explore. I wrote about it in this blog. Last week I had a conversation with Kalyan Krishnaswamy on the topic of vSAN Max, for which Kalyan is the Product Manager. I figured I would share the episode via my blog as well for those who are not subscribed to the Unexplored Territory podcast just yet. Note, you can either listen to it below, or just listen via Spotify, Apple, or anywhere else you get your podcasts.