This question has popped up various times now: how does vSphere recognize an NFS datastore? This concept has changed over time, which is why many people are confused. I am going to try to clarify this. Do note that this article is based on vSphere 5.0 and up. I had a similar article a while back, but figured writing it in a more explicit way might help answer these questions (and gives me the option to send people just a link :-)).

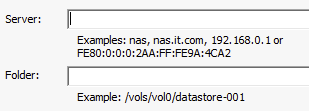

When an NFS share is mounted, a unique identifier (UUID) is created to ensure that this volume can be correctly identified. Now here comes the part where you need to pay attention: the UUID is created by calculating a hash, and this calculation uses the “server name” and the folder name you specify in the “add nfs datastore” workflow.

This means that if you use “mynfserver.local” on Host A, you will need to use the exact same name on Host B. This also applies to the folder: even “/vols/vol0/datastore-001” is not considered to be the same as “/vols/vol0/datastore-001/”. In short, when you mount an NFS datastore make absolutely sure you use the exact same Server and Folder name for all hosts in your cluster!
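To make the effect concrete, here is a minimal Python sketch. It is an illustration only: the hash that vSphere actually uses is not described in this article, so SHA-1 stands in for it, and the function names and host names (`illustrative_datastore_id`, `group_hosts_by_id`, `host-a` and so on) are made up for the example. The point is simply that any hash over the server and folder strings produces a different identifier as soon as one character differs, trailing slash included.

```python
import hashlib
from typing import Dict, List, Tuple


def illustrative_datastore_id(server: str, folder: str) -> str:
    """Hypothetical stand-in for the identifier derived from server and folder.

    SHA-1 is an assumption for illustration; it is NOT the real vSphere algorithm.
    """
    digest = hashlib.sha1(f"{server}:{folder}".encode("utf-8")).hexdigest()
    return f"{digest[:8]}-{digest[8:16]}"


def group_hosts_by_id(mounts: Dict[str, Tuple[str, str]]) -> Dict[str, List[str]]:
    """Group host names by the identifier their (server, folder) pair produces."""
    groups: Dict[str, List[str]] = {}
    for host, (server, folder) in mounts.items():
        groups.setdefault(illustrative_datastore_id(server, folder), []).append(host)
    return groups


# Hypothetical mount settings as typed in on each host.
mounts = {
    "host-a": ("mynfserver.local", "/vols/vol0/datastore-001"),
    "host-b": ("mynfserver.local", "/vols/vol0/datastore-001/"),  # trailing slash
    "host-c": ("mynfserver.local", "/vols/vol0/datastore-001"),
}

groups = group_hosts_by_id(mounts)
for datastore_id, hosts in groups.items():
    print(datastore_id, hosts)

if len(groups) > 1:
    print("Mismatch: hosts would see more than one datastore for the same share.")
```

Here host-a and host-c end up in one group and host-b in another, which is the “same share, two datastores” situation described above. A check along these lines against your own list of mount settings can catch the mismatch before the hosts do.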

By the way, there is a nice blogpost by NetApp on this topic.

Interesting. I’ve wondered why I end up with doubles sometimes (I don’t use NFS for running VMs, just templates and archived ones).

I will point out that it would be really useful, in this case, for VMware to provide in the interface a way to easily check what settings were made for an NFS datastore (i.e., replicate the setup box as an info box).

The vSphere 5.1 Web Client offers you the ability to connect the same datastore to all hosts.

Is there any way to change the server portion of this once a store is in use? Basically I mounted an NFS datastore by IP on several hosts and now need to change the IP and there are VMs on that datastore. I can easily power them off but to re-add them to inventory would be a nightmare because this is a target for Veeam replicas.

Do you have the new storage available?

If so, mount it and then Storage vMotion the VMs over to the new storage.

I wouldn’t recommend that. It is the same datastore, and mounting it again could lead to all sorts of strange locking issues.

Not sure what the best approach for this would be to be honest, never used the Veeam solution.

I was thinking the new IP could be exposed to vSphere with a different set of volumes (not the same volumes double mounted, which eliminates the locking, etc.).

Maybe use some temporary storage to evacuate the original IP – use a virtual storage appliance (VSA) to hold the VMs and then re-do your IP on the original NAS and move the VMs after you remounted the data stores correctly.

Definitely think about leveraging vStorage Motion for this – then when the old Datastores are evacuated, drop them from the vSphere host.

If the Host Swap is on an NFS Volume (i.e. dedicated Swap volume) then I think you will need to bounce the host to move it.

Duncan,

I think the link in the last sentence may be wrong – it goes here

http://www.yellow-bricks.com/vols/vol0/datastore-001

Thanks,

Rob

fixed

It’d be nice if VMware was smart enough to realize the same exact NFS datastore in one datacenter is the same exact one in another datacenter. You are forced to do a cold migration from one datacenter to another, which is a pain when the storage isn’t changing at all, just the virtual datacenter. I understand why it is set up like that, but it would be nice to have an option to tell VMware “Hey! Don’t copy the VMDKs! You aren’t changing your storage!”.

Hi Duncan, it would be wonderful if you could give an overview of the expected behaviour with NFS datastores along with the recent APD/PDL improvements in vSphere 5.1 and the effects of this on guest VMs.