I have been getting so many hits on my blog for VXLAN that I figured it was time to expand a bit on what I have written so far. My first blog post, Configuring VXLAN, covered the steps required to set it up in your vSphere environment. As I received many questions about the physical requirements, I followed up with an article about exactly that, VXLAN Requirements. Now I am seeing more and more questions about where and when VXLAN would be a great fit, so let’s start with some VXLAN basics.

The first question that I would like to answer is what does VXLAN enable you to do?

In short, and I am trying to make it as simple as I possibly can here… VXLAN allows you to create a logical network for your virtual machines across different networks. More technically speaking, you can create a layer 2 network on top of layer 3. VXLAN does this through encapsulation. Kamau Wanguhu wrote some excellent articles about how this works, and I suggest you read them if you are interested (VXLAN Primer Part 1, VXLAN Primer Part 2). On top of that, I would also highly recommend Massimo’s Use Case article; there is some really useful info in there! Before we continue, I want to emphasize that you could potentially create 16 million networks using VXLAN. Compare this to the ~4000 VLANs and you understand why this technology is important for the software defined datacenter.
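The 16 million versus ~4000 comparison falls straight out of the header field sizes: the 802.1Q VLAN ID is 12 bits wide (with IDs 0 and 4095 reserved), while the VXLAN Network Identifier (VNI) is 24 bits wide, as defined in RFC 7348. A quick back-of-the-envelope sketch; the function names are just for illustration:

```python
# The 802.1Q VLAN ID is a 12-bit field; IDs 0 and 4095 are reserved.
VLAN_ID_BITS = 12
# The VXLAN Network Identifier (VNI) is a 24-bit field (RFC 7348).
VNI_BITS = 24


def usable_vlans() -> int:
    """Number of VLAN IDs available for networks (0 and 4095 excluded)."""
    return 2 ** VLAN_ID_BITS - 2


def vxlan_segments() -> int:
    """Number of distinct values a 24-bit VNI field can express."""
    return 2 ** VNI_BITS


if __name__ == "__main__":
    print(f"usable VLAN IDs:   {usable_vlans():,}")    # 4,094
    print(f"VXLAN segment IDs: {vxlan_segments():,}")  # 16,777,216
```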

Where does VXLAN fit in and where doesn’t it (yet)?

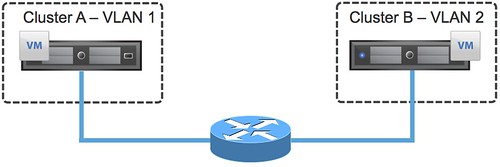

First of all, let’s start with a diagram.

For the VM in Cluster A, which uses “VLAN 1” for its virtual machine network, to talk to the VM in Cluster B (using VLAN 2), a router is required. This by itself is not overly exciting, and typically everyone will be able to implement it using a router or a layer 3 switching device. In my example I have 2 hosts per cluster just to simplify the picture, but imagine this being a huge environment; that is exactly why many VLANs are created, to restrict the failure domain / broadcast domain. But what if I want VMs in Cluster A to be in the same domain as the VMs in Cluster B? Would I go around and start plumbing all my VLANs to all my hosts? Just imagine how quickly that would get complex. So how would VXLAN solve this?

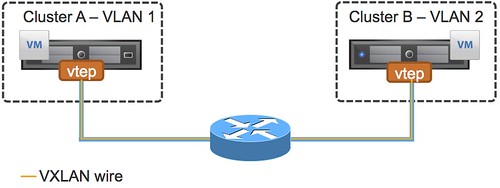

Again, diagram first…

Now you can see a new component in there, in this case labeled “vtep”. This stands for VXLAN Tunnel Endpoint. As Kamau explained in his post, and I am going to quote him here as it is spot on…

The VTEPs are responsible for encapsulating the virtual machine traffic in a VXLAN header as well as stripping it off and presenting the destination virtual machine with the original L2 packet.
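To make the VTEP’s job a bit more concrete, here is a minimal sketch of the encapsulate/strip step for the 8-byte VXLAN header, assuming the header layout from RFC 7348 (an 8-bit flags field with the I bit set, reserved bits, a 24-bit VNI, and a trailing reserved byte). The function names and the VNI 5001 are hypothetical, and the outer Ethernet, IP and UDP headers a real VTEP adds are left out; only their size is counted, assuming IPv4 without an outer VLAN tag:

```python
import struct

VXLAN_FLAG_VNI_VALID = 0x08  # "I" flag: the VNI field is valid (RFC 7348)
VXLAN_HEADER_LEN = 8

# Bytes a VTEP adds around each inner frame: outer Ethernet + IPv4 + UDP + VXLAN.
OUTER_OVERHEAD = 14 + 20 + 8 + VXLAN_HEADER_LEN  # = 50


def vxlan_encapsulate(vni: int, inner_frame: bytes) -> bytes:
    """Prepend an 8-byte VXLAN header to the original L2 frame.

    Layout: flags (1 byte) | reserved (3 bytes) | VNI (3 bytes) | reserved (1 byte).
    """
    if not 0 <= vni < 2 ** 24:
        raise ValueError("VNI must fit in 24 bits")
    # Flags go in the top byte of the first 32-bit word, the VNI in the
    # top 24 bits of the second word (network byte order).
    header = struct.pack("!II", VXLAN_FLAG_VNI_VALID << 24, vni << 8)
    return header + inner_frame


def vxlan_decapsulate(payload: bytes) -> tuple[int, bytes]:
    """Strip the VXLAN header and return (vni, original L2 frame)."""
    if len(payload) < VXLAN_HEADER_LEN:
        raise ValueError("payload shorter than a VXLAN header")
    word0, word1 = struct.unpack("!II", payload[:VXLAN_HEADER_LEN])
    if not (word0 >> 24) & VXLAN_FLAG_VNI_VALID:
        raise ValueError("I flag not set; VNI is not valid")
    return word1 >> 8, payload[VXLAN_HEADER_LEN:]


if __name__ == "__main__":
    frame = b"\x00" * 64  # placeholder inner L2 frame
    packet = vxlan_encapsulate(5001, frame)
    vni, inner = vxlan_decapsulate(packet)
    print(vni, inner == frame, OUTER_OVERHEAD)  # 5001 True 50
```

That 50-byte overhead is why the transport network between VTEPs needs an MTU of at least 1550 bytes if the VMs keep the standard 1500-byte MTU.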

This allows you to create a new network segment, layer 2 over layer 3. But what if you have multiple VXLAN wires? How does a VM in VXLAN Wire A communicate with a VM in VXLAN Wire B? Traffic will flow through an Edge device, vShield Edge in this case, as you can see in the diagram below.
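A simplified model of this forwarding decision, as a hypothetical sketch: VMs on the same wire reach each other over the VTEP tunnel (or locally when they share a host), while VMs on different wires are routed through the Edge, which acts as the default gateway for both wires. The `VM` type, the `path` function and the host/Edge names are made up for illustration and do not correspond to any vShield API:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VM:
    name: str
    wire: int  # VNI of the VXLAN wire the VM is connected to (hypothetical)
    host: str  # host the VM runs on (hypothetical)


def path(src: VM, dst: VM, edge: str) -> list[str]:
    """Return the hops a frame takes from src to dst in this simplified model."""
    if src.wire == dst.wire:
        if src.host == dst.host:
            # Same wire, same host: switched locally, no encapsulation needed.
            return [src.name, dst.name]
        # Same wire, different hosts: source VTEP encapsulates, destination VTEP strips.
        return [src.name, f"vtep@{src.host}", f"vtep@{dst.host}", dst.name]
    # Different wires: routed by the Edge, the default gateway of both wires.
    return [src.name, f"edge:{edge}", dst.name]


if __name__ == "__main__":
    web = VM("web01", wire=5001, host="esx01")
    app = VM("app01", wire=5001, host="esx02")
    db = VM("db01", wire=5002, host="esx01")
    print(path(web, app, "edge01"))  # ['web01', 'vtep@esx01', 'vtep@esx02', 'app01']
    print(path(web, db, "edge01"))   # ['web01', 'edge:edge01', 'db01']
```

Note that `web01` and `db01` share a host, yet their traffic still detours through the Edge because they are on different wires; that detail matters for the stretched-cluster discussion that follows.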

So how about applying this cool new VXLAN technology to an SRM infrastructure or a Stretched Cluster infrastructure? Well, there are some caveats and constraints (right now) that you will need to know about; some of you might have already spotted one in the previous diagram. These questions have come up multiple times, hence the reason I want to get this out in the open.

- In the current version you cannot “stitch” VXLAN wires together across multiple vCenter Servers, or at least this is not supported.

- In a stretched cluster environment a VXLAN implementation could lead to traffic tromboning.

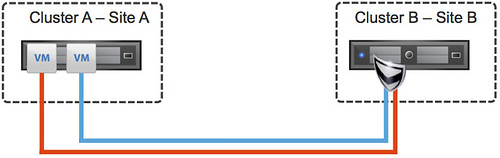

So what do I mean by traffic tromboning? (It is also explained in this article by Omar Sultan.) Traffic tromboning means that traffic could potentially flow between sites because of where a vShield Edge device is placed. Let’s depict it to make it clear. I stripped this down to the bare minimum, leaving VTEPs, VLANs etc. out of the picture, as it is complicated enough.

In this scenario we have two VMs, both sitting in Site A, and in Cluster A to be more specific… even on the same host! When these VMs want to communicate with each other, they need to go through their Edge device, as they are on different wires, represented by different colors in this diagram. However, the Edge device sits in Site B. This means that for these VMs to talk to each other, traffic flows to the Edge device in Site B and then comes back to Site A, to the exact same host. Yes indeed, there could be an overhead associated with that. With two VMs that is probably minor; with 1000s of VMs it could be substantial. Hence the reason I wouldn’t recommend it in a Stretched environment.
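The cost of tromboning is easy to reason about with a little arithmetic. The sketch below is a hypothetical model, not a measurement tool: it assumes every routed flow (VMs on different wires) travels from the source to the Edge and then to the destination, and counts how many times each flow crosses the inter-site link. Bridged flows (same wire) only cross when the two VMs sit in different sites. The site names and traffic volumes are made up:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Flow:
    src_site: str
    dst_site: str
    same_wire: bool  # True if both VMs are on the same VXLAN wire
    mbytes: float    # traffic volume of this flow in MB (hypothetical)


def inter_site_mb(flows: list[Flow], edge_site: str) -> float:
    """Total MB crossing the inter-site link, assuming routed flows hairpin through the Edge."""
    total = 0.0
    for f in flows:
        if f.same_wire:
            # Bridged over VXLAN: crosses only if the VMs are in different sites.
            crossings = int(f.src_site != f.dst_site)
        else:
            # Routed: src -> Edge and Edge -> dst, each leg may cross the link.
            crossings = int(f.src_site != edge_site) + int(edge_site != f.dst_site)
        total += crossings * f.mbytes
    return total


if __name__ == "__main__":
    # 1000 flows between VMs in Site A on different wires, 10 MB each.
    flows = [Flow("A", "A", same_wire=False, mbytes=10.0)] * 1000
    print(inter_site_mb(flows, edge_site="B"))  # 20000.0 (every MB crosses twice)
    print(inter_site_mb(flows, edge_site="A"))  # 0.0 (Edge local to the VMs)
```

The same 10 GB of VM-to-VM traffic turns into 20 GB on the inter-site link purely because of where the Edge sits, which is the overhead described above.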

Before anyone asks though: yes, VMware is fully aware of these constraints and caveats and is working very hard towards solving them, but for now… I personally would not recommend using VXLAN for SRM or Stretched Infrastructures. So where does it fit?

A few have already been mentioned in this post, but let’s recap. First and foremost, the software defined datacenter. Being able to create new networks on the fly (for instance through vCloud Director or vCenter Server) adds a level of flexibility which is unheard of. Also, environments that are closing in on the 4000 VLAN limit (and on some platforms the limit is even lower). Another fit is sites where each cluster has its own set of VLANs assigned, which are not shared across clusters, yet there is a requirement to place VMs across clusters in the same segment.
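Creating networks on the fly largely comes down to handing out an unused segment ID (VNI) for each new network and returning it to the pool when the network is deleted. As a hypothetical sketch, assuming a pool configured with the arbitrary range 5000-5999 (the class and method names are made up and are not the vCloud Director or vShield Manager API):

```python
class SegmentIdPool:
    """Hypothetical allocator handing out VNIs from a configured range."""

    def __init__(self, first: int, last: int) -> None:
        if not 0 <= first <= last < 2 ** 24:
            raise ValueError("range must lie within the 24-bit VNI space")
        # Used as a stack: the lowest ID is handed out first, and
        # released IDs are reused before untouched ones.
        self._free = list(range(last, first - 1, -1))
        self._in_use: dict[str, int] = {}

    def create_network(self, name: str) -> int:
        """Assign a free VNI to a new network and return it."""
        if name in self._in_use:
            raise ValueError(f"network {name!r} already exists")
        if not self._free:
            raise RuntimeError("segment ID pool exhausted")
        vni = self._free.pop()
        self._in_use[name] = vni
        return vni

    def delete_network(self, name: str) -> None:
        """Release the network's VNI back to the pool."""
        self._free.append(self._in_use.pop(name))


if __name__ == "__main__":
    pool = SegmentIdPool(5000, 5999)
    print(pool.create_network("tenant-a-web"))  # 5000
    print(pool.create_network("tenant-a-db"))   # 5001
    pool.delete_network("tenant-a-web")
    print(pool.create_network("tenant-b-web"))  # 5000 (reused)
```

Because any range inside the 24-bit VNI space is valid, the pool size is a configuration choice rather than the hard ~4000 ceiling that VLANs impose.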

I hope this helps…

Duncan,

I am not sure that VXLAN provides any benefit over solutions like Midokura, Nicira, or even vCDNI, especially given Midokura’s distributed firewall capabilities, which would allow for local host routing and firewalling.

Has VXLAN’s time already come and gone?

I can’t say much about Midokura or Nicira, as they don’t integrate with vCD at the moment and I have not looked into them.

With regards to vCD-NI, the main difference is that VXLAN is backed by major network vendors and allows for load distribution and higher scale. Also, from a physical network integration point of view it is different, because the network vendors are working with VMware on this.

Donny, Nicira can (potentially) use VXLAN as a tunnelling protocol (today it does not); today they use STT. It’s not Nicira vs. VXLAN. This is part of a convergence story we are just not ready yet to describe in detail.

FYI, vCDNI only allows multiplexing on a single VLAN. VXLAN, on top of the vCDNI use case, allows you to create a vWire on top of 2+ VLANs (vCDNI can’t do that).

I don’t know much about Midokura so I can’t comment.

It’s always hard in these discussions to say what’s “better”.

When you look at these things there are many angles. The first angle is which technology is technically better, the second is how viable that technology is. From a very “pure” technology standpoint everyone should be using a mainframe (undisputedly the best technology on the market), yet it’s dying.

Similarly one could argue that Microsoft has never been the “best” technology around, yet they conquered the world.

My 2 cents.

Good post, dude (and thanks for the link). Certainly, within a DC, VXLAN is a no-brainer, at least in pure VMware environments. For better or for worse, inter-DC the answer still ends up being “it depends”, so posts like these are great for helping folks make informed decisions.

Regards,

Omar (@omarsultan)

Cisco

Thanks Duncan – sorry for all the ambiguous questions!

Thanks for these wonderfully easy explanations, they help a lot.

Merry Christmas and Happy New Year to you Duncan.

Hi Duncan,

I am currently doing a Proof of Concept for vCloud. VXLAN is a very good feature which removes the VLAN restriction and allows you to scale out. However, for our current production setup we do not need VXLAN. We are convinced that, due to the critical data we handle, we need the best network performance, so we definitely need VLAN-backed networks. Is this possible without VXLAN?

FYI, I am using the VMware vCloud Director 5.1 Evaluation Guide for my setup and have skipped the VXLAN configuration on page 40. On my test (POC) deployment I am getting an error:

{Ensure that infrastructure is prepared in VSM before creating or modifying VXLAN network pool. Cluster has not been prepared or mapped to a vDS in VSM.}

which is because I have not prepared my host for VXLAN.

I would appreciate it if you could point me in the right direction to get vCloud working without VXLAN.

Thanks

Ibrahim (@ibrahimquraishi)