At a customer site we received several notifications of performance issues with a VMware VI3 environment. After checking the configuration of the VMs and the hosts, we decided to dive into esxtop. At first sight we did not see any abnormalities: low %RDY, which is usually the first thing I check, and some swapping, but not enough to cause any major delays. The weird thing about this one was that the VM only seemed to feel sluggish when IP traffic was being sent or received. As we could not reproduce the issue on demand, we decided to run esxtop in batch mode and use esxplot and perfmon to get to the bottom of it. Soon we found the issue: received packets were being dropped at the vSwitch level.

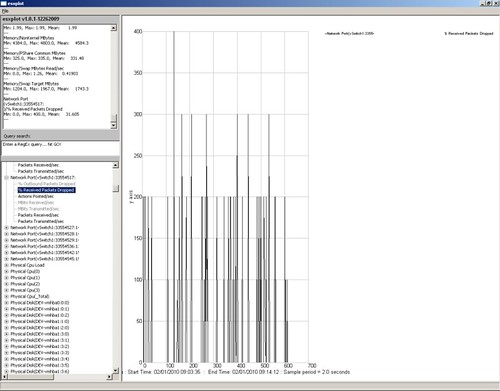

The screenshot above depicts the symptoms.

In other words, at times an enormous number of received packets were dropped. After some research I found an article that describes this behavior (http://kb.vmware.com/kb/1010071). We tried increasing the buffer size for the E1000 virtual network adapter this VM was configured with, but that did not resolve the issue. As other drivers were mentioned in the article, we decided to “upgrade” the NIC to a “vmxnet” NIC, and this actually resolved the issue. Although performance is not yet where we expected it to be, we are no longer seeing any dropped packets and can focus on the next possible cause.
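For anyone who wants to script the same check rather than eyeball it in esxplot or perfmon, here is a minimal sketch. It assumes the esxtop batch-mode output (`esxtop -b`) was saved as perfmon-style CSV, with the sample timestamp in the first column, and that the receive drop counters carry the text `% Received Packets Dropped` in their column headers. That counter name, the function name `find_rx_drops` and the sample data are illustrative assumptions, so check them against the headers in your own capture.

```python
import csv
import io

# Assumption: the receive drop counters contain this text in the column
# header of the esxtop batch-mode CSV. Verify against your own capture.
DROP_COUNTER = "% Received Packets Dropped"


def find_rx_drops(csv_text, threshold=0.0, counter=DROP_COUNTER):
    """Return (timestamp, column, value) for every sample above threshold."""
    reader = csv.reader(io.StringIO(csv_text))
    header = next(reader)
    # Column 0 holds the sample timestamp; keep only the matching counters.
    wanted = [i for i, name in enumerate(header) if i > 0 and counter in name]
    hits = []
    for row in reader:
        for i in wanted:
            try:
                value = float(row[i])
            except (IndexError, ValueError):
                continue  # skip truncated rows and non-numeric cells
            if value > threshold:
                hits.append((row[0], header[i], value))
    return hits


# Made-up sample in the assumed layout, for illustration only.
SAMPLE = "\n".join([
    r'"(PDH-CSV 4.0) (UTC)(0)","\\esx01\Network Port(vSwitch0:vm01)\% Received Packets Dropped","\\esx01\Network Port(vSwitch0:vm01)\% Outbound Packets Dropped"',
    '"03/01/2010 10:00:00","0.00","0.00"',
    '"03/01/2010 10:00:05","12.50","0.00"',
    '"03/01/2010 10:00:10","0.00","3.00"',
])

if __name__ == "__main__":
    for timestamp, column, value in find_rx_drops(SAMPLE):
        print(f"{timestamp}  {value:6.2f}  {column}")
```

To run it against a real capture, read the file contents and pass them in place of `SAMPLE`. Raising `threshold` filters out the occasional small drop so that only the large bursts show up.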

I came across this a little while ago. The customer I was working for had P2V’d all their servers, and they were all W2K. I hadn’t used esxplot though, so thanks for that.

As a complete guess at the ongoing performance issues I would take a punt on storage performance.

I am having this exact issue with a customer running the Cisco Nexus 1000v. They are experiencing receive packet loss on VMs even after Cisco recommended that we upgrade all the VMs to VMXNET3. We are still working on the issue and have yet to resolve it.

Try going into the driver properties of the NIC and turning off large TCP offload when using the E1000 driver. We had some blade servers where doing this fixed the problem.

Could it be that the ‘pauses’ you see there are due to the Flow Control mechanism pausing frames? I may be wrong since I can’t read the screenshot clearly 🙂

If you guys are having issues with the 1000v and any type of packet loss, have a look through the Cisco 1000v community board. There are numerous resources from previous customers with similar issues. This service is free on a “best-effort” basis. Feel free to post your issues; provide enough detail and we’ll be more than happy to assist you.

https://www.myciscocommunity.com/community/products/nexus1000v?view=discussions

Regards,

Robert

Cisco Systems

Hi Robert,

We are actively working with Cisco support, and speaking with your colleague Justin W. as I type this. We are going to involve VMware next to see whether it is perhaps a driver issue and whether the vEths are possibly disconnecting from the 1000v.

After many hours back and forth with Cisco, the thread below explains the problem and the fix. It seems to be a known issue out there with IGMP snooping and multicasting. The symptoms are randomly losing packets to multiple VMs at the same time across different ESX hosts. If you are experiencing issues with the 1000v, I suggest mentioning the Cisco Bug ID CSCte44240 to the TAC. From there they should have a better idea of how to help you.

https://www.myciscocommunity.com/thread/6271?tstart=0

Thanks!

I encountered the same issue a couple of months ago. The E1000 was dropping packets on Windows Vista/7, Windows Server 2008 and Solaris 10 VMs. Windows Server 2003 was unaffected by the issue.

If IPv6 was disabled on Vista/7/2008, the %DRPRX value went down to zero. We still experienced sluggish network performance though. IPv6 settings did not have an effect on Solaris.

VMware Support gave some vague explanations of the cause. First, esxtop was “not always accurate”, which seemed a bit strange to me. Second, we should change the adapter to vmxnet2 or vmxnet3. That solved it.

Note that the VMs were created on ESX 3.5, where E1000 was the default adapter, and then upgraded to vSphere and VM version 7.

Hi, I’m using a vmxnet3 adapter on ESX 4.1 but I don’t see the multicast packets.

Can you help me?

Thanks

I have

> ESXi 4.1

> windows 2008 R2 64 bit guest os

> connected to procurve 2510G switch

> NIC speed set to 1000 – FULL on guest side

> NIC is E1000 on guest

> large tcp offload is off on the guest

> Port set to AUTO-1000 on switch

> I get the following error when I try to backup the server over the switch

network error

While transmitting or receiving data, the server encountered a network error. Occasional errors are expected, but large amounts of these indicate a possible error in your network configuration. The error status code is contained within the returned data (formatted as Words) and may point you towards the problem.

Can anyone please help? Thanks.

@Rob thanks for your reference to the IGMP bug in the Cisco Nexus 1000V. I had the same problem with intermittent packet loss and disabling IGMP seems to have solved the problems.

Cheers!

Make sure there is no duplicate IP address. In our case, despite all the above “fixes”, the same issue turned out to be related to a non-responding duplicate IP address on another VM host.

Hi.

Same issue here. It happened with old Red Hat 5.2/4.2/4.4 machines: when you P2V them, they inherit the e1000 driver.

Nagios would complain all night about these boxes not responding. When I changed the driver to vmxnet3, the packet loss became smaller but did not disappear completely.

I did not detect any packet loss in recently built VMs with a modern OS (CentOS 5.8 and above, with whatever kernel they come with by default).

I’ve been dealing with this issue for a while; I hope this helps somebody.

I tried all the tricks above (besides downing the server and switching to a VMXNET adapter) and none worked, but I did find a workaround. In my tests with Debian Wheezy using the E1000 adapter on ESXi 5.1, you can actually exceed the NIC speed when both VMs are on the same host: iperf tests exceeded 6Gb/s after tweaking some settings. I would have thought that would help, but in reality it made the dropped packets much worse! Only once I moved one of the VMs in the test to another host was it forced to transfer at 1Gb/s or less, which led to 0 dropped received packets. I have a ticket open with VMware for this as it makes nooooooooo sense.