Two weeks ago I discussed how to determine the correct LUN/VMFS size. In short it boils down to the following formula:

roundup((maxVMs * avgSize) + 20%)

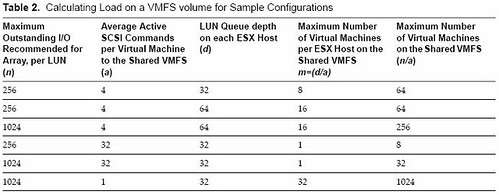

In other words: the maximum number of virtual machines per volume, multiplied by the average size of a virtual machine, plus 20% for snapshots and .vswp files, rounded up. (As pointed out in the comments, if you have VMs with large amounts of memory you will need to adjust the percentage accordingly, because each VM’s .vswp file is as large as its configured memory minus any memory reservation.) This should be your default VMFS size. A question that came up in one of the comments, which I already expected, was “how do I determine the maximum number of VMs per volume?”. There’s an excellent white paper on this topic. Of course there’s more to it than meets the eye, but based on this white paper, and especially the following table, I decided to give it a shot:
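As a minimal sketch, here is the formula in Python. The function name `vmfs_size_gb` is hypothetical, and it assumes sizes in whole GB and the overhead as an integer percentage (default 20%). It rounds up to the next whole GB; you would still round further to whatever standard LUN size you use.

```python
def vmfs_size_gb(max_vms: int, avg_vm_size_gb: int, overhead_pct: int = 20) -> int:
    """Default VMFS size: (max VMs * average VM size) + overhead, rounded up.

    overhead_pct covers snapshots and .vswp files; raise it for VMs with
    large amounts of memory, since .vswp grows with configured memory.
    """
    base_gb = max_vms * avg_vm_size_gb
    # Integer ceiling of base_gb * (100 + overhead_pct) / 100,
    # which avoids floating-point rounding surprises.
    return (base_gb * (100 + overhead_pct) + 99) // 100


print(vmfs_size_gb(16, 30))  # 576
```

For example, 16 VMs averaging 30 GB (made-up numbers) give 480 GB, plus 20%, which is 576 GB.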

No matter what I tried typing up, and believe me I started over a billion times, it all came down to this:

- Decide your optimal queue depth.

I could do a write-up, but Frank Denneman wrote an excellent blog post on this topic. Read his post, and read NetApp’s Nick Triantos’ article as well. But in short, you’ve got two options:

- Queue Depth = (Target Queue Depth / Total number of LUNs mapped from the array) / Total number of hosts connected to a given LUN

- Queue Depth = LUN Queue Depth / Total number of hosts connected to a given LUN

There are two options because some vendors specify a Target Queue Depth and others specifically specify a LUN Queue Depth. If they mention both, just take the most restrictive one.
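The two options, plus the “take the most restrictive one” rule, can be sketched in Python as follows. The helper `per_host_queue_depth` and its parameter names are hypothetical; it assumes results are rounded down to whole commands, which keeps the estimate conservative.

```python
from typing import Optional


def per_host_queue_depth(hosts_per_lun: int,
                         lun_queue_depth: Optional[int] = None,
                         target_queue_depth: Optional[int] = None,
                         luns_on_array: Optional[int] = None) -> int:
    """Queue depth per host, using whichever vendor limits are known.

    Option 1: (target queue depth / LUNs mapped from the array) / hosts per LUN
    Option 2: LUN queue depth / hosts per LUN
    If both are known, the most restrictive (smallest) value wins.
    """
    candidates = []
    if target_queue_depth is not None and luns_on_array is not None:
        # Option 1: floor division at each step, i.e. round down.
        candidates.append(target_queue_depth // luns_on_array // hosts_per_lun)
    if lun_queue_depth is not None:
        # Option 2
        candidates.append(lun_queue_depth // hosts_per_lun)
    if not candidates:
        raise ValueError("need a LUN queue depth, or a target queue depth plus LUN count")
    return min(candidates)


print(per_host_queue_depth(8, lun_queue_depth=1024,
                           target_queue_depth=4096, luns_on_array=8))  # 64
```

With 8 hosts per LUN, a target queue depth of 4096 spread over 8 LUNs, and a LUN queue depth of 1024 (all made-up numbers), option 1 gives 64 and option 2 gives 128, so 64 is the value to use.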

- Now that you know what your queue depth should be, let’s figure out the rest.

Let’s take a look at the table first. I added “mv” as it was not labeled as such in the table.

- n = LUN Queue Depth
- a = Average active SCSI commands per server (i.e., per VM)
- d = Queue Depth (from a host perspective)
- m = Max number of VMs per ESX host on a single VMFS volume
- mv = Max number of VMs on a shared VMFS volume

First let’s figure out what “m”, the max number of VMs per host on a single volume, should be:

- d/a = m

Queue depth of 64 / 4 active I/Os on average per VM = 16 VMs per host on a single VMFS volume

The second one is “mv”, the max number of VMs on a shared VMFS volume:

- n/a = mv

LUN Queue Depth of 1024 / 4 active I/Os on average per VM = 256 VMs in total on a single VMFS volume shared by multiple hosts
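Both calculations follow the same pattern, so a small Python sketch can return “m” and “mv” together. The function `vm_limits` is a hypothetical helper, and rounding down to whole VMs is a choice made here so that neither limit gets exceeded.

```python
from typing import Tuple


def vm_limits(d: int, n: int, a: float) -> Tuple[int, int]:
    """Return (m, mv) using the table's definitions.

    d: queue depth from a host's perspective
    n: LUN queue depth
    a: average active SCSI commands per VM
    m  = d / a -> max VMs per ESX host on a single VMFS volume
    mv = n / a -> max VMs on a shared VMFS volume (all hosts together)
    Both are rounded down to whole VMs.
    """
    if a <= 0:
        raise ValueError("a must be positive")
    return int(d // a), int(n // a)


m, mv = vm_limits(d=64, n=1024, a=4)
print(m, mv)  # 16 256
```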


- Now that we know “d”, “m” and “mv”, it should be fairly easy to give a rough estimate of the maximum number of VMs per LUN, provided you know your average number of active I/Os. I know this will be your next question, so here’s my tip of the day:
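The final combination is left to the reader. One reasonable reading, and it is an assumption rather than something the table states, is that a shared LUN is bounded both by “mv” and by “m” times the number of hosts sharing it, whichever is lower. A Python sketch with a hypothetical helper `max_vms_per_lun`:

```python
def max_vms_per_lun(m: int, mv: int, hosts_sharing_lun: int) -> int:
    """Rough ceiling on VMs per LUN.

    Assumption: each host can place at most m VMs on the volume, and all
    hosts together at most mv, so the tighter of the two limits applies.
    """
    return min(mv, m * hosts_sharing_lun)


print(max_vms_per_lun(16, 256, 8))   # 128: per-host limit dominates
print(max_vms_per_lun(16, 256, 32))  # 256: LUN-wide limit dominates
```

The result is what you would plug in as maxVMs in the sizing formula at the top of this post.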

Windows perfmon – average disk queue length. This contains both active and queued commands.

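For Linux, use “iostat -x” (the avgqu-sz or aqu-sz column, depending on the sysstat version), and if you are already running a virtual environment, open up esxtop and take a look at “qstats” (the ACTV and QUED counters).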

Another option, of course, is running VMware Capacity Planner.
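To turn those measurements into “a”, one simple approach is to average the per-VM values and round up. This is a sketch with a hypothetical helper `estimate_a`; because perfmon’s average disk queue length counts queued as well as active commands, the result errs on the high, conservative side.

```python
import math
from statistics import mean
from typing import Sequence


def estimate_a(per_vm_avg_queue_length: Sequence[float]) -> int:
    """Estimate 'a' (average active SCSI commands per VM).

    Input: one averaged queue-length measurement per VM, e.g. from perfmon,
    iostat or esxtop. Rounding up keeps the estimate conservative.
    """
    if not per_vm_avg_queue_length:
        raise ValueError("need at least one measurement")
    return math.ceil(mean(per_vm_avg_queue_length))


print(estimate_a([2.5, 4.0, 3.1, 5.2]))  # 4
```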

Please don’t overthink this. If you run into issues, there are always ways to move VMs around; that’s why VMware invented Storage VMotion. Standardize your environment for ease of management, and make sure you feel comfortable with the number of “eggs in one basket”.

There actually is a literal maximum: 3011, which is obviously stupidly high 🙂

Your logic is right on.

Per the norm, great post! VM-to-datastore density can be a huge challenge if you have >100 VMs. In addition, the number of datastores one has can impact other areas of operations, including storage provisioning, D2D backup, and data replication.

I guess there’s no free lunch. If the storage architecture is hamstrung by a shallow queue depth, then it must be taken into consideration in the architecture design.

Once you get your NetApp systems set up, maybe you can repeat this discussion considering NFS as the storage protocol for accessing general-purpose shared storage pools.

Cheers!

Just for the record, 3011 is not a literal maximum. But now that the cat is out of the bag: it is the maximum number we tried at the time of releasing ESX 3.0.0, before we ran out of hardware and interest (and it was getting really late for dinner).

LUN != VMFS. What if you use multiple LUNs and extents to create one VMFS?

There are cases where this would be beneficial, for instance a Lab Manager environment, where linked clones can only be created within one VMFS.