I posted about HA/DRS settings for VSAN Stretched Clustering yesterday and posted an intro to 6.1 and all new functionality which includes stretched clustering. As part of our VMworld session Rawlinson Rivera recorded a nice demo. We figured we should share it with the world, so I added the voice-over so at least it is clear what you are looking at and why certain things are configured in a specific way. I hope this demo shows how dead simple it is to configure VSAN stretched clustering, and how it handles a full site failure. Enjoy,


HA/DRS configuration with Virtual SAN Stretched Cluster environment

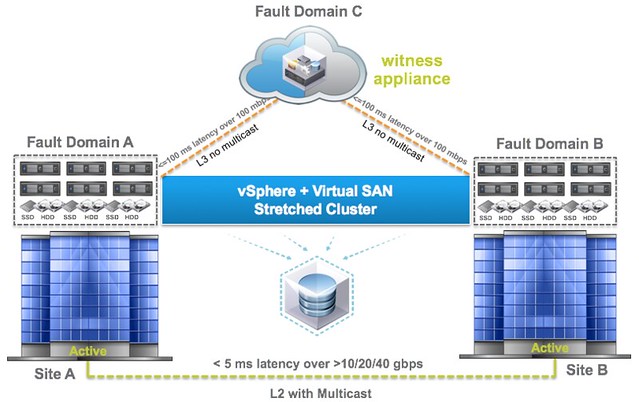

Sooner or later this question is going to come up: how do I configure HA/DRS when I am running a Virtual SAN stretched cluster configuration? I described some of the basics of Virtual SAN stretched clustering in a what’s new for 6.1 post already; if you haven’t read it then I urge you to do so first. There are a couple of key things to know. First of all, the latency that can be tolerated between the data sites is 5ms, and to the witness location ~100ms.

If you look at the picture you can imagine that when a VM sits in Fault Domain A and is reading from Fault Domain B it could incur a latency of 5ms for each read IO. From a performance perspective we would like to avoid this 5ms latency, so for stretched clusters we introduce the concept of read locality. We don’t have this in a non-stretched environment, as there the latency is microseconds and not milliseconds. Now this “read locality” is something we need to take into consideration when we configure HA and DRS.

[Read more…] about HA/DRS configuration with Virtual SAN Stretched Cluster environment

Frequently asked questions about Virtual SAN / VSAN

After I published the vSphere Flash Read Cache FAQ many asked if I would also do a blog post for frequently asked questions about Virtual SAN / VSAN. I guess it makes sense considering Virtual SAN / VSAN being such a hot topic. So here are the questions I have received so far, followed by the answers of course. If you have a question do not hesitate to leave a comment.

** updated to reflect VSAN GA **

- Can I add a host to a VSAN cluster which does not have local disks?

- Yes, a VSAN cluster can consist of hosts which are not contributing to VSAN storage. You will need to create a VSAN VMkernel interface on that host and simply add the host to the cluster. Note that you will need at a minimum 3 hosts which contribute storage to VSAN.

- VSAN requires an SSD, what is it used for?

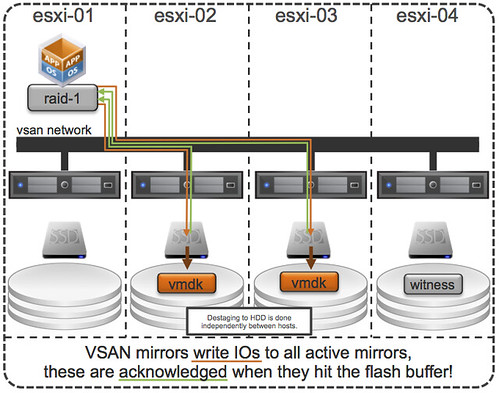

- The SSD is used for read caching (70%) and write buffering (30%). Every write will go to SSD first and will be destaged to HDD later.

- When creating my VSAN VM Storage Policy, when do I use “failures to tolerate” and when do I use “stripe width”?

- Failures to tolerate is all about availability. This is what you define when your virtual machine needs to remain available when a host or disk group has failed. So if you want to take 1 host failure into account, you set the policy to 1. This will then create 2 data objects and 1 witness in your cluster. Stripe width is about performance (read performance when not in cache and write destaging). Setting it to two or higher will result in data being striped across multiple disks. When used in conjunction with “failures to tolerate” this could potentially result in data of a single VM being stored on multiple disks on multiple hosts.

- Is there a default storage policy for VSAN?

- Yes there is a policy applied by default to all VMs on a VSAN datastore but you cannot see this policy within the vSphere UI. You can see that a default policy is defined to various classes using the following command: esxcli vsan policy getdefault. By default an N+1 failures to tolerate policy is applied so that even in the case where user forgets to create and set a policy objects are made resilient. It is not recommended to change the default policy.

- How is data striped across multiple disks on a host when stripe width is set to 2?

- When stripe width is set to 2, first of all there is no guarantee that the data is striped across disks within a host. VSAN has its own algorithm to determine where data should be placed, and as such it could happen that, although you have sufficient disks in all hosts, your data is striped across multiple hosts instead of disks within a host. When data is striped this is done in chunks of 1MB.
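To make the 1MB chunking a bit more tangible, here is a minimal Python sketch. It assumes a plain round-robin layout across the stripe components, which is purely an illustration: VSAN decides placement itself and its real algorithm is not described here. The function name `stripe_for_offset` is made up for this example.

```python
CHUNK_SIZE = 1024 * 1024  # striping happens in 1MB chunks, as stated above


def stripe_for_offset(offset_bytes, stripe_width):
    """Return the index of the stripe component holding a byte offset.

    Assumption: chunks are handed out round-robin across the stripe
    components. This is an illustrative model, not VSAN's real placement.
    """
    if stripe_width < 1:
        raise ValueError("stripe width must be at least 1")
    if offset_bytes < 0:
        raise ValueError("offset must not be negative")
    chunk_index = offset_bytes // CHUNK_SIZE  # which 1MB chunk the offset is in
    return chunk_index % stripe_width         # which component holds that chunk
```

With a stripe width of 2 the first megabyte maps to component 0, the second to component 1, the third to component 0 again, and so on. Where those components physically live (same host or different hosts) is up to VSAN.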

- What is the purpose of “disk groups” since VSAN will create one datastore anyway?

- A disk group defines the SSD that is used for caching/buffering in front of a set of HDDs. Basically a disk group is a way of mapping HDDs to an SSD. Each disk group will have 1 SSD and a maximum of 7 HDDs.

- How many disks can a single host contribute to VSAN?

- A maximum of 5 disk groups per host

- Each disk group needs 1 SSD and 1 HDD at a minimum and 7 HDDs at a maximum

- HDD count max per host = 5 x 7 = 35

- SSD count max per host = 5 x 1 = 5
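The limits above are easy to check in a few lines of Python. This is just a sketch of the arithmetic; the function name `validate_host_layout` and the idea of describing a host as a list of HDD counts per disk group are my own for illustration.

```python
MAX_DISK_GROUPS_PER_HOST = 5
MIN_HDDS_PER_DISK_GROUP = 1
MAX_HDDS_PER_DISK_GROUP = 7
SSDS_PER_DISK_GROUP = 1


def validate_host_layout(hdds_per_group):
    """Check a host layout against the limits and return (ssd_count, hdd_count).

    hdds_per_group is a list with one entry per disk group, holding the
    number of HDDs in that group (hypothetical input format).
    """
    if len(hdds_per_group) > MAX_DISK_GROUPS_PER_HOST:
        raise ValueError("a host can have at most 5 disk groups")
    for hdds in hdds_per_group:
        if not MIN_HDDS_PER_DISK_GROUP <= hdds <= MAX_HDDS_PER_DISK_GROUP:
            raise ValueError("a disk group needs between 1 and 7 HDDs")
    ssd_count = len(hdds_per_group) * SSDS_PER_DISK_GROUP
    hdd_count = sum(hdds_per_group)
    return ssd_count, hdd_count
```

A fully populated host, `validate_host_layout([7, 7, 7, 7, 7])`, gives 5 SSDs and 35 HDDs, matching the maximums listed above.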

- Are both SSD and PCIe Flash cards supported?

- Yes both are supported but check the HCL for more details around this as there are guidelines and requirements

- Is 10GbE a hard requirement for VSAN?

- 10GbE is not a hard requirement for VSAN. VSAN works perfectly fine in smaller environments, including labs, with 1GbE. Do note that 10GbE is a recommendation.

- Why is it recommended for HA’s isolation response to be configured to “powered-off”?

- When VSAN is enabled vSphere HA uses the VSAN VMkernel network for heartbeating. When a host does not receive any heartbeats, it is most likely that the host is also isolated/partitioned from a VSAN perspective from the rest of the cluster. In this state it is recommended to power-off the virtual machine as a new copy will be powered-on by HA on the remaining hosts in the cluster automatically. This way when the host comes out of isolation the situation where 2 VMs with the same identity are on the network does not occur.

- Can I partition my SSD or disks so that I can use them for other (install ESXi / vFlash) purposes?

- No you cannot partition your SSD or HDD(s). Virtual SAN will only, and always, claim entire disks. With VSAN it probably makes most sense to install ESXi on an internal USB/SD card, this to maximize the capacity for VSAN.

- Does VSAN support deduplication or compression?

- In the current version VSAN does not support deduplication or compression. The most expensive resource in your VSAN cluster is SSD/Flash, hence duplication of data is most relevant on that layer. While having multiple copies of your data results in two copies on HDDs, and two temporary copies in the distributed write buffer (30% of the SSDs), the distributed read cache portion of the Flash (70%) will only contain a single copy of any cached data.

- Can VSAN leverage SAN/NAS datastores?

- VSAN currently does not support the use of SAN/NAS datastores. Disks will need to be “local” and directly passed to the host.

- I was told VSAN does thin disks by default, if I set Object Space Reservation to 100% does that mean the VMDK will be eager zero thick provisioned?

- No, defining Object Space Reservation does not mean the VM, or a portion of it, will be thick provisioned. Object Space Reservation is all about the numbers used by VSAN when calculating used disk space / available disk space etc. When Object Space Reservation is set to 100% on a disk of 25GB then this disk will be a thin provisioned disk, but VSAN will do its math with 100% used of 25GB. I guess you can compare it to a memory reservation.

- Does VSAN use iSCSI or NFS to connect hosts to the datastore?

- VSAN does not use either of these two to connect hosts to a datastore. It uses a proprietary mechanism.

- What is the impact of maintenance mode in a VSAN enabled cluster?

- There are three ways of placing a host which is providing storage to your VSAN datastore in maintenance mode:

1) Full Data Migration – All data residing on the host will be migrated. Impact: Could take a long time to complete.

2) Ensure accessibility – VSAN ensures that all VMs will remain accessible by migrating the required data to other hosts. Impact: Potentially availability policies are violated.

3) No Data Migration – No data will be migrated. Impact: Depending on the “failures to tolerate” policy defined some VMs might become unusable.

The safest option is option 1, with option 2 being the preferred and default as it is the fastest to complete. I guess the question is why you are placing the host in maintenance mode and how fast it will become available again. Option 3 is a fallback, in case you really need to get into maintenance mode fast and don’t care about potential data loss.

- Are there any features of vSphere which aren’t supported/compatible with VSAN?

- Currently vSphere Distributed Power Management, Storage DRS and Storage IO Control are not supported with VSAN.

- How do I add a Virtual SAN / VSAN license?

- VSAN licenses are applied on a cluster level. Open the Webclient click on your VSAN enabled cluster, click the “Manage” tab followed by “Settings”. Under “Configuration” click “Virtual SAN Licensing” and then click “Assign License Key”.

- How will Virtual SAN be priced / licensed?

- VSAN is licensed per socket, the price is $ 2495 per socket or $ 50,- per VDI user. Note that the license includes the Distributed Switch and VM Storage Policies, even when using a vSphere license lower than Enterprise Plus!

- If a host has failed and as such data is lost and all VMs were protected N+1, how long will it take before VSAN starts rebuilding the lost data?

- VSAN will identify which objects are out of compliance (those which had N+1 and were stored on that host) and start a time-out period of 60 minutes. It has a time-out period to avoid an unnecessary and costly full sync of data. If the host returns within those 60 minutes then only the differences will be copied to that host. When a VM has multiple mirrors it doesn’t notice the failure; this 60 minute period is all about going back to full policy compliance, i.e. being able to withstand additional failures should they occur.

- When a virtual machine moves around in a cluster will its objects follow to keep IO local?

- No, objects (virtual disks for instance) do not follow the virtual machine. Just imagine what the cost/overhead of moving virtual disks between hosts would be each time DRS suggests a migration. Instead IO can be done remotely. Meaning that although your virtual machine might run on host-1 from a CPU/Mem perspective, its virtual disks could be physically located on host-2 and host-3.

- When a Virtual Machine is migrated to another host, is the situation such that after a vMotion the SSD cache is lost (temporary performance hit) and the cache will be rebuilt over time?

- No, the cache will not be lost and there is no need to rebuild/warm the cache up again. Cache will be accessed remotely when needed.

- Does VSAN support Fault Tolerance aka FT?

- No, VSAN does not support Fault Tolerance in this release.

- The SSD in my host is being reported in vSphere as “non-SSD”. According to support this is a known issue with the generation of server I am using. Will this “mis-reporting” of the disk type affect my ability to configure a VSAN?

- Yes it will, you will need to tag the SSD as local using (example below is what I use in my lab, your identifier will be different). And in this case I claim it as being “local” and as “SSD”.

esxcli storage nmp satp rule add --satp VMW_SATP_LOCAL --device mpx.vmhba2:C0:T0:L0 --option "enable_local enable_ssd"

- It was mentioned that it will take 60 minutes after a failure before VSAN starts the automatic repair. Is it possible to shorten this time-out value?

- **disclaimer: Although I do not recommend changing this value, I was told it is supported**

Yes it is possible to shorten this time-out value by configuring the advanced setting named “VSAN.ClomRepairDelay” on every host in your VSAN cluster.

- Why can’t I use datastore heartbeat functionality in VSAN only cluster?

- There is no requirement for heartbeat datastores. The reason you do not have this functionality when you only have a VSAN datastore is because HA will use the VSAN network for heartbeats. So if a host is isolated from the VSAN network and cannot send heartbeats, it is safe to say that it will also not be able to update a heartbeat region remotely as such making it pointless to enable this feature in a VSAN only environment.

- Are there specific Best Practices around deploying View on VSAN?

- Yes there are, primarily around availability / caching and capacity reservations. Andre Leibovici wrote an article on this topic, read it!

- Can the VSAN VMkernel of hosts in a cluster be part of a different subnet?

- VSAN VMkernel interfaces need to be part of the same subnet. A different subnet for one (or multiple) hosts within a VSAN cluster is not supported. When using multiple VMkernel interfaces per host, each interface needs to be part of a different subnet!

- Does VSAN support being stretched across multiple geographical locations?

- In the current version VSAN will not support “metro” clustering.

- Is there a difference between a host failing and a disk gradually failing?

- Yes there is a difference. There are various failure states, and the state determines how fast VSAN will spin up a new mirror. The two failure states are “absent” and “degraded”. Degraded is where a disk has failed and the system has recognized this as such and knows it isn’t coming back. In this case VSAN recognizes this “degraded” state and will create a new mirror of the impacted objects immediately, as there is no point in waiting for 60 minutes when you know it isn’t coming back soon. The “absent” state means that VSAN doesn’t know if it is coming back any time soon. This could be a host that has failed or, for instance, a disk that you yanked; in this case the 60 minute time-out starts.
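The decision described above boils down to a very small rule. Here is a Python sketch of it; the enum and function names are invented for this illustration and are not VSAN identifiers, and the 60 minutes is simply the default time-out mentioned in this FAQ.

```python
from enum import Enum


class FailureState(Enum):
    ABSENT = "absent"      # VSAN does not know whether the component returns
    DEGRADED = "degraded"  # VSAN knows the component is not coming back


DEFAULT_REPAIR_DELAY_MINUTES = 60  # default time-out described in this FAQ


def minutes_until_rebuild(state, repair_delay_minutes=DEFAULT_REPAIR_DELAY_MINUTES):
    """Return how long VSAN waits before creating a new mirror.

    Illustrative model only: degraded means rebuild right away,
    absent means wait for the repair delay first.
    """
    if state is FailureState.DEGRADED:
        return 0
    if state is FailureState.ABSENT:
        return repair_delay_minutes
    raise ValueError("unknown failure state")
```

So a failed disk that is recognized as such results in `0` (rebuild immediately), while a failed host or a pulled disk results in `60` unless the time-out has been changed.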

- Is there any explanation around how VSAN handles disk failures or host failures?

- Yes, I wrote an article on this topic. Please read “How VSAN handles a disk or host failure” for more details.

- What happens when an SSD fails in a VSAN cluster?

- An SSD sits in front of a Disk Group as the read cache / write buffer. When the SSD fails, the disk group and all the components stored on it are marked as degraded. VSAN will then instantiate new mirror copies where applicable and when sufficient disk capacity is available. For more details read this post.

- Does vSphere support TRIM for SSDs?

- No, TRIM is currently not supported/leveraged.

- What are the Maximum Numbers for Virtual SAN GA?

- 32 hosts per cluster

- 100 VMs per host maximum

- 3200 VMs per cluster maximum

- 2048 VMs HA protected per cluster maximum

- 2 million IOPS tested

- How do I size a VSAN datastore / cluster?

- I developed a sizing calculator which can be found here.

- How do I monitor VSAN performance?

- What’s likely to affect VSAN performance ?

- Performance is most likely affected by leveraging cheap flash devices or incorrectly configured policies. In the case a workload is highly random and has a large “working set” it could be that many of the IOs will need to come from disk, this can also impact performance depending on the disk type used and the number of disk stripes.

- Why is Storage DRS not supported in VSAN ?

- VSAN only provides a single datastore and has its own placement and balancing algorithms.

- What will happen when the whole environment goes down and power back on again ? Do we run some sort of integrity check ?

- Is VSAN dependent on vCenter ? Can I configure VSAN if vCenter is down ?

- Could you have locality in VSAN ? Does locality make sense at all compared to other solutions ?

- By default VSAN does not have a “data locality” concept as I explained here. However, for View environments CBRC is fully supported and that provides a local read cache for desktops.

- Is vCops aware of VSAN datastore?

- VC Ops has limited functionality for VSAN in its current release. The upcoming version of VC Ops will include more statistics and ways of monitoring a VSAN datastore.

- How do you backup your VM’s in VSAN ? Just usual existing backup procedures ?

- VDP supports VSAN and various storage vendors are going through testing/releasing a new version of their product as we speak. VMs stored on a VSAN datastore should not be treated differently than regular VMs.

- Does VSAN support any data reduction mechanisms like deduplication or compression?

- In the current version deduplication or compression is not included.

If you have a question, please don’t hesitate to ask… Over time I will add more and more to this list so come back regularly.

Introduction to VMware Virtual SAN (vSAN)

VMware Virtual SAN, or I should say VMware vSAN, has been around since August 2013. Back then it was indeed called Virtual SAN; today it is officially known as vSAN, which is what most people called it anyway. As this article keeps popping up in Google search results I figured I would rewrite it and provide a better, more generic introduction to vSAN which is up to date and covers all that VMware vSAN is about up to the current version at the time of writing, which is VMware vSAN 6.6.

VMware vSAN is a software based distributed storage solution. Some will refer to it as hyper-converged, others will call it software defined storage and some even referred to it as hypervisor converged at some point. The reason for this is simple: VMware vSAN is fully integrated with VMware vSphere. Those of you reading this who are vSphere administrators will have no problem configuring vSAN. If you know how to enable HA and DRS, then you know how to configure vSAN. Of course you will need to have a vSAN network, and you achieve this by creating a VMkernel interface and enabling vSAN on it. vSAN works with L2 and L3 networks, and as of vSAN 6.6 no longer requires multicast to be enabled on the network. (If you want to know what changed with vSAN 6.6 read this article.)

Before we get a bit more into the weeds, what are the benefits of a solution like vSAN? What are the key selling points?

- Software defined – Use industry standard hardware, as long as it is on the HCL you are good to go!

- Flexible – Scale as needed and when needed. Just add more disks or add more hosts, yes both scale-up and scale-out are possible.

- Simplicity – Ridiculously easy to manage! Ever tried implementing or managing some of the storage solutions out there? If you did, you know what I am getting at.

- Automated – Per virtual machine and per virtual disk policy based management. Yes, even VMDK level granularity. No more policies defined on a per LUN/Datastore level, but at the level where you need it!

- Hyper-Converged – It allows you to create dense / building block style solutions!

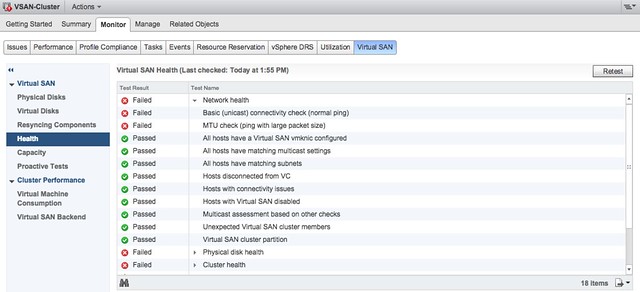

To me “simplicity” is the key reason customers buy vSAN. Not just simplicity in configuring or installing, but even more so simplicity in management. Features like the vSAN Health Check provide a lot of value to the admin. With one glance you can see what the status is of your vSAN. Is it healthy or not? If not, what is wrong?

Okay that sounds great right, but where does that fit in? What are the use-cases for vSAN, how are our 7000+ customers using it today?

- Production / Business Critical Workloads

- Exchange, Oracle, SQL, anything basically…. This is what the majority of customers use vSAN for.

- Management Clusters

- Isolate their management workloads completely, and remove the dependency on your storage systems to be available. Even when your enterprise storage system is down you have access to your management tools

- DMZ

- Where NSX helps isolating a DMZ from the world from a networking/security point of view, vSAN can do the same from a storage point of view. Create a separate cluster and avoid having your production storage go down during a denial of service attack, and avoid complex isolated SAN segments!

- Virtual desktops

- Scale out model, using predictive (performance etc) repeatable infrastructure blocks lowers costs and simplifies operations. Note that vSAN is included with Horizon Advanced and Enterprise!

- Test & Dev

- Avoids acquisition of expensive storage (lowers TCO), fast time to provision, easy scale out and up when required!

- Big Data

- Scale out model with high bandwidth capabilities, Hadoop workloads are not uncommon on vSAN!

- Disaster recovery target

- Cheap DR solution, enabled through a feature like vSphere Replication that allows you to replicate to any storage platform. Other options are of course VAIO based replication mechanisms like Dell/EMC Recover Point.

Yes, that is a long list of use cases. I guess it is fair to say that vSAN fits everywhere and anywhere! Now, let’s get a bit more technical, just a bit as this is an introduction, and for those who want to know more about specific features and settings I have hundreds of vSAN articles on my blog. There is also a vSAN book available, and then there’s of course the long list of articles by the likes of William Lam and Cormac Hogan.

When vSAN is enabled a single shared datastore is presented to all hosts which are part of the vSAN enabled cluster. Typically all hosts will contribute performance (SSD) and capacity (magnetic disks or flash) to this shared datastore. This means that when your cluster grows from a compute perspective, your datastore will typically grow with it. (Not a requirement, there can be hosts in the cluster which just consume the datastore!) Note that there are some requirements for hosts which want to contribute storage. Each host will require at least one flash device for caching and one capacity device. From a clustering perspective, vSAN supports the same limits as vSphere: 64 hosts in a single cluster. Unless you are creating a stretched cluster, then the limit is 31 hosts. (15 per site.)
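As a quick sanity check on those numbers, here is a small Python sketch. It assumes that the 31st host in a stretched cluster is the witness (15 + 15 + 1), which is how I read the limit above; the function names are made up for the example.

```python
MAX_HOSTS_STANDARD_CLUSTER = 64  # same limit as vSphere, as quoted above
MAX_HOSTS_PER_DATA_SITE = 15     # stretched cluster, per data site
WITNESS_HOSTS = 1                # assumption: the 31st host is the witness


def standard_cluster_ok(host_count):
    """Return True when a non-stretched cluster stays within the host limit."""
    return 0 < host_count <= MAX_HOSTS_STANDARD_CLUSTER


def stretched_cluster_total(site_a_hosts, site_b_hosts):
    """Return the total host count of a stretched cluster, witness included."""
    for name, count in (("site A", site_a_hosts), ("site B", site_b_hosts)):
        if not 0 < count <= MAX_HOSTS_PER_DATA_SITE:
            raise ValueError(name + " must have between 1 and 15 hosts")
    return site_a_hosts + site_b_hosts + WITNESS_HOSTS
```

A fully built-out stretched cluster, `stretched_cluster_total(15, 15)`, comes out at 31.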

As can be expected from any recent storage system, vSAN heavily relies on flash for performance. Every write I/O will go to the flash cache first, and eventually they will go to the capacity tier. vSAN supports different types of flash devices, broadest support in the industry, ranging from SATA SSDs to 3D XPoint NVMe based devices. This goes for both the caching as well as the capacity tier. Note that for the capacity layer, vSAN of course also supports regular spinning disks. This ranges from NL-SAS to SAS, 7200 RPM to 15k RPM. Just check the vSAN Ready Node HCL or the vSAN Component HCL for what is supported and what is not.

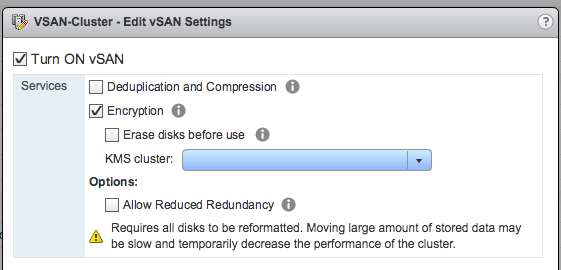

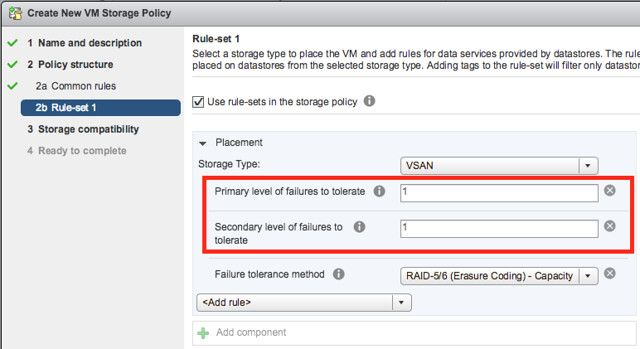

As mentioned, you can set policies on a per virtual machine or even virtual disk level. These policies define availability and performance aspects of your workloads. They also allow you, for instance, to specify whether checksumming needs to be enabled or not. There are 2 key features which are not policy driven at this point and these are “Deduplication and Compression” and Encryption. Both of these are enabled on a cluster level. But let’s get back to the policy based management. Before deploying your first VMs, you will typically create a policy (or multiple policies). In this policy you define what the characteristics of the workload should be. For instance, as shown in the example below, how many failures should the VM be able to tolerate? In the below example it shows that the “primary” and “secondary” level of failures to tolerate are both set to 1. In this case that means the VM is stretched across 2 locations and also protected by RAID-5 in each site, as the “Failure Tolerance Method” is also specified.

The above is a rather complex example, it can be as simple as only setting “Failures to tolerate” to “1”, which in reality is what most people do. This means you will need 3 nodes at a minimum and you will from a VM perspective have 2 copies of the data and 1 witness. vSAN is often referred to as a generic object based storage platform, but what does that mean? The VM can be seen as an object and each copy of the data and the witness can be seen as components. Objects are placed and distributed across the cluster as specified in your policy. As such vSAN does not require a local RAID set, just a bunch of local disks which can be attached to a passthrough disk controller. Now, whether you defined a 1 host failure to tolerate, or for instance a 3 host failure to tolerate, vSAN will ensure enough replicas of your objects are created within the cluster. Is this awesome or what?
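The relationship between “failures to tolerate” and the number of components can be written down in a couple of lines. The Python sketch below assumes plain mirroring (RAID-1) and the simplest possible layout, where every data copy and every witness sits on its own host; the function name `mirror_layout` is hypothetical and real vSAN layouts can contain more components than this.

```python
def mirror_layout(failures_to_tolerate):
    """Return the minimal RAID-1 layout for a given failures-to-tolerate value.

    Simplified model: n failures need n + 1 copies of the data and
    2n + 1 hosts in total, so the remaining n hosts hold witnesses.
    """
    if failures_to_tolerate < 0:
        raise ValueError("failures to tolerate must not be negative")
    replicas = failures_to_tolerate + 1
    min_hosts = 2 * failures_to_tolerate + 1
    return {
        "replicas": replicas,
        "witnesses": min_hosts - replicas,
        "min_hosts": min_hosts,
    }
```

For “Failures to tolerate” set to 1 this gives 2 copies of the data, 1 witness and 3 hosts, which is exactly the example described above.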

Let’s take a simple example to illustrate that, as I realize it is easy to get lost in all these technical terms. We have configured 1 host failure to tolerate and we create a new virtual disk. This results in vSAN creating 2 identical data components and a witness component. The witness is there to help decide who takes control in case of a failure. Let that be clear: the witness is not a copy of your data component, it is just a quorum mechanism. Note that the number of hosts in your cluster could potentially limit the number of “host failures to tolerate”. In other words, in a 3 node cluster you cannot create an object that is configured with 2 “host failures to tolerate” as it would require vSAN to place components on 5 hosts at a minimum. (Cormac has a simple table for it here.) Difficult to visualize? Well this is what it would look like on a high level for a virtual disk which tolerates 1 host failure:

First, let’s point out that the VM from a compute perspective does not need to be aligned with the data components. In order to provide optimal performance vSAN has an in-memory read cache which is used to serve the most recent blocks from memory. Of course blocks which are not in the memory cache will need to be fetched from either of the two hosts that serve the data component. Note that a given block always comes from the same host for reads. This is done to optimize the flash based read cache. For writes it is straightforward. Every write is synchronously pushed to the hosts that contain data components for that VM. Some may refer to this as replication or mirroring. With all this replication going on, are there requirements for networking? At a minimum vSAN will require a dedicated 1Gbps NIC port for hybrid configurations, and 10GbE for all-flash configurations. Needless to say, 10Gbps is definitely preferred with solutions like these, and you should always have an additional NIC port available for resiliency. There is no requirement from a virtual switch perspective: you can use either the Distributed Switch or the plain old vSwitch, both will work fine. The Distributed Switch is recommended and comes included with the vSAN license.

So what else is there, well from a feature / functionality perspective there’s a lot. Let me list some of my favourite features:

- RAID-1 / RAID-5 / RAID-6

- Stretched Clustering

- All-Flash for all License options

- Deduplication and Compression

- vSAN Datastore Encryption

- iSCSI Targets (for physical machines)

That more or less covers the basics and I think is a decent introduction to vSAN. Something that hopefully sparks your interest in this distributed storage platform that is deeply integrated with vSphere and enables convergence of compute and storage resources as never seen before. It provides virtual machine and virtual disk level granularity through policy based management. It allows you to control availability, performance and security in a way I have never seen it before, simple and efficient. And then I haven’t even spoken about features like the Health Check, Config Assist, Easy Install and any of the other cool features that are part of vSAN 6.6.

If there are any questions, find me on twitter!

The difference between an isolation and a partition with vSphere

I have a lot of discussions with customers on the topic of stretched clusters, but also regular vSphere clusters. Something that often comes up is the discussion around what happens in an isolation or partition scenario. Fairly often customers (but also VMware employees) use those words interchangeably. However, a partition is not the same as an isolation. They are 2 different scenarios, and as a result they also have a different type of response associated with them. Before I explain the difference in the two responses to a situation like this, what is a partition and what is an isolation?

- An isolation event is a situation where a single host cannot communicate with the rest of the cluster. Note: single host!

- A partition is a situation where two (or more) hosts can communicate with each other, but no longer can communicate with the remaining two (or more) hosts in the cluster. Note: two or more!

Why is that such a big deal? Well, the response in these two scenarios is different. And the response/result is also determined by what type of configuration you have. Let’s break down the scenarios one by one, including the type of infrastructure used (when it is relevant).
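To make the two definitions concrete, here is a tiny Python sketch that classifies a situation purely by how many hosts can still talk to each other. It only captures the definitions given above, not how vSphere HA detects these states; the function name is made up for this example.

```python
def classify_network_event(reachable_group_size, cluster_size):
    """Classify a situation from the point of view of one group of hosts.

    reachable_group_size is the number of hosts (including itself) that a
    host can still communicate with; cluster_size is the total host count.
    """
    if not 1 <= reachable_group_size <= cluster_size:
        raise ValueError("group size must be between 1 and the cluster size")
    if reachable_group_size == cluster_size:
        return "healthy"      # everyone can still talk to everyone
    if reachable_group_size == 1:
        return "isolation"    # a single host on its own
    return "partition"        # two or more hosts cut off together
```

In a 4 host cluster, a host that can only reach itself is isolated, while two hosts that can reach each other but not the other two are partitioned.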

Isolation Event

When a host is isolated it will:

- start an election process

- declare itself primary

- ping the isolation address

- declare itself isolated

- power off / shut down VMs (when this is configured)

- communicate through the connected datastores that it is isolated

- the VMs will be restarted on the remaining hosts in the cluster

And then of course vSphere HA will be able to restart the VMs. Note that in the case of vSAN, it isn’t possible to write to the datastore when a host is isolated, so it won’t do that. Yet the workloads will still have been powered off / shut down, so it is safe for vSphere HA to restart them.

Partition (traditional storage)

When two or more hosts are partitioned (they can communicate with each other) and the vSphere HA primary is not part of the partition, the hosts in that partition will:

- start an election process

- declare a primary in the partition

- figure out what has happened to the hosts and VMs in the other partition

- restart any VMs that somehow were impacted, or appeared now to be powered off while the last known state was powered on

- if all VMs are running, vSphere HA won’t try to restart any, this is the expected result!

Partition (vSAN stretched)

When the partition scenario happens in a stretched vSAN environment there’s an extra (potential) step. Along the way, vSAN will identify all VMs which have no accessible components and kill those VMs so they can be restarted in the partition which has quorum. In this scenario, you have 3 locations, two for data and 1 for the witness. If a data site loses access to the other locations then the data site is partitioned (the hosts can still communicate with each other within the site), as such the isolation response is not triggered. However, vSAN will still kill these VMs as they are rendered useless (lost access to disk).
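A rough way to think about “the partition which has quorum” is majority voting across the three locations. The Python sketch below assumes an object with one component (one vote) in each of the two data sites and one at the witness; that voting model and all names in it are simplifications of mine, not a description of vSAN internals.

```python
LOCATIONS = frozenset({"site-a", "site-b", "witness"})


def has_quorum(partition):
    """Return True when the partition holds more than half of the locations."""
    members = frozenset(partition)
    if not members <= LOCATIONS:
        raise ValueError("unknown location in partition")
    return len(members) * 2 > len(LOCATIONS)


def vm_action(vm_site, partition):
    """Return what happens to a VM running in vm_site, given its partition."""
    if vm_site not in partition:
        raise ValueError("the VM's site must be part of the partition")
    if has_quorum(partition):
        return "keep_running"
    # No quorum means no access to disk: the VM is killed so that
    # vSphere HA can restart it in the partition that has quorum.
    return "kill_and_restart_elsewhere"
```

If site A loses access to both site B and the witness, `vm_action("site-a", {"site-a"})` results in the VMs in site A being killed, while site B together with the witness still has quorum and can restart them.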

I know it is just semantics, but nevertheless, I do feel it is important to understand the difference between an isolation and a partition, especially as the response (and who responds) is different in these situations. Hope it helps,