I had a discussion on the VMTN forums about this last week and the question basically was, what should my das.failuredetection time be set to when the isolation response is set to “Shut down”.

Lets first explain what the das.failuredetectiontime is, I described it on our book as follows:

Failure Detection Time is basically the time it takes before the “isolation response” is triggered. There are two primary concepts when we are talking about failure detection time:

- The time it will take the host to detect it is isolated

- The time it will take the non-isolated hosts to mark the unavailable host as isolated and initiate the failover

So what does this have to do with your Isolation Response? Well not much actually, and that might sound weird but it had me thinking about it for a second as well….

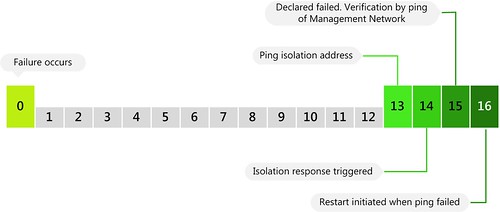

What if your Isolation Response is set to “Shut down” and an isolation occurs? Well in that case HA will try to “Shut down” the VMs in a clean way when Isolation has been detected. HA will do that on the 14th second. On the 16th second the restart will be initiated. So that leaves your VMs exactly two second to shut down in a clean way….So two questions pop-up immediately:

- What if I increase the das.failuredetectiontime?

- What are the chances restarts happens in time?

Increasing the das.failuredetectiontime wouldn’t make a difference as the “2 second” gap will just move up as well. HA will always ping the isolation address on “das.failuredetectiontime – 1” and it will always initiate the restarts on “das.failuredetection + 1”. In other words, 2 minutes or 20 seconds, it makes no difference. I guess a nice diagram makes this a bit clearer:

(created by Frank D. for our book)

So what are the chances these restarts will occur within 16 seconds? Slim indeed. So when will they be restarted? Well a year ago I wrote this article and the following still applies for vSphere 4.1:

- T+0 – Restart

- T+2 – Restart retry 1

- T+4 – Restart retry 2

- T+8 – Restart retry 3

- T+8 – Restart retry 4

- T+8 – Restart retry 5

In other words, if T+0 fails the restart will be retried 2 minutes later. If that one fails the restart will be retried 4 minutes later. (2+4 = 6 minutes after the initial restart) So as you can see selecting “shut down” will more than likely increase your restart latency and this needs to be taken into account for your SLA.

Your recommendation is to keep this low or at the default, 15000…but upon re-reading your book, I see that ‘Leave powered on’ is a more acceptable setting for vSphere 4.1 than it was in older ESX versions.

Would you please clarify how one could better assess in a SMALL (<= 5 hosts) vSphere ESXi 4.1 environment whether to use 'Leave powered on' or 'Shut down' (or whatever is the VMware default??)??

Thank you…

“Leave powered on” is the default these days and I would prefer to stick with it. All previous risks associated with it have been resolved and as such it is a perfectly viable setting.

Awesome!! I will review our hosts and proceed thusly. Thank you for the reassurance.

Is “Leave Powered on” default? Is it not “Shut down” still on 4.1?

“Leave Powered on” is the default now.

I did verify this on a new installation of vSphere 4.1 and the default is still “Shut down”.

Do we agree that “leave poweron” do not actually protects your VM against network only isolation ? I want my VM to HA if they no longer communicate with the world, that’s also why I stick the test isolation IP to some representative on my production LAN, not one super-secure-will-never-fail-because-no-no-HA-should-never-trigger !

Well keep in mind that technically HA only checks for Isolation on the Heartbeat network. I agree with you that I would personally also like my VMs to failover, however many customers I have worked with preferred to have their VMs up and running,

We had weird issues with our HP Virtual Connect, when it started to put PortChannells up and down. All ESXi hosts were constantly loosing connection and then getting it back. Together with HA which started to failover VMs to other hosts it became a real nightmare to troubleshoot.

Moreover, a lot of companies have a quite small VShphere (like ours, 12 hosts in 2 Blade Chassis) and if there is a problem with network connectivity most of the time it means it is the same problem for all hosts, thus making HA failover worthless.

What if a host comes back online in the ‘2 second gap’ and VMs are set to shutdown? VMs will shutdown and not be restarted then.

Correct

I’m not sure I agree that isolation response and the detection time are not related.

We choose to use an isolation response of ‘shut down’, but we’ve also increased our detection time to 60 seconds? We did this because we found that when our rack switches would failover their uplinks, it sometimes took more than 15 seconds for everything to be talking again. If we had this isolation response of ‘leave powered on’, this wouldn’t be an issue because all the VMs would stay powered on. However, we had a brief network glitch cause a few dozen VMs to restart, which was deemed bad. So, we increased the failure detection time.

In response to the original question, I’d suggest that if you’re doing an isolation response of ‘shut down’, you should take however long you think a brief network blip might last on your network and add 10-15 seconds to that number for your failure detection time.

great comment Sean. thanks!

other things to think about:

– Link state tracking

– spanning tree

Hi Duncan,

What happens in this scenario?

das.failuredetectiontime = 60 seconds

Host is isolated for 50 seconds.

After 50 seconds host comes back online (cause: network problem)

Your post mentions HA waits for 13+1 seconds then declares host isolated, so starting from second 14-50 – HA considers host as isolated, but will not trigger the response.

Now I’m guessing when HA receives heartbeats (1 or more?) again after the 51st second…the “missed heartbeats” counter gets reset (I don’t have a better name for it)…and no HA response is initiated.

It is a bit unclear to me because you said there are 2 sides to the problem (failure detection and reaction to detected failure), which would also indicated they are not so tightly coupled…

Also I have not found anyone explaining how transition from failed host to alive host is measured…which adds to my confusion

thanks,

Ionut

Isn’t that what I explained?

“Increasing the das.failuredetectiontime wouldn’t make a difference as the “2 second” gap will just move up as well. HA will always ping the isolation address on “das.failuredetectiontime – 1″ and it will always initiate the restarts on “das.failuredetection + 1″.”

So in your case:

60 – 1 = when isolation is detected / confirmed and VMs will be powered off

60 + 1 = is when restarts will be initiated

Ace artical mate. Cant wait to see the book when it comes out 🙂

Duncan,

You wrote, “Leave powered on is the default these days and I would prefer to stick with it. All previous risks associated with it have been resolved and as such it is a perfectly viable setting.”

Later you wrote, “Well keep in mind that technically HA only checks for Isolation on the Heartbeat network. I agree with you that I would personally also like my VMs to fail over, however many customers I have worked with preferred to have their VMs up and running.”

These statements seem contradictory. Is Leave powered on your preference, as you first posted?

You also wrote, “all the previous risks associated with Leave powered on have been resolved,” but I believe there is still one risk: If you have a situation where the heartbeat and VM networks are unavailable for a given ESX host, then all of the VMs would remain powered on and be unreachable. If I am not mistaken, I believe you would also lose the opportunity to gracefully shut down the VMs, unless you were able to fix the network issue.

it depends on the requirements of the customer to be honest and on the environment. when it is very likely that when the management network is isolated the virtual machines are also isolated than it makes more sense to restart the virtual machines on another host. (in other words select “power off / shutdown”. if it is unlikely that the virtual machines are affected by an isolation event on the management network than I wouldn’t bother restarting them.

We have active and standby adaptors for the primary management network and so increased detection time to 20s. We also have a second management network on the storage network and a second isolation address. Do both addresses get pinged at the same time if the host receives no heartbeats, or is one address pinged first then the other?

in parallel

Hi Duncan,

I’m searching your deepdive book and this blog for a while now and can’t figure the following out:

When I have 2 isolation addresses, say I disabled the ping of default gateway and set up 2 isolation adresses:

ha cluster is 3 hosts running vsphere 4.1:

das.failuredetectiontime=20000

das.isolationaddress0=10.4.128.252

das.isolationaddress1=10.4.128.253

das.usedefaultisolationaddress=false

my 2 addresses are pinged simultaneously, as you just mentioned, right?

when does the host consider it is isolated?

when one of the 2 addresses is not answering or when both addresses are not answering?

I suppose the latter, right? Sorry I just want to make sure I understood!

thanks a lot

regards Jojo

Hi Duncan,

Do you know if the isolation ping is always sent through the management vmkport?

I was thinking about adding a couple of extra das.isolationaddress entries, for things like my iSCSI storage, but then I wondered:

“If the ping is always sent through the management network, what is the point of checking anything other than the management network gateway, as if that is up, the isolation response won’t be triggered, and if it’s down, all addresses will not be able to respond (the pings to other addresses won’t be routed).”

However, if the isolation ping could be sent through the vmkport for iSCSI, then it would be worth doing.

Thanks for your input,

Nick