We (the Unexplored Territory team) have just published two brand-new episodes which discuss What’s New with vSphere 8.0 U1 and vSAN 8.0 U1. You can of course listen to them using your favorite podcast app, or simply use the embedded players below to enjoy the content.


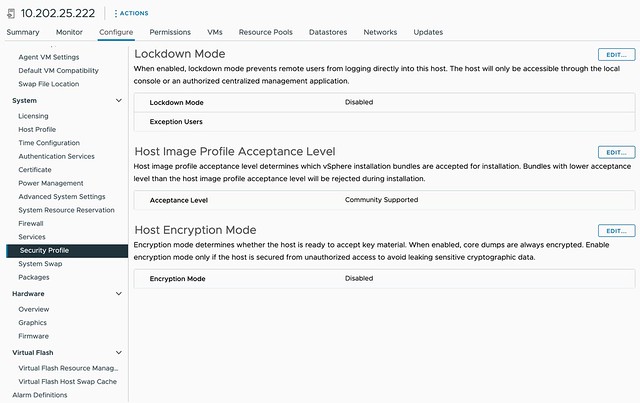

Can I change the “Host Image Profile Acceptance Level” for the vSAN Witness Appliance?

On VMTN a question was asked about the Host Image Profile Acceptance Level for the vSAN Witness Appliance, which is configured to “community supported”. The question was whether it is supported to change this to, for instance, “VMware certified”. I had a conversation with the Product Manager for vSAN Stretched Clusters, and it is indeed fully supported to make this change. I also filed a feature request to have the Host Image Profile Acceptance Level for the vSAN Witness Appliance raised to a higher, more secure level by default.

So if you want to make that change, feel free to do so!
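For those who prefer the command line, here is a minimal sketch of how the change could be scripted. It only builds the `esxcli software acceptance set` command for a given level; the helper name `build_acceptance_command` is hypothetical, and actually running the command on the witness appliance (for example over SSH) is left to you.

```python
# Acceptance levels accepted by "esxcli software acceptance set",
# listed from most to least restrictive.
ACCEPTANCE_LEVELS = (
    "VMwareCertified",
    "VMwareAccepted",
    "PartnerSupported",
    "CommunitySupported",
)


def build_acceptance_command(level):
    """Return the esxcli argument list that sets the host acceptance level.

    Hypothetical helper for illustration; it does not execute anything.
    """
    if level not in ACCEPTANCE_LEVELS:
        raise ValueError(f"unknown acceptance level: {level!r}")
    return ["esxcli", "software", "acceptance", "set", f"--level={level}"]


# Print the command you would run on the witness appliance.
command = build_acceptance_command("VMwareCertified")
print(" ".join(command))
```

You can check the current level first with `esxcli software acceptance get`.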

vSAN ESA is using more CPU cycles than vSAN OSA?

Over the last couple of weeks, I’ve had conversations with customers and partners who have been running performance benchmarks against both vSAN ESA and vSAN OSA. As you can imagine, people want to compare version 8 of OSA against version 8 of ESA, and that is completely fair. What I noticed though is that some of those customers came back with comments about the CPU usage of vSAN ESA compared to OSA. The general comment we get is that vSAN ESA is using more CPU cycles than vSAN OSA.

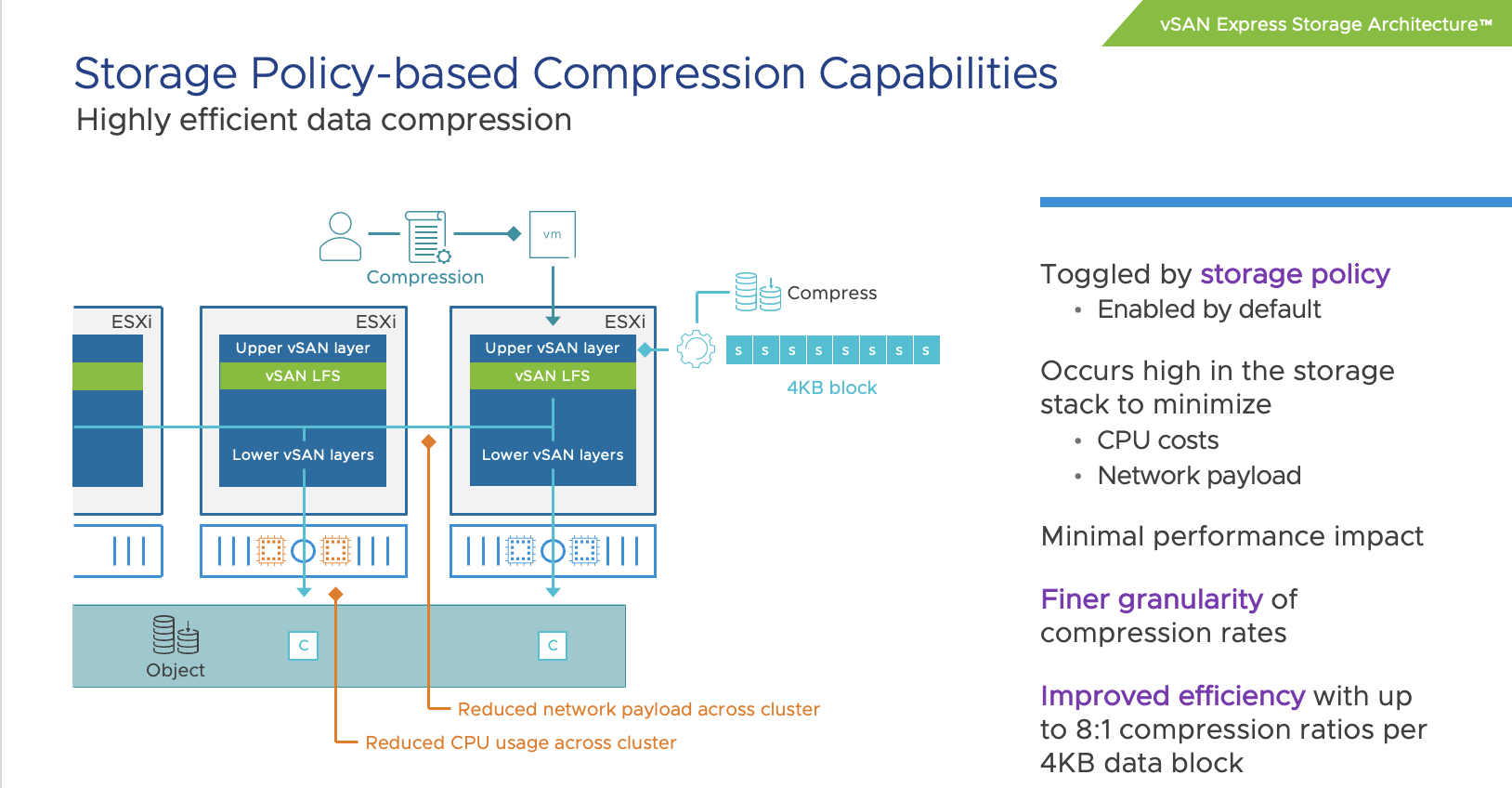

When looking at it from a total number point of view, or CPU cycles consumed, it is very likely you will see vSAN ESA using more cycles than vSAN OSA. The question then typically arises why that is the case, as VMware (the vSAN team) has been claiming that vSAN ESA is much more efficient than vSAN OSA. To be fair, it is much more efficient. For instance, data services like checksumming, encryption, and compression have moved to the top of the stack (as shown in the diagram), which means we don’t have to compress/encrypt data 3/4/5/6 times but can do it once at the source and then send it over the network to the destination.

Still, it leaves the question: why is more CPU capacity used? The answer is simple: you are pushing much more IO. We’ve seen customers easily reaching 4x the number of IOPS with ESA compared to OSA. Even though ESA is more efficient, if you are pushing 4x (or more) the amount of IO then you will need to remember that those additional IOs also come at a cost, and that cost is CPU cycles to process them. So when you make a comparison, please compare apples to apples, and not apples to oranges.
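To make the apples-to-apples point concrete, here is a small sketch that normalizes CPU usage by the amount of IO delivered. The benchmark numbers are made up purely for illustration (they are not measurements), and `cpu_per_kiops` is a hypothetical helper name.

```python
def cpu_per_kiops(cpu_ghz_used, iops):
    """Return the CPU consumed (in GHz) per 1,000 IOPS delivered."""
    if iops <= 0:
        raise ValueError("iops must be positive")
    return cpu_ghz_used / (iops / 1000)


# Hypothetical benchmark results, for illustration only:
# ESA uses twice the CPU in total, but delivers 4x the IOPS.
osa = {"cpu_ghz_used": 20.0, "iops": 100_000}
esa = {"cpu_ghz_used": 40.0, "iops": 400_000}

osa_cost = cpu_per_kiops(**osa)
esa_cost = cpu_per_kiops(**esa)

print(f"OSA: {osa_cost:.2f} GHz per 1,000 IOPS")
print(f"ESA: {esa_cost:.2f} GHz per 1,000 IOPS")
```

With these assumed numbers the total CPU usage of ESA is higher, yet the cost per IO is lower, which is the comparison that actually says something about efficiency.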

The last thing I want to add, and hopefully I can share some data in the future, is that the use of RDMA with vSAN 8 ESA seems to have a significant impact on CPU usage, as in it lowers the amount of CPU required to produce the same (or better) results. So it is worth considering RDMA for sure when adopting vSAN 8 ESA!

Cross connecting vSAN Datastores with HCI Mesh in vSAN 8 OSA

Yesterday I had a discussion internally on Slack about a configuration for a customer. The customer had multiple locations and had the potential to create various clusters within locations, or across locations. Now, as most of you know, I have been talking a lot about stretched clusters for the past decade. However, stretched clusters are not the answer to every problem. Especially not in situations where you end up with unbalanced configurations, or where you lack the ability to place a witness in a third location. Customers tend to gravitate towards stretched clusters as it provides them resiliency, and pooling of all resources even though they are across locations.

With vSAN, when you have multiple clusters, you also have multiple vSAN Datastores. Having that separation of resources is typically appreciated. However, in some cases, customers prefer the flexibility of movement with limited overhead. Sure, if you have multiple clusters you can simply Storage vMotion VMs from source cluster to destination cluster, but it does mean you need to move ALL the data with the VM, where in some cases you may not care where the data resides.

This is where HCI Mesh comes into play. With HCI Mesh you have the ability to mount a vSAN Datastore. Meaning, you have a “client” and a “server” cluster, and the client mounts the server. Within our documentation on core.vmware.com this is demonstrated as follows:

[Diagram: HCI Mesh with two client clusters mounting the vSAN Datastore of one server cluster]

If you look at this diagram then the top two clusters are “client” clusters and the bottom one is the “server” cluster. This basically means that the “client” cluster consumes the datastore capacity from the “server” cluster. The above diagram however resulted in a bit of confusion as it does not show a situation where your client cluster can simultaneously be a server cluster. This is something I want to point out. You can create a true “mesh” with HCI Mesh! If you have two clusters, let’s say Cluster A and Cluster B, then you can mount the vSAN Datastore from Cluster A to Cluster B and the datastore from Cluster B to Cluster A. This is fully supported, and works great, as demonstrated in the below two screenshots. I tested this with vSphere/vSAN 8.0 OSA, but it is also supported with vSAN 7.0 U1 and up. Do note, vSAN ESA today does not support HCI Mesh just yet, hopefully it will in the near future!
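The cross-mount relationship described above can be sketched as a tiny model. This is purely a toy illustration of the client/server roles, not how vSAN implements or exposes HCI Mesh; the `MeshModel` class and its methods are hypothetical.

```python
from collections import defaultdict


class MeshModel:
    """Toy model of which cluster mounts which remote vSAN Datastore."""

    def __init__(self):
        # Maps a client cluster to the set of server clusters it mounts.
        self.mounts = defaultdict(set)

    def mount(self, client, server):
        """Record that `client` mounts the datastore of `server`."""
        if client == server:
            raise ValueError("a cluster does not remote-mount its own datastore")
        self.mounts[client].add(server)

    def roles(self, cluster):
        """Return the set of roles ("client", "server") a cluster plays."""
        roles = set()
        if self.mounts.get(cluster):
            roles.add("client")
        if any(cluster in servers for servers in self.mounts.values()):
            roles.add("server")
        return roles


mesh = MeshModel()
mesh.mount("Cluster A", "Cluster B")  # A consumes capacity from B
mesh.mount("Cluster B", "Cluster A")  # B consumes capacity from A

print(sorted(mesh.roles("Cluster A")))
print(sorted(mesh.roles("Cluster B")))
```

In this model both clusters end up with both roles, which is exactly the cross-connected situation the original diagram does not show.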

So before you decide how to configure vSAN, please look at all the capabilities provided, write down your requirements, and see what helps solve the challenges you are facing while meeting those requirements (in a supported way)!

vSAN Express Storage Architecture cluster sizes supported?

On VMTN a question was asked about the size of the cluster being supported for vSAN Express Storage Architecture (ESA). There appears to be some misinformation out there on various blogs. Let me first state that you should rely on official documentation when it comes to support statements, and not on third-party blogs. VMware has the official documentation website, and of course, there’s core.vmware.com with material produced by the various tech marketing teams. This is what I would rely on for official statements and/or insights on how things work, and then of course there are articles on personal blogs by VMware folks. Anyway, back to the question: which cluster size is supported?

For vSAN ESA, VMware supports the exact same configuration when it comes to the cluster size as it supports for OSA. In other words, as small as a 2-node configuration (with a witness), as large as a 64-node configuration, and anything in between!

Now when it comes to sizing your cluster, the same applies for ESA as it does for OSA: if you want VMs to automatically rebuild after a host failure or long-term maintenance mode action, you will need to make sure you have capacity available in your cluster. That capacity comes in the form of storage capacity (flash) as well as host capacity. Basically what that means is that you need to have additional hosts available where the components can be created, and the capacity to resync the data of the impacted objects.

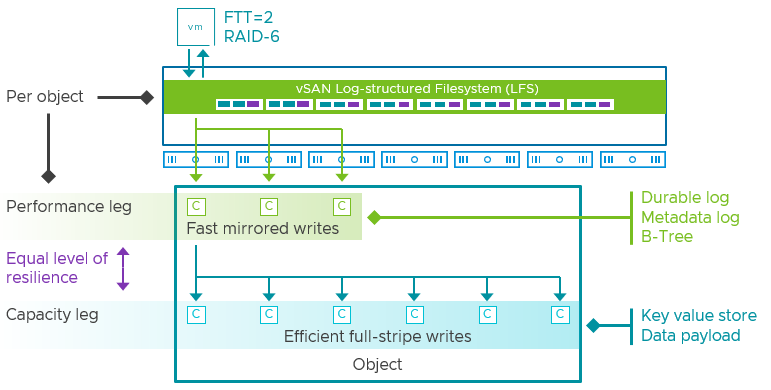

If you look at the diagram, you see 6 components in the capacity leg and 7 hosts, which means that if a host fails you still have a host available to recreate that component. Again, on this host you also still need to have capacity available to resync the data so that the object is compliant again when it comes to data availability.
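The reasoning above can be sketched as a simple check. This is a deliberately simplified model that assumes one component per host and treats free capacity as a single number; the function name and the capacity figures are hypothetical, and real sizing should be done with the official sizing tools.

```python
def can_rebuild_after_host_failure(hosts, components, free_capacity_gb, resync_gb):
    """Return True if an object can become compliant again after one host fails.

    Simplified assumptions: each component needs its own host, and the
    remaining free capacity can be used for the resync.
    """
    remaining_hosts = hosts - 1  # one host has failed
    has_spare_host = remaining_hosts >= components
    has_capacity = free_capacity_gb >= resync_gb
    return has_spare_host and has_capacity


# The situation from the diagram: 6 components, 7 hosts (capacity values are made up).
print(can_rebuild_after_host_failure(hosts=7, components=6,
                                     free_capacity_gb=500, resync_gb=200))

# With only 6 hosts there is no spare host to recreate the component on.
print(can_rebuild_after_host_failure(hosts=6, components=6,
                                     free_capacity_gb=500, resync_gb=200))
```

The first call returns `True` and the second `False`, and a cluster with a spare host but too little free capacity would fail the check as well.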

I hope that explains first of all what is supported from a cluster size perspective, and secondly why you may want to consider adding additional hosts. This of course will depend on the requirements you have and the budget you have.