I got this question on the VMTN forum this week: does the Native Key Provider require a host to have a TPM (Trusted Platform Module)? The documentation does discuss the use of TPM 2.0 when you enable the Native Key Provider. Let’s be clear, the vCenter Server Native Key Provider does not require a TPM! If a TPM is available on each host, then the Native Key Provider will use it to store a secret, which enables us to encrypt and decrypt the ESXi configuration. Again, as stated, a TPM is not a requirement. I have asked for the documentation to be updated so that this is officially documented as well. I am just posting it here so that it gets indexed by Google.

vSphere HA internals: restart placement changes in vSphere 7!

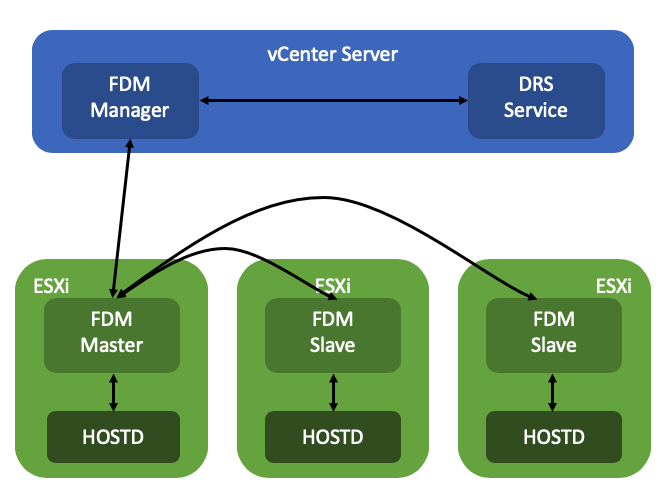

Frank and I are looking to update the vSphere Clustering deep dive to vSphere 7. While scoping the work I stumbled onto something interesting: a change to the vSphere HA restart mechanism, and specifically to the placement of VMs, in vSphere 7. In previous releases vSphere HA had a straightforward way of placing VMs that needed to be restarted as a result of a failure. In vSphere 7.0 this mechanism was completely overhauled.

So how did it work pre-vSphere 7?

- HA uses the cluster configuration

- HA uses the latest compatibility list it received from vCenter

- HA leverages a local copy of the DRS algorithm with a basic (fake) set of stats and runs the VMs through the algorithm

- HA receives a placement recommendation from the local algorithm and restarts the VM on the suggested host

- Within 5 minutes DRS runs within vCenter, and will very likely move the VM to a different host based on actual load

As you can imagine this is far from optimal. So what is introduced in vSphere 7? Well, we introduce two different ways of doing placement for restarts in vSphere 7:

- Remote Placement Engine

- Simple Placement Engine

The Remote Placement Engine, in short, is the ability for vSphere HA to make a call to DRS for a recommendation on the placement of a VM. This will take the current load of the cluster, the VM happiness, and all configured affinity/anti-affinity/VM-host affinity rules into consideration! Will this result in a much slower restart? The great thing is that the DRS algorithm has been optimized over the past years, and it is so fast that there will not be a noticeable difference between the old mechanism and the new mechanism. An added benefit for the engineering team, of course, is that they can remove the local DRS module, which means there’s less code to maintain. How this works is that the FDM Master communicates with the FDM Manager, which runs in vCenter Server. FDM Manager then communicates with the DRS service to request a placement recommendation.

Now some of you will probably wonder what happens when vCenter Server is unavailable. Well, this is where the Simple Placement Engine comes into play. The team has developed a new placement engine that basically takes a round-robin approach, but of course does consider “must rules” (VM to Host) and the compatibility list. Note, affinity and anti-affinity rules are not considered when SPE is used instead of RPE! This is a known limitation, which may be fixed in a future release. If a host, for instance, is not connected to the datastore of the VM that needs to be restarted, then that host is excluded from the list of potential placement targets. By the way, before I forget, version 7 also introduced a vCenter heartbeat mechanism as a result. HA heartbeats the vCenter Server instance to understand when it needs to resort to the Simple Placement Engine instead of the Remote Placement Engine.
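To make the round-robin idea a bit more concrete, here is a minimal Python sketch of how such a placement could work. To be clear, this is not the FDM implementation. The names `Host`, `VM`, `must_hosts`, `pick_engine`, and `simple_placement` are hypothetical, and the compatibility list is reduced to datastore connectivity only. As described above, VM-to-VM affinity and anti-affinity rules are deliberately left out.

```python
from dataclasses import dataclass, field


@dataclass
class Host:
    name: str
    datastores: set  # datastores this host is connected to


@dataclass
class VM:
    name: str
    datastore: str
    # Hypothetical "must run on" VM-to-Host rule; an empty set means no rule.
    must_hosts: set = field(default_factory=set)


def pick_engine(vcenter_heartbeat_ok):
    """Assumption: use RPE (DRS) while vCenter answers heartbeats, else SPE."""
    return "RPE" if vcenter_heartbeat_ok else "SPE"


def is_candidate(vm, host):
    """Simplified compatibility check: datastore access plus must rules."""
    if vm.datastore not in host.datastores:
        return False
    if vm.must_hosts and host.name not in vm.must_hosts:
        return False
    return True


def simple_placement(vms, hosts):
    """Round-robin over the host list, skipping hosts that are not candidates."""
    placement = {}
    next_index = 0  # where the round-robin walk resumes for the next VM
    for vm in vms:
        placement[vm.name] = None  # stays None if no host qualifies
        for offset in range(len(hosts)):
            idx = (next_index + offset) % len(hosts)
            if is_candidate(vm, hosts[idx]):
                placement[vm.name] = hosts[idx].name
                next_index = (idx + 1) % len(hosts)
                break
    return placement
```

With three hosts where only two can see a given datastore, a VM on that datastore simply skips the third host and the walk continues from the host after the one that was picked.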

I dug through the FDM log (/var/log/fdm.log) to find some proof of these new mechanisms, and found an entry that shows there are indeed two placement engines:

Invoking the RPE + SPE Placement Engine

RPE stands for “remote placement engine”, and SPE for “simple placement engine”, where “remote” of course refers to DRS. You may ask yourself, how do you know if DRS is being called? Well, that is something you can see in the DRS log files. When a placement request is received, the entry below shows up in the log file:

FdmWaitForUpdates-vim.ClusterComputeResource:domain-c8-26307464

This even happens when DRS is disabled, and even when you use a license edition that does not include DRS, which is really cool if you ask me. If for whatever reason vCenter Server is unavailable, and as a result DRS can’t be called, you will see this mentioned in the FDM log. As shown below, HA will then use the Simple Placement Engine’s recommendation for the placement of the VM:

Invoke the placement service to process the placement update from SPE
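If you want to check your own environment for these entries, a small script along the lines below could help. It is only a sketch: it assumes nothing more than that the two messages quoted in this post appear somewhere in a log line, since the rest of the line format is not shown here. The names `PATTERNS`, `classify_placement_lines`, and `scan_log` are mine and not part of any VMware tooling.

```python
import re

# Substrings taken from the fdm.log entries quoted in this post.
PATTERNS = {
    "both_engines": re.compile(r"Invoking the RPE \+ SPE Placement Engine"),
    "spe_fallback": re.compile(r"placement update from SPE"),
}


def classify_placement_lines(lines):
    """Count how many lines mention each of the placement messages."""
    counts = {name: 0 for name in PATTERNS}
    for line in lines:
        for name, pattern in PATTERNS.items():
            if pattern.search(line):
                counts[name] += 1
    return counts


def scan_log(path):
    """Read a log file (for example a copy of /var/log/fdm.log) and count matches."""
    with open(path, encoding="utf-8", errors="replace") as handle:
        return classify_placement_lines(handle)
```

Calling `scan_log` on a copy of the FDM log returns a dictionary with a count per message, so a non-zero `spe_fallback` value suggests HA fell back to the Simple Placement Engine at some point.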

A cool and very useful small HA enhancement if you ask me for vSphere 7.0!

** Disclaimer: This article contains references to the words master and/or slave. I recognize these as exclusionary words. The words are used in this article for consistency because it’s currently the words that appear in the software, in the UI, and in the log files. When the software is updated to remove the words, this article will be updated to be in alignment. **

Inspecting vSAN File Services share objects

Today I was looking at vSAN File Services a bit more and I had some challenges figuring out the details of the objects associated with a File Share. Somehow I had never noticed this, but fortunately, Cormac pointed it out. In the Virtual Objects section of the UI you have the ability to filter, and it now includes the option to filter for objects associated with File Shares and with Persistent Volumes for containers as well. If you click on the different categories in the top right you will only see those specific objects, which is what the screenshot below points out.

Something really simple, but useful to know. I created a quick YouTube video going over it for those who prefer to see it “in action”. Note that at the end of the demo I also show how you can inspect the object using RVC. Although it is not a tool I would recommend for most users, it is interesting to see that RVC does identify the object as “VDFS”.

vSAN File Services and the different communication layers

I received a bunch of questions based on my vSAN File Services posts the past couple of days. Most questions were around how the different layers talk to each other, and where vSAN comes into play in this platform. I understand why: I haven’t discussed this aspect yet, primarily because I wasn’t sure what I could/should talk about. Let’s start with a description of how communication works, top to bottom.

- The NFS Client connects to the vSAN File Services NFS Server

- The NFS Server runs within the protocol stack container, the IPs provided during the configuration are assigned to the protocol stack container

- The protocol stack container runs within FS VM, the FS VM has no IP address assigned

- The FS VM has a VMCI device (vSocket interface), which is used to communicate with the ESXi host securely

- The ESXi host has VDFS kernel modules

- VDFS communicates with vSAN layer and SPBM

- vSAN is responsible for the lifecycle management of objects

- A file share has a 1:1 relationship with a VDFS volume and is formed out of vSAN objects

- Each file share / VDFS volume has a policy assigned, and the layout of the vSAN objects is determined by this policy

- Objects are formatted with the VDFS file system and presented as a single VDFS volume

I guess a visual may help clarify things a bit, as for me it also took a while to wrap my head around this. Look at the diagram below.

So in other words, every FS VM allows for communication to the kernel using the vSockets library through the VMCI device. I am not going to explain what vSocket is as the previous link refers to a lengthy document on this topic. The VDFS layer leverages vSAN and SPBM for the lifecycle management of the objects that form a file share. So what is this VDFS layer then? Well VDFS is the layer that exposes a (distributed) file system that resides within the vSAN object(s) and allows the protocol stack container to share it as NFS v3 or v4.1. As mentioned, the objects are presented as a single VDFS volume.

So even though vSAN File Services uses a VM to ultimately allow a client to connect to a share, the important part here is that the VM is only used for the protocol stack container. All of the distributed file system logic lives within the vSphere layer. I hope that helps to explain the architecture a bit and how the layers communicate. I also recorded a quick demo, including the diagram above with the explanation of the layers, that shows how a protocol stack container is moved from one FS VM to another when a host goes into maintenance mode. This allows NFS v3 clients to stay connected to the same IP address for the file shares. For NFS v4.1 we do provide the ability to connect to a primary IP address and load balance automatically.

Enabling vSAN File Services in a vSAN cluster larger than 8 hosts

I noticed something over the weekend, and I want to make sure customers do not run into this problem. If you have more than 8 hosts in your vSAN Cluster and enable vSAN File Services, then the H5 client will ask you for more than 8 IP addresses. These IP addresses are used by the protocol stack containers. However, as described in this post, vSAN File Services will only ever instantiate 8 protocol stack containers in the current release. So do not provide more than 8 IPs. I tried it, and I ran into the scenario where vSAN File Services was not configured completely and properly as a result. You can simply click the “x”, as pointed out in the screenshot below, to remove the extra IP address entry line(s) and work around this issue. Hopefully it will be fixed soon in the UI.
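If you prepare your IP list up front, for instance in a script, the rule boils down to "one IP per host, capped at 8". Below is a minimal sketch of that arithmetic. The limit of 8 comes from the current release as described above, and the names `MAX_PROTOCOL_CONTAINERS`, `file_service_ip_count`, and `trim_ip_list` are purely illustrative.

```python
# Limit in the current release, per this post; it may change in later releases.
MAX_PROTOCOL_CONTAINERS = 8


def file_service_ip_count(host_count):
    """Number of IP addresses to provide for a cluster of host_count hosts."""
    if host_count < 1:
        raise ValueError("a cluster needs at least one host")
    return min(host_count, MAX_PROTOCOL_CONTAINERS)


def trim_ip_list(ips, host_count):
    """Keep only as many IPs as protocol stack containers will be instantiated."""
    return ips[:file_service_ip_count(host_count)]
```

For a 12-host cluster this yields 8, so the remaining four entry lines in the UI are the ones to remove.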