I’ve posted about vSphere HA advanced settings various times in the past, and let me start by saying that you shouldn’t play around with them unless you have a requirement to do so. But if you do, there is a KB article I can highly recommend, as it lists all the known and lesser-known advanced settings. I had the KB article updated with the vSphere 5.5 advanced settings yesterday (thanks, KB team, for being so responsive!), but it also applies to vSphere 5.0 and 5.1. Recommended reading for those who want to get into the nitty-gritty details of vSphere HA.


With a single Datastore can I still use HA’s Datastore heartbeating?

I had a question last week about HA’s datastore heartbeating: does datastore heartbeating still work if you only have one datastore in your environment? I can understand where the question comes from, as HA throws an error stating that you need at least two datastores for HA datastore heartbeating to function correctly. I want to point out that even though HA says two datastores is the minimum, a single available datastore will still be used for heartbeat purposes. Yes, this error will be there on your cluster, and yes, you can suppress it using “das.ignoreInsufficientHbDatastore”. I figured others might be hitting the same error and have the same question, so why not document it?!
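To make the behavior described above concrete, here is a toy Python model of it. It is only a sketch and does not talk to vCenter: the function name `heartbeat_status`, the dictionary layout, and the assumption that the option is set to the string "true" are mine for illustration.

```python
# Toy model of the behavior described in the post; not a vSphere API call.
REQUIRED_HEARTBEAT_DATASTORES = 2  # the minimum HA asks for, per the post


def heartbeat_status(datastores, advanced_options=None):
    """Return which datastores are used for heartbeating and whether the
    'insufficient heartbeat datastores' error would be visible."""
    options = advanced_options or {}
    # Assumption: the advanced option is enabled by setting it to "true".
    raw = options.get("das.ignoreInsufficientHbDatastore", "false")
    suppressed = str(raw).lower() == "true"

    # Even a single datastore is still used for heartbeating.
    used = list(datastores[:REQUIRED_HEARTBEAT_DATASTORES])
    insufficient = len(datastores) < REQUIRED_HEARTBEAT_DATASTORES
    return {
        "heartbeat_datastores": used,
        "warning_shown": insufficient and not suppressed,
    }


print(heartbeat_status(["datastore1"]))
print(heartbeat_status(["datastore1"],
                       {"das.ignoreInsufficientHbDatastore": "true"}))
```

In both calls the single datastore is still listed as a heartbeat datastore. Only the visibility of the error changes.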

Minimum bandwidth requirements per concurrent vMotion?

I have been digging for a long time now to figure out what the minimum bandwidth requirements are per concurrent vMotion, and I finally managed to get a statement. In the past the statement was made that 622Mbps was the minimum required bandwidth for vMotion, but it appears that this is incorrect for vSphere 5.0 and higher. vSphere 5.0 introduced a new feature called Stun During Page Send (SDPS), and this has decreased the bandwidth requirement from 622Mbps down to 250Mbps per concurrent vMotion.
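As a quick back-of-the-envelope check, the snippet below works out how many concurrent vMotions a link could carry at each per-vMotion minimum. It is plain arithmetic on the two figures above. The function name and the example link speeds are my own, and it says nothing about the concurrency limits vSphere itself enforces.

```python
def max_concurrent_vmotions(link_mbps, per_vmotion_mbps):
    """How many vMotions fit in a link if each needs per_vmotion_mbps.

    Bandwidth arithmetic only; vSphere's own concurrency limits are not modeled.
    """
    if link_mbps < 0 or per_vmotion_mbps <= 0:
        raise ValueError("bandwidth values must be positive")
    return int(link_mbps // per_vmotion_mbps)


OLD_MINIMUM_MBPS = 622  # pre-5.0 statement, per the post
NEW_MINIMUM_MBPS = 250  # vSphere 5.0 and higher with SDPS, per the post

for link in (1000, 10000):  # hypothetical 1GbE and 10GbE vMotion links
    print(link,
          max_concurrent_vmotions(link, OLD_MINIMUM_MBPS),
          max_concurrent_vmotions(link, NEW_MINIMUM_MBPS))
```

On a hypothetical 1000Mbps link, the old figure leaves room for a single vMotion, while the 250Mbps figure leaves room for four.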

Always nice to know right?!

Unmounting datastore fails due to vSphere HA?

On the VMware Community Forums someone reported he was having issues unmounting datastores when vSphere HA was enabled. Internally I contacted various folks to see what was going on. The error that this customer was hitting was the following:

The vSphere HA agent on host '<hostname>' failed to quiesce file activity on datastore '/vmfs/volumes/<volume id>'

After some emails back and forth with Support and Engineering (awesome to work with such a team, by the way!) the issue was discovered, and it turns out that two separate issues related to unmounting datastores have been resolved. Keith Farkas explained on the forums how you can figure out whether you are hitting those exact problems and in which release they are fixed, but as I realize those kinds of threads are difficult to find, I figured I would post it here for future reference:

You can determine if you are encountering this issue by searching the VC log files. Find the task corresponding to the unmount request, and see if the following error message is logged during the task’s execution (fixed in 5.1 U1a):

2012-09-28T11:24:08.707Z [7F7728EC5700 error 'DAS'] [VpxdDas::SetDatastoreDisabledForHACallback] Failed to disable datastore /vmfs/volumes/505dc9ea-2f199983-764a-001b7858bddc on host [vim.HostSystem:host-30,10.112.28.11]: N3Csi5Fault16NotAuthenticated9ExceptionE(csi.fault.NotAuthenticated)

While we are on the subject, I’ll also mention that there is another known issue in VC 5.0 that was fixed in VC 5.0 U1 (the fix is in VC 5.1 too). This issue relates to unmounting a force-mounted VMFS datastore. You can determine whether you are hitting this error by again checking the VC log files. If you see an error message such as the following with VC 5.0, then you may be hitting this problem. A workaround, like above, is to disable HA while you unmount the datastore.

2011-11-29T07:20:17.108-08:00 [04528 info 'Default' opID=19B77743-00000A40] [VpxLRO] -- ERROR task-396 -- host-384 -- vim.host.StorageSystem.unmountForceMountedVmfsVolume: vim.fault.PlatformConfigFault:
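If you would rather not eyeball the logs, a small script can look for both signatures. The following is a minimal sketch: the patterns are derived only from the two sample log lines above, the labels and function name are my own, and real log lines may be formatted differently in other builds.

```python
import re

# Patterns derived from the two sample log lines quoted above (an assumption:
# other builds may log these errors with different wording).
SIGNATURES = {
    "ha_unmount_not_authenticated": re.compile(
        r"SetDatastoreDisabledForHACallback.*csi\.fault\.NotAuthenticated"),
    "force_mounted_unmount_fault": re.compile(
        r"unmountForceMountedVmfsVolume.*vim\.fault\.PlatformConfigFault"),
}


def scan_log_lines(lines):
    """Return (line_number, label) for every line matching a known signature."""
    hits = []
    for number, line in enumerate(lines, start=1):
        for label, pattern in SIGNATURES.items():
            if pattern.search(line):
                hits.append((number, label))
    return hits


# Usage sketch; the file name is hypothetical, point it at your own VC log:
# with open("vpxd.log", errors="replace") as handle:
#     for number, label in scan_log_lines(handle):
#         print(number, label)
```

A hit only tells you the signature is present. You still need to confirm that it was logged during the unmount task in question.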

CPU Affinity and vSphere HA

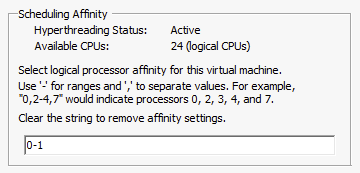

On the VMware Community Forums someone asked today if CPU Affinity and vSphere HA worked in conjunction and if it was supported. To be fair I never tested this scenario, but I was certain it was supported and would work… Never hurts to validate though before you answer a question like that. I connected to my lab and disabled a VM for DRS so I could enable CPU affinity. I pinned the CPUs down to core 0 and 1 as shown in the screenshot below:

After pinning the vCPUs to a set of logical CPUs I powered on the VM. The result was, as expected, a “Protected” virtual machine as shown in the screenshot below.

But would it get restarted if anything happened to the host? Yes it would, and I tested this of course. I switched off the server that was running this virtual machine, and within a minute vSphere HA restarted the virtual machine on one of the other hosts in the cluster. So there you have it, CPU Affinity and vSphere HA work fine.

PS: Would I ever recommend using CPU Affinity? No I would not!