For roughly the past decade, I have written a "Top 15/20 VMworld Sessions to attend" post every year. VMworld no longer exists, and VMware has replaced it with a new event called VMware Explore, but I feel this tradition should live on! I went through the full catalog and picked the 15 sessions I consider "must-watch" content. To be clear, this is my opinion, and you may not agree, and that is fine! If you feel a session is missing, just drop a note in the comments so people can see what else they should consider watching or attending!

Before we start the list, I want to mention that Frank Denneman, William Lam, and I will be part of a panel session hosted by Pete Flecha and John Nicholson, aka the Virtually Speaking Podcast crew. The title of the session is "60 Minutes of Virtually Speaking Live: Accelerating Cloud Transformation [MCLB2804US]". Make sure to register as soon as you can, as I am sure this one will fill up fast!

Here we go! Please note that this list mixes "live/in-person" and "on-demand" sessions, and the sessions are in no particular order.

- Project Monterey Behind the Scenes: A Technical Deep Dive [CEIB1576US] by Dave Morera and Meghana Badrinath (In-Person)

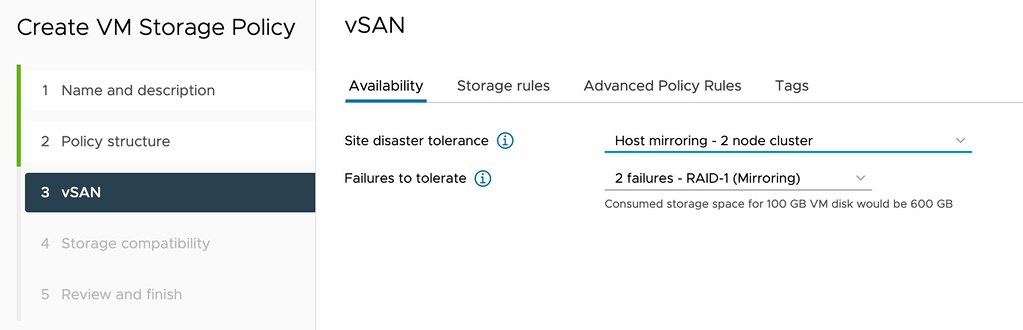

- Deconstructing vSAN – A Deep Dive into the Internals of vSAN [INDB2406USD] by Pete Koehler and John Nicholson (On-Demand)

- Get to Know the Next-Generation of vSAN Architecture [CEIB2172US] by Rakesh Radhakrishnan and Wenguang Wang (In-Person)

- Core Storage Best Practice Deep Dive [CEIB1382USD] by Cody Hosterman and Jason Massae (On-Demand)

- How Your Future Server Purchase Should Be Ready for Tiered Memory [VIB1390US] by Richard Brunner (In-Person)

- Project Capitola – Vision for a Disaggregated Memory World – Addressing Capacity and Cost Challenges for Business-Critical Applications [CEIB1268USD] by Sudhir Balasubramanian, Sridhar Kayathi, and Arvind Jagannath (On-Demand)

- A Technical Deep Dive into Container Security [SECB2366USD] by Haim Helman and Stephane List (On-Demand)

- VMware Edge Compute Stack Reference Architecture Deep Dive [CEIB2325US] by Ken Guo and Michael Wright (In-Person)

- Architecting Multi-Cloud Horizon [EUSB2088USD] by Hilko Lantinga and Richard Terlep (On-Demand)

- Designing a Secure Multi-Cloud Infrastructure with VMware and Google [SECB2398US] by Wade Holmes and Chris McCain (In-Person)

- Tanzu Application Platform – A Deeper Dive for Developers [CNAB2045USD] by Ben Wilcock and Alex Barbato (On-Demand)

- Advanced Networking and Security for VMware Cloud on AWS [NETB2287US] by Sandeep Sharma and Jeremy Anderson (In-Person)

- Tanzu Platform for Kubernetes and the Multi-Cloud Journey [KUBB1940US] by Kendrick Coleman, Scott Rosenberg, and Katarina Brookfield (In-Person)

- Technical Architecture and Advantages of VMware Cloud for Your Workloads [CEIB1429USD] by Niels Hagoort and Oleg Ulyanov (On-Demand)

- Vision for Data Protection as a Service and What’s New in VMware Cloud Disaster Recovery [CEIB1236US] by Nabil Quadri and Yoomi Yong (In-Person)