I was preparing a post on Storage I/O Control (SIOC) when I noticed this article by Alex Bakman. Alex managed to capture the essence of SIOC in just two sentences.

Without setting the shares you can simply enable Storage I/O controls on each datastore. This will prevent any one VM from monopolizing the datatore by leveling out all requests for I/O that the datastore receives.

This is exactly the reason why I would recommend anyone who has a large environment, and even more specifically in cloud environments, to enable SIOC. Especially in very large environments where compute, storage and network resources are designed to accommodate the highest common factor it is important to ensure that all entities can claim their fair share of resource and in this case SIOC will do just that.

Now the question is how does this actually work? I already wrote a short article on it a while back but I guess it can’t hurt to reiterate thing and to expand a bit.

First a bunch of facts I wanted to make sure were documented:

- SIOC is disabled by default

- SIOC needs to be enabled on a per Datastore level

- SIOC only engages when a specific level of latency has been reached

- SIOC has a default latency threshold of 30MS

- SIOC uses an average latency across hosts

- SIOC uses disk shares to assign I/O queue slots

- SIOC does not use vCenter, except for enabling the feature

When SIOC is enabled disk shares are used to give each VM its fair share of resources in times of contention. Contention in this case is measured in latency. As stated above when latency is equal or higher than 30MS, and the statistics around this are computed every 4 seconds, the “datastore-wide disk scheduler” will determine which action to take to reduce the overall / average latency and increase fairness. I guess the best way to explain what happens is by using an example.

As stated earlier, I want to keep this post fairly simple and I am using the example of an environment where every VM will have the same amount of shared. I have also limited the amount of VMs and hosts in the diagrams. Those of you who attended VMworld session TA8233 (Ajay and Chethan) will recognize these diagrams, I recreated and slightly modified them.

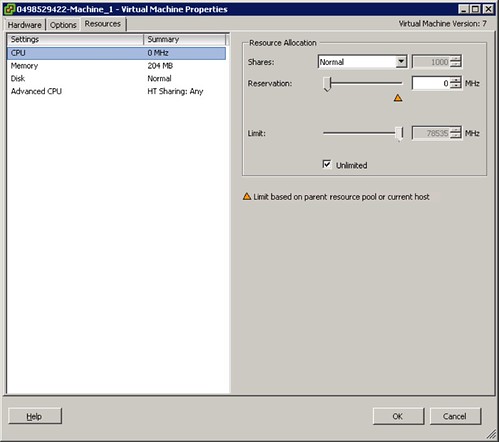

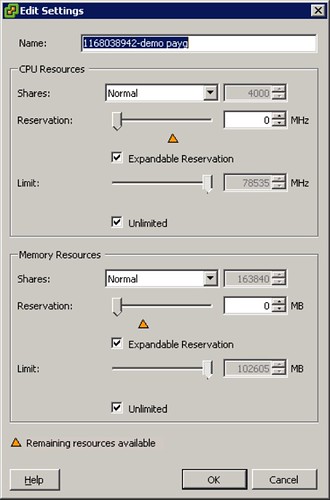

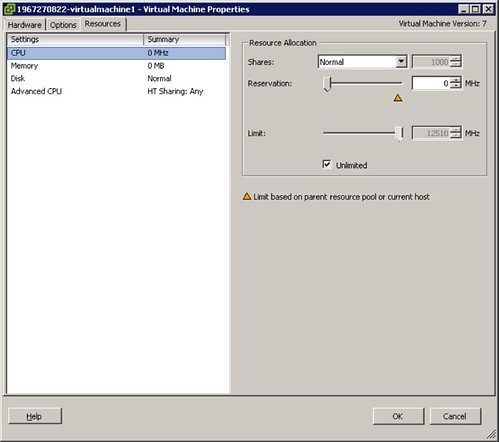

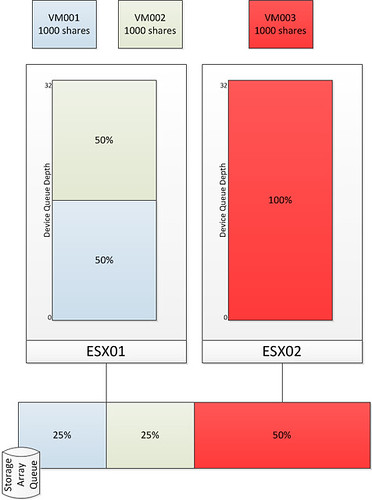

The first diagram shows three virtual machines. VM001 and VM002 are hosted on ESX01 and VM003 is hosted on ESX02. Each VM has disk shares set to a value of 1000. As Storage I/O Control is disabled there is no mechanism to regulate the I/O on a datastore level. As shown in the bottom by the Storage Array Queue in this case VM003 ends up getting more resources than VM001 and VM002 while all of them from a shares perspective were entitled to the exact same amount of resources. Please note that both Device Queue Depth’s are 32, which is the key to Storage I/O Control but I will explain that after the next diagram.

As stated without SIOC there is nothing that regulates the I/O on a datastore level. The next diagram shows the same scenario but with SIOC enabled.

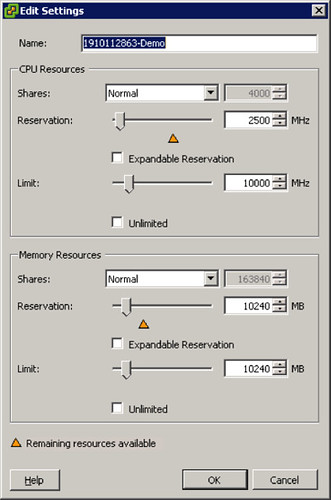

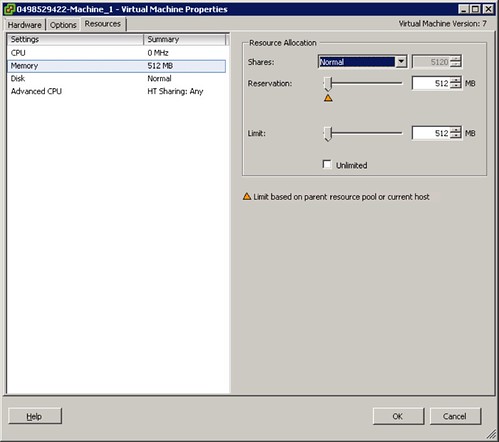

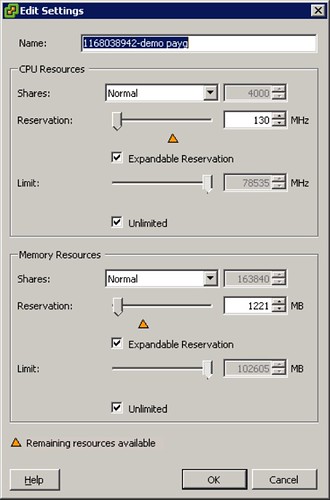

After SIOC has been enabled it will start monitoring the datastore. If the specified latency threshold has been reached (Default: Average I/O Latency of 30MS) for the datastore SIOC will be triggered to take action and to resolve this possible imbalance. SIOC will then limit the amount of I/Os a host can issue. It does this by throttling the host device queue which is shown in the diagram and labeled as “Device Queue Depth”. As can be seen the queue depth of ESX02 is decreased to 16. Note that SIOC will not go below a device queue depth of 4.

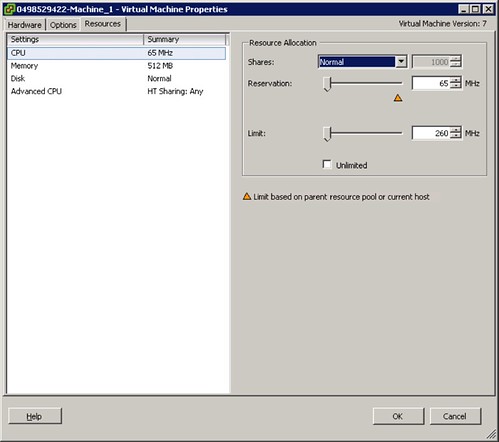

Before it will limit the host it will of course need to know what to limit it to. The “datastore-wide disk scheduler” will sum up the disk shares for each of the VMDKs. In the case of ESX01 that is 2000 and in the case of ESX02 it is 1000. Next the “datastore-wide disk scheduler” will calculate the I/O slot entitlement based on the the host level shares and it will throttle the queue. Now I can hear you think what about the VM will it be throttled at all? Well the VM is controlled by the Host Local Scheduler (also sometimes referred to as SFQ), and resources on a per VM level will be divided by the the Host Local Scheduler based on the VM level shares.

I guess to conclude all there is left to say is: Enable SIOC and benefit from its fairness mechanism…. You can’t afford a single VM flooding your array. SIOC is the foundation of your (virtual) storage architecture, use it!

ref:

PARDA whitepaper

storage i/o control whitepaper

vmworld storage drs session

vmworld storage i/o control session